Introduction

AI fashion model photography promises broad representation at a fraction of traditional shoot costs. But the same technology can entrench narrow beauty standards, sideline models of color, and let brands perform inclusion without practicing it. For fashion brands, marketers, and e-commerce teams, that tension is both an opportunity and a liability.

Grand View Research estimates that the market for AI in fashion technology reached approximately $46.8 billion globally in 2024 and projects $71.2 billion by 2030. At that scale, the design choices baked into these systems (whose faces appear, which body types are trained on, what "diversity" actually means in practice) carry real consequences for real people.

This article answers three core questions: Can AI genuinely advance diversity in fashion imagery? Where do the real ethical risks lie? And what does responsible deployment actually look like?

TLDR:

- AI-generated fashion models can represent a range of body types, ethnicities, ages, and sizes throughout an entire product catalog

- AI trained on biased datasets amplifies Eurocentric beauty standards unless actively corrected

- Using synthetic diversity while eliminating human models of color constitutes performative inclusion

- Ethical deployment requires diversity by design, consent for real likenesses, transparent disclosure, and bias auditing

- Brand trust depends on using AI to expand representation — not as a shortcut to eliminate it

AI Fashion Models and the Diversity Opportunity

Traditional fashion photography has systematically excluded diverse bodies, not by accident but through structural barriers. Vogue Business's Fall/Winter 2026 Size Inclusivity Report analyzed 7,817 looks across 182 shows: 97.6% were straight-size (US 0-4), 2.1% were mid-size, and only 0.3% were plus-size. Mid-size representation was down from 2.8% the prior season.

These exclusions stem from cost barriers, geography-limited talent pools, and industry gatekeeping. The economic reality matters: Shawn Grain Carter, professor at the Fashion Institute of Technology, explained to NBC News that "when you have to hire a model, book an agency, have a stylist, do the makeup, feed them on set—all that costs money."

AI model photography addresses this gap directly. The technology can generate models representing virtually any ethnicity, body size, or age group at scale — giving smaller brands the ability to show 10-12 body types per product rather than one.

When built with diversity as an actual design goal, this approach:

- Closes a real commercial gap — consumers who can't see themselves represented are less likely to purchase

- Reduces return rates by helping shoppers assess fit across body types

- Reflects actual shopper demographics rather than an aspirational narrow ideal

- Lets brands with limited budgets achieve inclusive casting without agency fees

Platforms that pair this with human review and a deliberately curated model library produce meaningfully different results than off-the-shelf generative tools. MetaModels.ai takes this approach — a diverse model library spanning ethnicities, body types, and demographics, with human-reviewed outputs for garment accuracy and no use of real people's likenesses.

Capability alone does not earn trust. Levi's March 2023 partnership with Lalaland.ai, framed as a move to "supplement human models" and "create a more inclusive, personal and sustainable shopping experience," sparked immediate backlash. Critics accused the brand of "digital Blackface" and cost-cutting disguised as inclusion. Levi's clarified within six days that the partnership was "not a substitute for real action" on diversity commitments, but the episode showed that consumers expect AI imagery to add to real hiring, not stand in for it.

When AI platforms are built by diverse teams with diversity as a design principle rather than an afterthought, outputs differ fundamentally from generic generative tools. The distinction between intentional diversity architecture and algorithmic defaults determines whether AI advances or undermines representation.

The Ethical Fault Lines: Bias, Likeness, and Exploitation

Bias Inheritance and Amplification

AI image models trained on internet-scraped data absorb embedded biases—lighter skin preference, Eurocentric facial features, narrow body standards, stereotyped styling. These defaults reproduce and amplify existing inequities rather than correcting them.

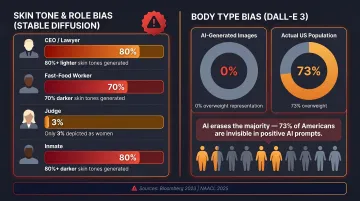

Bloomberg's June 2023 analysis tested Stable Diffusion v1.5 across 5,100 generated images. Results were stark:

- Over 80% of "CEO" and "lawyer" images depicted people with lighter skin

- 70% of "fast-food worker" images showed darker skin tones, even though about 70% of actual US fast-food workers are white

- Only 3% of "judge" images were women, versus 34% of actual US judges

- Over 80% of "inmate" images depicted darker skin

Body type bias is equally severe. NAACL 2025 research that tested DALL-E 3 with 4,000 images found that 0% of the people in positive-prompt images were overweight or obese. Only 3.4% of all generated images depicted overweight or obese body types, even though 73% of the US population is clinically overweight or obese.

Likeness and Consent Exploitation

A critical divide exists between platforms generating entirely original synthetic models versus those training on real models' images or creating digital replicas without consent.

AP News reported in April 2024 that New York-based model Yve Edmond was asked to perform physical movements—moving her arms, squatting, walking—under the guise of a "fitting." She suspected the company was collecting data to build an AI avatar without proper disclosure or compensation. She refused.

The British Fashion Models Association warned in November 2025 that model images are being scraped indiscriminately from the web for AI training without consent, and that more than 40 legal disputes over such practices are under way globally. Models are typically classified as independent contractors, so they have few labor protections and no binding standards to fall back on.

New York's Fashion Workers Act, effective June 2025, now requires separate, explicit written consent before creating, altering, or using a model's digital replica. It's the first US law to formally recognize digital replica exploitation in fashion.

The Beauty Standards Trap

Unlike human models who carry real imperfections, AI models default to idealized, hyper-symmetrical features and bodies unless deliberately constrained. Left unchecked, this creates a feedback loop: brands generate images optimized for engagement, algorithms reward them, and audiences—particularly young consumers—internalize those standards as normal.

Research from the American Psychological Association consistently links exposure to idealized digital imagery with body dissatisfaction. When AI systems produce only one body type by default, they don't just reflect a standard. They reinforce it at scale.

Job Displacement and Inequity

AI displaces not only human models but photographers, makeup artists, hair stylists, and studio staff. The workers most at risk are those with the least structural protection.

The scale is significant:

- McKinsey's "The Future of Women at Work" (2019) projected that about 107 million women (20% of the female workforce across the ten economies it studied) could face job displacement from automation by 2030

- Women are disproportionately concentrated in routine cognitive roles — the category most exposed to automation

- The British Fashion Models Association estimates 10,000 UK models face displacement from AI, alongside photographers, stylists, and other creative professionals

Women of color, who gained meaningful representation in modeling only recently, stand to lose the most ground.

Training Data Provenance Risk

Many AI image systems were trained on scraped, unlicensed imagery—runway photos, editorial content, model portfolios—that owners never consented to. Brands using such tools carry downstream liability.

Getty Images' lawsuit against Stability AI illustrates this risk materializing. While the UK High Court rejected secondary copyright claims in November 2025, limited trademark infringement was found where Getty watermarks appeared in outputs. The parallel US case remains pending, with trademark claims proceeding as of April 2026.

Diversity Theater vs. Genuine Inclusion

Diversity theater in AI fashion means using AI-generated models of color to fulfill visual diversity requirements while reducing or eliminating employment of diverse human talent. This decouples representation from economic opportunity for the communities being depicted—a regression, not progress.

Cultural Context and Appropriation Risk

An AI-generated image of a person of color carries no cultural knowledge, lived experience, or community accountability. Historical failures show what happens when representation is created without cultural understanding: Dove's October 2017 ad showing a Black woman "turning white," and H&M's January 2018 "coolest monkey in the jungle" hoodie on a Black child.

AI makes this risk more acute, not less. There is no talent on set to flag insensitivity before an image goes live.

Consumer Backlash to Ersatz Diversity

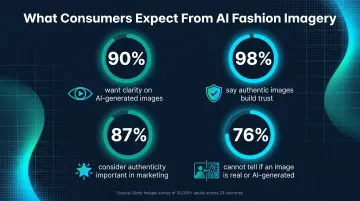

Getty Images' April 2024 survey of over 30,000 adults across 25 countries found:

- 90% want clarity on whether an image was AI-generated

- 98% say authentic images are central to establishing trust

- 87% consider authenticity important in marketing imagery

- 76% admit they cannot tell if an image is real or AI-generated

That last number is the problem. Consumers expect authenticity they cannot verify—a trust gap brands ignore at their peril.

The Levi's backlash made consumer expectations clear: audiences read synthetic diversity that substitutes for real hiring as cost-cutting disguised as inclusion. The speed and intensity of the response, which forced a corporate clarification within six days, shows that consumers and advocates can distinguish performative inclusion from the real thing.

The Strategic Choice Brands Must Make

The key distinction is between AI as a tool for expanding representation (adding body types, demographics, and global markets to an existing diverse cast) and AI as a cost-cutting substitute (replacing diverse human talent entirely).

Brands need to make this choice explicitly and document it in DEI commitments, not leave it as an operational default. Model Alliance founder Sara Ziff argues that using AI to "distort racial representation" marginalizes actual models of color, revealing a gap between stated diversity goals and actions.

What Ethical AI Fashion Model Photography Looks Like in Practice

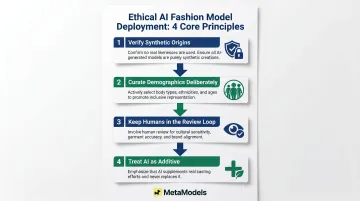

Ethical deployment isn't a checklist you run once — it's built into how you select, review, and use AI-generated imagery. These four principles form the operational foundation:

1. Verify synthetic model origins: Confirm that your AI provider generates entirely original models, not replicas of real people. Using a real model's likeness without consent exposes you to right-of-publicity claims and labor rights violations.

2. Curate demographics deliberately: Don't accept algorithmic defaults. Actively select models representing the body types, ethnicities, ages, and backgrounds that reflect your actual customer base.

3. Keep humans in the review loop: Every AI-generated image should pass human review for cultural sensitivity and garment accuracy. MetaModels.ai, for example, has fashion specialists check every image before delivery for color accuracy, proportions, and garment fit.

4. Treat AI as additive, not a replacement: AI should supplement real casting commitments, not eliminate them. Document this position explicitly in your diversity strategy.

With those principles in place, the next step is verifying they hold over time.

Bias Auditing in Practice

Test your AI tool outputs across demographic prompts and document whether results reflect genuine diversity or revert to narrow defaults. Treat this as standard image QA:

- Generate test batches across ethnicity, body type, and age parameters

- Compare outputs to your diversity targets

- Document deviations and adjust selection criteria

- Review quarterly to ensure consistency
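The comparison step in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a validated audit method: it assumes a human reviewer has already assigned one label per generated image, and the category names, target shares, and 5-point tolerance are all hypothetical placeholders to be replaced with your own diversity targets.

```python
from collections import Counter


def audit_distribution(labels, targets, tolerance=0.05):
    """Compare observed label shares in a test batch against target shares.

    labels:    one reviewer-assigned label per generated image (hypothetical categories)
    targets:   dict mapping label -> target share of the batch
    tolerance: allowed absolute gap before a category is flagged (assumed value)
    Returns a dict of label -> (observed_share, target_share, flagged).
    """
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = len(labels)
    report = {}
    # Include categories that appear in either the targets or the batch.
    for label in sorted(set(targets) | set(counts)):
        observed = counts.get(label, 0) / total
        target = targets.get(label, 0.0)
        flagged = abs(observed - target) > tolerance
        report[label] = (observed, target, flagged)
    return report


# Illustrative numbers only, not real audit data.
batch = ["straight"] * 16 + ["mid"] * 3 + ["plus"] * 1
targets = {"straight": 0.4, "mid": 0.3, "plus": 0.3}

report = audit_distribution(batch, targets)
for label, (observed, target, flagged) in report.items():
    status = "REVIEW" if flagged else "ok"
    print(f"{label}: observed {observed:.0%}, target {target:.0%}, {status}")
```

Saving each quarter's report alongside the batch it came from gives you the documented deviations the checklist asks for.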

Disclosure Best Practices

Regardless of local disclosure requirements, labeling AI-generated imagery builds consumer trust. Transparency about AI use, paired with visible diversity, is a stronger brand position than silence.
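One way to make a label travel with the image is a small machine-readable record stored next to each file. The Python sketch below is an assumption-laden example: the field names, file name, and tool name are hypothetical, and the only external reference is the IPTC Digital Source Type term for media created by a trained generative model. Whether a record like this satisfies any particular regulation is a legal question this sketch does not answer.

```python
import json
from datetime import date

# IPTC NewsCodes "digital source type" term for media created by a trained generative model.
IPTC_AI_SOURCE = "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"


def build_disclosure(image_file, tool_name, reviewed_by, label_text="AI-generated image"):
    """Build a disclosure record for one image.

    All field names are hypothetical; adapt them to your asset-management system.
    """
    return {
        "image_file": image_file,
        "digital_source_type": IPTC_AI_SOURCE,
        "visible_label": label_text,        # text shown to shoppers next to the image
        "generation_tool": tool_name,
        "human_reviewer": reviewed_by,
        "recorded_on": date.today().isoformat(),
    }


# Hypothetical file, tool, and reviewer names for illustration.
record = build_disclosure("sku-1234-front.png", "example-generator", "j.doe")
print(json.dumps(record, indent=2))
```

Keeping the visible label text and the machine-readable source type in the same record makes it harder for the two to drift apart.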

The Transparency and Regulatory Landscape

EU AI Act (Article 50): Requires disclosure that content has been "artificially generated or manipulated," including a uniform visual "AI" cue recognizable across the EU, explanatory text, machine-readable metadata, and imperceptible watermarking. These transparency rules become applicable August 2, 2026.

New York Fashion Workers Act: Effective June 2025, prohibits creating, altering, or using a model's digital replica without separate, explicit written consent. Agencies cannot use, license, or sell digital replicas without clear authorization.

Getty Images vs. Stability AI: The US case remains pending with trademark claims proceeding, while the UK case found limited trademark infringement. These disputes shape training data rights and downstream liability.

Documentation for Protection

Maintain records to protect legal and ethical accountability:

- Prompt histories and model selection records

- Human edit logs and creative decision trails

- Bias audit results across demographic categories

- Consent documentation for any real likenesses used

These records support copyright defensibility and demonstrate that diversity choices were deliberate — not incidental.
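The record types listed above can be kept together in one structure per image. The following Python sketch is illustrative only: the class name, field names, and the completeness rule are assumptions, not a legal standard or any platform's actual schema.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import List, Optional


@dataclass
class ImageRecord:
    """One documentation entry per published image (hypothetical schema)."""
    image_id: str
    prompt: str                               # prompt history entry
    model_selection: str                      # which library model was chosen
    human_edits: List[str] = field(default_factory=list)
    bias_audit_ref: Optional[str] = None      # pointer to the relevant audit report
    uses_real_likeness: bool = False
    consent_doc_ref: Optional[str] = None     # needed only when a real likeness is used

    def missing_items(self):
        """Return the names of records that still need to be attached."""
        missing = []
        if self.bias_audit_ref is None:
            missing.append("bias_audit_ref")
        if self.uses_real_likeness and self.consent_doc_ref is None:
            missing.append("consent_doc_ref")
        return missing


def to_jsonl_line(record):
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


# Hypothetical example entry.
rec = ImageRecord(
    image_id="sku-1234-front",
    prompt="studio shot, neutral background",
    model_selection="library-model-07",
    human_edits=["color corrected"],
    uses_real_likeness=True,
)
print(rec.missing_items())
print(to_jsonl_line(rec))
```

Blocking publication while `missing_items()` is non-empty is one simple way to make the documentation habit enforceable.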

Consumer Expectations Outpace Regulation

A Getty Images survey found 90% of consumers want clear AI disclosure. The reputational cost of appearing deceptive — even unintentionally — far exceeds the effort of adding a label or updating image metadata. Brands that disclose AI use now, before mandates kick in, are better positioned when EU and state-level rules take full effect.

Frequently Asked Questions

Does using AI fashion models mean brands no longer need to hire diverse human models?

No. AI models should supplement, not replace, human casting. Using AI-generated diversity to avoid hiring diverse human talent is performative inclusion that consumers and industry advocates increasingly call out. Ethical use means adding representation, not substituting it.

How can fashion brands avoid bias in AI-generated model imagery?

Actively curate diverse model selections rather than accepting algorithmic defaults. Choose platforms that have built diversity into their model libraries by design. Conduct bias auditing by testing tool outputs across demographic categories and documenting results as part of standard QA.

Are brands legally required to disclose when a model image is AI-generated?

Requirements vary by jurisdiction. The EU AI Act mandates disclosure starting August 2026; US rules are evolving. Regardless of legal obligation, disclosure is broadly considered best practice and builds consumer trust—90% of consumers want to know when images are AI-generated.

Can AI models be trained on real people's likenesses without their consent?

No. Using a real model's likeness without consent raises serious legal and ethical issues: right-of-publicity claims, labor rights violations, and, under laws like New York's Fashion Workers Act, potential liability for digital replicas. Verify that your AI provider uses only original or properly licensed model data.

How do consumers feel about AI-generated models in fashion advertising?

Getty Images research shows 90% of consumers want transparency about AI-generated images, and 98% say authentic images are critical to establishing trust. Consumers respond less favorably to brands that use AI-generated visuals without disclosure.

What is the difference between ethical and unethical use of AI fashion models?

Ethical use means original synthetic models, intentional diversity by design, transparent disclosure, and human oversight—without displacing real diverse talent. Unethical use involves training on unlicensed likenesses, substituting synthetic diversity for genuine inclusion commitments, and deploying biased tools without auditing.

Regulation is tightening and consumer scrutiny is rising in parallel. The operational choices brands make now—on disclosure, diversity sourcing, and bias auditing—will set the standard for whether AI fashion imagery moves the industry forward or simply repackages the same exclusions in a new format.