Introduction: AI Model Photography Is Efficient — But Are You Exposed?

AI model photography promises dramatic cost savings for fashion brands and e-commerce companies. One reported case study showed per-image costs dropping from $18 to $2.40—an 87% reduction—while another D2C brand cut costs from $175 per traditional photo to just $0.50–$2.00 using AI tools. That efficiency, however, has introduced legal and ethical risks most marketing teams haven't fully addressed.

Levi's faced intense public backlash in March 2023 after announcing a partnership with an AI model platform—critics accused the brand of "cheapening diversity" by using synthetic models instead of hiring diverse human talent. Separately, Getty Images is suing Stability AI in multiple jurisdictions over training data usage. The U.S. Copyright Office has also confirmed that purely AI-generated images cannot receive copyright protection, leaving brands vulnerable to copying by competitors.

This guide covers copyright issues with AI-generated imagery, right of publicity and digital replica laws, ethical responsibilities, and how to build a workflow that protects your brand without sacrificing efficiency.

Legal compliance and ethical responsibility are not the same thing. Many brands can be "technically legal" and still face reputational damage—or contribute to practices the industry is actively pushing back against.

TLDR:

- Purely AI-generated fashion images cannot be copyrighted in the U.S. and may expose brands to training data liability

- New York and California digital replica laws require specific consent before using AI likenesses of real people

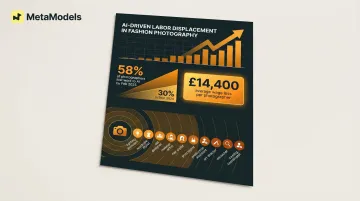

- 58% of photographers had lost work to AI by early 2025, with significant average wage losses reported

- EU AI Act mandates transparency disclosures; U.S. states are following with disclosure requirements

- Compliant workflows require documenting AI tools used, screening outputs for unintended resemblance, and adding disclosure labels

What the Law Actually Says About AI-Generated Model Photography

Copyright Status of AI-Generated Images

The U.S. Copyright Office's position is unambiguous: works generated entirely by AI without meaningful human creative authorship cannot receive copyright protection. This means your AI-generated model images are likely not copyrightable, so competitors who copy them would generally not be infringing any copyright of yours.

The Copyright Office published official guidance in March 2023 stating that if a work's "traditional elements of authorship were produced by a machine, the work lacks human authorship and the Office will not register it." Text prompts are treated as "instructions to a commissioned artist" rather than creative control.

The Zarya of the Dawn case illustrates what this means in practice. The Office ruled that a graphic novel's human-authored text and selection/arrangement were copyrightable, but individual Midjourney-generated images were not.

The absence of copyright protection doesn't resolve whether the AI platform's training process was legally sound. If the model was trained on copyrighted photographs scraped without licenses, using its outputs may expose brands to contributory infringement claims.

The legal landscape is still taking shape. In November 2025, the UK High Court ruled in Getty Images v. Stability AI that AI model parameters are not "infringing copies" of training images under UK copyright law. The ruling is narrow, though: Getty's claims were rejected largely on jurisdictional grounds, so it leaves the underlying training question open.

Courts are weighing a four-factor fair use test, including whether training is "transformative" and whether outputs substitute for original works. In a significant U.S. ruling, Thomson Reuters v. Ross Intelligence, a Delaware court rejected the fair use defense for using copyrighted legal headnotes to train a competing AI tool. The court found that even a "potential" licensing market—not an existing one—is sufficient to establish market harm. What remains unresolved is the transformative use question specifically for image-generating AI—particularly when outputs closely resemble the style or composition of training works.

AI-Assisted vs. AI-Generated: What Can Be Protected

That copyright gap points to a practical question for brands using AI imagery: does any human involvement in your workflow earn protection? There is a meaningful legal distinction between AI-assisted work—where a human makes substantial creative decisions and AI is a tool—and purely AI-generated outputs. Works where human creative involvement is significant may still be registrable with the U.S. Copyright Office.

The distinction hinges on "ultimate creative control." If a human exercises creative selection, arranges AI-generated elements thoughtfully, or makes substantial modifications to AI outputs, copyright protection may attach to the human-authored portions. The Copyright Office evaluates this case by case.

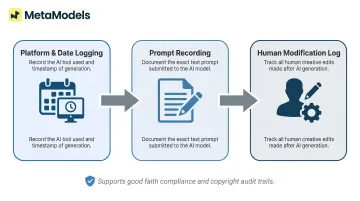

Regardless of where your workflow falls on that spectrum, documentation matters. For every image published, brands should track:

- Which AI platform was used and on what date

- The exact prompts or inputs that generated the image

- Any human modifications made to the AI output

This paper trail demonstrates good faith and establishes origin—important if training data litigation produces rulings affecting historical usage. Even if your images aren't copyrightable, documentation protects against misrepresentation claims and supports compliance audits.
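
The three tracked items above can be kept as one structured record per published image. The following is a minimal sketch, assuming a simple in-house log; the class name `ImageProvenance`, its field names, and the sample values are illustrative assumptions, not a format required by any regulator or vendor.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import date
from typing import List


@dataclass
class ImageProvenance:
    """One record per published image (all field names are illustrative)."""
    image_id: str
    platform: str            # which AI platform produced the image
    generated_on: date       # generation date
    prompt: str              # exact prompt or input used
    human_edits: List[str] = field(default_factory=list)  # changes made after generation

    def to_json(self) -> str:
        """Serialize the record so it can be archived with the image."""
        data = asdict(self)
        data["generated_on"] = self.generated_on.isoformat()
        return json.dumps(data, sort_keys=True)


record = ImageProvenance(
    image_id="sku-1042-front",
    platform="example-platform",  # placeholder, not a real vendor
    generated_on=date(2025, 3, 1),
    prompt="studio shot, model wearing navy blazer",
    human_edits=["color-corrected fabric", "cropped to 4:5"],
)
print(record.to_json())
```

Storing the record as JSON next to the image file keeps the paper trail portable if the brand later changes asset-management tools.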

Right of Publicity and State Digital Replica Laws

Right of Publicity: When Generated Faces Trigger Real Liability

Individuals hold exclusive rights over their name, image, voice, and likeness for commercial purposes under state right of publicity laws. When AI-generated models produce features closely resembling a real, identifiable person—even without intent—brands can face legal exposure. California, New York, and Texas have particularly strong protections; brands selling nationally must follow the strictest applicable standard.

AI systems generate statistically probable human features from training data, meaning resemblance to real people can occur without deliberate copying. This is a documented possibility when training datasets are heavily weighted toward specific individuals or when generative models overproduce features common in celebrity or influencer imagery.

Brands should implement human review of AI-generated model imagery to screen for unintended likeness matches before publication. This review should ask: "Does this face closely resemble any identifiable celebrity, public figure, or influencer?" If the answer is yes or uncertain, the image should be regenerated or discarded.

Key State Laws Brands Must Know

New York Fashion Workers Act (effective June 19, 2025): Requires clear written approval from models before creating or using their digital replica. The approval must specify scope, purpose, pay rate, and duration. Model management companies face civil penalties of $3,000–$5,000 per violation.

This law applies to "clients" broadly—including retailers and advertisers, not just modeling agencies. If you're a fashion brand operating in New York or arranging work performed in the state, you must comply.

The law also requires model management companies to register with the New York Department of Labor and post surety bonds of up to $50,000. Legal analysis from Benesch confirms these provisions apply extraterritorially to out-of-state agencies working with New York models.
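
The four elements of written approval described above (scope, purpose, pay rate, and duration) lend themselves to a simple completeness check before a digital replica is created. This is a rough sketch under stated assumptions: the `ReplicaConsent` record and `missing_terms` helper are hypothetical names, and passing the check only means no field is blank, not that the approval is legally sufficient.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class ReplicaConsent:
    """Written approval for a digital replica (hypothetical record layout)."""
    model_name: str
    scope: Optional[str] = None
    purpose: Optional[str] = None
    pay_rate: Optional[str] = None
    duration_ends: Optional[date] = None


def missing_terms(consent: ReplicaConsent) -> List[str]:
    """Return the approval terms that are still blank."""
    required = {
        "scope": consent.scope,
        "purpose": consent.purpose,
        "pay_rate": consent.pay_rate,
        "duration": consent.duration_ends,
    }
    return [name for name, value in required.items() if value in (None, "")]


draft = ReplicaConsent(
    model_name="Jane Doe",  # fictional example
    scope="spring lookbook",
    purpose="e-commerce listings",
)
print(missing_terms(draft))  # ['pay_rate', 'duration']
```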

California AB 2602 (effective January 1, 2025): Requires specific affirmative consent before digital replicas of an individual's voice or likeness are used. Contract provisions allowing use of a performer's digital replica are unenforceable if the use replaces work the performer could have done in person, lacks a "reasonably specific description" of intended use, and the performer lacked legal or union representation when contracting.

California AB 1836 (effective January 1, 2025): Extends protections to digital replicas of deceased personalities, requiring estate permission. Penalties include the greater of $10,000 or actual damages. Exemptions exist for news, parody, fleeting uses, and historical/biographical purposes—but not for commercial fashion advertising.

Federal protections are coming. The NO FAKES Act has been introduced in both the Senate and House to protect intellectual property rights in the voice and visual likeness of individuals. The FCC has already ruled that AI-generated voice calls require prior express consent under the TCPA. Given that New York and California laws are already in effect, brands using AI-generated imagery should have written consent policies, internal review checklists, and contract language in place before federal requirements add another compliance layer.

Ethical Considerations: The Issues That Laws Haven't Caught Up With Yet

AI model photography can theoretically showcase greater body diversity, but the reality depends on the platform and training data. Brands that use AI models to appear diverse in imagery while cutting costs on diverse hiring face legitimate criticism of performative inclusion.

Research published in the Journal of Advertising found that when fashion brands use AI-generated plus-size models to promote body positivity, consumers perceive the brands as hypocritical. The study of 700+ U.S. women showed AI-generated models score low on "social presence"—perceived humanness and relatability—leading to lower brand attitudes and reduced purchase intentions. Transparent disclosures explaining that AI systems were "trained on licensed photos of real people" representing diverse body types made AI ads perform comparably to those with real human models.

Authentic representation requires intentional curation, not just "any diversity the algorithm generates." Brands should audit the demographic range their AI tools actually produce and ask vendors directly about bias testing.

Labor displacement is a growing concern. According to research cited by Vogue Business, the share of photographers losing assignments to generative AI jumped from 30% in September 2024 to 58% by February 2025. The downstream impact extends well beyond photographers:

- Average wage loss per photographer: £14,400 between late 2024 and early 2025

- Every displaced shoot affects up to 10 additional workers—assistants, stylists, set designers, makeup artists

- Entry-level and e-commerce product work is being displaced first

Brands adopting AI should weigh this displacement carefully. Hybrid approaches can preserve some of that work — MetaModels.ai, for instance, incorporates human review by fashion specialists into every image delivery, maintaining creative employment while scaling AI output.

Beyond labor, there's a separate concern about what AI tools actually produce. Generative systems trained on fashion imagery can perpetuate unrealistic body standards at scale — producing images that a human creative team might have pushed back on. Brands carry ethical responsibility for the visual standards they amplify, even when a machine made the initial choices.

AlgorithmWatch tested DALL-E 3, Stable Diffusion XL 1.0, and Midjourney v6 and documented systematic racial, gender, and body-type biases in how these tools represent people. The biases aren't random; they reflect what was in the training data. Brands should audit outputs across different demographic descriptors and ask vendors directly what bias testing their models have undergone.

AI-Generated Models vs. Digital Replicas: A Critical Distinction

These two terms get used interchangeably, but they carry very different legal weight:

| Term | Definition | Legal Exposure |
|---|---|---|
| Digital replica | A computer-generated likeness of a specific, real person (their face, body, or voice) | High: governed by laws like NY Fashion Workers Act and CA AB 2602 |
| AI-generated model | A synthetic person not based on any particular individual | Lower: not inherently restricted by right-of-publicity statutes |

This distinction matters practically: brands using platforms that generate novel AI models (not derived from any real person's identity) face substantially lower right-of-publicity exposure than those using digital twin technology to replicate real models or celebrities.

MetaModels.ai, for example, builds its model library from curated AI personas rather than real-person likeness data. Because no individual's identity is replicated, the platform sidesteps traditional model release and royalty requirements entirely — while human review keeps quality consistent.

That said, even fully synthetic models carry some residual risk. If a model's training data skewed heavily toward specific individuals, the output can inadvertently resemble a real person. Before publishing any AI-generated model imagery, run a quick visual check: does this face closely resemble anyone identifiable? If uncertain, regenerate. Human review at this stage isn't optional — it's the last practical safeguard against unintended likeness claims.

Disclosure Requirements and Platform Policies

Article 50 of the EU AI Act requires providers and deployers (the Act's term for organizations using an AI system) of systems that generate or substantially manipulate images to ensure such content is clearly identifiable as artificial, unless the use involves only standard editing that does not materially change meaning. Companies whose AI-generated imagery reaches the EU market, including U.S. fashion brands with European customers, must provide transparency about AI-generated content.

Practically, this means product listings, ad campaigns, and editorial content featuring AI-generated models must include disclosure. Acceptable formats include visible labels ("AI-generated model"), alt-text disclosures, and product listing metadata. The EU's framework is the most developed globally and is influencing standards elsewhere.
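
The three disclosure formats mentioned above (a visible label, alt text, and listing metadata) can be applied in one step when a listing is prepared. The sketch below is illustrative only: the dictionary keys `alt_text`, `visible_label`, and `ai_generated` are assumed names, not fields defined by the EU AI Act or by any e-commerce platform.

```python
from typing import Dict

AI_LABEL = "AI-generated model"  # visible label wording used in this guide


def with_disclosure(listing: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of a listing with the disclosure added in three places."""
    labeled = dict(listing)
    alt = labeled.get("alt_text", "").strip()
    # Append the label to the alt text only if it is not already there.
    if AI_LABEL.lower() not in alt.lower():
        labeled["alt_text"] = f"{alt} ({AI_LABEL})".strip()
    labeled["visible_label"] = AI_LABEL
    labeled["ai_generated"] = "true"  # hypothetical metadata key
    return labeled


listing = {"title": "Navy blazer", "alt_text": "Model wearing navy blazer"}
print(with_disclosure(listing)["alt_text"])
```

Because the function skips alt text that already carries the label, it can safely run on every export without stacking duplicate labels.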

While no federal U.S. law yet mandates AI imagery disclosure, the FTC has penalized deceptive AI claims in adjacent contexts. In Operation AI Comply (September 2024), the FTC targeted five companies for deceptive AI-related practices, including tools that generated fake consumer reviews.

The FTC's authority under Section 5 of the FTC Act—prohibiting deceptive practices—applies to any AI-generated content that misleads consumers about product characteristics.

At the state level, several U.S. states are moving faster than Congress. New York's Fashion Workers Act includes provisions on disclosure of digital replicas in commercial advertising, with enforcement beginning in 2026. Getting ahead of these requirements now avoids rushed compliance later.

Platform-Level Requirements

Beyond regulation, major e-commerce platforms set their own AI content rules:

- Amazon: Main product images must show the actual item against a white background. Secondary lifestyle images may use AI-generated elements, but nothing that misleads consumers about product characteristics. Amazon has removed listings where AI models created false impressions, particularly in apparel.

- Shopify: More permissive overall, but starting in 2026, merchants must explicitly disclose when product images contain AI-generated elements or have been substantially altered by AI.

Platform terms of service establish a compliance floor — not a ceiling. Brands that disclose voluntarily, beyond what platforms currently require, are better positioned as enforcement tightens.

Building a Compliant and Ethical AI Model Photography Workflow

Establish a pre-publication review checklist for every AI-generated image:

- Document the AI platform used, generation date, and prompts

- Conduct a human review specifically screening for unintended real-person resemblance

- Add disclosure labels in product metadata and visible image descriptions

- Confirm the AI vendor's training data practices and whether they have licensing agreements or opt-out compliance for rights holders
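
The checklist above can also act as a publishing gate, so an image cannot ship until every item is ticked. This is a minimal sketch, assuming each check is recorded as a simple yes/no flag by the reviewer; `ReviewState`, `blocking_items`, and `ready_to_publish` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReviewState:
    """Yes/no result for each checklist item (illustrative field names)."""
    provenance_logged: bool = False
    likeness_review_passed: bool = False
    disclosure_added: bool = False
    vendor_training_data_confirmed: bool = False


def blocking_items(state: ReviewState) -> List[str]:
    """List the checklist items that still block publication."""
    checks = [
        ("document platform, generation date, and prompts", state.provenance_logged),
        ("human review for real-person resemblance", state.likeness_review_passed),
        ("disclosure labels in metadata and descriptions", state.disclosure_added),
        ("vendor training data practices confirmed", state.vendor_training_data_confirmed),
    ]
    return [label for label, done in checks if not done]


def ready_to_publish(state: ReviewState) -> bool:
    """An image is publishable only when nothing is blocking."""
    return not blocking_items(state)


state = ReviewState(provenance_logged=True, disclosure_added=True)
for item in blocking_items(state):
    print("Blocked by:", item)
```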

Vet Your AI Platform Before You Commit

Before adopting a platform, ask the vendor directly about its training data sourcing, licensing, and bias-testing practices. Prioritize platforms that build on transparently sourced or proprietary data.

MetaModels.ai, for example, addresses several of these vetting criteria directly: its library of diverse AI models operates on a "no models, no royalties, no limits" structure that removes traditional model release and royalty exposure. Every image is reviewed by fashion specialists for garment accuracy, color, shape, and proportions before delivery, adding a quality check before images reach your channels.

Beyond platform selection, plan for ongoing compliance:

- Avoid exclusive dependence on any single AI platform

- Monitor legal developments in jurisdictions where you operate

- Schedule periodic legal reviews of your AI imagery practices

- Build written AI imagery policies for your marketing and product teams that are updated as regulations develop

The legal framework governing AI model photography is still forming. Brands that build compliance structures now, before enforcement catches up, are far better positioned than those scrambling to retrofit policies after an incident.

Frequently Asked Questions

What is a legal AI model workflow for photography and brand approval?

A legal workflow covers four key steps: document the AI platform, prompts, and generation date for each image; run a human review for unintended real-person resemblances; apply disclosure labels; and confirm the vendor's training data is properly licensed.

Is it legal to create or train an AI model using copyrighted works without permission?

This remains legally unsettled in the U.S. — ongoing litigation such as Thomson Reuters v. Ross Intelligence shows the fair use argument carries real risk. The EU AI Act adds further exposure by requiring transparency and giving rights holders the ability to opt out of training use.

Can I legally use AI-generated images for my brand or photography work?

Yes, you can generally use AI-generated images commercially, but purely AI-generated images cannot be protected by copyright, and you may inherit liability if the AI platform's training data was infringing. Always vet the platform's data sourcing practices and keep documentation of what tools were used.

What is the difference between AI-generated models and digital replicas?

Digital replicas are computer-generated likenesses of specific real people (governed by state laws like the NY Fashion Workers Act). AI-generated models are entirely synthetic figures not based on real individuals, so they carry significantly lower right-of-publicity risk, provided no real person's likeness is used as a reference and the output does not inadvertently resemble someone identifiable.

Do I need to disclose when model photography is AI-generated?

The EU AI Act requires transparency for brands reaching European consumers, and several U.S. states are moving toward mandatory disclosure for AI advertising imagery. Proactive labeling is recommended regardless of jurisdiction — it also guards against FTC-style misrepresentation claims.

What are the key digital replica laws U.S. fashion brands must comply with?

New York's Fashion Workers Act (effective June 2025) and California's AB 2602 (effective January 2025) are the most immediate obligations, both requiring written consent before using a model's digital replica commercially. The proposed federal NO FAKES Act would add a national layer on top of these state requirements.