Introduction: The Hidden Risk in AI Fashion Photography

AI fashion photography has exploded from a $1.51 billion market in 2024 to a projected $2.01 billion industry, with 83% of advertising executives now using AI in creative production. The technology eliminates model bookings, studio costs, and weeks-long production timelines — replacing them with scalable, on-demand visual content.

Speed has outpaced awareness. Brands deploying AI-generated imagery without understanding the legal, ethical, and consumer trust frameworks now face real consequences: legal penalties under new disclosure laws, platform policy violations that can sink ad campaigns, and consumer backlash that damages brand equity.

Gucci, Valentino, and J.Crew have all faced public criticism for AI imagery. The issue wasn't using AI — it was getting the compliance and transparency pieces wrong.

This guide covers exactly what fashion brands must get right: the legal landscape, disclosure best practices, ethical compliance standards, platform-specific requirements, and a pre-launch checklist to protect your brand while putting AI to work.

TLDR:

- New York (June 2026) and EU law require AI disclosure; EU fines reach €15M

- 50%+ of Gen Z and Millennials expect AI labeling — transparency builds trust

- Use synthetic models, verify training data licensing, and human-review every image

- TikTok mandates AI labels; Amazon requires accuracy; Meta auto-tags AI content

- Compliance protects your brand legally, builds consumer trust, and reduces return rates

Why Trust and Compliance Are Now Non-Negotiable

Consumer awareness of AI-generated content has grown rapidly. Over 50% of Gen Z and Millennial consumers now expect disclosure when AI-generated images or video appear in advertising. More striking: there's a 37-point perception gap between what advertisers think consumers feel about AI ads (82% positive) and what consumers actually feel (45% positive). Gen Z negative sentiment toward AI ads nearly doubled from 21% in 2024 to 39% in 2026.

Legal timelines are compressing fast. Two major frameworks take effect in 2026, and several US state laws are already in force:

- New York AI Transparency in Advertising Act (June 9, 2026): Requires conspicuous disclosure for ads featuring synthetic performers; civil penalties start at $1,000 per violation

- EU AI Act transparency obligations (August 2, 2026): Penalties reach €15 million or 3% of global annual turnover for non-compliance

- California, Tennessee, and other US states: AI likeness protection laws already enacted, with minimum fines of $10,000
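
To put the EU figure in concrete terms, the sketch below estimates the penalty ceiling for a given turnover. It assumes the higher of the two amounts applies, which is an assumption about how the cap is structured and not something stated above. The function name and sample turnovers are illustrative only, and none of this is legal advice.

```python
EU_FIXED_CAP_EUR = 15_000_000  # fixed amount cited above
EU_TURNOVER_PERCENT = 3        # 3% of global annual turnover


def eu_fine_ceiling(global_turnover_eur: float) -> float:
    """Estimate the maximum fine, ASSUMING the higher of the two amounts applies."""
    if global_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    turnover_based = global_turnover_eur * EU_TURNOVER_PERCENT / 100
    return max(EU_FIXED_CAP_EUR, turnover_based)


if __name__ == "__main__":
    for turnover in (100_000_000, 2_000_000_000):
        print(f"turnover {turnover:,} EUR -> ceiling {eu_fine_ceiling(turnover):,.0f} EUR")
```

Under this assumption, the fixed amount dominates for smaller brands, while the turnover-based amount takes over once global turnover passes €500 million.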

Brand equity is equally exposed. When Gucci released AI-generated visuals for Milan Fashion Week, consumers called the work "tacky," "cheap," and "slop." Valentino faced anger despite clearly labeling its AI-generated DeVain handbag video. Meanwhile, brands like H&M that proactively disclosed their AI model partnerships and emphasized transparency saw more neutral or positive responses. A label alone did not guarantee a warm reception; what separated these outcomes was less the AI itself than how each brand framed and communicated its use.

The industry is split. While H&M, Levi's, and Prada experiment with AI-generated content, Aerie launched a high-profile anti-AI campaign ("You Can't Prompt This") and reported a 23% sales increase after pledging to avoid AI-generated bodies.

Brands caught between cost efficiency and creative authenticity need clear policy frameworks in place before a crisis forces their hand.

Reputational risk isn't the only concern. Inaccurate AI imagery — wrong button colors, unnatural draping, distorted fit — directly affects conversion and returns. eBay sellers have already flagged AI-generated model overlays distorting sleeve details and collar shapes, raising product misrepresentation concerns. Accuracy isn't just an ethical obligation; it's a revenue one.

The Legal Landscape: What Fashion Brands Must Know

Intellectual Property and Copyright

Who owns the copyright in AI-generated images? In most jurisdictions, purely AI-generated content without meaningful human authorship may not be eligible for copyright protection. The US Copyright Office requires human creative contribution as a prerequisite for copyright eligibility, analyzing authorship on a case-by-case basis. The Supreme Court left this standard in place in March 2026 by denying certiorari in the Thaler/Creativity Machine case; a denial of certiorari lets the lower-court ruling stand without deciding the merits.

Document your human creative inputs — prompt engineering, model selection, composition decisions, post-generation edits — to establish a defensible ownership claim. If your platform generates outputs based solely on automated inputs without meaningful human direction, your copyright claim weakens.
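
One way to keep that documentation is a simple per-image log written at creation time. The sketch below is only an illustration: the `CreativeRecord` class, its field names, and the JSON format are hypothetical, and whether a given record shows sufficient human authorship remains a legal judgment.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class CreativeRecord:
    """Hypothetical log of the human creative decisions behind one AI image."""
    image_id: str
    prompt: str
    model_choice: str
    composition_notes: str
    post_edits: list[str] = field(default_factory=list)
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def add_edit(self, description: str) -> None:
        """Append a post-generation edit made by a person."""
        self.post_edits.append(description)

    def to_json(self) -> str:
        """Serialize the record so it can be archived alongside the image."""
        return json.dumps(asdict(self), indent=2)
```

Storing one such record per published image gives legal counsel a dated trail of prompts, selections, and edits to work from.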

Training data copyright is a growing risk. AI models trained on unlicensed photography create downstream IP liability for brands using those platforms. Getty Images' lawsuit against Stability AI is ongoing, and AI copyright infringement cases more than doubled from approximately 30 at the end of 2024 to over 60 by the end of 2025.

In February 2025, a federal district court in Delaware issued the first federal ruling rejecting the fair use defense for AI training data in Thomson Reuters v. ROSS Intelligence. The decision raises the risk for brands that rely on platforms trained on unlicensed material.

Choose platforms that use licensed or originally sourced training data. Verify this in vendor contracts before uploading proprietary product images.

Consent and Model Likeness Rights

Training data liability is one exposure. Likeness rights are another — and the legal lines have sharpened considerably.

Using AI to replicate a real person's likeness without consent is illegal in most jurisdictions. Right of publicity laws in the US and similar protections in the EU prohibit unauthorized commercial use of identifiable individuals' likenesses — including AI-generated images that closely resemble real people.

New York's expanded protections now include posthumous rights. S.8391/A.8882 requires consent from heirs or executors for commercial use of deceased persons' AI-generated likenesses, with statutory damages starting at $2,000 plus actual and potential punitive damages.

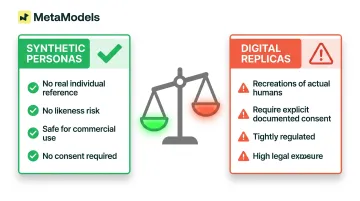

Two categories carry very different risk profiles:

- Synthetic personas — created without reference to any real individual — carry no likeness risk and are safe for commercial use

- Digital replicas — AI recreations of actual humans — require explicit, documented consent and are tightly regulated

California's AB 2602 goes further: it bars contract provisions allowing digital replicas to replace a person's work unless that individual was represented by counsel or a union, and the permitted uses were specifically named in the agreement.

Use platforms that generate synthetic personas, not real-person replicas. Confirm this in writing before deployment.

Disclosure and Advertising Standards

US requirements carry financial penalties. New York's S.8420-A/A.8887-B requires "conspicuous disclosure" when ads distributed in New York feature synthetic performers. First offense: $1,000; subsequent offenses: $5,000. The FTC has signaled there's "no AI exemption from the laws on the books," launching Operation AI Comply in September 2024 to crack down on deceptive AI content.
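
As a rough illustration of how these New York penalties add up, the sketch below totals exposure across several violations. It assumes each non-compliant ad counts as one violation and that every violation after the first is charged at the subsequent-offense rate; how violations are actually counted is a legal question, and the function name is illustrative.

```python
NY_FIRST_OFFENSE_USD = 1_000
NY_SUBSEQUENT_OFFENSE_USD = 5_000


def ny_penalty_exposure(violations: int) -> int:
    """Total civil penalties, ASSUMING one violation per non-compliant ad."""
    if violations < 0:
        raise ValueError("violations cannot be negative")
    if violations == 0:
        return 0
    return NY_FIRST_OFFENSE_USD + (violations - 1) * NY_SUBSEQUENT_OFFENSE_USD


if __name__ == "__main__":
    print(ny_penalty_exposure(4))  # 1,000 + 3 * 5,000 = 16000
```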

What compliant labeling looks like: "Virtual Model," "AI-Generated," or similar language placed visibly near the image — not hidden in fine print or buried in terms. The disclosure must be clear enough that a reasonable consumer would notice it.

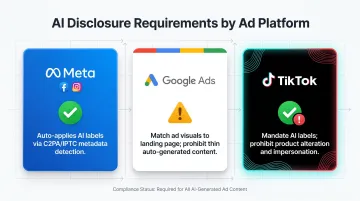

Platform policies layer on top of legal requirements — and each channel has its own rules:

- Meta began auto-labeling AI-generated content in May 2024 by detecting industry-standard AI image indicators

- TikTok Shop requires sellers to label AI content and prohibits using AI to alter a product's appearance beyond reality

- Google Ads prohibits thin, auto-generated content without substantial value and requires clear visual match between ad imagery and landing pages

Failing either the legal or platform standard creates compounding risk — a single non-compliant campaign could trigger regulatory fines and ad account suspension simultaneously.

Building Consumer Trust: Disclosure and Transparency in Practice

What the Research Says About Shopper Perception

The data challenges the assumption that AI disclosure hurts sales. 73% of Gen Z and Millennials say knowing an ad was AI-generated would either increase their purchase likelihood or make no difference to it. Transparency often increases trust rather than decreasing it, because honesty signals integrity.

Execution quality matters more than AI origin. Shoppers care less about whether an image is AI or real and more about whether it accurately represents the product. Inaccuracies in fabric texture, garment fit, color, or proportions break purchase confidence — not AI origin itself.

Perceived inauthenticity is a real risk. Research from SMU's Temerlin Advertising Institute found that when fashion brands use AI-generated plus-size models to promote body positivity, women perceive the brands as hypocritical and are less likely to buy from them, recommend them, or view them favorably. The problem was the mismatch between message and method, not the AI technology itself.

How to Disclose Effectively Without Damaging Conversions

Where and how to place disclosure labels:

- Product detail pages: "Virtual Model" or "AI-Generated Image" label placed near the image, not hidden

- Campaign imagery: Disclosure in caption or overlay text visible without clicking through

- Social posts: Label in post copy or graphic overlay, positioned where viewers naturally look
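
The placement rules above can be captured in a small lookup that a content team or CMS template could consult. This is a sketch under stated assumptions: the surface keys, the `disclosure_for` function, and the choice of default label per surface are hypothetical, and the label wording comes from the examples in this guide.

```python
# Hypothetical mapping of content surface -> default label and placement.
DISCLOSURE_RULES = {
    "product_page": {
        "label": "AI-Generated Image",
        "placement": "near the image, not hidden",
    },
    "campaign": {
        "label": "AI-Generated",
        "placement": "caption or overlay text, visible without clicking through",
    },
    "social_post": {
        "label": "AI-Generated",
        "placement": "post copy or graphic overlay",
    },
}


def disclosure_for(surface: str, uses_virtual_model: bool = False) -> dict[str, str]:
    """Return the label text and placement for a surface, or fail loudly."""
    rule = DISCLOSURE_RULES.get(surface)
    if rule is None:
        # An unknown surface should be reviewed by a person, not silently skipped.
        raise KeyError(f"no disclosure rule defined for surface: {surface!r}")
    label = "Virtual Model" if uses_virtual_model else rule["label"]
    return {"label": label, "placement": rule["placement"]}
```

Raising an error for unmapped surfaces is a deliberate choice here: a missing label is the failure mode this section is trying to prevent.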

As disclosure becomes standard practice across fashion retail, it loses novelty and its potential negative associations fade. Brands that move first establish transparency as a brand value — not a legal footnote.

Address AI Use Proactively in Brand Communications

Include AI photography in your "About" content or FAQs, framing it as a commitment to inclusive, scalable representation rather than a cost-cutting shortcut. H&M officially announced its AI-generated digital twins in July 2025 with a "Responsible AI" framework including diversity audits and human oversight. Levi's clarified that AI models would supplement — not replace — human models following initial backlash. Both examples show that proactive framing shifts the story from controversy to intention.

Support AI Imagery with Clear Return Policies

Direct survey data linking return policies to AI comfort is limited, but the underlying logic holds: return policies reduce purchase risk. When paired with accurate AI imagery, they signal that the brand stands behind its product representation — closing the remaining confidence gap for hesitant shoppers.

Ethical Compliance: Consent, Diversity, and Model Rights

Consent Standards for AI Model Libraries

Reputable platforms create synthetic model personas not based on any real individual's likeness, eliminating right-of-publicity concerns. MetaModels.ai, for example, maintains a curated library of AI models built without real-person likenesses, with human review on every output to ensure garment accuracy and ethical consistency.

Before adopting any platform, verify in writing:

- Models are synthetic, not digital replicas of real individuals

- Training data is licensed or originally sourced

- The platform provides documentation confirming these safeguards

Diversity: An Ethical and Commercial Requirement

Consent compliance is just one side of responsible AI model use — who you show matters just as much. Using a non-diverse model set creates both ethical risk and measurable revenue loss. 75% of consumers globally say a brand's inclusion reputation influences their purchase decisions, and progressive, inclusive advertising drives a 16% sales uplift compared to less representative content.

Underrepresented shoppers who don't see themselves in imagery are less likely to convert. Audit your AI model selection for diversity across:

- Body types

- Skin tones

- Ages

- Gender expressions
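
A basic audit along these four dimensions can be run on a tagged model library. The sketch below assumes each model profile is a dictionary with the hypothetical keys shown; the `min_distinct` threshold of 3 is an arbitrary placeholder, and a simple count is a starting point for human review, not a measure of real inclusivity.

```python
from collections import Counter

# Hypothetical tag names for the four audit dimensions listed above.
AUDIT_DIMENSIONS = ("body_type", "skin_tone", "age_range", "gender_expression")


def distribution(models: list[dict[str, str]], dimension: str) -> Counter:
    """Count how often each value of one dimension appears in the model set."""
    return Counter(m[dimension] for m in models if dimension in m)


def narrow_dimensions(models: list[dict[str, str]], min_distinct: int = 3) -> list[str]:
    """Return dimensions with fewer than `min_distinct` different values."""
    return [
        dim for dim in AUDIT_DIMENSIONS
        if len(distribution(models, dim)) < min_distinct
    ]
```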

Data Privacy: What to Check Before You Upload

If you upload proprietary product images, customer data, or model reference photos to an AI platform, GDPR (in Europe) or CCPA (in California) may govern those uploads.

Review the data processing terms of any AI vendor before uploading sensitive materials, and confirm that appropriate Data Processing Agreements (DPAs) are in place.

Platform-Specific Compliance: Where Your AI Images Actually Live

E-commerce platforms currently allow AI-generated product images provided they accurately represent the product — but each platform has its own standards.

| Platform | AI Policy | Key Requirement |
|---|---|---|
| Amazon | No explicit AI-specific policy | Images must "accurately represent the product" and match the title |
| Etsy | AI classified as "Designed by a Seller" | Sellers must disclose AI use in listing description; physical items must use original product photos |
| eBay | Testing AI model overlays | Sellers report product distortions in auto-generated AI images; no opt-out available |
| Shopify | No AI-specific policy | General product accuracy standards apply |
| TikTok Shop | Explicit AI content policy | Must label AI content; cannot alter product appearance/color/texture; no impersonation |

Paid advertising platforms have specific AI disclosure requirements:

- Meta (Facebook/Instagram): Auto-applies "AI info" labels by detecting C2PA and IPTC metadata

- Google Ads: Requires clear content match between ad imagery and landing page; prohibits thin, auto-generated content

- TikTok: Mandates AI labeling for ads; prohibits impersonation and product appearance alteration
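
For campaign planning, the platform rules summarized in this section can be kept in one lookup so each launch starts from the same list. The sketch below is a snapshot of this guide's summary, not of the platforms' live policies; the channel keys and the `campaign_requirements` function are hypothetical.

```python
# Snapshot of the requirements summarized in this guide; policies change.
PLATFORM_REQUIREMENTS: dict[str, list[str]] = {
    "amazon": ["image accurately represents the product and matches the title"],
    "etsy": [
        "disclose AI use in the listing description",
        "use original product photos for physical items",
    ],
    "shopify": ["meet general product accuracy standards"],
    "tiktok_shop": [
        "label AI content",
        "do not alter product appearance, color, or texture",
        "no impersonation",
    ],
    "google_ads": [
        "ad imagery clearly matches the landing page",
        "no thin, auto-generated content",
    ],
    "tiktok_ads": [
        "label AI content",
        "no impersonation",
        "do not alter product appearance",
    ],
}


def campaign_requirements(channels: list[str]) -> tuple[dict[str, list[str]], list[str]]:
    """Return known requirements per channel plus channels needing manual review."""
    known = {ch: PLATFORM_REQUIREMENTS[ch] for ch in channels if ch in PLATFORM_REQUIREMENTS}
    unknown = [ch for ch in channels if ch not in PLATFORM_REQUIREMENTS]
    return known, unknown
```

Channels that come back in the second list have no entry here and should be checked against the platform's current policy pages by hand.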

Organic social media posts have not always been held to the same disclosure rules as paid advertising, but that gap is closing fast. New York's advertising disclosure law, widely cited as a model for similar legislation in other states, doesn't distinguish between paid and organic brand content. If your post features a synthetic performer, the disclosure requirement applies whether or not you paid to run it. Treat all brand content, paid or organic, with the same transparency standards.

Your AI Fashion Photography Compliance Checklist

Pre-Launch Legal and Platform Check:

Before deploying AI fashion images, confirm:

- ✅ Platform verification: The AI platform uses licensed training data and synthetic model personas (not real-person replicas)

- ✅ Disclosure labeling: Generated images are labeled appropriately on PDPs, campaign assets, and paid ads ("Virtual Model," "AI-Generated," etc.)

- ✅ Platform compliance: Content meets the accuracy and disclosure standards of each platform where it will appear (Amazon, Meta, TikTok, etc.)

- ✅ Documentation: Vendor contracts confirm training data licensing, synthetic persona creation, and data processing compliance (GDPR/CCPA if applicable)
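
The four confirmations above can be tracked as a simple record that blocks a launch until every item is signed off. This is a minimal sketch; the `PreLaunchCheck` class and its field names are hypothetical stand-ins for whatever approval tooling a team already uses.

```python
from dataclasses import dataclass, fields


@dataclass
class PreLaunchCheck:
    """Hypothetical record of the four pre-launch confirmations."""
    platform_verified: bool = False     # licensed training data, synthetic personas
    disclosure_labeled: bool = False    # labels on PDPs, campaign assets, paid ads
    platform_compliant: bool = False    # meets each channel's standards
    contracts_documented: bool = False  # licensing, personas, data processing

    def blockers(self) -> list[str]:
        """Return the names of checks that have not been confirmed."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_to_launch(self) -> bool:
        """True only when every confirmation is in place."""
        return not self.blockers()
```

For example, `PreLaunchCheck(platform_verified=True).blockers()` lists the three remaining items, which makes the outstanding work explicit before anyone schedules the campaign.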

Image Quality and Accuracy Gate:

Run every AI-generated image through human review before publication:

- Fabric texture matches the real product

- Colors are accurate to product specifications

- Garment fits naturally and drapes correctly

- Buttons, zippers, stitching, prints, and embellishments are all present and accurate

This review is both a trust-building measure and a returns-reduction strategy. MetaModels.ai builds this step into every delivery — human fashion specialists check each image for garment-specific accuracy, from menswear shoulder fit to luxury embellishment placement.
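
The review gate described above can be expressed as a filter over reviewer sign-offs. The sketch below assumes a human reviewer records a pass or fail for each of the four checks; the check names and function names are hypothetical, and a missing entry is treated as a failure so that nothing ships unreviewed.

```python
# Hypothetical keys for the four accuracy checks in the list above.
REVIEW_CHECKS = ("fabric_texture", "color", "fit_and_drape", "details")


def failed_checks(review: dict[str, bool]) -> list[str]:
    """List checks that failed or were never recorded by the reviewer."""
    return [check for check in REVIEW_CHECKS if not review.get(check, False)]


def cleared_for_publication(reviews: dict[str, dict[str, bool]]) -> list[str]:
    """Return the image IDs whose human review passed every check."""
    return [
        image_id for image_id, review in reviews.items()
        if not failed_checks(review)
    ]
```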

Ongoing Compliance Maintenance:

Quality review protects your brand image. Regulatory compliance protects everything else. New state and federal laws governing AI-generated content are expected throughout 2026, and platform policies tend to shift alongside them.

- Assign ownership: Designate a team member to monitor regulatory updates quarterly

- Review platform policies: Check ad platform policy updates each time you launch a new AI content campaign

- Update internal guidelines: Revise disclosure templates, vendor agreements, and quality checklists as regulations evolve

Frequently Asked Questions

Can I use AI models for my clothing brand?

Yes. Brands can legally use AI-generated synthetic models for clothing imagery. The key requirements: models must not replicate real individuals' likenesses without consent, and AI use must be disclosed in commercial advertising wherever applicable law requires it.

Can you legally use AI-generated images?

AI-generated images are legal to use commercially in most jurisdictions. Brands should document human creative inputs to establish copyright ownership, avoid replicating unlicensed likenesses, and comply with disclosure laws — including New York's June 2026 requirement and the EU AI Act.

What are the pros and cons of AI models?

Pros: Cost savings, faster production, scalability, and inclusive representation without model booking constraints.

Cons: Consumer skepticism if undisclosed, legal liability from unlicensed likeness replication, and quality issues (inaccurate colors or fit) that increase returns.

What brands use AI fashion models?

Major brands including H&M (AI digital twins, July 2025), Levi's (via Lalaland.ai), Prada, Gucci, and Valentino have all incorporated AI models into recent campaigns. Adoption is accelerating across every tier, from luxury to fast fashion.

Do I need to disclose AI-generated images on e-commerce platforms?

Requirements vary by platform and jurisdiction. New York's 2026 legislation requires conspicuous disclosure in ads featuring synthetic performers, and the FTC has made clear that existing consumer protection law applies to AI content. Shopify and Amazon focus on product accuracy rather than AI labeling, but TikTok Shop explicitly requires it.

How do I ensure my AI fashion images are legally compliant?

Four steps cover the essentials: use platforms generating synthetic models trained on licensed data, label AI content in ads and PDPs as required, verify image accuracy against your actual product, and review platform policies and regulations before each campaign launch.