These aren't fringe experiments. The AI fashion market is projected to reach $60 billion by 2034, with annual growth rates nearing 40%. Hugo Boss, Valentino, Jil Sander, MCM Worldwide, and Burberry have all publicly confirmed using AI-generated models for e-commerce or marketing.

But rapid adoption has created a pattern of recurring mistakes that damage consumer trust, invite legal exposure, and in some cases generate public relations disasters. Accuracy is one fault line: 76% of shoppers say on-model images are the most useful format for purchase decisions, yet confidence evaporates when garment details are wrong. Disclosure is another: brands that hide AI use face backlash when shoppers discover it.

This article covers five critical mistakes brands keep making with AI models—and the practical steps to avoid them.

TLDR: 5 Mistakes Brands Keep Making with AI Models

- Diversity theatre: Using AI to generate diverse representation instead of hiring diverse talent

- Speed over accuracy: Pushing AI content live before garment details are checked — returns follow

- Likeness theft: Replicating real people's faces without consent, creating legal exposure

- Non-disclosure: Hiding AI use from shoppers until they notice — and they do notice

- Blanket deployment: Replacing all photography with AI rather than deploying it where it actually adds value

Mistake 1: Using AI Models as a Diversity Shortcut

When Levi's announced its Lalaland.ai partnership to "sustainably increase representation in fashion," the response was swift and brutal. Critics accused the company of using technology as an inexpensive shortcut to diversity rather than hiring real diverse talent. Professor Shawn Grain Carter from the Fashion Institute of Technology stated bluntly: "When you have to hire a model, book an agency, have a stylist, do the makeup, feed them on set—all that costs money. Let's make no mistake about it, Levi's is doing this because this saves them money."

The Problem with Performative AI Diversity

Performative diversity differs fundamentally from authentic representation. Brands generate AI models across different skin tones, body types, and ages to signal inclusion—but do so in place of actually hiring diverse talent, not alongside it.

When a brand has historically featured little representation in its marketing and suddenly showcases AI-generated diverse models, audiences immediately call out the disconnect between visual claims and actual brand culture or hiring practices.

Levi's eventually issued a clarifying statement: "We do not see this pilot as a means to advance diversity or as a substitute for the real action that must be taken to deliver on our diversity, equity and inclusion goals and it should not have been portrayed as such." It was a textbook example of how diversity messaging, when disconnected from actual hiring practices, backfires — and the pattern has repeated across the industry since.

The Labor Displacement Argument

Sara Ziff, founder of the Model Alliance, described the current state of AI in fashion as "the Wild West" and stated: "I think the use of AI to distort racial representation and marginalize actual models of color reveals this troubling gap between the industry's declared intentions and their real actions."

The labor case is clear: using AI to generate diverse representation directly displaces models, stylists, and photographers from underrepresented communities — the exact people who stood to benefit most from increased representation.

The irony is stark. Brands claim to advance diversity while simultaneously cutting the economic opportunities that would materially support diverse talent.

AI Training Data Amplifies Bias

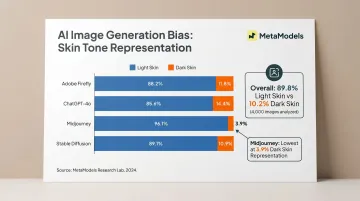

AI diversity tools carry an additional technical flaw: if training datasets underrepresent certain body types or features, the resulting AI images encode and amplify those biases. A 2025 study testing Adobe Firefly, ChatGPT-4o, Midjourney, and Stable Diffusion found that 89.8% of 4,000 AI-generated images depicted light skin, while only 10.2% depicted dark skin. Midjourney performed worst, with just 3.9% dark-skin representation.

A separate 2024 study found that of 200 images generated by Dall-E 3 and Midjourney, only one featured dark skin. Midjourney generated 99% of its images in the two lightest skin tones.

Models like these underpin many fashion AI platforms. When brands rely on biased tools to generate diversity, they are not correcting representation gaps; they are reinforcing them.
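A brand could run a similar check on its own generated output before relying on a tool. The sketch below is illustrative only: it assumes a sample of images that reviewers have already labeled by skin tone, and the function names and the 25% floor are hypothetical choices, not part of either study. The sample is rebuilt from the 2025 study's headline split (89.8% of 4,000 images).

```python
from collections import Counter

def tone_shares(labels):
    """Return each label's share of the sample as a fraction between 0 and 1."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("cannot audit an empty sample")
    return {label: count / total for label, count in counts.items()}

def underrepresented(labels, expected_labels, min_share):
    """List expected labels whose share is below min_share.

    Labels that never appear in the sample count as a share of 0.
    min_share is a hypothetical floor the brand would set for itself.
    """
    shares = tone_shares(labels)
    return sorted(l for l in expected_labels if shares.get(l, 0.0) < min_share)

# 89.8% of 4,000 images is 3,592 light-skin images, leaving 408 dark-skin images.
sample = ["light"] * 3592 + ["dark"] * 408
shares = tone_shares(sample)
flagged = underrepresented(sample, expected_labels=["light", "dark"], min_share=0.25)
```

With that sample, `flagged` contains only `"dark"`, which is the signal to stop and question the tool before publishing its output.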

What Genuine AI-Supported Inclusion Looks Like

Done right, AI can serve as a scale tool — using curated model libraries that cover genuinely diverse demographics to ensure every product gets representative coverage, not just hero images. That's a supplement to diverse human talent, not a replacement for it.

Transparency is non-negotiable: disclosure when AI is used, sourcing from tools with audited, representative training data, and hiring practices that visibly reflect the diversity a brand claims to champion.

Mistake 2: Sacrificing Garment Accuracy for Speed

Garment accuracy is the single most important technical requirement for AI fashion models in e-commerce. The product image serves as the proxy for physical inspection—when it's wrong, shoppers notice immediately.

Research from Stylitics found that 76% of shoppers say on-model photos are the most useful format for purchase decisions. Yet 71% of those same shoppers could not distinguish between real and AI-generated images—or said they "looked the same or had only small differences." The problem isn't that shoppers reject AI outright. It's that they quickly lose confidence when details are inaccurate.

Accuracy Failures That Erode Shopper Confidence

Shoppers flagged specific flaws in the Stylitics study:

- Incorrect button colors

- Unnatural fabric draping (no wrinkles where wrinkles should appear)

- Missing texture details

- Proportions that don't reflect how garments actually fit

- Airbrushed skin that makes fabric textures look unrealistic

These failures break trust even when shoppers can't identify that an image is AI-generated. The garment simply doesn't look right.

The Business Consequence: Increased Returns

Inaccurate product imagery directly increases return rates. When shoppers receive a garment that doesn't match the AI-generated image they purchased from, the disconnect breaks trust—often permanently.

The National Retail Federation reported that retail returns reached $890 billion in 2024, representing 16.9% of annual sales. A PowerReviews survey of 25,000+ US consumers found that 28% of apparel returns are due to appearance mismatch—the product did not look like what was shown in the imagery.

This statistic covers all product imagery inaccuracies, not just AI-generated ones. Still, AI imagery that misrepresents garment details feeds directly into this return driver.

Why Speed Creates Accuracy Problems

Many AI model platforms generate output at speed without validating garment detail accuracy, relying on brands to catch errors. Brands rushing to scale AI content often skip or compress quality review, accepting images with visible flaws to hit volume targets. Flawed images reach product pages, shoppers purchase based on inaccurate visuals, and returns follow.

The Right Approach: Quality Controls Built Into the Process

The speed advantage of AI does not have to come at the cost of product accuracy. Platforms that use real-time fabric draping simulation and human review steps are built specifically to close this gap.

MetaModels.ai, for example, routes every image through a human-verified garment accuracy workflow: fashion specialists review color, shape, and proportions before delivery. AI handles the volume; specialists catch what automation misses.
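One way to make a review step hard to skip is to encode it as a publish gate. The sketch below is a hypothetical illustration, not MetaModels.ai's actual workflow: the `ImageReview` record, the check names, and `can_publish` are all assumptions chosen to mirror the failure types shoppers flagged (color, shape, proportions, texture).

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# Checks a human reviewer must sign off on before an image goes live (assumed list).
REQUIRED_CHECKS = ("color", "shape", "proportions", "texture")

@dataclass
class ImageReview:
    image_id: str
    reviewer: Optional[str] = None          # None means no human has reviewed it
    passed_checks: Set[str] = field(default_factory=set)

def missing_checks(review):
    """Return the required checks that have not been passed, in a fixed order."""
    return [c for c in REQUIRED_CHECKS if c not in review.passed_checks]

def can_publish(review):
    """An image is publishable only with a named reviewer and every check passed."""
    return review.reviewer is not None and not missing_checks(review)

review = ImageReview("sku-001-front", reviewer="specialist-a",
                     passed_checks={"color", "shape", "proportions"})
blocked_on = missing_checks(review)   # texture has not been verified yet
review.passed_checks.add("texture")
ready = can_publish(review)
```

The design choice here is that volume targets cannot override the gate: an image with no reviewer, or with any unchecked attribute, simply never reaches the product page.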

Mistake 3: Copying Real People's Likenesses Without Consent

Some brands are producing AI models that closely replicate the appearance of real influencers, models, or public figures without authorization. Whether deliberate or the result of marketing teams not understanding how AI image platforms generate outputs, the exposure is the same: immediate legal and reputational risk.

Real Incidents from 2025-2026

Melanie Kieback: In February 2026, Berlin-based content creator Melanie Kieback (565,000+ Instagram followers) posted a video alleging that a fashion brand had created an "AI twin" of her to promote products. She shared side-by-side comparisons showing the AI model wearing identical outfits from her 2023 modeling photos. The physical details matched closely: blue eyes, eyebrows, freckles, and a distinct bump on her nose. She stated: "They say imitation is the highest form of flattery... but I don't feel flattered. This can't be the future and should not be normalised."

Arielle Lorre / Skaind: Beginning in late March 2025, podcaster and influencer Arielle Lorre reported that skincare brand Skaind used an AI-generated deepfake of her in TikTok ads, showing a manipulated video appearing to depict her being interviewed and promoting their product. Lorre stated: "The entire podcast interview of me promoting this product was digitally manipulated... Not only is it illegal, but it also dilutes my brand and affects the trust I've been building with my audience." She sent a cease-and-desist letter and is pursuing legal action.

Molly Baz / Shopify: In September 2025, cookbook author and food influencer Molly Baz accused Shopify of using a website template image that was strikingly similar to the cover of her cookbook. She called it a "sicko AI version" of her. Shopify pulled the template from its website.

The Legal Exposure

These incidents aren't just PR problems — they have real legal teeth. A lawyer commenting on the Kieback case stated: "Because this claim involves commercial use for an advertisement, this is one of the rare situations where AI twins are actionable under the law under state right of publicity law."

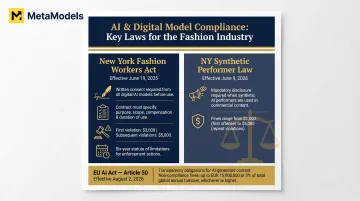

The New York Fashion Workers Act, which took effect June 19, 2025, creates specific requirements:

- Clients must obtain clear written approval from a model before creating or using a digital replica

- The approval must specify scope, purpose, rate of pay, and length of time the replica will be used

- Penalties: $3,000 for first violations, $5,000 for subsequent violations

- Models have a private right of action with a six-year statute of limitations

New York's Synthetic Performer Law, effective June 9, 2026, requires disclosure of synthetic performers in advertising, with fines of $1,000-$5,000 for non-compliance.

How to Prevent Likeness Replication

The fix comes down to platform choice and internal process:

- Use AI model platforms that generate original synthetic models from curated, purpose-built libraries — not tools that scrape or source real people's images during generation

- Audit the AI stack your team uses and document exactly where model appearance data originates

- Require written consent protocols for any real-person inputs before production begins

- Run a reverse image check on AI model outputs before publishing to catch unintended likeness matches
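The written-consent step above can be backed by a simple pre-production check. The sketch below is a minimal illustration under stated assumptions: the `ReplicaConsent` record and `consent_gaps` function are hypothetical, and the fields simply mirror the items the New York Fashion Workers Act requires an approval to specify (scope, purpose, rate of pay, and length of use). It is a bookkeeping aid, not a substitute for legal review.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class ReplicaConsent:
    model_name: str
    scope: str
    purpose: str
    rate_of_pay: str
    start: Optional[date]
    end: Optional[date]
    signed_in_writing: bool

def consent_gaps(consent):
    """Return the list of missing or invalid items; an empty list means complete."""
    gaps = []
    for field_name in ("scope", "purpose", "rate_of_pay"):
        if not getattr(consent, field_name).strip():
            gaps.append(field_name)
    # The length of use must be a real, forward-running date range.
    if consent.start is None or consent.end is None or consent.end <= consent.start:
        gaps.append("duration")
    if not consent.signed_in_writing:
        gaps.append("written approval")
    return gaps

complete = ReplicaConsent("Example Model", "e-commerce product pages",
                          "spring catalog imagery", "USD 500 per day of use",
                          date(2026, 1, 1), date(2026, 6, 30), True)
incomplete = ReplicaConsent("Example Model", "e-commerce product pages",
                            "", "USD 500 per day of use",
                            date(2026, 1, 1), date(2026, 6, 30), False)
```

Here `consent_gaps(complete)` returns an empty list, while `consent_gaps(incomplete)` reports the missing purpose and the missing written approval, so production can be blocked until both are resolved.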

Mistake 4: Not Disclosing AI-Generated Imagery to Shoppers

Research from Stylitics found that 59% of shoppers want clear labeling when imagery is AI-generated—not because they reject AI outright, but because disclosure reads as a sign of honesty. Brands that stay silent lose trust on two fronts: shoppers feel misled when they discover the omission, then trace poor purchase outcomes—wrong fit, inaccurate texture—back to it.

What Adequate Disclosure Looks Like

Effective disclosure can take multiple forms:

- A visible "Virtual Model" label on product detail pages

- A brief disclaimer in campaign copy

- A note in the product image alt text

- Icons or watermarks indicating AI-generated content

Regulators in several markets are moving toward mandating AI content labeling. Article 50 of the EU AI Act, which applies from August 2, 2026, requires labeling of AI-generated content, with fines of up to EUR 15M or 3% of global turnover for non-compliance. With that deadline approaching, early adoption is a reputational advantage, not just a compliance checkbox.
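Disclosure is easiest to keep consistent when it is generated from a single flag rather than typed by hand. The sketch below is a hypothetical illustration: the `ProductImage` record and both helper functions are assumptions, and the exact wording a regulator would accept is not something this example settles. It covers two of the forms listed above, the visible "Virtual Model" label and the alt-text note.

```python
from dataclasses import dataclass
from typing import Optional

AI_LABEL = "Virtual Model"  # visible badge text, taken from the list above

@dataclass
class ProductImage:
    description: str
    ai_generated: bool

def alt_text(image: ProductImage) -> str:
    """Append an AI note to the alt text so the disclosure reaches screen readers too."""
    if image.ai_generated:
        return f"{image.description} (AI-generated image)"
    return image.description

def badge(image: ProductImage) -> Optional[str]:
    """Return the visible label for AI imagery, or None for traditional photography."""
    return AI_LABEL if image.ai_generated else None

ai_shot = ProductImage("Navy wool blazer, front view", ai_generated=True)
studio_shot = ProductImage("Navy wool blazer, front view", ai_generated=False)
```

Because both outputs derive from the same `ai_generated` flag, a product page cannot show the badge while the alt text stays silent, or the reverse.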

The Counterargument: Will Disclosure Reduce Conversions?

Some brands worry that disclosure will hurt sales. The Stylitics study found the opposite: shoppers who received clear disclosure were more likely to trust the brand overall. When explicitly told they were viewing AI-generated models, 60% felt neutral or positive—36% said "interesting but not a big deal," 24% said "Cool! That's smart"—while 31% reacted negatively.

Shoppers who are open to AI imagery—a majority in that study—respond positively to transparency. Omission doesn't convert skeptics—it confirms their suspicions. Shoppers in the study put it plainly: "It makes the brand feel more honest" and "I feel less cheated if it's disclosed."

Broader consumer sentiment supports this finding: 63% of US consumers believe brands have a duty to disclose AI use in marketing.

Mistake 5: Deploying AI Models Everywhere Instead of Strategically

AI model efficiency is genuinely compelling — but brands that swap out their entire visual strategy for AI-generated imagery, across hero shots, lookbooks, social campaigns, and product pages alike, skip the critical question: where does AI actually perform well, and where does it create friction? That misjudgment drives consumer backlash and degrades quality at the brand's most visible touchpoints.

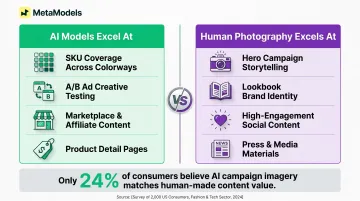

Where AI Models Genuinely Outperform Traditional Photography

AI models excel in specific contexts:

- Covering every SKU across multiple colorways without additional shoots

- Generating ad creative variations quickly for A/B testing

- Producing content for marketplaces and affiliate sites where speed outweighs campaign-level polish

- Delivering consistent, accurate garment imagery for product detail pages

A Stylitics case study with luxury retailer Milaner reported a 157% increase in conversion rate and a 40% boost in shopper engagement after implementing AI-powered on-model imagery for product pages.

Where Human Photography Remains More Effective

Real human faces generate stronger emotional resonance and social sharing behavior than current AI-generated equivalents. AI struggles in:

- Hero campaign images where emotion and lifestyle storytelling carry the brand

- Lookbooks built to communicate a distinct brand identity and aesthetic

- High-engagement social content: Instagram posts, influencer collaborations, narrative-driven campaigns

- Press materials where authenticity shapes how media covers the brand

Consumer sentiment reflects this gap. A Vogue Business/GQ survey of 250 readers in April 2026 found that only 24% believe AI-generated campaign imagery can match the value of human-made content. A peer-reviewed study reinforced this, finding consumers rate AI models lower than human models in fashion advertising — with the gap widest for luxury brands.

Luxury brands' deployments reflect the same tension. Valentino released an AI-generated video for its Garavani DeVain handbag but faced a mixed response, with some consumers saying "It doesn't feel very Valentino." MCM Worldwide used AI for "campaign extensions," stylized environments that built on traditional photography rather than replacing it. Estée Lauder has taken a slow and cautious approach, setting guardrails around its use of the technology to avoid backlash.

How to Use AI Models in Fashion Without the Backlash

The common thread across all five mistakes comes down to intent. Brands treat AI models as a shortcut — to cost savings, diversity optics, or faster content — rather than applying the same strategic standards they'd bring to any other brand asset.

Principles of Responsible AI Model Adoption

Diversity in the model library, not just the campaign. Your AI model library should cover ethnicity, body type, and age — and those models need to appear across all products, not just hero images. Pair that with genuine hiring commitments to diverse human talent; AI representation shouldn't be a substitute for it.

Human review, not just automated output. Quality control workflows that validate color, shape, proportions, and texture before content goes live are non-negotiable. Platforms like MetaModels.ai assign fashion specialists to review every AI-generated image for garment accuracy — because speed that produces inaccurate product visuals isn't a benefit.

Original synthetic models only. Avoid any tool that scrapes or replicates real people's likenesses. Maintain documented consent protocols for any real-person inputs, and confirm your AI stack complies with right of publicity laws and emerging regulations like the New York Fashion Workers Act.

Consistent disclosure. Label AI-generated imagery on product pages, in campaign copy, and across social content. Brands that disclose proactively build trust; brands that wait get caught scrambling when regulation catches up.

Strategic deployment. AI models belong in high-volume catalog content, A/B testing, and secondary channels. Hero campaigns, lookbooks, and social content where emotional connection drives conversion — those still need human photography.
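The deployment split can be written down as an explicit routing rule so that teams do not decide case by case under deadline pressure. The sketch below is one possible encoding, assuming hypothetical content-type names; the two sets simply restate where this article says AI performs well and where human photography remains stronger.

```python
# Content types where this article says AI models perform well (names are assumed).
AI_SUITED = {
    "product_detail_page",
    "colorway_variant",
    "ab_test_creative",
    "marketplace_listing",
}

# Content types where this article says human photography remains more effective.
HUMAN_SUITED = {
    "hero_campaign",
    "lookbook",
    "social_post",
    "press_material",
}

def recommended_source(content_type: str) -> str:
    """Map a content type to an image source; unknown types go to a human decision."""
    if content_type in AI_SUITED:
        return "ai_model"
    if content_type in HUMAN_SUITED:
        return "human_photography"
    return "needs_review"

plan = {ct: recommended_source(ct)
        for ct in ("product_detail_page", "hero_campaign", "email_banner")}
```

Anything not covered by either set falls through to `needs_review` instead of defaulting to AI, which keeps new formats from silently joining the high-volume pipeline.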

MetaModels.ai: Built Around These Principles

MetaModels.ai is designed to put these principles into practice. The platform offers:

- Curated AI model library with genuine diversity across ethnicity, body type, and age

- Human fashion specialists review every output for color, shape, and garment accuracy before delivery

- Original synthetic models with no royalty or consent exposure — unlimited commercial usage rights, no release forms required

- Ready-to-publish images and video up to 4K resolution, designed for catalog scale without losing brand consistency

Frequently Asked Questions

Which fashion brands are using AI models?

Confirmed adopters span fast fashion (H&M, Mango), mid-market (Levi's), and luxury (Valentino, Burberry, Hugo Boss). Brands are using AI models for catalog photography, campaign imagery, and social content.

Is it legal for brands to create AI models that resemble real people?

Using AI to replicate a real person's likeness for commercial purposes without consent can violate right of publicity laws. The New York Fashion Workers Act requires written consent for model digital replicas. Brands should use purpose-built synthetic model libraries to stay on the right side of these requirements.

Do shoppers trust AI-generated fashion images?

Most shoppers are open to AI imagery, but trust hinges on accurate execution and transparency. Research shows 59% want clear labeling, and shoppers lose confidence when garment details look inaccurate: incorrect colors, unnatural draping, or missing textures are enough to kill a sale.

Should brands disclose when they use AI models?

Yes. Disclosure is both an ethical best practice and an emerging legal expectation in several markets. 60% of shoppers feel neutral or positive when AI use is clearly labeled, so transparent disclosure tends to build trust rather than undermine it.

What is the biggest technical mistake brands make with AI fashion models?

Garment accuracy failures—incorrect draping, color, texture, and proportion—cause the most damage. Inaccurate product images drive returns (28% of apparel returns stem from appearance mismatch) and give shoppers a reason not to come back.

Can AI models replace human models entirely in fashion?

AI models work best alongside human photography, not as a wholesale replacement. Hero campaigns, lookbooks, and high-engagement social content still benefit from the emotional resonance that real human models deliver. Only 24% of readers surveyed by Vogue Business and GQ think AI campaign content can match the value of human-made content.