This article examines the timeline of Mango's AI model adoption, the technical process behind it, the business logic driving the shift, the gaps it reveals, and what other fashion brands can learn from it.

TLDR

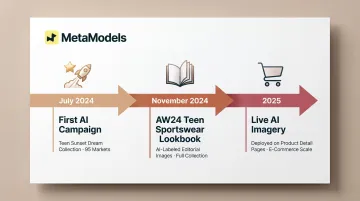

- Mango progressed from its first AI-generated Teen campaign in July 2024 to deploying AI imagery on live PDPs by 2025

- Generative AI trains on real garment photos to produce editorial-quality on-model visuals, with human art teams reviewing each output

- AI imagery removes most studio, model, and crew costs from the shoot itself, and speeds up content output across hundreds of SKUs

- The case also exposes open questions around model diversity and disclosure that brands adopting AI imagery still need to answer

Mango's AI Imagery Evolution: From Experiment to E-Commerce Standard

Mango's AI imagery push didn't emerge in isolation. Since 2018, the company has built more than fifteen machine learning platforms covering pricing (Midas), product recommendations (Gaudi), customer service (Iris), and design inspiration (Inspire). AI imagery is one piece of a broader digital transformation under its 2024–2026 Strategic Plan — but it's moved faster than most.

Here's how that progression unfolded:

- July 2024: Mango launched the first fully AI-generated campaign for its Teen line's Sunset Dream limited-edition collection, rolled out across 95 markets. The company described itself as "a pioneer in the fashion industry," signaling an intent to lead rather than follow.

- November 2024: Mango extended AI model use to its AW24 teen sportswear lookbook, with imagery set in dance studios and outdoor locations. Mango explicitly labeled these images as AI-generated — a transparency step that would prove important as the technology moved from experimental to operational.

- 2025: Mango became one of the first major fashion retailers to deploy AI-generated images on Product Detail Pages, replacing the main on-model shots that directly influence purchase decisions.

Why This Progression Matters

Most fashion brands use AI for aspirational lifestyle shots — backgrounds, settings, and mood imagery. Mango's move to replace PDP on-model photography crosses a different threshold entirely.

According to Stylitics research, 76% of shoppers say on-model photos are the most useful format for purchase decisions. Placing AI imagery at that conversion-critical touchpoint isn't an experiment — it's a production decision with direct revenue implications. That's the line Mango crossed in 2025.

Inside the Process: How Mango Builds AI Fashion Imagery

Mango's workflow combines automated AI generation with human editorial oversight to maintain brand quality standards.

Starting With Real Garments

The process begins with physical garment photography — packshots or studio shots of the actual garment. These images serve as the training foundation, ensuring the AI learns the real fabric, texture, and construction of each piece. Unlike text-to-image tools that invent garments from style prompts, Mango's model is anchored to the actual product.

Training the AI Model

Using real garment images, Mango trains a generative AI model to position each specific garment on a model. According to Mango, the primary technical challenge is "achieving images that have the same editorial quality as a standard fashion campaign with real models."

Generating Output Variations

Once trained, the AI generates multiple on-model image variations with different:

- Poses and model positions

- Styling contexts and accessory combinations

- Settings and backgrounds

- Lighting and mood variations

Variations that would otherwise require full reshoots — new locations, new models, new styling days — can be generated in minutes. That compression in turnaround time is the core operational case for the workflow.
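
The variation axes listed above can be thought of as a simple combinatorial grid. The sketch below is illustrative only and does not reflect Mango's actual tooling: the `VariationRequest` type, the `build_requests` helper, and every pose, styling, setting, and lighting value are hypothetical. It shows only how a handful of options per axis multiplies into many candidate images for a single garment.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class VariationRequest:
    """One candidate on-model image to generate (hypothetical structure)."""
    sku: str
    pose: str
    styling: str
    setting: str
    lighting: str


def build_requests(sku, poses, stylings, settings, lightings):
    """Return one request per combination of the four variation axes."""
    return [
        VariationRequest(sku, pose, styling, setting, lighting)
        for pose, styling, setting, lighting in product(
            poses, stylings, settings, lightings
        )
    ]


# Example values are invented for illustration.
requests = build_requests(
    "SKU-0001",
    poses=["standing", "walking"],
    stylings=["with bag", "no accessories"],
    settings=["studio", "street"],
    lightings=["soft daylight"],
)
print(len(requests))  # 2 * 2 * 2 * 1 = 8 combinations
```

In a traditional shoot, each new setting or styling in that grid could mean another shoot day. Here it is one more entry in a list, which is why the number of candidates grows faster than the number of people available to review them.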

Human Editorial Review

Generated images are reviewed and selected by Mango's art and photography studio team, who also retouch, edit, and master the final images. This human-in-the-loop step maintains brand consistency and catches garment accuracy issues before images go live.
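
One way to picture this human-in-the-loop step is as a publishing gate: nothing goes live unless a named reviewer has approved it. The following is a minimal sketch under that assumption, not a description of Mango's internal systems. The `GeneratedImage` record, the `review` and `publishable` functions, and the sample reviewer names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class GeneratedImage:
    """An AI-generated image awaiting editorial review (hypothetical record)."""
    image_id: str
    sku: str
    status: Status = Status.PENDING
    reviewer: Optional[str] = None
    note: str = ""


def review(image, reviewer, approve, note=""):
    """Record a human decision on a generated image."""
    image.status = Status.APPROVED if approve else Status.REJECTED
    image.reviewer = reviewer
    image.note = note
    return image


def publishable(images):
    """Only images explicitly approved by a named reviewer may go live."""
    return [
        img for img in images
        if img.status is Status.APPROVED and img.reviewer
    ]


batch = [
    GeneratedImage("img-1", "SKU-0001"),
    GeneratedImage("img-2", "SKU-0001"),
    GeneratedImage("img-3", "SKU-0001"),  # never reviewed, stays pending
]
review(batch[0], "art-team", approve=True)
review(batch[1], "art-team", approve=False, note="sleeve seam distorted")

print([img.image_id for img in publishable(batch)])
```

The design choice worth noting is that pending images are excluded by default: an image that nobody has looked at is treated the same as a rejected one.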

Cross-Functional Collaboration

The workflow involves multiple internal teams:

- Design team — Sets creative direction, color palette, and seasonal standards

- Art and styling team — Selects final imagery and flags editorial inconsistencies

- Dataset and AI model training team — Manages model training, versioning, and technical infrastructure

- Photography studio — Performs final retouching, color grading, and image mastering

The result is a production model where AI absorbs the repetitive volume work and human teams focus on the decisions that actually affect brand perception.

The Business Case: Cost, Speed, and Scale Benefits

Cost Reduction

Traditional product photography requires models, photographers, stylists, makeup artists, set designers, bookers, location or studio fees, insurance, and post-production. Cost-reduction percentages vary by brand and production scale, and the benefit Mango CEO Toni Ruiz has emphasized is speed: the ability to "speed up content creation."

AI imagery removes or sharply reduces these recurring costs:

- Model booking fees and usage rights

- Photographer day rates

- Stylist, makeup artist, and hair team fees

- Studio rental or location fees

- Travel, catering, and insurance

- Some post-production editing time (Mango's studio team still retouches and masters the final images)

For a brand like Mango managing thousands of seasonal SKUs, those line items add up fast.

Speed and Volume Advantages

Fast fashion brands drop hundreds of new SKUs monthly. The ability to generate on-model imagery at the pace of product development — rather than scheduling shoots weeks in advance — represents a structural competitive advantage.

For context, Pixel Moda, a global e-commerce media producer, creates AI-generated and AI-assisted product and campaign content for over 900 global brands, producing 14 million pictures and videos per year. That volume would be hard to reach with traditional photography workflows alone.

Consistency and Localization

AI imagery enables brands to:

- Maintain visual consistency across entire product lines

- Produce localized variations (different styling, settings, or model presentations) for different markets without additional shoots

- Scale editorial quality standards across thousands of products

- Generate seasonal campaign imagery aligned with specific regional preferences

Meeting the Conversion Benchmark

Operational efficiency only matters if the imagery actually converts. According to Stylitics' 2025 consumer research, 71% of shoppers could not tell the difference between a real photo and an AI-generated reference image, or perceived only small differences. Separately, 60% felt neutral or positive when told an image was AI-generated.

The bar for AI imagery in e-commerce isn't perfection. It's whether the imagery is compelling enough to drive purchase without introducing distracting errors. By placing AI images on live PDPs, Mango is betting that its output clears this bar.

The Diversity and Transparency Gap in Mango's AI Approach

The Diversity Limitation

Despite AI theoretically making it easier to show clothing on a wider range of body types, skin tones, and ages, Mango's current AI imagery defaults to a traditionally narrow model archetype — thin build, light skin. That's a missed opportunity, given what the technology is capable of.

AI model generation platforms can produce diverse representations at no extra cost compared to narrow archetypes. Defaulting to traditional beauty standards is a business decision, not a technological constraint.

The Transparency Question

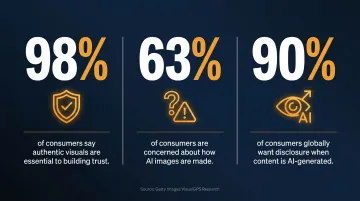

Mango includes a disclaimer on AI-generated PDPs, but its placement has been characterized as subtle. This matters because Getty Images research found that approximately 90% of consumers globally want to know whether an image or video was created using AI.

Key findings from the Getty Images VisualGPS report "Building Trust in the Age of AI":

- 98% of consumers say "authentic" images and videos are essential to establishing trust

- 63% are concerned about how AI images and videos are made

- Sentiment skews more negative toward brands using AI to create people or products because consumers "don't want to feel that they have been fooled or lied to"

Subtle disclaimers buried away from the product image don't meet that standard — especially when AI-generated visuals appear directly at the point of purchase.

The Broader Ethical Conversation

AI imagery removes not just model fees but much of the crew work that depends on shoot production. WWD reported that photographers, models, stylists, and hair and makeup artists are already experiencing job displacement. Model Elle Dawson noted that "regular jobs are gone" for newer models.

This labor impact shapes how fashion brands should position AI adoption publicly. Brands that acknowledge this tradeoff openly are better positioned than those that treat it as a footnote.

What Other Fashion Brands Can Learn from Mango's Playbook

The Replicable Framework

Mango's approach — training AI on real garment photos, generating on-model variations, applying human editorial review — is a workflow other brands can adopt regardless of size. The key inputs are:

- Quality packshot photography

- Clear editorial standards for output

- Human review process for quality assurance

- Transparent disclosure practices

Brands don't need Mango's scale or technical infrastructure to implement similar workflows. Multiple AI fashion imagery platforms now offer accessible alternatives to building proprietary technology in-house.

The Diversity and Inclusion Opportunity

Where Mango has defaulted to a narrow model type, brands adopting AI imagery now have a genuine opportunity to build more representative visual libraries from the start. Platforms like MetaModels.ai offer curated AI model libraries spanning diverse ethnicities, body types, and demographics, enabling brands to scale both content production and inclusivity simultaneously.

Brands that lead with inclusive AI-generated imagery can differentiate themselves on representation — without adding headcount or photoshoot budget.

The Strategic Imperative

McKinsey's State of Fashion 2026 report found that **more than 35% of fashion executives already use generative AI** in functional areas including image creation, copywriting, and product discovery. For most fashion brands, the question is no longer whether to adopt AI imagery workflows — it's how quickly.

Brands that haven't evaluated these tools yet face a narrowing window: as AI-generated imagery becomes standard, the cost and speed advantages early adopters enjoy today will simply become the industry baseline.

Frequently Asked Questions

Is Mango's AI imagery only used for marketing campaigns or also for product pages?

Mango initially used AI for campaign visuals (the 2024 Teen line Sunset Dream campaign), then expanded to lookbooks. By 2025, the company deployed AI-generated images directly on Product Detail Pages — the images shoppers see when evaluating a product to purchase.

How does Mango's AI ensure the garment looks accurate and not distorted?

The process starts with real photographs of each garment, which train the AI model on accurate fabric and fit. A human art and photography team then reviews, selects, and retouches all AI-generated outputs before they go live.

What happened to the photographers and models Mango previously worked with?

Mango has not publicly detailed the impact on its existing creative workforce. AI imagery does reduce demand for models, photographers, and stylists — and labor displacement remains an unresolved concern that most brands, Mango included, have addressed only partially in public statements.

Do consumers know when Mango's product images are AI-generated?

Mango includes an AI-generated disclaimer on affected images, though its visibility has been questioned. Getty Images research found that approximately 90% of consumers globally want to know whether an image or video was created using AI.

Can smaller fashion brands realistically replicate what Mango is doing?

Yes. The core workflow — packshot photography, AI model generation, and human review — is accessible to brands of all sizes. Dedicated AI fashion imagery platforms make this far more practical than building proprietary technology from scratch.

Why did Mango start with its Teen line for AI imagery rather than its main collection?

A likely reason is risk. Teen and youth lines, particularly limited-edition drops, offer a lower-risk testing ground: smaller collections, a digitally native audience, faster production cycles, and less brand equity at stake if image quality falls short of expectations.