Introduction

Brand trust is the variable that determines whether AI on-model imagery drives conversions or triggers backlash. AI imagery can cut content costs by as much as 90%, but that efficiency means nothing if shoppers lose confidence in how a garment actually looks or fits. The real question isn't whether to use AI; it's how to deploy it without eroding the credibility that turns browsers into buyers.

In one survey, 71% of shoppers said high-quality AI imagery looked the same as real photography or differed only slightly. Trust breaks down not because imagery is AI-generated, but because execution details such as fabric texture, garment draping, and fit accuracy fall short of what shoppers expect.

When those details are right, AI on-model imagery can build shopper confidence as effectively as traditional photography. Three conditions make the difference: execution accuracy, transparent disclosure, and brand consistency.

TLDR

- 76% of shoppers say on-model photos are the most helpful format for purchase decisions, making them the highest-impact visual for fashion e-commerce

- 71% of shoppers say high-quality AI imagery looks the same as real photography or differs only slightly; trust erodes mainly when garment details like fabric draping or button colour are inaccurate

- Nearly 90% want to know when imagery is AI-generated, and proactive disclosure tends to strengthen brand trust rather than undermine it

- Inclusive representation builds purchase confidence — AI makes diverse body types and demographics viable at scale, closing gaps traditional shoots cannot

Why On-Model Imagery Is the Visual Trust Battleground for Fashion E-Commerce

Product imagery in fashion e-commerce serves two distinct jobs simultaneously: providing information (will this fit my body?) and providing inspiration (how will this fit into my life?). On-model imagery uniquely delivers both at once, which is why 76% of shoppers say on-model photos are the most useful format for making purchase decisions — far exceeding flat lays or collage formats.

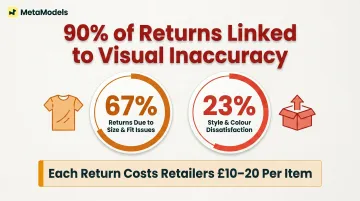

A shopper's trust in product photography directly shapes purchase confidence. When imagery is unclear, unflattering, or unrealistic, shoppers either leave without buying or purchase with high uncertainty. Size and fit issues account for 67% of fashion returns and style or colour dissatisfaction for another 23%, so roughly 90% of returns stem from expectations that more accurate visuals could help set.

Each return costs retailers £10-20 per item. Visual accuracy isn't a UX nicety — it's a direct revenue issue.

The growing "representation gap" compounds this trust erosion. Traditional photoshoots limit how many models, sizes, and looks a brand can produce because of high costs. At Fashion Month Fall 2022, only 2.34% of model appearances were plus-size, and models over 50 represented just 0.52%. Many shoppers rarely see clothing on a body that resembles theirs, and that absence of representation is itself a form of trust erosion.

Research from the University of Bath found that thin models can actually hinder purchase decisions, because customers cannot assess how garments would fit their own body type. The study found no evidence to support the assumption that thin models drive higher sales. The data points in the same direction:

- Shoppers disengage when they don't see their size or body type represented

- Poor fit is the single largest return driver across fashion categories

- Brands with broader size representation report stronger customer retention

AI on-model imagery can address all three gaps (information, inspiration, and representation) at a fraction of traditional photoshoot costs. The caveat is that execution quality and transparency largely determine whether that trust is built or broken.

What Shoppers Actually Think About AI On-Model Imagery

When AI imagery quality is high, most shoppers struggle to tell the difference. A survey of 411 shoppers by Stylitics and Aha Studio found that 71% reported AI and real photos looked the same or had only small differences. The medium itself, it turns out, is not the primary trust variable.

What shifts the equation is disclosure. When told explicitly that imagery was AI-generated, respondents' emotional reactions spread across a spectrum:

- 36% felt neutral ("interesting but not a big deal")

- 24% felt positive ("Cool! That's smart")

- 31% reacted negatively (citing authenticity concerns)

- 8% had already guessed the images were AI

Total neutral or positive: 60%

Reception also differed by gender. Men responded more favourably (30% positive vs. 25% negative), while women showed greater scepticism (20% positive vs. 35% negative).

Three Consumer Personas Define Trust Requirements

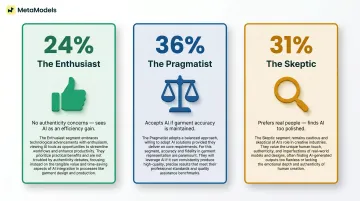

The research identified three distinct shopper personas, each requiring different trust signals:

- The Enthusiast (24%): Sees AI as a time and cost saver with no authenticity concerns.

- The Pragmatist (36%): Accepts AI provided garments are represented accurately, but still scrutinises execution.

- The Skeptic (31%): Prefers real people; finds AI imagery too polished to feel genuine.

Trust-Breaking Details Shoppers Noticed

Shoppers identified specific execution failures that collapsed trust:

- Wrong-coloured buttons

- Unnatural fabric draping with no wrinkles or movement

- Overly airbrushed skin lacking realism

- Inaccurate garment proportions or fit

Getty Images research found that AI-generated depictions of people are perceived as more misleading than AI-generated non-human subjects. If a campaign addresses authenticity or relies on "real people and real connections," AI-generated content may not fit.

Nearly 90% of consumers globally want to know whether an image is AI-generated, and 98% agree authentic images are essential for establishing trust. That level of consensus makes transparency less a brand choice than a baseline expectation. Transparency alone is not enough, though, which raises the question of what conditions actually separate trust-building AI imagery from trust-breaking imagery.

The Three Factors That Determine Whether AI Imagery Builds or Breaks Trust

Factor 1 — Execution Accuracy: Garment Details Matter More Than AI vs. Real

Shoppers do not process imagery as "AI vs. real"; they process it as "accurate vs. inaccurate." When AI imagery accurately renders garment draping, fabric texture, stitch details, and proportional fit, it can build the same purchase confidence as a studio photograph. A single detail error, such as a flat, wrinkle-free rendering of what should be a flowing linen dress, can collapse that confidence entirely.

Garment accuracy, not photographic origin, is the primary trust variable.

Why Human Review Matters

Fully automated AI pipelines carry real accuracy risk — generative models can hallucinate garment details. MetaModels.ai builds human review into its process: fashion specialists check every image for colour, shape, and proportion before delivery. That single step tackles the most consistent shopper complaint: products that look different in person than they do online.

Factor 2 — Transparency and Disclosure: Honesty Is a Brand Equity Signal

59% of shoppers want clear labelling of AI imagery (e.g., "Virtual Model"), and the majority interpret disclosure as a sign of honesty rather than a red flag. Transparency is not just a compliance question; it's a brand equity decision. Brands that proactively label AI imagery can signal innovation and integrity at the same time.

Disclosure Does Not Suppress Conversion

73% of Gen Z and Millennial consumers say knowing an ad used AI would increase or have no effect on purchase likelihood. Clear AI disclosure also ranks as the third-highest driver of ad attention, trailing only high-quality visuals and humorous content.

Research from SMU found that basic "AI-generated" labels can trigger scepticism. Detailed disclosures — specifically, explaining that AI was trained on licensed photos of real, diverse people — brought brand evaluations to parity with brands using human models across all three measured outcomes:

- Brand attitude

- Purchase intention

- Willingness to recommend

Factor 3 — Brand Consistency: Generic Templates Carry Risk

Transparency earns trust at the message level; visual consistency earns it at the brand level. AI imagery erodes that trust when it feels "off-brand": generic poses, a mismatched aesthetic, or visuals that don't align with established brand language. Template-based AI tools that produce the same look for every brand carry real risk here. AI on-model imagery should be calibrated to the brand's own lighting style, colour grading, and model aesthetic.

Platforms offering custom model creation and brand-specific scene design enable brands to maintain visual identity while scaling production.

The Return Policy Effect

55% of shoppers feel more comfortable buying products shown in AI imagery when a clear return policy is in place. AI imagery doesn't have to carry the full burden of building confidence on its own. Brands need a risk-reduction framework: accurate visuals paired with post-purchase assurance.

How Inclusive AI Models Strengthen Brand Trust Through Representation

Traditional photography's most significant limitation is cost-driven underrepresentation. Brands can only afford to book a limited range of models per shoot, so shoppers whose body types, skin tones, or ages fall outside that range rarely see themselves reflected in product imagery, and that invisibility can signal that the brand was not made for them.

Inclusive AI on-model imagery is both an ethical choice and a measurable commercial strategy. When a shopper sees a garment on a model that reflects their own body type or demographic, their ability to assess fit and make a confident purchase decision increases.

University of Bath researchers who studied body-size diversity in retailers' model photography challenged a long-held industry belief: thin models do not drive sales. In fact, they hindered purchase decisions because customers couldn't assess garment fit for their own body.

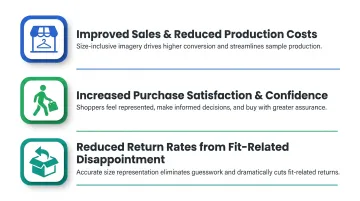

Size-inclusive photography delivered three measurable benefits:

- Improved sales and reduced production costs for retailers

- Increased purchase satisfaction and confidence for customers

- Reduced return rates from fit-related disappointment

According to McKinsey's Rise of the Inclusive Consumer report, 45% of US consumers — representing an estimated 120 million shoppers — believe retailers should actively support diverse and inclusive brands. These consumers tend to be younger, female, and more racially diverse, but their purchasing influence extends across all demographic groups.

Platforms like MetaModels.ai offer curated libraries of AI models spanning diverse ethnicities, body types, and demographics. That makes inclusive on-model imagery at scale economically viable for brands of any size, not just large brands with substantial production budgets. Brands that once lacked the budget to represent their full customer base can now close that gap without a single additional shoot.

Transparency Best Practices: How to Disclose AI Imagery Without Hurting Your Brand

The disclosure spectrum runs from explicit "Virtual Model" labels on product pages, through small-print disclaimers, to no disclosure at all. Each approach carries different trust and legal implications. In one survey, 87% of respondents said it is important for an image to be "authentic," and consumers feel less deceived when disclosure is upfront. That stat alone makes disclosure a commercial decision, not just an ethical one.

How Disclosure Framing Matters

How you disclose matters as much as whether you disclose. Labelling AI imagery confidently — "Virtual Model" or "Created with AI" near the product image — reads as innovation. Burying it in footer fine print reads as evasion, and consumers notice the difference.

Practical Disclosure Guidance:

- Place labels near the image, where shoppers can see them without searching

- Use affirmative language ("Virtual Model" or "AI-generated imagery") rather than defensive hedging

- Apply labels uniformly across all AI imagery; inconsistent labelling looks selective and can damage trust more than no labels at all

Disclosure Tends to Raise Trust Scores, Not Lower Them

Shoppers who know they're viewing AI imagery tend to react neutrally or positively, a pattern reported across multiple consumer studies. Clear labelling often lifts brand trust scores, particularly among younger, digitally native audiences. The brands most at risk are those using AI imagery while staying silent about it.

The Backlash Risk Is Real

Several high-profile cases show exactly what that risk looks like in practice:

Levi's (2023): Announced a partnership with Lalaland.ai to "increase diversity" using AI models, triggering intense backlash. Black models criticised the move as cheapening diversity and prioritising cost-cutting over booking real diverse talent.

Mango (2024): AI-generated models were slammed as "false advertising" on TikTok. Critics argued that if both the model and clothing are AI-generated, the advertisement doesn't show how the product actually fits a human body.

Vogue/Guess (2025): A Guess advertisement in Vogue's August 2025 issue featured an AI-generated model, sparking "more upset in the fashion world than Anna Wintour's recent title change." Criticism centred on hyper-symmetrical, impossibly perfect beauty standards.

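In each case, the backlash centred less on the use of AI itself than on how it was framed and executed: diversity claims that sidelined real talent, imagery that couldn't show how clothes fit a real body, and unattainable beauty standards. Proactive disclosure gives you control over that narrative before critics define it for you.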

Frequently Asked Questions

Do consumers actually trust AI-generated fashion model images?

Consumer trust in AI imagery depends on execution quality, not the technology itself. Most shoppers are open to it when garment details are accurate, but trust erodes quickly when AI imagery misrepresents fit, texture, or product details.

Does disclosing AI-generated imagery hurt a fashion brand's conversion rate?

Research suggests disclosure generally does not hurt conversions and can actively build brand trust. The majority of shoppers interpret clear labelling as a sign of honesty, and brands that disclose proactively tend to be viewed more favourably than those that don't.

What specific details make AI fashion imagery look fake or untrustworthy?

The most common execution failures are:

- Unnatural fabric draping with no wrinkles or movement

- Inaccurate button, zip, or stitching details

- Overly airbrushed skin that lacks realism

- Proportions that don't reflect how a garment falls on an actual body

Can AI on-model imagery help reduce product return rates?

Accurate AI on-model imagery has the potential to reduce returns by giving shoppers a more realistic sense of fit and drape — but only when execution quality is high. Inaccurate AI imagery can increase returns by creating misaligned expectations.

How does inclusive AI model imagery impact consumer trust?

Showing clothing on models that reflect diverse body types, skin tones, and demographics helps more shoppers visualise fit on their own bodies, increasing purchase confidence. AI makes this kind of inclusive coverage affordable at scale.

Is it ethical for fashion brands to use AI-generated on-model imagery?

AI on-model imagery is ethically acceptable when brands use it with transparency, accuracy, and genuine inclusion. Ethical concerns arise primarily when it's used deceptively or displaces human workers without acknowledgement.