Introduction

On-model photography has always been the conversion gold standard in fashion e-commerce. Research from Baymard Institute's large-scale usability testing confirms that "Human Model" images are essential for apparel — without them, shoppers experience lower confidence and are less likely to complete a purchase. A Stylitics survey of 411 shoppers reinforces this: 76% said on-model photos are the most useful format for making purchase decisions. Salsify's 2026 Consumer Research Report adds that 61% of shoppers cite product images and videos as the most important factor when deciding to purchase.

That conversion advantage comes with a serious operational cost. Traditional on-model shoots require model booking, studio rental, styling, and post-production — running $80 to $150 per image and $5,000 to $25,000 per shoot day depending on model tier and studio quality.

For D2C brands launching 40–100 SKUs per season, those costs stack up fast.

AI image generation has changed what's operationally possible. Brands can now convert a packshot or flat lay into a full on-model gallery without a single shoot day. This guide breaks down exactly how they're building that capability at scale.

TL;DR

- AI on-model production converts packshots and flat lays into realistic model images by rendering the garment onto a virtual model

- The workflow runs through input preparation, AI garment rendering, human quality review, and delivery as ready-to-publish assets

- D2C brands report cost reductions of roughly 80–85% while maintaining conversion rates

- Ready-to-use images work across PDPs, lookbooks, social ads, and email, with consistent brand identity across every channel

What Is AI On-Model Photo Production?

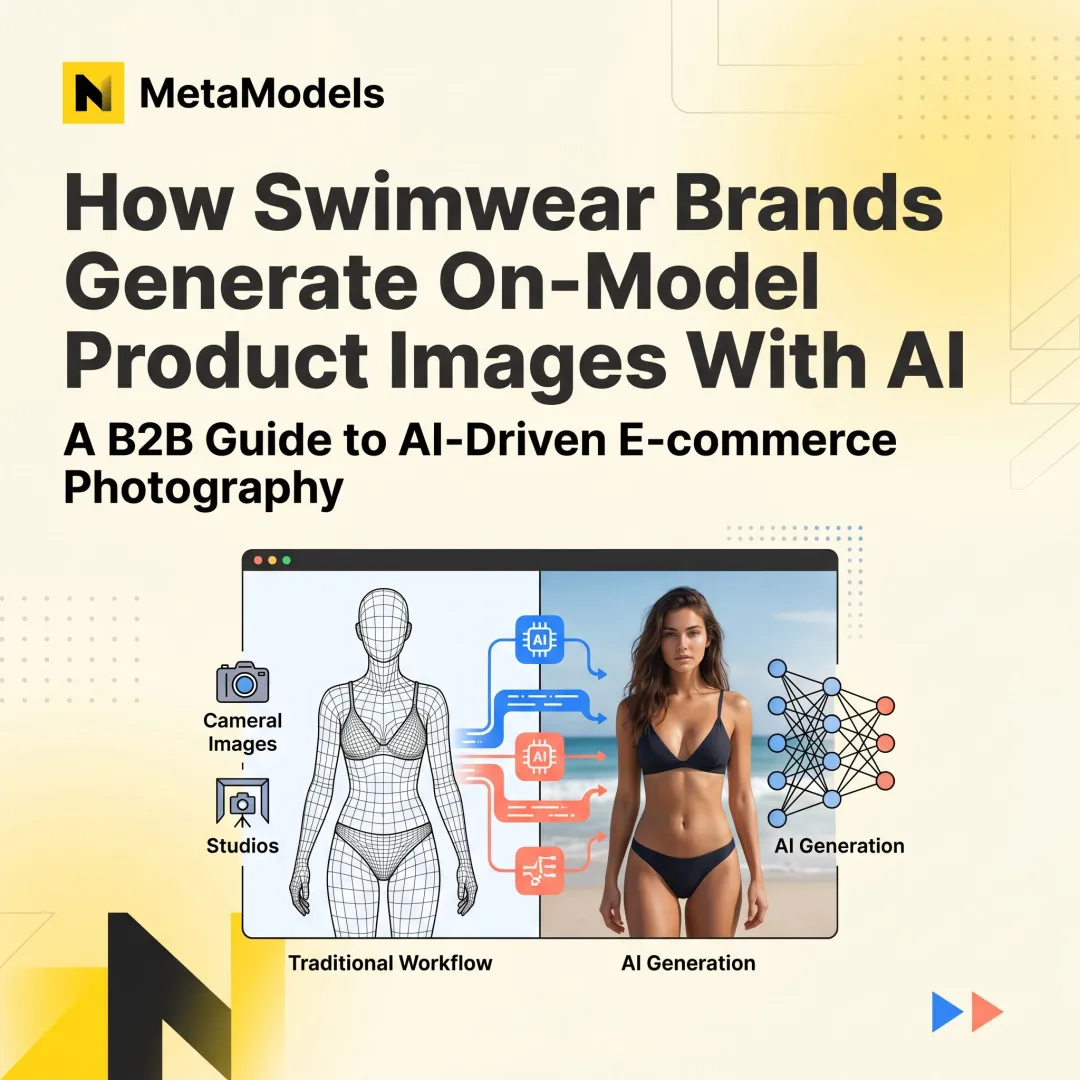

AI on-model photo production is a workflow in which generative AI applies a brand's garment, sourced from a flat lay, packshot, or ghost mannequin image, to a photorealistic AI-generated human model. The goal is output that matches traditional studio photography.

This technology addresses a specific operational gap: D2C fashion brands need frequent, high-volume on-model content but lack the budget or logistics to run traditional shoots for every SKU, colorway, or seasonal variant.

McKinsey projects generative AI could add $150 billion to $275 billion to apparel, fashion, and luxury sectors' operating profits within 3-5 years, with productivity gains exceeding 30% over the next five years.

AI on-model production is not the same as generic AI image generation. Where tools like Midjourney or DALL·E interpret a prompt stylistically, this workflow is garment-first:

- The actual product's fabric, drape, and texture are preserved through rendering

- Output reflects the real product — not an AI interpretation of it

- The garment drives the workflow; the generated scene is secondary

This precision matters because accuracy directly affects conversion and return rates. A product image that misrepresents fit, color, or texture doesn't just disappoint; it drives returns. Narvar research puts the return rate for online apparel purchases at 24%.

How Does AI On-Model Photo Production Work?

AI on-model photo production operates through a defined sequence of stages — each building on the last — from a simple product image to a campaign-ready visual.

Input & Initiation

The workflow begins when a brand uploads a base product image — typically a flat lay, ghost mannequin shot, or packshot — along with brief specifications such as model type, pose preference, or channel destination.

Input quality matters from the first step: image resolution, background neutrality, garment visibility, and completeness set the ceiling on how accurately the AI can render garment details downstream. A poor input compounds through the rest of the workflow and produces substandard final output.
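
The input requirements above can be turned into an automated pre-flight check that runs before upload. The following is a minimal Python sketch, not any platform's actual validation: the `InputImage` fields, the 2000-pixel minimum, and the background tolerance are all illustrative assumptions.

```python
from dataclasses import dataclass

# Illustrative thresholds (assumptions, not platform requirements).
MIN_SHORT_SIDE_PX = 2000
MAX_BACKGROUND_SPREAD = 12  # max gap between RGB channels for a "neutral" background


@dataclass
class InputImage:
    sku: str
    width_px: int
    height_px: int
    background_rgb: tuple[int, int, int]  # average color sampled from the image border
    garment_fully_visible: bool           # set by whoever prepares the packshot


def preflight_issues(image: InputImage) -> list[str]:
    """Return a list of problems; an empty list means the image can be submitted."""
    issues = []
    if min(image.width_px, image.height_px) < MIN_SHORT_SIDE_PX:
        issues.append("resolution too low")
    if max(image.background_rgb) - min(image.background_rgb) > MAX_BACKGROUND_SPREAD:
        issues.append("background not neutral")
    if not image.garment_fully_visible:
        issues.append("garment cropped or obscured")
    return issues


# Hypothetical inputs: one clean packshot, one that fails every check.
good = InputImage("TEE-001", 3000, 4000, (245, 245, 243), True)
bad = InputImage("TEE-002", 1200, 1600, (250, 230, 200), False)
print(preflight_issues(good))  # []
print(preflight_issues(bad))
```

Rejecting an image here costs far less than discovering the problem after rendering and review.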

Core Processing: Garment Rendering & Model Generation

The AI system segments the garment from the input image, maps its structure, texture, and drape characteristics, then synthesizes a photorealistic model wearing that specific garment. Unlike a simple texture overlay, the generation is physics-informed — accounting for how fabric actually behaves on a body.

Model selection works through two paths:

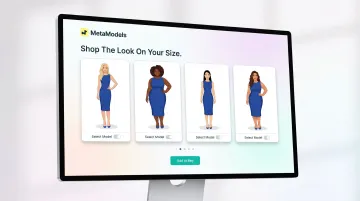

- Curated libraries — Brands choose from AI models with defined attributes: body type, skin tone, age range, and ethnicity

- Custom-built brand models — Platforms like MetaModels.ai offer custom model creation to match specific brand aesthetics

That flexibility serves two purposes: inclusive representation and on-brand visual identity. A sustainable activewear brand targeting Gen Z women needs different model profiles than a luxury menswear label — and AI makes both achievable at scale.

Garment accuracy at this step is what distinguishes production-ready fashion tools from general-purpose image generators. Complex textures, patterns, and fits require rendering engines trained specifically on fashion — not adapted from broader models.

Quality Control & Human Review

Most professional AI on-model platforms include a human review stage where trained editors verify garment accuracy against the original product before approval. Reviewers check for:

- Stitching and seam accuracy

- Print and pattern alignment

- Neckline, hem, and proportion fidelity

Brands that skip quality review risk publishing images where the AI has distorted a garment's key selling features — leading to higher return rates and eroded shopper trust. MetaModels.ai builds human-verified review into the pipeline by default, treating it as a non-optional quality gate.
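
One way to make review a hard gate is to require every check to pass before an image can be published. The sketch below assumes a hypothetical `ReviewResult` record; the three check names mirror the reviewer checklist above, and nothing here reflects MetaModels.ai's actual pipeline.

```python
from dataclasses import dataclass, field

# Check names mirror the reviewer checklist; the identifiers are hypothetical.
REQUIRED_CHECKS = (
    "stitching_and_seams",
    "print_and_pattern",
    "neckline_hem_proportions",
)


@dataclass
class ReviewResult:
    image_id: str
    passed_checks: set[str] = field(default_factory=set)
    notes: str = ""


def is_publishable(review: ReviewResult) -> bool:
    """An image is publishable only if every required check passed."""
    return all(check in review.passed_checks for check in REQUIRED_CHECKS)


def split_by_gate(reviews: list[ReviewResult]) -> tuple[list[str], list[str]]:
    """Return (approved image ids, image ids sent back for re-rendering)."""
    approved = [r.image_id for r in reviews if is_publishable(r)]
    rejected = [r.image_id for r in reviews if not is_publishable(r)]
    return approved, rejected
```

Because `is_publishable` uses `all`, a missing check fails the image by default, which is what makes the gate non-optional.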

Final Output & Delivery

The workflow produces a set of approved, ready-to-publish on-model images, typically in multiple formats and resolutions (up to 4K). They can be used across PDPs, social platforms, paid ads, and campaign lookbooks without manual post-production.

Salsify found that nearly half of shoppers have returned a product because it didn't match its online presentation. Accurate on-model imagery reduces that risk directly — while preserving the conversion advantage that model photography delivers over flat product shots.

Why D2C Brands Are Switching to AI On-Model Photos

The Cost Case: $150 vs. $2.40 Per Image

A realistic cost comparison shows the economics clearly:

Traditional photography:

- Photographer: $1,000-$3,500/day

- Model fees: $600-$1,500/day (agency standard)

- Studio rental: $600-$1,000/day (mid-tier)

- Hair and makeup: $800-$2,400/day (full team)

- Wardrobe stylist: $400-$1,000/day

- Post-production: $20-$40/image (basic retouching)

- Per-image cost: $80-$150

AI-generated on-model:

- Per-image cost: $2.40-$20 depending on platform and volume

ASOS reportedly conducted a 90-day split test across 40,000 SKUs comparing AI-enhanced product images against traditional studio photography. Per-image costs dropped from $18 to $2.40 — an 87% reduction. JungleScout reported that e-commerce brands adopting AI imaging tools achieved an average cost reduction of 85% in product photography — consistent with what early adopters across fashion and apparel are seeing.
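
The arithmetic behind these figures is easy to check. The sketch below recomputes the percentage reduction from the reported per-image costs and projects a season budget. The catalog size and images-per-SKU values are illustrative assumptions, not data from the sources cited.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return (before - after) / before * 100


def season_cost(skus: int, images_per_sku: int, cost_per_image: float) -> float:
    """Total imagery spend for one season."""
    return skus * images_per_sku * cost_per_image


# Reported split-test figures: $18 -> $2.40 per image.
print(round(percent_reduction(18.00, 2.40)))  # 87

# Hypothetical season: 100 SKUs, 5 images each (assumed values).
traditional = season_cost(100, 5, 80.00)   # low end of the $80-$150 range
ai_generated = season_cost(100, 5, 20.00)  # high end of the $2.40-$20 range
print(traditional, ai_generated)           # 40000.0 10000.0
```

Even the least favorable pairing in this sketch (cheapest traditional rate against the most expensive AI rate) works out to a 75% reduction, since `percent_reduction(80, 20)` is 75.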

Speed: From 72-Hour Cycles to Same-Day Launches

A D2C brand launching 40-100 SKUs per season cannot realistically schedule photoshoots for every product. AI on-model workflows can generate a full gallery for every SKU in hours.

Speed examples from real implementations:

- Revolve Group reduced cycle time from product receipt to live image from 72 hours to under 4 hours

- ASOS increased same-day product page updates from 12% to 60% of new arrivals

That kind of throughput changes how brands operate. Trend-reactive launches, mid-season design tests, and last-minute collection additions all become viable when the content bottleneck disappears.

Diversity at Scale — Without the Budget Trade-Off

With traditional shoots, diverse representation across body types, ethnicities, and demographics requires booking multiple models per product — a cost that forces most brands to compromise. AI removes that constraint.

The business case for diversity is clear: Harvard Business Review research analyzing 100+ brands over 3 years found that inclusive brands grew revenue 2.5x faster than non-inclusive peers. Inclusive marketing increased purchase intent by 10 percentage points among the general population and 13 percentage points among underrepresented groups.

Consumer demand supports this: 59% of consumers prefer to buy from brands that stand for diversity and inclusion in online advertising, and 71% expect brands to promote diversity and inclusion.

AI on-model platforms enable brands to show every product on multiple model profiles — different body types, skin tones, ages, and ethnicities — without additional per-shoot cost. Representation stops being a line-item decision.

Built-In A/B Testing and Channel-Specific Variations

Because AI generation isn't bound by shoot-day commitments, brands can create multiple image variations per SKU — different poses, backgrounds, or model types — and systematically test which performs best on PDPs versus paid social versus email.
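
Planning those variations is a combinatorial exercise. As an illustrative sketch, the full test matrix for one SKU can be enumerated with `itertools.product`. The pose, background, and model-profile labels are placeholders, not options from any specific platform.

```python
from itertools import product

# Placeholder option lists (assumptions for illustration).
poses = ["front", "three-quarter", "walking"]
backgrounds = ["studio-white", "street"]
model_profiles = ["profile-a", "profile-b"]


def variant_matrix(sku: str) -> list[dict[str, str]]:
    """Enumerate every pose x background x model combination for one SKU."""
    return [
        {"sku": sku, "pose": pose, "background": bg, "model": model}
        for pose, bg, model in product(poses, backgrounds, model_profiles)
    ]


variants = variant_matrix("DRESS-014")
print(len(variants))  # 12
print(variants[0])
```

The count grows multiplicatively, so in practice a brand would likely test a subset per channel rather than every combination.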

ASOS's AI-enhanced images delivered a 3.2% lift in conversion rate in their split test. Everlane achieved 40% cost savings on non-hero photography while preserving conversion rates on primary PDPs using a hybrid traditional/AI approach.

Over time, brands build a clear picture of which visual presentations drive performance by channel — and can act on that data within hours, not weeks.
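
Deciding which variant performs best takes more than comparing raw conversion rates, because small differences can be noise. Below is a minimal sketch of a two-proportion z-test using only the Python standard library. The visit and order counts are made-up illustration data, and a real experiment would also need a pre-planned sample size.

```python
from math import erf, sqrt


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> tuple[float, float]:
    """Return (z statistic, two-sided p-value) for the difference in conversion rates."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF expressed through the error function.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical data: variant A converts 300 of 10,000 visits, variant B 360 of 10,000.
z, p = two_proportion_z_test(300, 10_000, 360, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the gap between variants is unlikely to be chance alone; it says nothing about whether the lift will hold on a different channel.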

Where AI On-Model Photos Fit in the D2C Content Workflow

AI on-model production is most commonly triggered at new SKU launch, when a product sample or final product image is available but a shoot has not yet been scheduled or budgeted. For many brands, that timing makes it the default production path for most of the catalog rather than a supplementary tool.

Once approved, AI on-model images feed directly into the same channels as traditional photography:

- Shopify PDP uploads

- Lookbook assembly

- Social media scheduling

- Paid ad creative

Teams consuming the assets do not need to change their process: an approved AI image is handled like any other product photo. eMarketer projects AI-generated imagery will represent 38% of all e-commerce photos by 2026, a figure that reflects how quickly this approach has moved from experiment to standard practice.

Where Traditional Photography Still Fits

Hero campaign imagery, flagship PDP shots, and product categories where tactile accuracy drives purchase decisions — premium knitwear, structured outerwear — may still benefit from a foundation shoot. AI handles the variant volume built around it.

Brands are already operating at both ends of that spectrum. Everlane's hybrid approach keeps traditional photography for hero imagery, while Mango has reportedly become the first major fashion brand to replace traditional product photos with AI-generated on-model visuals across its PDPs, a signal that the technology has crossed from pilot into production.

Conclusion

AI on-model production works because it decouples visual quality from physical production constraints. The output is only as good as the input image, the rendering engine, and the quality review layer working in sequence.

D2C brands that understand how each stage works — from input requirements to quality gating to output formats — are better positioned to implement the technology effectively, integrate it with existing production systems, and scale it without compromising the brand consistency that drives conversion.

The operational advantages compound quickly:

- Lower production costs by eliminating model booking and studio fees

- Faster time-to-market with no shoot scheduling bottlenecks

- Inclusive representation across body types and demographics at scale

- Creative flexibility for systematic A/B testing across product lines

As adoption spreads, the brands pulling ahead won't be the ones debating whether to use AI on-model photography — they'll be the ones who've already built it into their production pipeline.

Frequently Asked Questions

Can I use AI models for my clothing brand?

Yes. AI on-model tools are designed for fashion and apparel brands of any size, including small D2C labels launching frequent collections. Brands upload product images and the AI generates realistic model photos without a physical shoot.

Which AI model is best for photos?

For D2C brands, fashion-specific AI on-model platforms outperform general-purpose tools like Midjourney or DALL·E. Purpose-built platforms preserve fabric detail, fit, and proportions — delivering production-ready, commercially accurate imagery that general generators can't reliably produce.

How accurate are AI on-model photos compared to real photography?

A Stylitics survey found that 71% of shoppers could not distinguish between real photos and AI-generated images. Accuracy depends heavily on input image quality and whether the platform includes a human review stage for garment fidelity.

Do I need a physical photoshoot to start using AI on-model photos?

No. Most platforms only require a flat lay, ghost mannequin, or standard packshot as input, though higher-resolution, well-lit base images produce significantly better AI-generated output.

How do AI on-model tools handle diverse body types and skin tones?

Production-grade platforms offer curated model libraries spanning diverse body types, skin tones, ages, and ethnicities. Many also support custom model creation, so brands can define profiles that match their target customer.

How much does AI on-model photography cost compared to traditional shoots?

D2C brands typically report 80–85% cost reductions versus traditional photography, with per-image costs dropping from $80–$150 to roughly $2–$20. Exact pricing varies by platform and volume, but the savings are consistent across production-scale use.