Introduction

Luxury brands face a unique tension: AI model photography promises dramatic cost savings and production at a scale traditional shoots can't match, but luxury has always demanded uncompromising visual perfection and brand consistency. The stakes for compliance failures are far higher in this segment than in mass-market fashion. A single off-brand image can undermine decades of carefully cultivated positioning, while a legal misstep can expose brands to penalties reaching €15 million or 3% of global annual turnover under the EU AI Act.

"Compliance" in luxury AI photography carries dual meaning: external legal obligations — disclosure laws, copyright exposure, right-of-publicity statutes — and internal brand standards that define a house's identity.

When Gucci's AI "Primavera" visuals drew criticism for removing human artistry, and Valentino's AI-generated "DeVain" handbag imagery was described as "flat and lacking emotion," the damage was reputational, not regulatory. Getting both dimensions right is what separates brands that use AI effectively from those that pay for it twice — once in production, again in crisis management.

TLDR

- AI model photography compliance spans two layers: external legal requirements and internal brand quality standards

- Legal risks cover copyright exposure, right-of-publicity claims, and disclosure mandates under the EU AI Act and FTC guidance

- Luxury brands require precise control over model aesthetics, garment rendering, and tonal consistency across every image

- Combining AI generation with human review connects production speed to luxury-grade output quality

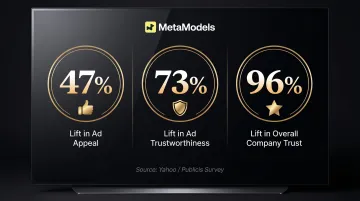

- Proactive disclosure builds consumer trust: research shows 96% lift in company trust when AI use is disclosed transparently

What "Compliance" Actually Means for Luxury AI Photography

For mass-market brands, AI photography compliance is primarily a legal question. For luxury brands, it is both a legal and a brand-equity question. Because one off-brand image can undo decades of positioning, brand compliance carries the same weight as regulatory adherence.

Two Compliance Layers

External compliance encompasses legal, regulatory, and platform requirements:

- EU AI Act Article 50 disclosure obligations

- FTC consumer protection rules

- Right-of-publicity statutes

- Platform-specific content policies (Amazon, Shopify)

- Copyright exposure from AI training data

Internal compliance addresses adherence to brand visual identity:

- Model aesthetics aligned with house standards

- Garment rendering accuracy (fabric draping, colour precision)

- Campaign tone consistency

- Background and lighting alignment with brand mood

Luxury-Specific Visual Benchmarks

Luxury brands enforce visual standards that are harder to automate than in any other segment. The margin for error is narrow:

- Fabric draping must reflect how the garment actually falls — buyers at this price point evaluate cut and movement closely

- Colour accuracy across cashmere, silk, and leather must be precise; subtle shifts read as quality failures

- Model poses and posture must match the house aesthetic — a heritage brand's restrained stance is as intentional as its silhouettes

- Background and lighting must carry the right mood, whether that's minimalist cool or opulent warmth

Consumer Backlash at the Luxury Price Point

These visual gaps don't go unnoticed. A Yahoo/Publicis survey found that 72% of consumers believe AI makes it difficult to determine what content is truly authentic, and that concern hits harder at luxury price points, where trust justifies premium pricing.

Three recent examples illustrate the pattern:

- Gucci's AI visuals prompted consumer commentary that "if customers are paying premium rates, they expect premium effort—not automation"

- Valentino's AI-generated imagery was criticized as lacking "the emotion usually tied to the brand"

- Prada's S/S 2026 Wolfson campaign went viral as "polarising" and "unsettling," forcing the brand to clarify AI's limited role

Why Volume Cannot Compensate for Visual Failures

Luxury brands operate with lower SKU counts and higher per-item brand investment than mass-market retailers. A fast-fashion catalog can absorb one weak image across thousands of SKUs. A luxury house cannot.

Every image carries outsized weight. Heritage brands spend years building associations around craftsmanship and exclusivity — a single generic-looking AI output erodes that positioning in seconds.

Legal and Regulatory Requirements Every Luxury Brand Must Understand

Copyright and Training Data Exposure

AI image platforms trained on unlicensed images create indirect legal liability for brands using their outputs. The Getty Images v. Stability AI case shows how unsettled this risk remains: the UK High Court dismissed Getty's secondary copyright infringement claims in November 2025, but Getty was granted permission to appeal in December 2025, with proceedings expected in late 2026.

A parallel US case filed in August 2025 alleges infringement of over 12 million photographs. If courts ultimately find that AI models trained on unlicensed data constitute "infringing copies," brands using such platforms could face secondary liability exposure.

Luxury brand action: Vet AI vendors for transparent, licensed training data practices. Require documentation proving training datasets were sourced legally. This due diligence creates both legal protection and a documented good-faith compliance effort.

Right of Publicity

AI-generated faces can inadvertently resemble real, identifiable people—including celebrities, models, or influencers with strong brand associations. Luxury brands running high-visibility campaigns face elevated risk because their imagery reaches broader audiences and carries higher commercial value.

Four U.S. states have enacted AI-specific right-of-publicity protections:

| State | Law | Effective Date | Key Requirements |
|---|---|---|---|
| California | AB 1836 (deceased); AB 2602 (living) | January 1, 2025 | Written consent with "reasonably specific description of intended uses"; damages of $10,000+ per violation |
| New York | S. 8420 (synthetic performers) | June 9, 2026 | "Conspicuous disclosure" of synthetic performers in advertising; penalties $1,000-$5,000 |
| Tennessee | ELVIS Act | July 1, 2024 (signed March 2024) | Addresses AI-generated deepfakes of voice and likeness |
| Illinois | Right of Publicity Act | Revised 2025 | Covers digital replicas; statutory damages of $1,000 |

Internal review process: Before publication, conduct a documented review assessing whether any generated face resembles an identifiable real person. Keeping a written record of that review is what transforms intent into defensible evidence if a claim arises.

EU AI Act Article 50

Luxury brands operating or advertising in the EU face strict disclosure obligations starting August 2, 2026. Article 50 requires:

- Providers must mark AI-generated content in machine-readable format

- Deployers (brands using AI tools) must disclose that content has been artificially generated or manipulated

- Disclosure must be "clear and distinguishable" at first interaction or exposure

- Penalties for non-compliance: up to €15 million or 3% of global annual turnover, whichever is higher
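
The penalty ceiling in the last bullet can be worked through with a short calculation. This is a minimal sketch, not legal advice: it assumes the "whichever is higher" rule applies to the company in question and ignores any special treatment for smaller businesses. The function name and the turnover figures are hypothetical.

```python
FIXED_CAP_EUR = 15_000_000   # fixed ceiling cited in the article
TURNOVER_PERCENT = 3         # percentage of global annual turnover


def penalty_ceiling_eur(global_turnover_eur: int) -> float:
    """Return the assumed maximum fine: the greater of the fixed cap
    and 3% of global annual turnover."""
    turnover_based = global_turnover_eur * TURNOVER_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_based)


# Hypothetical turnover figures, chosen only to show both branches.
for turnover in (200_000_000, 5_000_000_000):
    ceiling = penalty_ceiling_eur(turnover)
    print(f"turnover EUR {turnover:,} -> ceiling EUR {ceiling:,.0f}")
```

Under these assumptions the turnover-based figure overtakes the fixed cap once global turnover passes €500 million, which is why larger houses carry the greater exposure.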

Luxury brands with significant EU revenues face the highest financial exposure. The artistic exemption provides limited relief—it applies only when AI use is "evidently artistic" and disclosure doesn't hamper enjoyment of the work. Product imagery and campaign visuals typically don't qualify.

FTC and U.S. State Laws

For US brands, the exposure is straightforward: Section 5 of the FTC Act prohibits unfair or deceptive practices, and AI-generated imagery that misrepresents how a product looks qualifies. The FTC's Operation AI Comply (September 2024) made this explicit — "there is no AI exemption from the laws on the books."

No fashion-specific FTC guidance exists yet. But enforcement actions in adjacent industries signal that brands using AI visuals to obscure product defects or exaggerate appearance are the most likely targets.

Platform-Specific Requirements

Luxury brands selling through third-party platforms face an additional compliance layer beyond legal obligations. Each platform's content policies function as binding contractual requirements — violations can affect listings before any regulator gets involved.

- Amazon: Prohibits misleading product representations. Synthetic imagery that misrepresents appearance violates content policies — consequences range from listing removal to suspension of selling privileges.

- Shopify: More permissive currently, but scrutiny is increasing. Treat current terms as a floor, not a ceiling, and monitor for updates.

Build platform policy reviews into your quarterly compliance calendar alongside legal updates — they change on different schedules and without advance notice.

Protecting Brand Identity: Visual Standards and AI Model Curation

The most critical brand compliance challenge is preventing "AI drift"—where generated images look technically correct but feel aesthetically generic, off-brand, or inconsistent with the house's established visual language. This requires deliberate model selection and prompt governance, not just using default AI outputs.

Luxury-Grade AI Model Curation

Effective model curation for luxury brands involves:

- Physical proportions — Selecting models whose body type, height, and proportions align with the brand's established campaign aesthetic

- Skin tone rendering — Ensuring skin tone accuracy matches the brand's diversity standards and photographic style

- Posture and bearing — Avoiding models whose AI-generated stance conflicts with brand positioning (e.g., a heritage house's traditional aesthetic vs. streetwear-influenced posture)

- Facial features — Maintaining consistency with the brand's historical model selection patterns

Platforms like MetaModels.ai address this through custom model creation matched to brand identity, alongside curated libraries of diverse AI models. This allows luxury brands to maintain visual consistency across entire catalogs rather than producing one-off images that vary aesthetically.

Fabric Draping Accuracy as Brand Compliance

Luxury buyers evaluate garments on how they fall and move. AI imagery that misrepresents drape or silhouette creates a mismatch between expectation and physical product — and consistent model curation alone won't protect the brand if fabric rendering is inaccurate.

Industry data indicates approximately 16% of fashion e-commerce returns occur because the product did not match digital imagery. Fashion return rates reach up to 40% of online purchases overall. AI-generated imagery that inaccurately renders color, texture, or drape compounds an already costly problem.

For luxury brands, the cost isn't just financial—it's reputational. A customer who receives a garment that doesn't match the website imagery questions the brand's integrity, not just the photo accuracy.
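
To put the return figures above in proportion, the two percentages can be combined. This is a rough sketch that assumes the upper-bound 40% return rate and the 16% imagery-mismatch share apply to the same set of orders; the order volume is hypothetical.

```python
def imagery_mismatch_returns(orders: int,
                             return_rate: float = 0.40,
                             mismatch_share: float = 0.16) -> float:
    """Estimate how many orders come back because the product
    did not match its digital imagery."""
    returned = orders * return_rate      # orders that are returned at all
    return returned * mismatch_share     # portion attributed to imagery mismatch


# Hypothetical season of 10,000 online orders.
estimate = imagery_mismatch_returns(10_000)
print(f"about {round(estimate)} of 10,000 orders returned over imagery mismatch")
```

At those rates, roughly 6.4% of all orders would be returned because of an imagery mismatch, before counting any reputational cost.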

Preventing AI Drift Through Controlled Workflows

Maintaining brand identity requires three disciplines working together:

- Custom model creation — Building AI models matched to your brand's established aesthetic: lighting style, model type, and campaign tone — not selected from generic libraries

- Prompt governance — Establishing documented style parameters and visual guides that enforce consistency across catalog production

- Vendor selection criteria — Requiring AI platforms to demonstrate licensed training data, human quality assurance, and brand-consistent output capabilities
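
One way to make prompt governance concrete is to store style parameters in a guide whose locked values cannot be changed per image. The sketch below is illustrative only: `StyleGuide`, `build_request`, and every parameter name and value are hypothetical, and a real AI platform will have its own request format.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class StyleGuide:
    """Hypothetical brand style guide with locked and adjustable parameters."""
    name: str
    locked: dict = field(default_factory=dict)    # may never be overridden
    defaults: dict = field(default_factory=dict)  # may be overridden per image


def build_request(guide: StyleGuide, sku: str,
                  overrides: Optional[dict] = None) -> dict:
    """Merge per-image overrides with the guide, rejecting changes to locked keys."""
    overrides = overrides or {}
    clashes = sorted(set(overrides) & set(guide.locked))
    if clashes:
        raise ValueError(f"locked parameters cannot be overridden: {clashes}")
    # Locked values are applied last so they always win.
    return {"sku": sku, "style_guide": guide.name,
            **guide.defaults, **overrides, **guide.locked}


heritage = StyleGuide(
    name="heritage-aw",
    locked={"lighting": "soft window light", "pose": "restrained, upright"},
    defaults={"background": "warm grey studio"},
)
request = build_request(heritage, "SKU-001", {"background": "stone courtyard"})
print(request)
```

Rejecting an override outright, rather than silently ignoring it, leaves a visible error whenever someone tries to depart from the house aesthetic.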

The Quality Assurance Layer: Why Human Review Is Non-Negotiable for Luxury

Automated AI generation alone cannot guarantee luxury-grade output. Garment rendering errors, such as distorted seams, shifted colours, or misrendered hardware, occur regularly and must be caught before publication.

What Human QA Review Should Check

A comprehensive quality assurance process for luxury AI model photography should verify:

- Fabric rendering accuracy — Does the material look like cashmere, silk, or leather as appropriate?

- Draping fidelity — Does the garment fall naturally on the body?

- Seam integrity — Are seams straight, proportional, and correctly positioned?

- Colour precision — Does the garment colour match the physical product exactly?

- Embellishment detail — Are buttons, zippers, embroidery, and hardware rendered accurately?

- Brand aesthetic alignment — Does the overall image feel consistent with the house's visual language?
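
Of the checks above, colour precision is the easiest to support with a measurement. The sketch below uses the CIE76 colour difference, which is the Euclidean distance between two colours in CIELAB space. It assumes both colours are already expressed as L*, a*, b* values (for example, a measured fabric swatch and a sampled region of the rendered image); the tolerance of 2.0 and the sample values are placeholders, not recommended standards.

```python
import math

Lab = tuple[float, float, float]  # (L*, a*, b*)


def delta_e_cie76(reference: Lab, rendered: Lab) -> float:
    """CIE76 colour difference: Euclidean distance in CIELAB space."""
    return math.dist(reference, rendered)


def colour_within_tolerance(reference: Lab, rendered: Lab,
                            tolerance: float = 2.0) -> bool:
    """Flag whether the rendered colour is close enough to the physical reference.
    The default tolerance is an assumed placeholder."""
    return delta_e_cie76(reference, rendered) <= tolerance


# Hypothetical values for a camel cashmere swatch and its AI rendering.
swatch = (62.0, 14.0, 30.0)
render = (62.0, 17.0, 34.0)
print(delta_e_cie76(swatch, render))            # 5.0
print(colour_within_tolerance(swatch, render))  # False
```

A numeric check like this supports the human reviewer rather than replacing them: it can route borderline images for closer inspection, while texture and drape still need a trained eye.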

Human Review as Right-of-Publicity Compliance

Human review serves a dual purpose: quality assurance and legal protection. A reviewer checking whether any generated face resembles an identifiable real person creates the documented good-faith effort that reduces legal risk.

Document this review step in an audit trail:

- Date and time of review

- Reviewer name and qualifications

- Specific items checked

- Any corrections made

- Final approval signature
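
The fields listed above map naturally onto a structured record that can be appended to a log. This is a minimal sketch under stated assumptions: the `ReviewRecord` class, its field names, and the sample values are hypothetical, and a production audit trail would also need access control and tamper-evident storage.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class ReviewRecord:
    """One human review of one generated image (hypothetical schema)."""
    image_id: str
    reviewer_name: str
    reviewer_qualifications: str
    items_checked: List[str]
    corrections: List[str] = field(default_factory=list)
    approved_by: Optional[str] = None  # stays None until final sign-off
    reviewed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def is_approved(self) -> bool:
        return self.approved_by is not None


def to_audit_line(record: ReviewRecord) -> str:
    """Serialise a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = ReviewRecord(
    image_id="look-014-front",
    reviewer_name="A. Reviewer",
    reviewer_qualifications="Senior retoucher",
    items_checked=["face resemblance", "colour precision", "seam integrity"],
    corrections=["adjusted sleeve seam"],
)
print(record.is_approved())   # False until someone signs off
record.approved_by = "Creative Director"
print(to_audit_line(record))
```

Keeping the approval field empty by default means an image cannot appear approved unless someone explicitly signs it off.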

The MetaModels.ai Standard

That audit trail also shapes what to demand from any AI photography vendor. MetaModels.ai builds this review step into its workflow, passing every generated output through a garment accuracy check before delivery. Whether a brand handles generation internally or outsources it, requiring the same documented QA process is the practical way to hold AI speed accountable to luxury standards.

Disclosure as Brand Strategy, Not Just Legal Obligation

Consumer research reveals a counterintuitive finding: consumers don't reject AI imagery—they reject deception. A Yahoo/Publicis survey found that when consumers noticed AI disclosure, it produced a 47% lift in ad appeal, 73% lift in ad trustworthiness, and 96% lift in overall company trust.

For luxury brands, where trust is the product, that lift is consequential. The same research found 72% of consumers believe AI makes authenticity harder to determine. Disclosure doesn't erode trust; it helps restore it.

Practical, Non-Intrusive Disclosure Formats

Luxury brands can disclose AI use without disrupting their aesthetic, provided the disclosure remains clear to the consumer:

- C2PA metadata embeds cryptographically signed AI provenance data at the file level, supporting machine-readable marking without altering the image itself

- Product description labels ("AI-assisted imagery") provide visible transparency while keeping the visuals themselves clean

- Alt-text disclosure adds AI transparency to a field already used for accessibility

Positioning AI Disclosure as Innovation Narrative

Luxury brands should frame AI disclosure as part of their innovation story, not a liability admission. Position AI tools as precision instruments guided by the creative team — the same way leading houses have long adopted technology in service of craft, from digital pattern-making to 3D knitting.

"Our imagery combines AI-powered efficiency with human creative direction, ensuring every visual meets our standards while presenting our collections with greater inclusivity and scale."

Frequently Asked Questions

What legal obligations apply to luxury brands using AI model photography?

Luxury brands face several overlapping obligations: EU AI Act Article 50 disclosure requirements (effective August 2026), FTC rules prohibiting deceptive practices, and right-of-publicity statutes in California, New York, Tennessee, and Illinois. Brands must also vet AI vendors for licensed training data to avoid downstream copyright liability.

Do luxury brands need to disclose when model images are AI-generated?

Yes. In the EU, Article 50 of the AI Act requires deployers to disclose content that has been artificially generated or manipulated, with obligations applying from August 2, 2026. In the U.S., FTC guidance increasingly points in the same direction. Beyond legal compliance, proactive disclosure is a brand trust strategy: research shows a 96% lift in company trust when consumers notice that AI use has been disclosed.

How can AI model photography maintain the visual quality standards luxury brands require?

Achieving luxury-grade output requires discipline at every stage:

- Precise model curation aligned with brand aesthetic

- Accurate fabric draping rendering

- Consistent governance through saved style parameters

- Human review of every output before publication to verify garment accuracy

Can AI-generated model faces create legal liability for luxury fashion brands?

Yes. AI-generated faces that resemble identifiable individuals create right-of-publicity exposure, particularly in California, New York, Tennessee, and Illinois. Brands should document internal review processes, assess whether generated faces resemble real people before publication, and maintain audit trails showing good-faith compliance efforts.

What platform restrictions apply to AI model imagery for luxury e-commerce?

Amazon's misrepresentation policies prohibit synthetic imagery that misleads about product appearance — enforcement can include listing removal and account suspension. Shopify's policies are still developing, but AI-generated content faces growing scrutiny. Treat platform terms as a compliance layer on top of legal requirements, not a substitute for them.

How do luxury brands protect their brand identity and DNA when using AI model photography?

Protecting brand identity requires a multi-layered approach:

- Custom AI model creation aligned with brand visual language

- Prompt governance through saved templates and locked aesthetic parameters

- Vendor selection that prioritizes licensed training data and human quality assurance

- Rigorous review processes that prevent "AI drift" toward generic imagery inconsistent with house aesthetic