Introduction

Fashion catalog production runs into the same walls every time: model casting delays that stretch for weeks, limited pose variations from expensive day-long shoots, and costly reshoots when a pose fails to communicate garment fit or design details. A single production session might yield three to four usable poses per garment, forcing brands to choose between thorough product visualization and staying on budget.

AI model poses change that equation. Brands now generate catalog-ready imagery across multiple pose categories in hours, not weeks. According to McKinsey's returns management research, 70% of apparel returns stem from poor fit or style mismatches — problems directly tied to incomplete visual information at purchase. Pose selection determines how effectively a garment communicates its silhouette, movement, and value to the buyer.

This article covers why poses matter for catalog performance, which pose types belong in every catalog, how AI generates and controls them, and the strategy and pitfalls that separate high-performing catalogs from wasted spend.

TLDR:

- Poor pose selection drives incomplete fit visualization, higher return rates, and lost conversions

- Every catalog needs static (front/three-quarter/back), dynamic (walking/movement), editorial, and lifestyle poses

- AI generates garment-accurate pose variants using skeletal estimation and diffusion-based reconstruction

- Pose strategy requires consistent libraries, human QC review, and channel-specific formatting

- Platforms like MetaModels.ai generate human-reviewed pose sets across diverse model types — no casting budget required

Why Pose Selection Defines Fashion Catalog Performance

Pose is the primary visual signal for fit communication. Each angle communicates something different:

- Front-facing views show silhouette and print placement

- Three-quarter angles reveal how fabric sits across the torso and hips

- Back views expose seam construction, collar height, and hem detail

Without these angles, shoppers rely on incomplete information — and that gap drives returns.

The conversion and return rate connection:

McKinsey data shows that 70% of apparel returns are attributed to poor fit or style, with apparel e-commerce return rates reaching 25% compared to an overall retail return rate of 10.6%. Returns represent a massive cost burden: the National Retail Federation projects total U.S. retail returns will reach $849.9 billion in 2025, with 19.3% of online sales returned.

High-resolution images and multi-angle on-model photography reduce this risk. Poses that flatten or obscure how a garment sits on a body force purchase decisions based on guesswork rather than visual evidence. Modern shoppers expect to visualize movement, versatility, and wearability from static images — expectations that single-pose catalogs cannot meet.

Why AI solves this at scale:

AI generates multiple pose variations from a single garment input at low incremental cost, removing the per-shoot volume limit that traditionally forced brands to choose between budget and visual completeness. Pose energy also shapes brand perception: a confident, dynamic pose communicates a different brand story than a neutral stand. Consistency across a catalog collection reinforces that story to returning visitors and builds the recognition that keeps shoppers browsing longer.

The Types of AI Model Poses Every Fashion Catalog Needs

Static Stand Poses for PDP Hero Images

Front-facing, three-quarter, and back-facing static poses form the foundational set for product detail page (PDP) hero imagery.

- Front pose: Shows silhouette, hem length, and print placement clearly

- Three-quarter pose: Reveals how the garment fits across the torso, hip, and shoulder line

- Back pose: Communicates seam construction, collar height, back detailing, and hem behavior

These three angles provide the minimum visual information shoppers need to assess fit from a static image.

Dynamic and Movement Poses

Static poses can't show how a garment actually behaves on a moving body. Walking, mid-stride, and in-motion poses fill that gap — particularly for:

- Dresses and blouses where fabric float and drape are key selling points

- Flowy skirts that need stride movement to communicate silhouette

- Activewear and compression garments where fit-in-motion is a purchase driver

- Pleated styles where fall and structure only read during movement

Editorial and Campaign Poses

High-fashion and editorial poses — contrapposto stance, over-shoulder glance, leaning postures, arms-raised compositions — establish brand tone and emotional context beyond product functionality. These poses are used for lookbooks, seasonal campaign launches, and paid ad creative where brand storytelling takes priority over pure product detail.

Seated and Lifestyle Poses

Seated, relaxed, and lifestyle-context poses work best for casualwear, knitwear, denim, and loungewear categories where everyday wearability and versatility need to come through. These poses also perform well in social commerce formats where context and relatability drive engagement.

Production-ready pose libraries:

Platforms like MetaModels.ai offer curated libraries of AI models across all major pose categories — static, dynamic, lifestyle, and editorial — so brands can cover a complete pose set per SKU without booking models or coordinating additional shoots.

How AI Technology Generates and Controls Model Poses

Skeletal Estimation and Joint Mapping

AI pose generation begins with skeletal estimation: mapping the human body into a network of keypoints representing joints, limbs, and torso. Research published in ACM Computing Surveys identifies two standard keypoint formats — COCO (17 keypoints) and MPII (16 keypoints) — that map body landmarks like elbows, knees, wrists, and shoulders.

This skeletal scaffold becomes the foundation for re-rendering the model in a target pose while preserving proportions. OpenPose uses Part Affinity Fields (PAFs) — 2D vector fields encoding limb location and orientation — to associate joints with individuals. HRNet (High-Resolution Net) maintains high-resolution representations throughout processing via parallel multi-resolution subnetworks.
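
To make the keypoint idea concrete, here is a minimal sketch that lists the 17 COCO keypoints in their standard order and measures the angle at one joint. The `joint_angle` helper and the pixel coordinates are illustrative assumptions, not the output or API of OpenPose, HRNet, or any specific platform.

```python
import math

# The 17 COCO keypoints, in the dataset's standard order.
COCO_KEYPOINTS = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]


def joint_angle(a, b, c):
    """Angle in degrees at point b, between segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("degenerate joint: two keypoints coincide")
    # Clamp to [-1, 1] to guard against floating-point overshoot.
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


# Hypothetical 2D pixel coordinates for one arm (assumed values).
pose = {
    "left_shoulder": (100, 100),
    "left_elbow": (100, 200),
    "left_wrist": (200, 200),
}
elbow_angle = joint_angle(
    pose["left_shoulder"], pose["left_elbow"], pose["left_wrist"]
)
```

A target pose can be described as a set of such keypoint positions, which is what lets a system compare or transfer joint configurations between images.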

Diffusion-Based Garment Reconstruction

AI doesn't simply warp fabric when a pose changes. Diffusion models reconstruct how the garment would drape, fold, and cast shadows in the new position. Research from Tencent AI Lab demonstrates a three-stage pipeline:

- Prior stage: Transformer-based diffusion model predicts global target-image embedding from source appearance and target pose coordinates

- Inpainting stage: Latent Diffusion Model generates a coarse target image using pose skeleton images processed through a ControlNet-style encoder

- Refining stage: Image-to-image diffusion restores fine textures via cross-attention with DINOv2-extracted source features, enabling the model to "paint" source garment textures onto the target pose

The result is accurate fabric draping at the new pose — not a stretched or smeared approximation of the original image.

Three Methods for Controlling AI Poses

Reference image upload: Upload a reference photo and the system transfers its joint configuration to the AI model. High precision, but you need to source or stage the reference first.

Preset pose library: Choose from pre-configured poses built for catalog production. The fastest option — consistent, predictable results with no iteration required.

Prompt-based instructions: Describe the pose in plain text. Flexible for custom or unusual positions, though outputs may need a few rounds of refinement.

Persistent Model Identity Across Poses

Catalog coherence requires the same AI model — face, body type, lighting direction — to appear across all pose variants for a collection. When pose variants feature mismatched faces or inconsistent lighting, the catalog grid reads as disjointed rather than intentional. Achieving this means the generation system must lock model identity across outputs — not just copy-paste a face onto a new body position.

That's where human review becomes critical. MetaModels.ai combines real-time fabric draping with manual quality checks on every output — catching joint distortion, shoulder drift, and hem misalignment before assets reach the catalog.

Matching AI Model Poses to Catalog Channels and Use Cases

PDP Poses vs. Social Media Poses

PDP and social media demand fundamentally different pose strategies:

- PDP: Clean, repeatable, garment-forward framing — consistent across every SKU

- Social media: Dynamic, editorial energy suited to vertical, square, and carousel formats

- Ads: High-contrast hero poses that read clearly at thumbnail scale

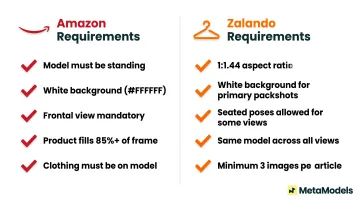

Marketplace-Specific Constraints

Amazon and Zalando impose strict rules on pose usage:

Amazon requirements (per Seller Central guidelines):

- Clothing must be photographed on a model

- Models must be standing (not sitting, kneeling, leaning, or lying down)

- Pure white background required (hex #FFFFFF)

- Frontal view mandatory

- Product must fill at least 85% of the image frame

Zalando requirements (per Partner University guidelines):

- Aspect ratio: 1:1.44 (width:height)

- White background for primary packshots

- Seated poses allowed for front crop, back view, and further views

- Same model and styling required for all views of an article

- Minimum 3 images per article

Map these constraints before generation begins — non-compliant assets get rejected at upload, wasting production time. Lookbook and campaign work, by contrast, operates under far fewer restrictions.
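
One way to map these constraints up front is a small pre-upload check. The sketch below encodes only the rules listed above. The `AssetMeta` fields, function names, and tolerance are hypothetical, and the marketplaces' official specifications are more detailed and change over time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetMeta:
    """Hypothetical metadata recorded for each generated image."""
    width_px: int
    height_px: int
    pose: str            # e.g. "standing", "seated"
    view: str            # e.g. "front", "back"
    background_hex: str  # e.g. "#FFFFFF"
    product_fill: float  # fraction of the frame the product occupies, 0..1


def check_amazon_main(asset):
    """Return a list of violations of the Amazon rules listed above."""
    problems = []
    if asset.pose != "standing":
        problems.append("model must be standing")
    if asset.background_hex.upper() != "#FFFFFF":
        problems.append("background must be pure white")
    if asset.view != "front":
        problems.append("frontal view required")
    if asset.product_fill < 0.85:
        problems.append("product must fill at least 85% of frame")
    return problems


def check_zalando_set(assets, tolerance=0.01):
    """Return violations of the Zalando ratio and image-count rules."""
    problems = []
    if len(assets) < 3:
        problems.append("at least 3 images per article")
    for i, asset in enumerate(assets):
        ratio = asset.height_px / asset.width_px
        if abs(ratio - 1.44) > tolerance:
            problems.append(f"image {i}: aspect ratio is not 1:1.44")
    return problems


hero = AssetMeta(1000, 1440, "standing", "front", "#ffffff", 0.90)
seated = AssetMeta(1000, 1440, "seated", "front", "#FFFFFF", 0.90)
```

Running a check like this before generation is finalized surfaces non-compliant poses (such as `seated` for an Amazon main image) while they are still cheap to regenerate.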

Lookbook and Campaign Use Cases

Lookbooks and campaigns allow the widest creative pose latitude — and the most room to differentiate visually. Common high-value poses for this format include:

- Full-length movement shots (walking, turning, mid-stride)

- Editorial close-ups highlighting fabric texture or detail

- Multi-model groupings for lifestyle and brand storytelling

- Environment-contextualized poses (outdoor, interior, conceptual sets)

Producing this range through traditional studio shoots requires significant time and budget. Generating it from packshots removes most of that barrier.

Building a Consistent Pose Strategy for Your Brand

Define a Brand Pose Library

Start with two to four signature poses that reflect your brand's visual identity:

- Confident and approachable for mid-market brands

- Severe and editorial for luxury positioning

- Relaxed and lifestyle-oriented for casualwear

Lock these poses before a season launch and apply them consistently across all SKUs in that collection.

Version Control for Pose Libraries

Preventing pose drift between catalog batches requires a few operational habits:

- Standardized file naming conventions for every generated asset

- Archived seeds and prompts tied to each approved pose

- Season-locked references that the team pulls from — not recreates

Without these guardrails, teams regenerate assets from memory, and small inconsistencies compound into a catalog that looks mismatched at scale.
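
As an illustration of these habits, the sketch below ties a standardized file name to the archived seed and prompt for one approved pose. The field names, the naming pattern, and the sample values are all assumptions. Adapt them to whatever your generation tool actually exposes.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PoseRecord:
    """Hypothetical archive entry for one approved pose variant."""
    season: str
    sku: str
    pose_id: str
    model_id: str
    seed: int
    prompt: str
    version: int = 1

    def filename(self, ext="png"):
        # Assumed pattern: season_sku_pose_model_vNN.ext, all lowercase.
        stem = (
            f"{self.season}_{self.sku}_{self.pose_id}_"
            f"{self.model_id}_v{self.version:02d}"
        )
        return f"{stem}.{ext}".lower()


record = PoseRecord(
    season="SS25",
    sku="DR-1042",
    pose_id="front-static",
    model_id="m07",
    seed=421337,
    prompt="standing front view, arms relaxed",  # illustrative prompt
)

# One JSON line per approved asset gives the team a season-locked
# reference to pull from instead of recreating poses from memory.
manifest_line = json.dumps(
    {"file": record.filename(), **asdict(record)}, sort_keys=True
)
```

Because the record is frozen and the file name is derived from it, an approved pose cannot silently drift from the seed and prompt that produced it.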

Inclusive Representation at Scale

That same documented pose logic applies directly to diverse model sets (different body types, ethnicities, and ages) without added complexity. AI removes the volume constraint that traditionally made inclusive representation expensive. Applying a pose set to ten model variants adds only a small incremental cost over applying it to one, with no additional casting.

Common Pitfalls When Using AI Model Poses in Catalogs

Anatomical Distortion Risks

AI pose generation can produce unnatural joint angles, warped hand positions, and misaligned shoulders — particularly at extreme or unusual angles. A human review step before any pose variant goes live catches these errors early — specifically checking joint alignment, shoulder line, and hand positioning where distortion is most common.
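
A simple automated pre-screen can help reviewers prioritize. The sketch below flags left/right limb-length mismatches and a strongly tilted shoulder line from 2D keypoints. The thresholds are assumed values, and the symmetry check only makes sense for near-frontal static poses, because foreshortening legitimately changes apparent limb length in other views. Treat the flags as a queue for human review, not as an automatic reject.

```python
import math


def review_flags(pose, max_limb_ratio=1.25, max_shoulder_tilt_deg=20.0):
    """Return names of checks that a near-frontal 2D pose fails.

    `pose` maps keypoint names to (x, y) pixel coordinates.
    Both thresholds are illustrative assumptions.
    """
    flags = []
    segments = {
        "upper_arm": ("shoulder", "elbow"),
        "forearm": ("elbow", "wrist"),
    }
    for part, (top, bottom) in segments.items():
        left = math.dist(pose[f"left_{top}"], pose[f"left_{bottom}"])
        right = math.dist(pose[f"right_{top}"], pose[f"right_{bottom}"])
        longer, shorter = max(left, right), min(left, right)
        if shorter == 0 or longer / shorter > max_limb_ratio:
            flags.append(f"{part}_length_mismatch")

    (lx, ly) = pose["left_shoulder"]
    (rx, ry) = pose["right_shoulder"]
    tilt = abs(math.degrees(math.atan2(ly - ry, lx - rx)))
    tilt = min(tilt, 180.0 - tilt)  # ignore left/right ordering
    if tilt > max_shoulder_tilt_deg:
        flags.append("shoulder_tilt")
    return flags


# Hypothetical keypoints for a symmetric front-facing stand.
good_pose = {
    "left_shoulder": (140, 100), "right_shoulder": (60, 100),
    "left_elbow": (150, 180), "right_elbow": (50, 180),
    "left_wrist": (155, 260), "right_wrist": (45, 260),
}
# Same pose with one wrist displaced, as a stretched forearm would be.
bad_pose = dict(good_pose, left_wrist=(155, 400))
```

Hand distortion is harder to detect from body keypoints alone, so the manual check on hand positioning still applies.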

Garment Warping

Structured garments — blazers, tailored trousers, corseted dresses — are most vulnerable to seam misalignment and shoulder distortion when re-posed. Two fixes address this reliably:

- Improve source file quality — higher-resolution packshots give the AI more accurate drape data to work from

- Apply a targeted retouch pass on the distorted region, which is faster and more precise than regenerating the full image

Pose Inconsistency Across Catalog Batches

When similar products appear with mismatched pose energy, framing heights, or crop ratios in a category grid, the mismatch signals low production quality to shoppers. Baymard Institute research found that 64% of e-commerce sites fail to adequately display or present product attributes in listings, leading directly to site abandonment. Consistency in list item attributes allows at-a-glance comparison; inconsistency makes shoppers assume the site doesn't carry relevant products.

Defining a pose brief — fixed crop height, consistent framing axis, and matched energy level — before batch generation eliminates most of these issues upstream rather than in post-production.
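
A pose brief can also be written down as data and checked per batch. The following is a minimal sketch in which the `PoseBrief` fields, the tolerance, and the asset metadata keys are all hypothetical. It reports which assets deviate from the brief on crop ratio, framing height, or energy level.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PoseBrief:
    """Hypothetical brief agreed before batch generation."""
    crop_ratio: float       # width / height of the final crop
    head_top_frac: float    # top of head as a fraction of frame height
    energy: str             # e.g. "neutral" or "dynamic"
    tolerance: float = 0.02


def deviations(brief, assets):
    """Map each off-brief asset name to the attributes that deviate."""
    report = {}
    for name, meta in assets.items():
        issues = []
        ratio = meta["width"] / meta["height"]
        if abs(ratio - brief.crop_ratio) > brief.tolerance:
            issues.append("crop_ratio")
        if abs(meta["head_top_frac"] - brief.head_top_frac) > brief.tolerance:
            issues.append("framing_height")
        if meta["energy"] != brief.energy:
            issues.append("energy")
        if issues:
            report[name] = issues
    return report


brief = PoseBrief(crop_ratio=0.694, head_top_frac=0.08, energy="neutral")
batch = {
    "a.png": {"width": 1000, "height": 1440,
              "head_top_frac": 0.08, "energy": "neutral"},
    "b.png": {"width": 1000, "height": 1000,
              "head_top_frac": 0.08, "energy": "dynamic"},
}
report = deviations(brief, batch)
```

In this sample batch only `b.png` is reported, for its square crop and mismatched energy, so it can be regenerated before it reaches the category grid.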

Frequently Asked Questions

What types of AI model poses work best for product detail pages?

Front-facing, three-quarter, and back-facing static poses form the core PDP set. Add one dynamic pose to show fit in movement for dresses, blouses, and activewear categories where drape behavior matters.

How do AI-generated poses preserve garment accuracy and fabric drape?

Diffusion-based reconstruction rebuilds how fabric would behave in each pose rather than warping a 2D image. Human QC review catches drape errors, seam misalignment, and artifacts before assets are approved for catalog use.

Can AI model poses be customized to match a brand's specific aesthetic?

Yes. Pose customization is achievable through reference image input, preset pose libraries, and prompt-based instructions. Brands can build and lock their own pose libraries for seasonal consistency.

How many pose variations should a fashion catalog include per SKU?

Three to four poses is the minimum for PDP because each angle answers a different shopper question: front for silhouette, three-quarter for fit, back for construction, dynamic for movement. Additional variants for social and campaign creative can be generated from that same base asset without a new shoot.

Do AI model poses affect conversion rates compared to flat-lay images?

On-model imagery communicates fit and silhouette more effectively than flat lays. High-resolution on-model images can support conversion and help reduce return rates by giving shoppers the visual context they need to choose the right size and style.

How do AI model poses support inclusive representation in fashion catalogs?

AI enables the same pose set to be applied across models of different body types, ethnicities, and ages at no additional casting cost. This removes the cost barriers that traditionally made diverse representation in catalogs expensive to produce at scale.