Poor draping accuracy doesn't just look bad. It creates customer mistrust, drives higher return rates, and damages brand credibility. When a product image misrepresents how a garment actually drapes or fits, customers receive something different from what they expected. 43% of UK consumers returned a product in the past year due to incorrect pre-purchase information, and 16% of shoppers have returned online apparel purchases that didn't resemble the item shown on the product page. With online return rates averaging 24.5% and costing the US retail industry close to $900 billion annually, draping accuracy isn't an aesthetic preference — it's a measurable business issue.

TLDR:

- AI model draping accuracy determines how faithfully a garment's fabric behavior, silhouette, and print appear on AI-generated models

- Poor draping accuracy drives returns, reduces conversion rates, and erodes brand trust

- Physics-based AI approaches produce significantly more accurate results than simple overlay methods

- Test platforms using your most complex garments — not your easiest ones — to reveal real capability differences

- Human-reviewed AI output catches errors that fully automated systems miss

What Does AI Model Draping Accuracy Actually Mean?

Draping accuracy is the degree to which an AI-generated image accurately shows how a specific garment would look on a real human body. This includes fabric fall, silhouette shape, surface texture, print fidelity, and garment structure. Not all AI platforms approach this challenge the same way.

Two Fundamentally Different Approaches:

Overlay or "painting" methods composite a garment image onto a model without simulating physical behavior. These systems essentially stretch or warp the garment photo to fit the model's body outline, producing flat, unrealistic results where fabric appears painted on rather than draped naturally.

Physics-informed or full-image regeneration approaches simulate how fabric responds to body shape, gravity, and movement. These systems model fabric properties and produce far more accurate results — particularly for complex garments like flowing dresses or structured tailoring.
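The sketch below illustrates the physics-informed idea. It is a toy model, not any platform's actual engine. A single strip of fabric is modeled as a chain of particles pinned at both ends. Each step advances the particles with Verlet integration, then applies distance constraints in the style of position-based dynamics. Gravity pulls the strip down and the constraints stop it from stretching, so it settles into a natural sag. An overlay method has no equivalent step: it keeps the strip wherever the source photo placed it. All names and parameter values here are illustrative assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class Particle:
    x: float
    y: float
    prev_x: float  # previous position, used by Verlet integration
    prev_y: float
    pinned: bool = False


def drape_strip(n=11, fabric_length=1.0, span=0.8, stiffness=1.0,
                gravity=-9.81, dt=1 / 60, steps=600, iterations=20, damping=0.98):
    """Hang a 1D fabric strip between two pinned points and let gravity drape it.

    stiffness in (0, 1] is the fraction of each stretch violation corrected per
    iteration, a position-based-dynamics stand-in for fabric stretch stiffness.
    """
    rest = fabric_length / (n - 1)  # rest length of each segment
    pts = []
    for i in range(n):
        x = i * span / (n - 1)  # start flat; span < fabric_length leaves slack
        pts.append(Particle(x, 0.0, x, 0.0, pinned=i in (0, n - 1)))

    for _ in range(steps):
        # 1) Verlet integration: carry over (damped) velocity and add gravity
        for p in pts:
            if p.pinned:
                continue
            vx = (p.x - p.prev_x) * damping
            vy = (p.y - p.prev_y) * damping
            p.prev_x, p.prev_y = p.x, p.y
            p.x += vx
            p.y += vy + gravity * dt * dt
        # 2) Constraint projection: pull stretched segments back toward rest length
        for _ in range(iterations):
            for a, b in zip(pts, pts[1:]):
                dx, dy = b.x - a.x, b.y - a.y
                dist = math.hypot(dx, dy)
                if dist <= rest:  # this toy fabric resists stretching, not compression
                    continue
                wa = 0.0 if a.pinned else 1.0
                wb = 0.0 if b.pinned else 1.0
                if wa + wb == 0:
                    continue
                corr = stiffness * (dist - rest) / dist
                a.x += dx * corr * wa / (wa + wb)
                a.y += dy * corr * wa / (wa + wb)
                b.x -= dx * corr * wb / (wa + wb)
                b.y -= dy * corr * wb / (wa + wb)
    return [(p.x, p.y) for p in pts]
```

Production garment simulators work on 2D meshes and add bending, shear, and body-collision terms. This single chain only shows the core loop: integrate, then correct.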

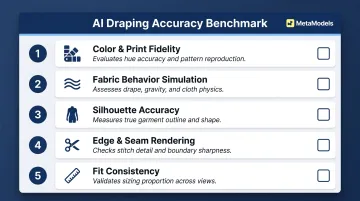

Five Dimensions of Draping Accuracy

- Color and print fidelity: Preserves exact print patterns, brand colors, logo placement, and surface graphics — pattern alignment at seams is a common failure point

- Fabric behavior simulation: Replicates fold lines, stretch points, and gravity-driven drape appropriate to fabric weight; flowing fabrics are harder to model than structured ones

- Silhouette accuracy: Retains intended shape — structured shoulders, flared hems, fitted waists — without making blazers look baggy or fitted garments appear loose

- Edge and seam rendering: Renders collars, cuffs, hems, and seam lines cleanly, without blurring or artifacts; edge quality separates professional-grade output from amateur results

- Fit consistency: Maps the garment proportionally to the model's body type, rather than floating, stretching, or painting it on
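Color fidelity is the easiest of these five dimensions to check numerically. One common approach, assumed here rather than taken from any particular platform, works in three steps:

1. Sample the same fabric swatch in a reference photo and in the AI output.
2. Convert both sRGB values to CIELAB using the D65 white point.
3. Take the CIE76 color difference, ΔE*ab.

A ΔE of roughly 2 to 3 is often cited as the point where a difference becomes noticeable. The `tolerance` default below is therefore a hypothetical threshold that you would tune for your own catalog.

```python
import math

# sRGB (D65) -> XYZ matrix and D65 reference white
_M = (
    (0.4124564, 0.3575761, 0.1804375),
    (0.2126729, 0.7151522, 0.0721750),
    (0.0193339, 0.1191920, 0.9503041),
)
_WHITE = (0.95047, 1.0, 1.08883)


def _linearize(channel):
    """Undo the sRGB transfer curve for one 0-255 channel."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def _f(t):
    """CIELAB companding function."""
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29


def srgb_to_lab(rgb):
    lin = [_linearize(c) for c in rgb]
    x, y, z = (sum(m * v for m, v in zip(row, lin)) for row in _M)
    fx, fy, fz = (_f(v / w) for v, w in zip((x, y, z), _WHITE))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def delta_e76(rgb_a, rgb_b):
    """Euclidean distance between two colors in CIELAB (CIE76 Delta E)."""
    return math.dist(srgb_to_lab(rgb_a), srgb_to_lab(rgb_b))


def color_matches(reference_rgb, generated_rgb, tolerance=2.3):
    # tolerance is a hypothetical acceptance threshold, not an industry standard
    return delta_e76(reference_rgb, generated_rgb) <= tolerance
```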

Each of these dimensions is directly influenced by how an AI platform is built — which is where architecture becomes the deciding factor.

The Role of AI Architecture

Models trained on large fashion-specific datasets with physics-engine reinforcement tend to outperform general-purpose image generation models on garment accuracy tasks. General diffusion models often struggle with fashion-specific terminology. Region-specific edits, which regenerate only part of an image, can also produce content that doesn't blend with the rest of the product, leading to stylistic inconsistencies.

Context matters: A product page requires higher garment fidelity than a social media lifestyle image. Match your accuracy requirements to your use case before choosing a platform.

Why Draping Accuracy Directly Impacts Fashion Brand Performance

Return Rates

When a product image misrepresents how a garment drapes, fits, or looks in real life, customers receive something different from what they expected. This expectation gap drives returns. 62% of consumers say they're more likely to keep a purchase when product information is clear, accurate, and detailed. Inaccurate draping does the opposite: it widens the same expectation gap that has led 16% of shoppers to return online apparel purchases that didn't resemble the item shown on the product page.

Conversion Rates

Shoppers use product imagery as their primary proxy for physical evaluation. 56% of users investigate product images as their first action when arriving at a product page, and 42% attempt to gauge the overall scale and size of a product from its images. Accurate draping increases purchase confidence by showing shoppers what they will actually receive.

Brand Trust

Consistently inaccurate AI imagery erodes shopper confidence over time. In early 2026, Gucci faced swift backlash for AI-generated visuals promoting its Milan Fashion Week show, with comments calling the imagery "cheap" and "lazy." Valentino's clearly labeled AI-generated video for its DeVain handbag sparked similar anger — not because viewers were confused, but because they objected to the AI output quality itself.

The data behind those reactions is worth noting:

- 25% of shoppers react negatively to AI imagery overall — rising to 35% among women

- Peer-reviewed research indicates that customers tend to avoid services advertised with AI-generated images, particularly for high-involvement purchases where trust matters most

Legal and Regulatory Context

Brand trust risk is compounded by a tightening regulatory environment. Regulators in three major jurisdictions now apply disclosure or consumer-protection rules to AI-generated product visuals:

| Jurisdiction | Requirement | Consequence |
|---|---|---|
| EU (AI Act) | AI-generated content that substantially manipulates images must be "clearly identifiable as artificial" | Fines up to €15 million or 3% of global annual turnover |
| UK (ASA) | AI use must be disclosed where it's prominent and not obvious to consumers | Ads ruled materially misleading if AI effects don't reflect real results |
| US (FTC) | AI-generated content falls within existing consumer-protection statutes | Nondisclosure is deceptive if AI use is "material" to the consumer |

Product visuals that distort fit, color, or texture in ways that "change reality" sit squarely in regulators' crosshairs. Accurate draping keeps imagery defensible.

The Business Case for Accuracy Investment

The cost of processing returns and handling complaints typically outweighs the upfront cost of a higher-quality AI draping platform. In addition, 68% of consumers say they'd stop buying from a brand after a bad product information experience. As a result, inaccurate imagery in even a single product category can permanently shrink a brand's customer base.
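Once you plug in your own numbers, the comparison is simple arithmetic. The sketch below uses entirely hypothetical inputs: order volume, return rates, per-return handling cost, and the extra monthly cost of a higher-accuracy platform. Only the 24.5% baseline echoes the average online return rate quoted earlier. The sketch does not model lost repeat business from customers who stop buying, which would add to the savings side.

```python
def monthly_return_savings(orders, return_rate_before, return_rate_after, cost_per_return):
    """Handling cost avoided per month when better imagery lowers the return rate."""
    avoided_returns = orders * (return_rate_before - return_rate_after)
    return avoided_returns * cost_per_return


def accuracy_upgrade_pays_off(orders, return_rate_before, return_rate_after,
                              cost_per_return, extra_platform_cost):
    """Return (pays_off, monthly_savings) for a hypothetical platform upgrade."""
    savings = monthly_return_savings(orders, return_rate_before,
                                     return_rate_after, cost_per_return)
    return savings >= extra_platform_cost, savings


# Hypothetical brand: 10,000 orders/month, returns fall from 24.5% to 23.5%,
# each return costs $15 to process, and the better platform costs $1,000/month more.
ok, savings = accuracy_upgrade_pays_off(10_000, 0.245, 0.235, 15.0, 1_000.0)
print(f"savings ${savings:,.0f}/month, pays off: {ok}")
```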

The Key Factors That Determine AI Draping Quality

Input Image Quality

AI draping output quality is heavily constrained by the quality of the input garment image. Clean, well-lit flat-lay or packshot images on neutral backgrounds give the AI model the clearest signal for fabric texture, pattern, and structure. Poorly lit, wrinkled, or low-resolution inputs degrade output quality regardless of how sophisticated the AI is.

Best practices:

- Use neutral backgrounds (white or light grey)

- Ensure even, professional lighting

- Capture high-resolution images

- Present garments flat or on ghost mannequins without wrinkles
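Some of these checks can be automated before images are ever uploaded. The sketch below is a hypothetical pre-flight check, not part of any platform. It flags three problems:

- Low resolution
- Background samples that are not light and neutral
- Uneven lighting between the four corners of the frame

The function takes plain RGB tuples so it stays library-free; in practice you would read the pixels with an imaging library. Every threshold is an assumed starting point. Wrinkles and ghost-mannequin presentation still need a human eye.

```python
from statistics import mean


def luma(rgb):
    """Rough brightness of an 8-bit RGB pixel (Rec. 709 weights)."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def preflight_packshot(width, height, corner_samples,
                       min_short_side=2000, min_channel=200,
                       max_tint=12, max_corner_spread=20):
    """corner_samples maps a corner name ("top_left", ...) to background RGB pixels.

    All thresholds are hypothetical defaults; tune them against your own shoots.
    """
    issues = []
    if min(width, height) < min_short_side:
        issues.append(f"low resolution: {width}x{height}")

    corner_brightness = {}
    for corner, pixels in corner_samples.items():
        if any(min(p) < min_channel for p in pixels):
            issues.append(f"{corner}: background not white or light grey")
        if any(max(p) - min(p) > max_tint for p in pixels):
            issues.append(f"{corner}: background has a color cast")
        corner_brightness[corner] = mean(luma(p) for p in pixels)

    # Large brightness differences between corners suggest uneven lighting
    if corner_brightness:
        spread = max(corner_brightness.values()) - min(corner_brightness.values())
        if spread > max_corner_spread:
            issues.append(f"uneven lighting: corner brightness varies by {spread:.0f}")
    return issues
```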

Fabric Category and Complexity

Different fabric types present different levels of difficulty for AI draping systems:

Structured fabrics (denim, blazers, structured knits) render more accurately because their behavior is predictable. Cotton, for example, has a stretch stiffness of 1,000,000 g/s², making it relatively stable to simulate.

Lightweight, flowing fabrics (chiffon, silk, sheer overlays) are considerably harder to get right. Silk and knit are the most challenging fabric types for AI simulation due to extreme deformability and complex folding patterns. Knit has very low stretch stiffness (25,000 g/s²), leading to drastic shape changes. These materials require accurate simulation of gravity, layering, and light transmission.

Heavy and high-friction materials (leather, fur) involve complex contact mechanics that are harder to model than those of smoother fabrics. This widens the sim-to-real gap, the difference between simulated and real-world behavior.
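To see why those stiffness values matter, treat each fabric as a single linear spring and apply Hooke's law, x = F / k, to the same hanging load. This is a deliberate simplification: simulators apply stretch stiffness per mesh edge and combine it with bending and shear terms. The 100 g load below is an arbitrary illustrative value. The units work out because F = m·g is in g·m/s² and k is in g/s², leaving x in metres.

```python
GRAVITY = 9.81  # m/s^2

# Stretch stiffness values quoted in the text above, in g/s^2
STRETCH_STIFFNESS = {"cotton": 1_000_000, "knit": 25_000}


def static_extension_m(load_g, stiffness_g_per_s2):
    """Hooke's-law extension x = F / k for a load of `load_g` grams, in metres."""
    force = load_g * GRAVITY  # g * m/s^2
    return force / stiffness_g_per_s2


load = 100  # grams, hypothetical
for fabric, k in STRETCH_STIFFNESS.items():
    print(f"{fabric}: {static_extension_m(load, k) * 1000:.2f} mm")
```

Whatever load you choose, the knit stretches 40 times as far as the cotton (1,000,000 / 25,000). As a result, a modeling error that cotton would absorb shows up in knitwear as a visibly wrong shape.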

AI Model Body Diversity and Fit Mapping

Draping accuracy also depends on how well the platform's model library maps garment fit across different body types. A garment draped accurately on a size-small model may not accurately represent how it would look on a plus-size or petite model unless the AI system accounts for proportional fit differences rather than uniformly scaling the garment image.

The distinction matters: platforms that simulate how fabric physically responds to different body shapes — tension at the shoulders, gather at the waist — produce noticeably more realistic results than those that just resize a flat image.
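A measurement-level sketch shows why uniform scaling hides fit problems. All measurements below are hypothetical circumferences in centimetres.

- A naive warp stretches the garment image to the new model's outline, so every region keeps the ease it had on the sample model.
- The physical garment keeps its own measurements, so a curvier body produces tension (negative ease) where the sample model had room.

This is not a drape simulator. It is only the bookkeeping that a proportional-fit approach has to get right.

```python
REGIONS = ("chest", "waist", "hip")


def warped_ease(garment, sample_body, target_body):
    """Naive overlay: stretch the garment image to the target body's outline,
    so each region keeps the garment-to-body ratio it had on the sample model."""
    return {r: target_body[r] * garment[r] / sample_body[r] - target_body[r] for r in REGIONS}


def physical_ease(garment, target_body):
    """Same physical garment on a new body: positive = room to drape or gather,
    negative = fabric under tension."""
    return {r: garment[r] - target_body[r] for r in REGIONS}


garment = {"chest": 96, "waist": 80, "hip": 100}  # one size, as manufactured
sample_model = {"chest": 88, "waist": 72, "hip": 94}
curvier_model = {"chest": 92, "waist": 84, "hip": 110}

print("warped:  ", {r: round(v, 1) for r, v in warped_ease(garment, sample_model, curvier_model).items()})
print("physical:", physical_ease(garment, curvier_model))
```

The warp reports comfortable ease everywhere. The physical garment, by contrast, would pull across the waist and hip, which is the tension a shopper with that body shape would see in person.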

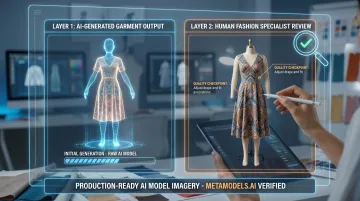

The Role of Human Review

Fully automated AI pipelines have a ceiling on accuracy for complex garments. Platforms that incorporate human review at the output stage can catch AI errors before images go live. Common failure points include:

- Distorted logos or graphic prints

- Unnatural folds that contradict fabric physics

- Fabric color shifts at seams or edges

This quality checkpoint is especially important for complex garments where AI-only pipelines have inherent accuracy limitations.
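A human review step is easier to run consistently when the failure points above become an explicit checklist. The sketch below is a hypothetical structure, not any platform's workflow. The reviewer records each check as passed or failed, and an image is approved only when every check has been recorded and none has failed.

```python
FAILURE_CHECKS = (
    "distorted_logo_or_print",
    "unnatural_folds",
    "color_shift_at_seams_or_edges",
)


def review_decision(findings):
    """findings maps each check name to True if the reviewer found that problem.

    Returns (status, details) where status is "approved", "needs_correction",
    or "incomplete".
    """
    missing = [c for c in FAILURE_CHECKS if c not in findings]
    if missing:
        return "incomplete", missing
    problems = [c for c in FAILURE_CHECKS if findings[c]]
    if problems:
        return "needs_correction", problems
    return "approved", []
```

Treating a missing check as "incomplete" rather than "approved" keeps a rushed review from silently passing an image.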

Platform Training Data and Specialization

AI systems trained specifically on fashion imagery, garment-body interaction, and fabric physics outperform general-purpose image generation models on draping accuracy tasks. The AI in fashion market reached $2.92 billion in 2025, with fashion-specialized platforms emerging as distinct from general-purpose generators. Fashion-specialized training enables capabilities general tools lack: garment segmentation, pose-aware fabric deformation, and seam-accurate rendering. These directly determine whether draped output looks production-ready or AI-generated.

Which Garment Types Challenge AI Draping the Most?

AI draping systems handle simple garments easily. Complex pieces reveal real capability differences.

Highest-difficulty categories:

- Sheer and layered fabrics — light transmission through semi-transparent materials demands sophisticated physics modeling that most platforms handle inconsistently

- Heavily embellished pieces — beading, embroidery, and 3D textures are hard to preserve; texture and fabric-based features pose greater challenges than structural elements like pockets or logos

- Knitwear — low stretch stiffness creates unpredictable fold patterns that challenge even advanced simulators

- Printed and logo-heavy pieces — pattern alignment at seams and logo distortion are common failure points across garment curves

- Dense pleats and ruffles — pleated skirts show the lowest absolute accuracy due to collision-detection failures among tightly packed folds

Practical Guidance

Test your most difficult SKUs — not your easiest ones — during any AI platform evaluation period. Simple plain-color garments will look accurate on almost any platform. Your most complex items are where platform differences actually show.

For couture, heavily embellished, or sheer pieces, a hybrid approach often delivers the best result: AI draping for the bulk of your catalog, traditional photography reserved for hero and campaign imagery.
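The hybrid approach amounts to a simple routing rule per SKU and image type. Below is a minimal sketch that assumes hypothetical category labels, which you would map to your own catalog taxonomy. The high-difficulty set mirrors the categories listed above, plus couture.

```python
# Hypothetical category labels; map these to your own catalog taxonomy.
PHOTOGRAPH_FOR_HERO = {"couture", "embellished", "sheer"}
HIGH_DIFFICULTY = {"sheer", "layered", "embellished", "knitwear",
                   "logo_print", "pleated", "couture"}


def production_route(category, usage):
    """usage is "catalog", "hero", or "campaign"."""
    if usage in {"hero", "campaign"} and category in PHOTOGRAPH_FOR_HERO:
        return "traditional photography"
    if category in HIGH_DIFFICULTY:
        return "AI draping with human review"
    return "AI draping"
```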

How to Evaluate AI Draping Accuracy Before You Commit

Run a Structured Accuracy Audit

Before signing up for a paid plan, run a practical test using 5–10 representative SKUs that include at least two high-complexity garments.

What to look for:

- Print alignment at seams

- Fabric texture fidelity at 100% zoom

- Natural fold behavior

- Edge rendering quality (clean collars, cuffs, hems)

- Logo preservation without distortion
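Picking the audit set by hand tends to drift toward easy, photogenic SKUs. A small helper can enforce the rule above. This hypothetical sketch works as follows:

- It takes `(sku, is_high_complexity)` pairs.
- It always includes the required number of complex garments.
- It fills the rest at random with a fixed seed, so the same set can be rerun on every platform you trial.

```python
import random


def pick_audit_skus(catalog, size=8, min_complex=2, seed=42):
    """catalog: list of (sku, is_high_complexity) pairs."""
    if not 5 <= size <= 10:
        raise ValueError("audit set should contain 5-10 SKUs")
    if size > len(catalog):
        raise ValueError("catalog is smaller than the requested audit set")
    complex_skus = [sku for sku, is_complex in catalog if is_complex]
    if len(complex_skus) < min_complex:
        raise ValueError(f"need at least {min_complex} high-complexity SKUs")

    rng = random.Random(seed)  # fixed seed: identical set for every platform trial
    chosen = rng.sample(complex_skus, min_complex)
    remaining = [sku for sku, _ in catalog if sku not in chosen]
    chosen += rng.sample(remaining, size - min_complex)
    return chosen
```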

Use the Comparison Benchmark Approach

Generate the same garment on an AI model, place the result next to a real on-model photo (or a known-accurate reference image), and compare the two directly across the five accuracy dimensions:

- Color and print fidelity

- Fabric behavior simulation

- Silhouette accuracy

- Edge and seam rendering

- Fit consistency

If a platform's output diverges noticeably on two or more dimensions, that's a signal to test further before committing.
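Turning that side-by-side comparison into a score sheet makes results comparable across platforms. In this hypothetical rubric, a reviewer rates how closely the AI output matches the reference on each of the five dimensions. Ratings run from 1 (clearly different) to 5 (indistinguishable). Any rating at or below `divergence_cutoff` counts as a noticeable divergence. The scale and cutoff are assumptions; the two-dimension trigger comes from the guidance above.

```python
DIMENSIONS = (
    "color_and_print_fidelity",
    "fabric_behavior",
    "silhouette",
    "edge_and_seam_rendering",
    "fit_consistency",
)


def benchmark_verdict(match_scores, divergence_cutoff=2, max_divergent=1):
    """match_scores: dimension -> reviewer rating 1-5 of AI output vs. reference."""
    missing = set(DIMENSIONS) - set(match_scores)
    if missing:
        raise ValueError(f"unscored dimensions: {sorted(missing)}")
    divergent = [d for d in DIMENSIONS if match_scores[d] <= divergence_cutoff]
    # Two or more divergent dimensions -> keep testing before committing
    verdict = "test further" if len(divergent) > max_divergent else "passes benchmark"
    return verdict, divergent
```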

Ask About Quality Assurance Processes

QA processes vary significantly between platforms — and the difference matters for garment accuracy. Before committing, ask:

- Does the platform use automated checks only, or is there a human review layer?

- Are garments flagged for re-generation when fidelity drops below a threshold?

- Can you request manual corrections on specific accuracy issues?
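The second question describes a threshold-and-retry loop. Below is a minimal sketch of what such a loop might look like. `generate` and `fidelity_score` are hypothetical callables standing in for whatever generation and automated scoring a platform actually uses; neither is a real API, and the 0.9 threshold is an assumption. The loop accepts the first output that clears the threshold. Otherwise, it escalates the best attempt for manual correction.

```python
from typing import Any, Callable


def generate_with_qa(generate: Callable[[int], Any],
                     fidelity_score: Callable[[Any], float],
                     threshold: float = 0.9,
                     max_attempts: int = 3) -> dict:
    best_image, best_score = None, float("-inf")
    for attempt in range(1, max_attempts + 1):
        image = generate(attempt)
        score = fidelity_score(image)
        if score > best_score:
            best_image, best_score = image, score
        if score >= threshold:
            return {"status": "accepted", "image": image,
                    "score": score, "attempts": attempt}
    # No attempt cleared the bar: hand the best one to a human for correction
    return {"status": "manual_correction", "image": best_image,
            "score": best_score, "attempts": max_attempts}
```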

Platforms that combine automated processing with human-verified output, like MetaModels.ai's review pipeline, tend to produce more accurate results than fully automated systems, particularly for complex garments.

How MetaModels.ai Ensures Draping Accuracy at Scale

MetaModels.ai addresses the accuracy ceiling that fully automated platforms face through a dual-layer approach: real-time fabric draping technology that simulates how garments behave on AI models, combined with human-reviewed image output.

Every AI-generated image and video undergoes review by human fashion specialists who verify and correct details including color accuracy, shape, and proportions before delivery. This human quality checkpoint catches AI errors — distorted prints, unnatural folds, color shifts — that automated systems miss. For embroidered pieces, bold prints, or structured tailoring, that layer of oversight is what keeps the output production-ready.

The packshot-to-AI-model workflow is built for brands that want accurate on-model content without new production setups. The process is straightforward:

- Upload flat-lay or ghost mannequin packshots

- The system converts them into on-model images with accurate fabric draping

- Color fidelity, print detail, and garment structure are preserved throughout

- Content is delivered ready-to-publish in up to 4K resolution

Frequently Asked Questions

How accurate is AI model draping compared to a real photoshoot?

For most standard garment types, AI draping now produces results that closely match real photoshoots — though accuracy varies by fabric complexity and platform. Sheer fabrics, embellishments, and complex prints still benefit from human review or physical photography for hero images.

What types of garments are hardest for AI draping to render accurately?

Sheer and transparent fabrics, heavily embellished pieces, fine knitwear, and garments with small-text logos or complex print alignment at seams are the most challenging categories. Prioritize these garment types in platform testing to reveal real capability differences.

Does AI draping accuracy affect product return rates?

Yes. Inaccurate draping misrepresents how a garment looks when worn, which leads to unmet expectations and higher return rates. Accurate output keeps product images aligned with what customers actually receive.

What input image format gives the best AI draping results?

Clean, well-lit flat-lay or packshot images on neutral (white or light grey) backgrounds are the most reliable input format. Wrinkled, poorly lit, or cluttered images degrade output accuracy regardless of platform quality.

Can AI draping accurately show how a garment fits different body types?

Accuracy across body types depends on whether the platform models proportional fit differences rather than simply scaling the garment image. Brands targeting diverse audiences should test draping on multiple body types, not just standard sizing.

How does human review improve AI draping accuracy?

Human review catches errors that automated systems miss: distorted prints, unnatural folds, and color shifts. For complex garments in particular, this checkpoint is what separates acceptable output from publish-ready imagery.