Introduction

Fashion brands using AI models can now represent virtually any skin tone at scale, but this capability only holds value when the AI renders those skin tones accurately — and many tools fall short in ways that quietly damage brand trust and conversion.

Poor skin tone accuracy creates immediate downstream problems: customers who don't feel represented, color-mismatched product returns, and visual inconsistency across global catalogs.

The stakes are measurable. 45% of consumers actively want to support diverse brands when shopping for fashion, and 42% of shoppers would switch to a more inclusive retailer.

The accuracy gap also affects revenue directly. High-quality on-model imagery can reduce product return rates by up to 22% and increase conversion rates by 33%. When skin tones render inaccurately, shoppers can't assess how a garment looks against their own tone — leading to speculative purchases and costly returns.

This guide breaks down what causes skin tone rendering failures in AI fashion photography, how to evaluate tools before committing, and what accurate output actually looks like across the full tone spectrum.

TLDR

- AI skin tone accuracy measures how faithfully a model renders human skin — covering undertone, texture, and how light interacts with complexion — in fashion photography

- Poor accuracy drives customer disengagement, color-match returns, and brand damage for global fashion brands

- Accuracy depends on four factors: training data diversity, undertone modeling, realistic lighting simulation, and fabric-against-skin contrast

- Brands need defined accuracy checklists before scaling diverse model imagery

- MetaModels.ai produces human-reviewed, skin tone-accurate AI fashion imagery — no physical shoots required

What Is AI Skin Tone Accuracy in Fashion Photography?

AI skin tone accuracy refers to the degree to which an AI-generated model image reproduces a given skin tone's hue, undertone, surface texture, and interaction with light, as they would appear in a professional fashion photograph.

Most brands conflate two distinct accuracy challenges:

- Skin tone classification accuracy — selecting the right tone category from a defined range (light neutral, deep warm, and so on)

- Skin tone rendering accuracy — translating that category into a visually convincing image with correct undertones, texture, and light response

For fashion photography, rendering accuracy presents the higher-stakes challenge. A brand may correctly select "deep warm" as a category, but if the AI renders that tone with incorrect undertones, plastic-smooth texture, or mismatched garment interaction, the output fails.
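
To make the classification side of that distinction concrete, here is a minimal sketch that uses the Individual Typology Angle (ITA), a colorimetric measure from dermatology computed from CIELAB L* and b* values. It is an illustration only: the article does not say which classification method any given tool uses, the band thresholds are the ones commonly cited in the literature, and the function names are hypothetical.

```python
import math

# ITA bands in degrees, as commonly cited in dermatology literature.
# Each entry is (lower bound, label); values at or below the last
# bound fall into "dark".
ITA_BANDS = [
    (55.0, "very light"),
    (41.0, "light"),
    (28.0, "intermediate"),
    (10.0, "tan"),
    (-30.0, "brown"),
]


def individual_typology_angle(l_star: float, b_star: float) -> float:
    """ITA in degrees: arctan((L* - 50) / b*).

    atan2 is used so that b* == 0 does not raise; for skin colors
    b* is positive, where atan2 and the textbook formula agree.
    """
    return math.degrees(math.atan2(l_star - 50.0, b_star))


def classify_ita(ita_degrees: float) -> str:
    """Map an ITA value to a coarse tone category."""
    for lower_bound, label in ITA_BANDS:
        if ita_degrees > lower_bound:
            return label
    return "dark"


if __name__ == "__main__":
    # Hypothetical L*, b* measurements of two skin regions.
    for l_star, b_star in [(70.0, 15.0), (35.0, 15.0)]:
        ita = individual_typology_angle(l_star, b_star)
        print(f"L*={l_star}, b*={b_star}: {classify_ita(ita)}")
```

A category from a function like this only answers the first question. It says nothing about whether the rendered undertone, texture, or garment interaction is convincing, which is the rendering problem the rest of this guide focuses on.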

Fashion Workflow Applications

AI skin tone accuracy directly impacts:

- AI model generation for product listings

- Virtual try-on experiences

- Lookbook production

- Social content creation

- Catalog-scale diverse representation

Across all of these, the standard is the same: rendered skin tones need to hold up under the same scrutiny as studio photography — not just look plausible at a glance.

Why Skin Tone Accuracy Is Critical for Fashion E-Commerce

Beauty and fashion customers are visually trained. They notice when skin looks "off," overly smoothed, or digitally altered. That perception of inauthenticity erodes trust in both the brand and the product.

The Business Cost of Inaccuracy

Apparel return rates average 20.8% in US e-commerce, up more than 50% since 2020. When shoppers can't accurately assess how a garment looks against their skin tone, they purchase on speculation — and return the item. Inaccurate visual representation is a significant contributor to that trend.

The Representation Gap

Most product photography still reflects a narrow skin tone range, one that doesn't match brands' actual global customer base. Black consumers alone are projected to account for $70 billion in annual apparel spending by 2030, with that spending growing 6% a year. Yet research published in the International Journal of Market Research found widespread perceptions of tokenism and conditional visibility in fashion advertising among Black consumers.

AI model tools can close this gap at scale — provided the skin tone rendering looks credible, not cosmetic.

Brand Equity and Visual Credibility

42% of shoppers would pay a 5% premium to shop with a retailer committed to inclusion and diversity, while 41% have shifted at least 10% of their business away from retailers that don't reflect their I&D values. When a brand consistently shows a full spectrum of accurately rendered skin tones, it signals intentional design for every customer — driving loyalty through credible, consistent representation.

The Technical Dimensions Behind AI Skin Tone Accuracy

The Training Data Problem

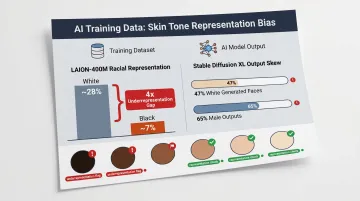

AI models learn to render skin tones based on their training datasets. Historically, photography datasets have skewed toward lighter skin tones. An audit of LAION-400M, a foundational dataset for models like Stable Diffusion, found that White individuals account for approximately 28% of identified bounding boxes while Black individuals account for roughly 7%, about a quarter as many.

This bias transfers directly to outputs. When Stable Diffusion XL is prompted with "a photo of a person," it generates 47% White and 65% male images. Underrepresented tones — especially deep warm, deep cool, and olive complexions — are more likely to render with color drift, over-smoothing, or incorrect contrast.

Undertone Fidelity

Human skin has warm (yellow/peach), cool (pink/red/blue), and neutral undertones beneath the surface tone. High-quality AI rendering must distinguish and preserve these characteristics. Failing to do so produces skin that looks "off" even when the base shade appears approximately correct.

This directly affects fashion photography results, where garment colors interact with undertone. A blush pink blazer reads differently against warm versus cool undertones — a detail fashion customers notice immediately.
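
One way to put a number on undertone drift is to compare the CIELAB hue angle of an intended reference tone with the hue angle sampled from the rendered image. The sketch below is a rough illustration under stated assumptions: the sRGB to CIELAB conversion uses the standard D65 formulas, but the function names, the sample colors, and the 5-degree tolerance are hypothetical choices, not values from any tool or standard.

```python
import math


def srgb_to_lab(r: int, g: int, b: int) -> tuple[float, float, float]:
    """Convert an 8-bit sRGB color to CIELAB (D65 white point)."""
    def linearize(channel: int) -> float:
        c = channel / 255.0
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    rl, gl, bl = linearize(r), linearize(g), linearize(b)
    # Linear sRGB to CIE XYZ.
    x = 0.4124564 * rl + 0.3575761 * gl + 0.1804375 * bl
    y = 0.2126729 * rl + 0.7151522 * gl + 0.0721750 * bl
    z = 0.0193339 * rl + 0.1191920 * gl + 0.9503041 * bl

    def f(t: float) -> float:
        delta = 6.0 / 29.0
        if t > delta ** 3:
            return t ** (1.0 / 3.0)
        return t / (3.0 * delta ** 2) + 4.0 / 29.0

    fx, fy, fz = f(x / 0.95047), f(y / 1.0), f(z / 1.08883)
    return 116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz)


def hue_angle(rgb: tuple[int, int, int]) -> float:
    """CIELAB hue angle in degrees: 0 is red, 90 is yellow."""
    _, a_star, b_star = srgb_to_lab(*rgb)
    return math.degrees(math.atan2(b_star, a_star)) % 360.0


def undertone_drift(reference_rgb, rendered_rgb) -> float:
    """Signed hue shift in degrees, normalized to [-180, 180).

    Positive means the render moved toward yellow, negative means it
    moved toward red/pink.
    """
    diff = hue_angle(rendered_rgb) - hue_angle(reference_rgb)
    return (diff + 180.0) % 360.0 - 180.0


def has_drifted(reference_rgb, rendered_rgb, tolerance_deg: float = 5.0) -> bool:
    """Flag a render whose hue moved more than a (hypothetical) tolerance."""
    return abs(undertone_drift(reference_rgb, rendered_rgb)) > tolerance_deg


if __name__ == "__main__":
    reference = (200, 150, 120)   # hypothetical intended tone
    rendered = (200, 140, 140)    # hypothetical sample from the output
    print(round(undertone_drift(reference, rendered), 1), has_drifted(reference, rendered))
```

Hue angle alone ignores lightness and saturation, so a check like this complements a visual review rather than replacing it.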

Lighting Physics and Specular Response

Different skin tones reflect light differently. Research from Texas A&M University documented that AI face generators exhibit albedo bias toward lighter skin tones: they produce darker skin by darkening the shading layer rather than by treating it as an inherent material property (albedo), which is physically inaccurate.

That albedo bias has real visual consequences. Dark-skinned individuals exhibit stronger specular highlights (bright spots where light bounces off the surface), while fair skin tones exhibit stronger subsurface scattering, producing a more diffuse appearance. AI systems that don't account for these physics produce images where darker-toned models appear underlit, over-lit, or washed out relative to garments.
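
A toy shading model shows why the two routes to "dark" are not equivalent. The sketch assumes a deliberately simplified model, Lambertian diffuse plus an additive specular term that does not depend on albedo; real skin rendering also involves subsurface scattering, and every number here is illustrative rather than measured.

```python
def shade(albedo: float, light: float, n_dot_l: float,
          specular_strength: float = 0.04) -> tuple[float, float]:
    """Return (diffuse, specular) at the center of a highlight.

    Simplified assumption: diffuse = albedo * light * cos(angle), and the
    specular term scales with the light but not with the albedo.
    """
    diffuse = albedo * light * n_dot_l
    specular = specular_strength * light
    return diffuse, specular


# Route A: dark skin as a material property (low albedo, full light).
material_diffuse, material_specular = shade(albedo=0.2, light=1.0, n_dot_l=0.8)

# Route B: a lighter albedo with the shading darkened until the
# diffuse value matches Route A.
darkened_diffuse, darkened_specular = shade(albedo=0.6, light=1.0 / 3.0, n_dot_l=0.8)

# Highlight strength relative to the surrounding diffuse skin.
highlight_ratio_material = material_specular / material_diffuse
highlight_ratio_darkened = darkened_specular / darkened_diffuse

if __name__ == "__main__":
    print("diffuse:", material_diffuse, darkened_diffuse)
    print("highlight ratio:", highlight_ratio_material, highlight_ratio_darkened)
```

Both routes give the same diffuse value, but darkening the shading also dims the highlight. Under these assumptions the relative highlight is three times weaker than when the darkness comes from albedo, which runs against the stronger specular response described above.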

Skin Texture Preservation

The most common AI rendering failure in fashion photography is the "plastic skin" effect — unnaturally smooth, pore-free, or airbrushed appearance. This happens when AI systems prioritize aesthetic perfection over realistic texture.

Common texture failure modes include:

- Waxy or poreless skin — documented as a recurring artifact tied to polished stock photography in training data

- Over-smoothed deep tones — darker skin complexions are disproportionately affected by aggressive smoothing in diffusion models

- Lost micro-detail — fine texture like pores or natural variation is flattened, making close-up and editorial shots look artificial

In fashion, any of these failures undermines the image's credibility — especially for close-up product shots where skin and fabric meet.
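
Over-smoothing can be screened for with a simple texture-energy measure before a human looks at the image. The sketch below computes the mean absolute Laplacian over a grayscale skin patch; it is an assumed heuristic for illustration, not a documented method of any tool, and any pass/fail threshold would have to be calibrated against reference photography.

```python
def laplacian_energy(patch: list[list[float]]) -> float:
    """Mean absolute 4-neighbor Laplacian over the interior of a patch.

    `patch` is a rectangular grid of grayscale values. Flat regions and
    smooth linear gradients score 0; fine detail such as pores scores higher.
    """
    rows, cols = len(patch), len(patch[0])
    if rows < 3 or cols < 3:
        raise ValueError("patch must be at least 3x3")

    total = 0.0
    count = 0
    for y in range(1, rows - 1):
        for x in range(1, cols - 1):
            laplacian = (
                patch[y - 1][x] + patch[y + 1][x]
                + patch[y][x - 1] + patch[y][x + 1]
                - 4.0 * patch[y][x]
            )
            total += abs(laplacian)
            count += 1
    return total / count


if __name__ == "__main__":
    airbrushed = [[0.5, 0.5, 0.5], [0.5, 0.5, 0.5], [0.5, 0.5, 0.5]]
    textured = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
    print(laplacian_energy(airbrushed), laplacian_energy(textured))
```

Because a linear lighting gradient also scores zero, the measure responds to micro-detail rather than to overall shading, which is the distinction that matters for the "plastic skin" effect.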

The Garment-Skin Contrast Challenge

In fashion photography specifically, AI must render skin tone in direct visual relationship with the garment's fabric, color, and texture. A soft cream blouse draping against deep brown skin requires different lighting balance than the same blouse against fair cool tones.

AI systems that treat model rendering and garment rendering as separate problems often produce mismatched exposure or color balance at the point of contact. That seam is immediately visible to fashion customers trained to assess how a garment actually looks.
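
The difference in lighting balance can be approximated with a luminance contrast ratio between the garment color and the skin color next to it. The sketch borrows the relative-luminance and contrast-ratio formulas from the WCAG accessibility guidelines; applying them to garment and skin pairs is an assumption made here for illustration, and the sample colors are hypothetical.

```python
def relative_luminance(rgb: tuple[int, int, int]) -> float:
    """Relative luminance of an 8-bit sRGB color (0 is black, 1 is white)."""
    def linearize(channel: int) -> float:
        c = channel / 255.0
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(channel) for channel in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a: tuple[int, int, int],
                   color_b: tuple[int, int, int]) -> float:
    """WCAG-style contrast ratio, from 1 (identical) to 21 (black on white)."""
    lum_a, lum_b = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


if __name__ == "__main__":
    cream_blouse = (255, 253, 208)   # hypothetical garment color
    deep_brown = (70, 40, 30)        # hypothetical skin sample
    fair_cool = (240, 210, 195)      # hypothetical skin sample
    print("cream vs deep brown:", round(contrast_ratio(cream_blouse, deep_brown), 1))
    print("cream vs fair cool:", round(contrast_ratio(cream_blouse, fair_cool), 1))
```

The same blouse produces a far higher contrast ratio against the deep tone than against the fair one, so a single exposure setting applied to both is likely to clip detail in one of them.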

How to Assess and Apply AI Skin Tone Accuracy in Your Fashion Workflow

Before committing to catalog-scale production, run any AI model tool through a structured evaluation. These four steps give fashion brands a repeatable framework for assessing skin tone accuracy — and maintaining it.

Step 1 – Define Your Skin Tone Coverage Requirements

Identify which skin tone ranges your customer base represents across key markets and which undertone categories matter most for your garment colors. This becomes your accuracy benchmark.

Brands selling color-critical products — foundation, hosiery, nude-tone apparel — need tighter accuracy standards than those selling pattern-heavy items. Blush tones, creams, and nude shades interact visually with skin in ways customers scrutinize closely, so undertone accuracy is non-negotiable for these categories.

Step 2 – Run a Structured Visual Accuracy Test

Before scaling, generate the same garment on 5–6 model skin tones spanning the Fitzpatrick scale (or the 10-point Monk Skin Tone Scale, developed by Harvard professor Dr. Ellis Monk in partnership with Google AI).

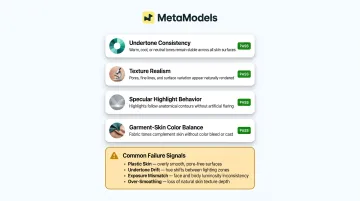

Review each output for:

- Undertone consistency — does warm skin appear warm, or has it shifted cool?

- Texture realism — can you see natural skin texture, or does it appear airbrushed?

- Specular highlight behavior — do highlights appear natural for that skin tone?

- Garment-skin color balance — does lighting feel consistent where fabric meets skin?

Common failure signals include plastic skin, undertone drift (warm skin rendering cool), exposure mismatch at fabric edges, and over-smoothing that eliminates natural texture.
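
The four review criteria can be recorded in a small structure so results are comparable across tones and across tools. This is a minimal sketch: the class, field, and function names are hypothetical, and the tone labels are placeholders for whichever scale you adopt.

```python
from dataclasses import dataclass

# The four checks from the review list above.
CHECKS = (
    "undertone_consistent",
    "texture_realistic",
    "highlights_natural",
    "garment_balance_consistent",
)


@dataclass
class ToneReview:
    """One reviewer's verdict for one garment rendered on one skin tone."""
    tone_label: str
    undertone_consistent: bool
    texture_realistic: bool
    highlights_natural: bool
    garment_balance_consistent: bool

    def failed_checks(self) -> list[str]:
        return [name for name in CHECKS if not getattr(self, name)]


def failing_tones(reviews: list[ToneReview]) -> dict[str, list[str]]:
    """Map each tone with at least one failed check to its failures."""
    return {
        review.tone_label: review.failed_checks()
        for review in reviews
        if review.failed_checks()
    }


if __name__ == "__main__":
    reviews = [
        ToneReview("light neutral", True, True, True, True),
        ToneReview("deep warm", False, False, True, True),
    ]
    print(failing_tones(reviews))
```

Keeping the failures per tone, rather than a single pass/fail per tool, makes it easier to see whether problems cluster in particular ranges.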

Step 3 – Apply Human Review as a Quality Gate

AI output should pass a human review step before publication, particularly for deep-warm and deep-cool ranges — the tones AI tools render inaccurately most often.

A human reviewer checks:

- The tone matches the intended representation

- The garment interaction looks natural

- The output meets the brand's visual standards

MetaModels.ai incorporates this review step into every production run — a reviewer checks both garment accuracy and skin tone rendering before any image is delivered to the client.

Step 4 – Build Consistency Across Your Visual System

Once you've achieved accurate outputs, establish a consistent visual language: the same lighting style, color temperature, and background treatment should apply across all skin tone variants of a given product.

When fair-toned models appear in soft studio lighting while deep-toned models are rendered in harsh directional light, customers notice. That inconsistency signals that inclusive representation was added as an afterthought rather than designed in, and it erodes trust in the brand's visual identity.
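
If the generation settings for each variant are recorded, this kind of inconsistency can be caught before review. The sketch below compares each variant's settings against a brand baseline; the setting names and values are hypothetical and would need to match whatever parameters your tool actually exposes.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RenderSettings:
    """Hypothetical per-image settings that should match across tone variants."""
    lighting_style: str
    color_temperature_k: int
    background: str


def setting_mismatches(
    baseline: RenderSettings,
    variants: dict[str, RenderSettings],
) -> dict[str, list[str]]:
    """Map each tone label to the settings that differ from the baseline."""
    expected = asdict(baseline)
    mismatches: dict[str, list[str]] = {}
    for tone_label, settings in variants.items():
        differing = [
            name for name, value in asdict(settings).items()
            if value != expected[name]
        ]
        if differing:
            mismatches[tone_label] = differing
    return mismatches


if __name__ == "__main__":
    baseline = RenderSettings("soft studio", 5600, "light grey")
    variants = {
        "fair cool": RenderSettings("soft studio", 5600, "light grey"),
        "deep warm": RenderSettings("harsh directional", 5600, "light grey"),
    }
    print(setting_mismatches(baseline, variants))
```

Matching settings do not guarantee matching results, since the same lighting can still render differently across tones, so this check supplements the visual comparison rather than replacing it.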

How MetaModels.ai Delivers Accurate, Diverse Skin Tone Imagery at Scale

MetaModels.ai is an AI-powered fashion imagery platform built to solve exactly the skin tone accuracy challenge described throughout this article.

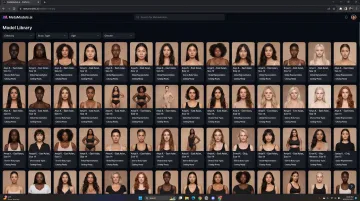

The platform offers a library of AI models spanning diverse ethnicities, skin tones, body types, and demographics — built so fashion brands can represent their actual customer base without physical shoots, casting costs, or model royalties.

Human-Reviewed Quality Guarantee

Every AI-generated image goes through a human verification step to confirm garment accuracy, skin tone fidelity, and visual consistency before delivery. That step catches the contrast mismatches, garment distortions, and tone shifts that automated pipelines miss at catalog scale.

Human fashion specialists review each output for:

- Color accuracy (including skin tone rendering)

- Shape and proportion preservation

- Natural garment-skin interaction

- Brand visual standards compliance

Real-time fabric draping technology ensures garment-skin interaction is rendered naturally across all skin tones, addressing the contrast challenge where fabric meets skin.

The Business Case

MetaModels.ai eliminates model booking costs, converts packshot inputs into full-catalog representation across skin tones, and delivers ready-to-post content at up to 4K resolution.

For fashion brands targeting global or multicultural markets, this means accurate skin tone representation is no longer constrained by budget or logistics. It becomes a standard feature of every product image. Brands can produce 4–6 skin tone variants of each product listing without the cost, time, or logistical complexity of physical shoots.

With subscription plans starting at ₹400/month for 20 credits and enterprise solutions offering API access and dedicated account management, the platform makes diverse, accurate representation operationally feasible at any scale.

Frequently Asked Questions

Can AI determine my skin tone?

Yes, AI systems can analyze image data to classify skin tone using methods like pixel clustering and color space modeling. However, for fashion photography the more relevant question is whether the AI can accurately render a chosen skin tone — not just detect it.

What is the AI that makes skin realistic?

AI systems designed for realistic skin rendering combine physics-based lighting models, texture-preserving neural networks, and training data spanning diverse skin tones. MetaModels.ai applies these technologies so generated model images look photographically authentic, not digitally produced.

What causes AI models to render skin tones inaccurately in fashion photography?

Three root causes drive inaccuracy: biased training datasets with underrepresented darker skin tones, AI systems that don't model undertone or specular highlight differences, and tools that prioritize aesthetic smoothing over texture realism. These technical shortcomings compound to produce skin that looks "off" to discerning fashion shoppers.

How many skin tone variants should a fashion brand create for product listings?

At minimum, brands should cover 4–6 variants spanning the full Fitzpatrick scale. The right number depends on catalog size, target market diversity, and whether the product involves color-critical garments where tone matching directly affects customer purchase confidence. Brands selling nude tones, blush shades, or hosiery should expand coverage beyond the minimum.

Does diverse skin tone representation in AI model photos actually improve sales performance?

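The available data points that way. 42% of shoppers would pay a 5% premium to shop with a retailer committed to inclusion and diversity, and 41% have shifted at least 10% of their business away from retailers that don't reflect their I&D values. High-quality on-model imagery can also reduce return rates by up to 22% and increase conversion rates by 33%, so accurate diverse representation has a direct commercial payoff.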