Introduction

When online shoppers abandon their carts or return fashion purchases, they rarely name the actual culprit: lighting. Something about the product image looks "off" — and that subconscious distrust drives real revenue loss.

According to an Akeneo survey reported by Chain Store Age, 34% of consumers who return clothing cite "misleading product images" as a key reason, while 62% say more accurate visuals upfront would reduce their returns.

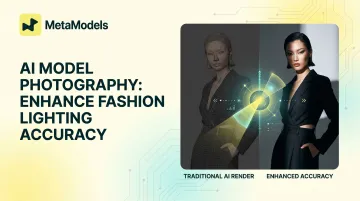

AI model photography has made on-model fashion imagery faster and cheaper to produce. Yet lighting accuracy remains the hardest problem to solve — and the one with the most direct impact on conversion. This article breaks down why it matters, how AI systems handle it, and what you can do to get it right.

TLDR

- Lighting inaccuracies erode shopper trust and push return rates up — a direct hit to revenue

- AI matches lighting environments by analyzing shadows, color temperature, highlights, and falloff

- Different fabrics reflect light differently—silk needs sharp specular highlights while cotton diffuses softly

- Consistent lighting across a catalog builds brand identity and shopper confidence

- Human review catches garment-level errors that AI processing alone misses

Why Lighting Accuracy Is a Revenue Problem in Fashion E-Commerce

Lighting inconsistency creates an "uncanny valley" effect in product photography. Shoppers rarely identify the specific flaw, but the discomfort registers immediately—and that friction triggers disengagement. Research from the University of Maryland found that visual inconsistency between virtual figures and their backgrounds increases perceived uncanniness and cognitive load, leading to negative evaluative responses.

The Traditional Compositing Problem

When a garment shot in a studio is placed onto a model image or lifestyle background, the two lighting environments clash. The most common culprits include:

- Color temperature mismatches — warm studio light conflicts with cool natural light in the background

- Shadow direction conflicts — a garment lit from the left placed on a model lit from the right signals artificiality immediately

The problem compounds at scale. A brand managing hundreds or thousands of SKUs cannot manually correct lighting for every image, and inconsistency across a catalog erodes visual trust even when individual shots are technically sound.

Why Lighting Matters Differently for Fashion

Apparel communicates texture, weight, drape, and fit through light. Poor lighting collapses that information, making products harder to evaluate and increasing purchase uncertainty. 56% of online shoppers' first action on a product page is to explore product images before reading titles or descriptions, making visual accuracy the first—and sometimes only—chance to build confidence.

The return connection is direct: 71% of consumers have returned products because the actual item did not match the description. Lighting is one driver of that gap between expectation and reality, because it changes how color and texture read on screen.

How AI Model Photography Analyzes and Replicates Lighting

Modern AI model photography systems use computer vision to analyze the lighting characteristics of an input image:

- Shadow direction and hardness - Where shadows fall and how sharp or diffused their edges are

- Highlight color temperature - Whether highlights read as warm (golden) or cool (blue)

- Ambient vs. directional light ratios - The balance between soft environmental light and focused light sources

- Light falloff gradients - How quickly light intensity decreases across the subject

Once the AI understands the lighting "signature" of an environment, it applies those characteristics to the model and garment layer. Older compositing methods simply layered images on top of each other — no light matching, no shadow integration.
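To make the idea of a lighting "signature" concrete, here is a minimal sketch in plain Python. It is an illustration only, not how any particular platform works: the function name `lighting_signature`, the three measurements, and the `highlight_fraction` parameter are all assumptions chosen for clarity. It approximates highlight color temperature with a red/blue ratio and approximates light direction and falloff by comparing the left and right halves of the image.

```python
from statistics import mean

# Rec. 709 luma weights, a common way to turn RGB into perceived brightness.
LUMA = (0.2126, 0.7152, 0.0722)


def luminance(pixel):
    """Brightness of one (r, g, b) pixel with channels in 0..1."""
    return sum(w * c for w, c in zip(LUMA, pixel))


def lighting_signature(image, highlight_fraction=0.1):
    """Summarise the lighting of a small RGB image.

    `image` is a list of rows, each a list of (r, g, b) tuples in 0..1,
    with at least two columns. The measurements are illustrative proxies.
    """
    pixels = [p for row in image for p in row]

    # Highlight warmth: red/blue ratio of the brightest pixels.
    # Above 1 reads as warm (golden), below 1 as cool (blue).
    brightest = sorted(pixels, key=luminance, reverse=True)
    count = max(1, int(len(pixels) * highlight_fraction))
    highlights = brightest[:count]
    red = mean(p[0] for p in highlights)
    blue = mean(p[2] for p in highlights)
    warmth = red / max(blue, 1e-6)

    # Direction and falloff: compare mean brightness of the two halves.
    half = len(image[0]) // 2
    left = mean(luminance(p) for row in image for p in row[:half])
    right = mean(luminance(p) for row in image for p in row[half:])

    return {
        "highlight_warmth": warmth,
        "light_from": "left" if left > right else "right",
        "falloff": abs(left - right),
    }
```

A real system would work on full-resolution images and estimate shadow hardness as well, but even these three numbers are enough to tell a warm, left-lit studio shot from a cool, right-lit one.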

How Training Data Shapes Lighting Intelligence

These systems train on large datasets of professional fashion photography to learn how garments, skin tones, and different materials realistically respond to various lighting conditions. According to research from the University of Maryland on illumination control in diffusion models, state-of-the-art systems use:

- CLIP vision models to compute lighting quality similarity scores

- Intrinsic image decomposition to separate reflectance from illumination

- Geometry-aware shading using depth estimation and surface normals

- Procedural shadow patterns that simulate cast shadows from windows, blinds, and foliage
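Two of the techniques listed above rest on a simple idea that can be sketched in a few lines. Intrinsic image decomposition treats each pixel as reflectance (the material's own color) multiplied by shading (how much light reaches it), and geometry-aware shading computes that shading term from the surface normal and the light direction. The sketch below uses the textbook Lambertian diffuse model. The function names are hypothetical, and this is not the method used in the cited research.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def lambert_shading(normal, light_dir):
    """Diffuse shading: cosine of the angle between normal and light, clamped at 0."""
    n, l = normalize(normal), normalize(light_dir)
    return max(0.0, sum(a * b for a, b in zip(n, l)))


def relight(reflectance, normal, light_dir):
    """Intrinsic image model: pixel color = reflectance x shading."""
    s = lambert_shading(normal, light_dir)
    return tuple(channel * s for channel in reflectance)


# A red fabric patch facing the camera, lit head-on and then from 45 degrees.
albedo = (0.6, 0.2, 0.2)
print(relight(albedo, (0, 0, 1), (0, 0, 1)))
print(relight(albedo, (0, 0, 1), (1, 0, 1)))
```

Because reflectance and shading are separate factors, the same garment color can be kept fixed while the light is moved, which is what makes relighting possible without changing the product itself.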

What Lighting Accuracy Actually Means

True lighting accuracy goes well beyond brightness. It includes:

- Shadow integrity - Shadows should be preserved, not flattened or artificially removed

- Highlight placement - Specular highlights on silk versus cotton look fundamentally different

- Color temperature coherence - Warm or cool lighting must be consistent across the entire image

These qualities are also where prompt specificity pays off. On platforms that accept text or reference image inputs, specifying a lighting style — "soft diffused light," "Rembrandt-style directional light," "natural window light" — produces far more accurate results than generic prompts.

Fabric Type and Light Interaction: The Most Underrated Challenge

Fabric type is the critical variable that separates basic AI fashion images from truly photorealistic ones. Different materials have fundamentally different optical properties:

How fabrics differ optically:

- Silk - Produces sharp specular highlights and reflects environmental color; requires anisotropic rendering to avoid a flat, washed-out result

- Cotton - Diffuses light softly with minimal reflection; absorbs and scatters incident light through loosely woven fibers

- Leather - Creates high-contrast reflections with deep shadow falloff; behaves like hard surfaces

- Synthetic blends - Vary depending on fiber density and weave; can exhibit plastic-like sheen if rendered incorrectly

According to Google's Filament physically based rendering documentation, general cloth exhibits 4-5.6% Fresnel reflectance at normal incidence, and traditional hard-surface BRDFs (bidirectional reflectance distribution functions) make cloth look rigid or tarp-like. Velvet exhibits strong rim lighting from forward and backward scattering, while silk and satin require anisotropic material models to capture their directional specular behavior.
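The reflectance figures above can be related to a material's index of refraction with two standard formulas: the normal-incidence Fresnel reflectance for a dielectric in air, and Schlick's approximation for how reflectance grows at glancing angles. The sketch below is generic optics, not a cloth-specific shading model, and the function names are chosen for illustration.

```python
def f0_from_ior(ior):
    """Reflectance at normal incidence for a dielectric surface in air."""
    return ((ior - 1.0) / (ior + 1.0)) ** 2


def schlick_fresnel(f0, cos_theta):
    """Schlick's approximation: reflectance rises toward 1.0 at grazing angles."""
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5


# An index of refraction of 1.5 gives the 4% lower bound quoted above.
f0 = f0_from_ior(1.5)
for cos_theta in (1.0, 0.5, 0.0):  # head-on, 60 degrees, grazing
    print(cos_theta, round(schlick_fresnel(f0, cos_theta), 3))
```

The steep rise toward grazing angles is one reason edges of a garment catch more light than its center. A renderer that ignores it tends to produce the flat look described in this section.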

What goes wrong when AI doesn't account for material properties:

A silk blouse rendered with cotton-like light diffusion looks flat and unconvincing. A leather jacket without proper specular highlights looks like matte plastic. These errors immediately signal to shoppers that something is artificial, triggering distrust before they've read a single product description.

The most advanced AI model photography systems handle this through fabric-aware rendering: the AI identifies material type from visual analysis of the input product image and applies the correct light interaction model before generating output. MetaModels.ai's real-time fabric draping technology takes this further, integrating garment material behavior into the generation process so fabric doesn't just drape realistically — it also catches and scatters light correctly.

The Skin Tone Lighting Challenge

Material rendering accuracy is only one dimension of the problem. Lighting that looks accurate on one skin tone can look completely different on another — and AI model photography platforms must handle both with equal precision. This is simultaneously a quality issue and an inclusivity issue.

Research evaluating 4,000 AI-generated images across four leading AI models found significant skin tone bias: overall representation was 89.8% light skin versus 10.2% dark skin. The study was conducted in a dermatological context, but similar biases can be expected wherever AI fashion photography builds on the same kinds of foundation models.

For brands relying on AI models with diverse skin tones, lighting must be equally accurate across all representations. Accenture research found that 41% of shoppers shifted at least 10% of their business away from a retailer that did not reflect the importance of inclusion and diversity. Brands that get lighting right across all skin tones protect both conversion rates and customer trust.

Scaling Lighting Consistency Across Large Product Catalogs

For fashion brands managing hundreds or thousands of SKUs across multiple seasons, individual image quality is not enough on its own; uniformity matters just as much. Every product page needs to feel like it belongs to the same visual family. Inconsistent lighting across a catalog is visually jarring and undermines brand authority.

By setting a lighting reference standard and applying it across an entire product upload, brands can ensure that a sweater photographed in January and shorts photographed in July read as part of the same cohesive catalog. This is a key practical advantage AI model photography holds over traditional photoshoots, which are inherently subject to session-to-session variability.

That variability also carries a real cost. Basic white-background shots run £20–60 per image, while styled lifestyle images cost £80–400+. Post-production retouching adds another £12–60 per image. When studio rental, shipping, and coordination are factored in, effective per-image costs reach approximately £70 even when the quoted rate is £30.
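For a rough sense of how these ranges add up, the following sketch sums the per-image shoot and retouching figures quoted above. The catalog size of 500 images is a hypothetical number chosen for illustration, and the lifestyle upper bound uses 400 even though the quoted range is open-ended ("400+").

```python
def per_image_range(shoot, retouch):
    """Add two (low, high) cost ranges in GBP."""
    return (shoot[0] + retouch[0], shoot[1] + retouch[1])


RETOUCH = (12, 60)            # post-production per image, from the text
WHITE_BACKGROUND = (20, 60)   # basic shot per image, from the text
LIFESTYLE = (80, 400)         # styled shot per image; upper end is "400+"

IMAGE_COUNT = 500  # hypothetical catalog size, not a figure from the article

for label, shoot in (("white background", WHITE_BACKGROUND),
                     ("lifestyle", LIFESTYLE)):
    low, high = per_image_range(shoot, RETOUCH)
    print(f"{label}: GBP {low}-{high} per image, "
          f"GBP {low * IMAGE_COUNT:,}-{high * IMAGE_COUNT:,} "
          f"for {IMAGE_COUNT} images")
```

These totals leave out studio rental, shipping, and coordination, so they understate the effective cost described above.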

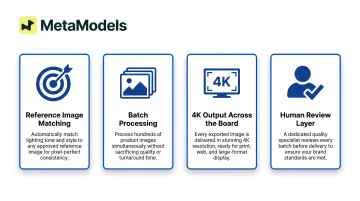

Key features to look for in an AI platform:

- Reference image matching — Upload a "gold standard" image; the AI applies that exact lighting profile to every subsequent SKU

- Batch processing — Submit multiple products at once without manually adjusting settings per upload

- 4K output across the board — Consistent resolution prevents quality mismatches between early and late catalog additions

- Human review layer — Platforms like MetaModels.ai combine bulk upload capability with human-reviewed output, catching garment accuracy issues before they reach your product pages
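Whichever platform you choose, catalog consistency can also be spot-checked on your side. The sketch below assumes you already have a couple of lighting measurements per image (here a mean brightness and a highlight warmth ratio) and flags SKUs that drift too far from the "gold standard" reference. The profile keys, SKU names, and the 10% tolerance are all hypothetical.

```python
def lighting_drift(reference, candidate):
    """Largest relative difference between two lighting profiles.

    Each profile is a dict of positive numbers. The keys used here are
    illustrative, not a platform schema.
    """
    return max(abs(candidate[k] - reference[k]) / reference[k]
               for k in reference)


def flag_outliers(reference, catalog, tolerance=0.10):
    """Return SKUs whose lighting differs from the reference by more than `tolerance`."""
    return [sku for sku, profile in catalog.items()
            if lighting_drift(reference, profile) > tolerance]


gold = {"brightness": 0.62, "warmth": 1.10}
catalog = {
    "sweater-jan": {"brightness": 0.60, "warmth": 1.12},
    "shorts-jul": {"brightness": 0.78, "warmth": 0.95},
}
print(flag_outliers(gold, catalog))
```

In this example only the July image is flagged, because its brightness is roughly 26% above the reference. A check like this does not replace human review, but it narrows down which images a reviewer should look at first.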

Why AI Lighting Still Needs Human Oversight

Even the most sophisticated AI lighting systems can produce errors that automated quality checks miss—a highlight in the wrong position, a shadow that doesn't match the background, or a fabric rendered with incorrect material properties. These errors are small individually but significant in aggregate, especially when they appear on high-traffic product pages.

A Stylitics survey of 411 shoppers found that confidence drops quickly when details look incorrect in AI-generated images. The uncanny details that reduced trust included buttons in the wrong color, a lack of natural wrinkles in clothing, incorrect fabric textures, and faces that appeared too airbrushed.

73% of e-commerce brands report that AI-generated product images fail quality standards without additional manual editing. Common failure points include:

- Color inaccuracy and inconsistent tone rendering

- Texture failures on materials like silk, denim, or knit

- Reflection errors on glossy or metallic surfaces

- Lost fine details such as stitching, embroidery, or hardware on luxury items

For brands where accuracy matters, the standard is a human-reviewed workflow: each AI output is checked against the original product before publishing. MetaModels.ai pairs automated generation with verification by fashion specialists who confirm color, shape, and proportions are correct before delivery — catching the errors purely automated pipelines miss.
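Part of that check, color accuracy, can be pre-screened automatically so reviewers spend their time on the borderline cases. The sketch below converts two sRGB colors to CIELAB and measures their CIE76 color difference, a standard perceptual distance. It is a generic illustration rather than a description of any platform's pipeline, and the `tolerance` of 5.0 is an assumed threshold you would tune for your own catalog.

```python
import math

D65_WHITE = (0.95047, 1.0, 1.08883)  # reference white for sRGB


def srgb_to_lab(rgb):
    """Convert an 8-bit sRGB color to CIELAB (D65)."""
    # Undo the sRGB gamma curve to get linear light.
    lin = []
    for c in rgb:
        c = c / 255.0
        lin.append(c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4)
    r, g, b = lin
    xyz = (
        0.4124564 * r + 0.3575761 * g + 0.1804375 * b,
        0.2126729 * r + 0.7151522 * g + 0.0721750 * b,
        0.0193339 * r + 0.1191920 * g + 0.9503041 * b,
    )

    def f(t):
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    fx, fy, fz = (f(v / w) for v, w in zip(xyz, D65_WHITE))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def delta_e(rgb_a, rgb_b):
    """CIE76 color difference: Euclidean distance in CIELAB."""
    return math.dist(srgb_to_lab(rgb_a), srgb_to_lab(rgb_b))


def needs_human_review(product_rgb, output_rgb, tolerance=5.0):
    """Flag an image when garment color drifts past the tolerance."""
    return delta_e(product_rgb, output_rgb) > tolerance
```

In practice you would sample the garment color from the same region of the source photo and the generated image, for example an averaged patch on the chest, and pass both to `needs_human_review`.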

Practical Steps to Maximize Lighting Accuracy with AI Model Photography

Start with the Best Possible Source Image

The AI can only work with the information it receives. Input images that are sharp, well-lit, and show the full garment from multiple angles give the system more data to correctly identify material type, texture, and existing light conditions. Dark, blurry, or heavily shadowed source images lead to lighting errors — often ones that are difficult to correct in later iterations.

Use Reference Images and Specific Prompt Language

When the platform supports it, provide a lighting reference image — an existing photo with the lighting style you want — or use specific language describing the lighting environment:

- "Soft diffused natural light from a north-facing window"

- "Hard directional light from upper left with minimal fill"

- "Rembrandt lighting with warm color temperature"

Generic prompts produce generic lighting. The more precisely you describe the environment, the closer the output matches your brand's visual style.
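One way to keep prompt language consistent across a team is to store the lighting description as structured fields and generate the text from them. The sketch below is a hypothetical helper, not part of any platform's API: the `LightingSpec` class and its field names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LightingSpec:
    """Hypothetical structure for describing a lighting setup in words."""
    quality: str                  # e.g. "soft diffused" or "hard directional"
    source: str                   # e.g. "light from upper left"
    color_temperature: str = ""   # e.g. "warm" or "cool"; optional
    fill: str = ""                # e.g. "minimal fill"; optional

    def to_prompt(self) -> str:
        """Join the non-empty fields into one comma-separated phrase."""
        parts = [f"{self.quality} {self.source}"]
        if self.color_temperature:
            parts.append(f"{self.color_temperature} color temperature")
        if self.fill:
            parts.append(self.fill)
        return ", ".join(parts)


BRAND_LIGHTING = LightingSpec(
    quality="hard directional",
    source="light from upper left",
    fill="minimal fill",
)
print(BRAND_LIGHTING.to_prompt())
# prints "hard directional light from upper left, minimal fill"
```

Reusing one stored spec for every upload means the wording never drifts between team members or seasons, which supports the same catalog consistency goal as a reference image.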

Treat the First Output as a Starting Point

Regenerating with refined instructions is a normal part of the workflow, not a sign the technology has failed. Common refinements include:

- Adjusting shadow direction to match a real-world light source

- Correcting highlight intensity on reflective or textured fabrics

- Specifying the background lighting environment more precisely

Each pass narrows the gap between the AI output and your intended result.

Frequently Asked Questions

Can AI generate photorealistic images?

Yes, modern AI systems can produce highly photorealistic fashion images when trained on large professional photography datasets. Photorealism depends on input quality, accurate fabric rendering, and correct lighting synthesis. Platforms with human review layers like MetaModels.ai help ensure output meets professional standards before delivery.

How does AI create realistic faces?

AI generates realistic faces by learning statistical patterns from large datasets of human facial imagery, producing new faces that reflect real proportions, skin texture, and feature variation. In AI model photography, platforms use curated libraries of AI-generated model faces rather than real identities, which keeps the imagery realistic while avoiding the consent and licensing issues that come with using a real person's likeness.

Why does lighting accuracy matter so much for fashion e-commerce?

Lighting is how shoppers perceive fabric texture, garment weight, color accuracy, and fit—all the signals they use to evaluate a purchase without touching the product. Inaccurate lighting collapses these signals, increasing uncertainty and driving both cart abandonment and returns.

What are the most common lighting mistakes in AI-generated fashion images?

The most frequent errors are mismatched color temperature between model and background, shadows that don't align with the implied light source, and fabric rendered with incorrect reflective properties—such as silk looking matte or leather lacking specular highlights. These inconsistencies signal low production quality, eroding shopper confidence before they reach checkout.

How does AI handle lighting differently across fabric types?

Advanced AI model photography systems identify material type from the input image and apply fabric-specific light interaction models—understanding that silk reflects sharply while cotton diffuses softly. Without material differentiation, output looks flat—denim, silk, and cotton all rendered with the same diffused finish regardless of how each fabric actually behaves under light.

Can AI model photography replace traditional fashion photo shoots entirely?

AI model photography is most powerful as a complement to traditional shoots—handling catalog scale, product variations, and rapid content production. Traditional photography remains the standard for hero campaigns and editorial work where human emotion and spontaneity matter. MetaModels.ai covers the high-volume middle ground: consistent, human-reviewed product imagery at scale.