Introduction

Picture this: You've just wrapped your spring collection—bias-cut silk blouses, raw denim jackets, and ribbed knitwear—and now you're staring down a £25,000 photoshoot budget just to capture how each fabric actually drapes, reflects light, and moves on a model. Three weeks from booking to delivery. Multiply that across every colorway and SKU, and you're looking at a content bottleneck that holds back launches and drains budgets before a single garment ships.

Packshots show what a garment looks like flat. What they can't show is how silk catches light on a shoulder, or how denim holds its shape at the hip. Until recently, only a studio shoot could bridge that gap. AI fabric rendering changes that equation.

What follows covers how AI fabric rendering on models works, how it differs from traditional photography, and what it means for fashion brands that need photorealistic imagery at scale—without the studio overhead.

TL;DR:

- AI fabric rendering converts flat packshots into photorealistic on-model images using physics-based simulation

- On-model photography costs £100–500+ per shot; AI brings that to 10–20% of the cost with same-day turnaround

- AI systems simulate fabric-specific drape, weight, and light behavior for material-accurate results

- Human review catches rendering artifacts before images go live, ensuring garment accuracy

What Is AI Fabric Rendering on Models?

AI fabric rendering on models is the process of using machine learning and physics-based simulation to generate photorealistic images of garments—with accurate fabric behavior—draped on virtual or AI-generated human models, without a physical photoshoot.

This isn't general 3D rendering. While some platforms render fabric on furniture or abstract forms, AI fabric rendering on models is specifically about how garments interact with body shape, pose, and movement—producing on-model e-commerce imagery at scale.

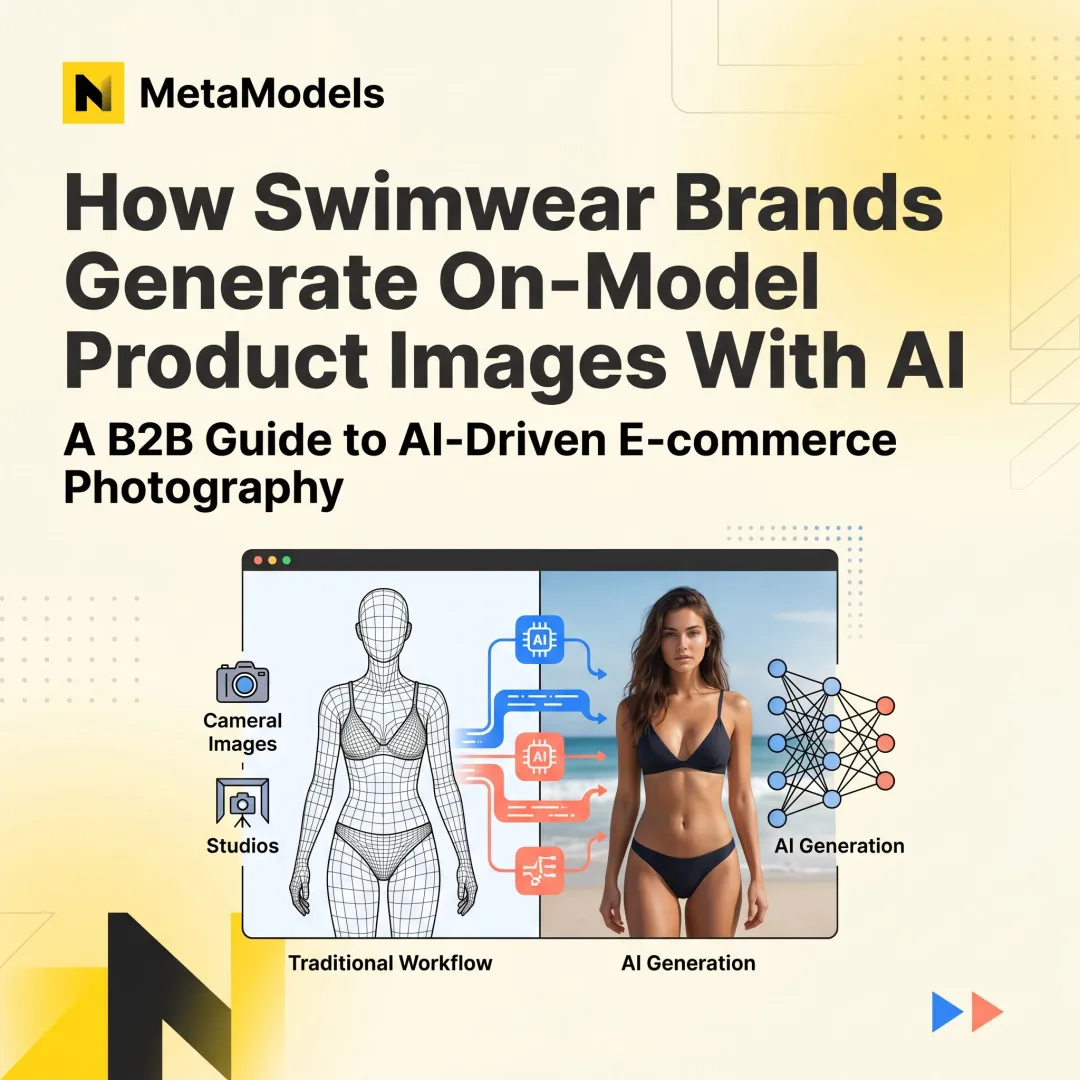

The technology works from two inputs: a flat packshot or 2D pattern (garment data) and an AI-generated human form (virtual model). From these, the AI infers how the fabric would realistically drape, fold, and reflect light on a body.

The AI disciplines involved include:

- Neural networks trained on fabric physics simulate stiffness, weight, friction, and elasticity

- Material recognition models classify fiber type and surface properties

- Generative image synthesis composes the final photorealistic output

The output for e-commerce teams: ready-to-use, high-resolution on-model imagery that rivals traditional studio photography, produced in minutes rather than days. MetaModels.ai, for example, converts packshots into on-model content without physical models, studio coordination, or royalty fees.

Why Traditional Garment Photography Falls Short for Fabric Accuracy

Even well-lit studio shoots struggle to capture a fabric's true character. Capturing the way bias-cut silk moves, or how a heavy wool coat sits on the shoulder, consistently across a full catalog requires constant reshooting.

means £125,000-250,000 per year on traditional photography alone.

The Catalog Scaling Problem

When you have hundreds of SKUs across multiple colorways, fabric types, and silhouettes, traditional photography creates a bottleneck. Every variant needs its own shoot, model booking, and post-production pass. The traditional workflow—booking a photographer three weeks out, shipping samples, shoot day, then a week for edited images—runs 3-4 weeks from start to finish.

That's imagery arriving after launch, not before it.

How AI Renders Fabric Realistically on Virtual Models

Fabric Physics Simulation

AI models simulate physical fabric properties—drape, weight, stiffness, and elasticity—by training on datasets of real-world material behavior. The AI learns how 200-gsm cotton behaves differently from lightweight chiffon when draped over a shoulder or gathered at the waist.
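To make the stiffness idea concrete, here is a minimal position-based dynamics sketch. It is not how any particular platform works. A 2D strip of cloth is clamped at one end (think of a shoulder seam) and left to hang under gravity. The `FabricPreset` class, its `bend_stiffness` values, and the "denim-like" and "chiffon-like" labels are hypothetical illustrations rather than measured fabric data. The sketch models bending resistance only, not weight or friction.

```python
import math
from dataclasses import dataclass


@dataclass
class FabricPreset:
    # Hypothetical, illustrative parameters (not measured fabric data).
    name: str
    bend_stiffness: float  # 0 = no bending resistance, 1 = fully enforced per iteration
    damping: float = 0.98  # fraction of velocity kept each step


def _project(pos, i, j, rest, k, pinned):
    """Move particles i and j toward distance `rest`, scaled by stiffness k.
    Particles with index < pinned are clamped (treated as infinitely heavy)."""
    wi = 0.0 if i < pinned else 1.0
    wj = 0.0 if j < pinned else 1.0
    dx = pos[j][0] - pos[i][0]
    dy = pos[j][1] - pos[i][1]
    d = math.hypot(dx, dy)
    if wi + wj == 0.0 or d == 0.0:
        return
    corr = k * (d - rest) / (d * (wi + wj))
    pos[i][0] += wi * corr * dx
    pos[i][1] += wi * corr * dy
    pos[j][0] -= wj * corr * dx
    pos[j][1] -= wj * corr * dy


def simulate_drape(preset, n=12, seg=0.05, steps=300, dt=1 / 60, iters=20, g=-9.81):
    """Return the final height of the strip's free end (more negative = more drape)."""
    pinned = 2  # the first two particles clamp the strip horizontally
    pos = [[i * seg, 0.0] for i in range(n)]
    prev = [p[:] for p in pos]
    for _ in range(steps):
        # Damped Verlet integration for the free particles
        for i in range(pinned, n):
            x, y = pos[i]
            vx = (x - prev[i][0]) * preset.damping
            vy = (y - prev[i][1]) * preset.damping
            prev[i] = [x, y]
            pos[i] = [x + vx, y + vy + g * dt * dt]
        # Constraint projection: stretch between neighbors, bending across every other particle
        for _ in range(iters):
            for i in range(n - 1):
                _project(pos, i, i + 1, seg, 1.0, pinned)
            for i in range(n - 2):
                _project(pos, i, i + 2, 2 * seg, preset.bend_stiffness, pinned)
    return pos[-1][1]
```

Running `simulate_drape(FabricPreset("denim-like", 1.0))` and `simulate_drape(FabricPreset("chiffon-like", 0.0))` shows the stiffer preset's free end staying higher, while the zero-stiffness strip hangs roughly straight down. Production systems replace these hand-set numbers with parameters learned from or fitted to real fabric behavior.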

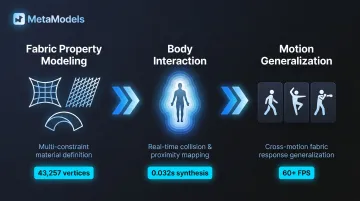

Recent research demonstrates the sophistication of these systems. Zhang & Li's Neural Garment Dynamic Super-Resolution model uses Mesh-Graph-Net architecture to extract super-resolution features from coarse garment dynamics, garment-body interactions, and inter-layer fabric interactions. The system synthesizes high-resolution frames (43,257 vertices) in approximately 0.032 seconds—roughly 5x faster than full physics-based simulation.

The model accounts for:

- Stretching and shearing within each mesh triangle, and bending via the dihedral angles between adjacent triangles

- Body interaction using signed distance fields and ray casting to identify nearest body vertices

- Generalization to unseen motions (dancing, walking, boxing) and unseen body shapes
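Two of the geometric ingredients in that list can be sketched in a few lines. This is a hedged illustration under simplifying assumptions, not Zhang & Li's implementation: the bending measure is the unsigned angle between the normals of two triangles sharing an edge, and the body is approximated by a single analytic capsule (say, an upper arm) rather than a signed distance field computed from a body mesh. All function names and parameters here are hypothetical.

```python
import math


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _add(a, b):
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def _scale(a, s):
    return (a[0] * s, a[1] * s, a[2] * s)


def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def _length(a):
    return math.sqrt(_dot(a, a))


def dihedral_angle(p0, p1, p2, p3):
    """Bending angle between triangles (p0, p1, p2) and (p1, p0, p3), which share edge p0-p1.
    0 means the pair is flat; larger values mean a sharper fold."""
    n1 = _cross(_sub(p1, p0), _sub(p2, p0))
    n2 = _cross(_sub(p0, p1), _sub(p3, p1))
    return math.atan2(_length(_cross(n1, n2)), _dot(n1, n2))


def bending_energy(theta, rest_theta, k_bend):
    """Quadratic penalty for deviating from the rest angle (k_bend is a hypothetical stiffness)."""
    return 0.5 * k_bend * (theta - rest_theta) ** 2


def _closest_on_segment(p, a, b):
    ab = _sub(b, a)
    t = max(0.0, min(1.0, _dot(_sub(p, a), ab) / _dot(ab, ab)))
    return _add(a, _scale(ab, t))


def capsule_sdf(p, a, b, radius):
    """Signed distance from p to a capsule (segment a-b with the given radius): negative inside."""
    return _length(_sub(p, _closest_on_segment(p, a, b))) - radius


def push_outside(p, a, b, radius, margin=0.0):
    """Move a cloth vertex that penetrates the capsule back onto its surface (plus margin)."""
    c = _closest_on_segment(p, a, b)
    offset = _sub(p, c)
    d = _length(offset)
    if d >= radius + margin or d == 0.0:
        return p  # already outside, or no well-defined push direction
    return _add(c, _scale(offset, (radius + margin) / d))
```

A learned model would predict these quantities, or corrections to them, rather than evaluate them analytically, but the inputs are the same kind of geometric features: fold angles between faces and distances to the body surface.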

Pose and body shape add another layer of complexity. The AI must account for how garments interact with the specific geometry of the virtual human—wrinkle formation at joints, fabric pooling at the hem, tension across fitted areas. Qiu's hybrid method combining PhysX 5.0 with LSTM networks and Conditional GANs achieves real-time rendering at over 60 FPS, reducing rendering delay from 33ms to 22ms (a 33% reduction) while improving accuracy by 25-30% over physics engines alone.

Light and Surface Interaction

Neural rendering systems replicate material-specific light behavior using learned reflectance models rather than manual shader tuning. Each fabric has a distinct optical "fingerprint":

- Silk produces anisotropic sheen that shifts with viewing angle

- Velvet absorbs directional light to create perceived depth

- Denim reflects diffusely with visible weave texture at close range

Physically Based Rendering (PBR) material sets drive this process, generating albedo, normal, roughness, and height maps. Height maps in particular simulate realistic surface details—bumps, wrinkles, weave texture—without requiring heavy underlying geometry.
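Deriving a normal map from a height map is what lets a flat surface read as weave or wrinkle texture under light. The sketch below uses finite differences on a small grid of height values. The `strength` parameter and function names are hypothetical, and real pipelines differ in details such as the green-channel (Y) sign convention, which varies between engines.

```python
import math


def height_to_normals(height, strength=1.0):
    """Convert a 2D height grid (list of rows) into unit surface normals (nx, ny, nz)."""
    rows, cols = len(height), len(height[0])
    normals = []
    for y in range(rows):
        row = []
        for x in range(cols):
            # Central differences inside the grid, one-sided differences at the borders
            xl, xr = max(x - 1, 0), min(x + 1, cols - 1)
            yu, yd = max(y - 1, 0), min(y + 1, rows - 1)
            dhdx = (height[y][xr] - height[y][xl]) / max(xr - xl, 1) * strength
            dhdy = (height[yd][x] - height[yu][x]) / max(yd - yu, 1) * strength
            nx, ny, nz = -dhdx, -dhdy, 1.0
            inv_len = 1.0 / math.sqrt(nx * nx + ny * ny + nz * nz)
            row.append((nx * inv_len, ny * inv_len, nz * inv_len))
        normals.append(row)
    return normals


def encode_rgb(normal):
    """Pack a unit normal into 8-bit RGB, as stored in a normal-map texture."""
    return tuple(round((c * 0.5 + 0.5) * 255) for c in normal)
```

A flat height grid produces normals pointing straight out of the surface, stored as the familiar light-blue `(128, 128, 255)`. Any slope in the height data tilts the normals, so the renderer shades the weave as if it had real relief without extra geometry.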

Why consistent lighting matters: E-commerce images need to match across a catalog. AI rendering locks in studio-equivalent lighting setups, so every garment, regardless of fabric type, renders under the same conditions and the catalog keeps a consistent, professional look. Without matched digital lighting, digital garments can look like "stickers" pasted onto a scene.
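A simple automated check can catch lighting drift across a batch. Garment brightness legitimately differs (a black coat versus a white shirt), so this hypothetical sketch measures a patch of studio backdrop that should look identical in every shot and flags SKUs whose backdrop brightness strays from the catalog median. The function names, the backdrop-patch approach, and the 5% tolerance are assumptions for illustration, and the Rec. 709 weights are applied to gamma-encoded values as a rough brightness proxy rather than true luminance.

```python
from statistics import median

# Rec. 709 luma weights, applied here to gamma-encoded RGB as a rough brightness proxy.
REC709_WEIGHTS = (0.2126, 0.7152, 0.0722)


def mean_luma(pixels):
    """Average luma of a list of (r, g, b) values in the 0-1 range."""
    wr, wg, wb = REC709_WEIGHTS
    return sum(wr * r + wg * g + wb * b for r, g, b in pixels) / len(pixels)


def lighting_outliers(backdrop_patches, tolerance=0.05):
    """Return SKUs whose backdrop brightness deviates from the catalog median
    by more than `tolerance` (expressed as a fraction of the median)."""
    lumas = {sku: mean_luma(patch) for sku, patch in backdrop_patches.items()}
    reference = median(lumas.values())
    return sorted(
        sku for sku, value in lumas.items() if abs(value - reference) > tolerance * reference
    )
```

Flagged SKUs would go back for re-rendering under the shared lighting preset rather than being corrected image by image.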

Human Review for Garment Accuracy

Even sophisticated AI can produce artifacts—fabric that clips through the body, incorrect fold patterns, or unrealistic texture scale. That's where human review becomes essential. Catching these errors before images go live protects both garment accuracy and brand trust.

At MetaModels.ai, every AI image and video is reviewed by human fashion specialists before delivery, with details including color, shape, and proportions checked and corrected.
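Part of a color check can be automated so reviewers see the likeliest problems first. A common approach, sketched here as an assumption rather than MetaModels.ai's actual process, converts a reference swatch color and the rendered garment color to CIELAB and measures their CIE76 color difference (ΔE*ab). The 3.0 threshold and the `needs_color_review` helper are hypothetical; teams choose tolerances to suit their own standards.

```python
import math

# sRGB (D65) to CIE XYZ matrix, and the D65 reference white
_M = (
    (0.4124, 0.3576, 0.1805),
    (0.2126, 0.7152, 0.0722),
    (0.0193, 0.1192, 0.9505),
)
_WHITE = (0.95047, 1.0, 1.08883)


def _linearize(c):
    """Undo the sRGB transfer curve for one channel in the 0-1 range."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def _f(t):
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29


def srgb_to_lab(rgb255):
    """Convert an 8-bit sRGB triple to CIELAB (L*, a*, b*)."""
    lin = [_linearize(c / 255) for c in rgb255]
    x, y, z = (sum(m * c for m, c in zip(row, lin)) for row in _M)
    fx, fy, fz = _f(x / _WHITE[0]), _f(y / _WHITE[1]), _f(z / _WHITE[2])
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def delta_e76(rgb_a, rgb_b):
    """CIE76 color difference: Euclidean distance in CIELAB."""
    return math.dist(srgb_to_lab(rgb_a), srgb_to_lab(rgb_b))


def needs_color_review(reference_rgb, rendered_rgb, threshold=3.0):
    """Hypothetical gate: flag renders whose color drifts past `threshold`."""
    return delta_e76(reference_rgb, rendered_rgb) > threshold
```

A check like this only narrows the queue. Shape, proportion, and drape errors still need the human specialist review described above.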

Fabric-Specific Rendering Challenges AI Now Solves

Historically Difficult Fabrics

Three fabric categories have historically broken standard rendering pipelines:

- Sheer and semi-transparent materials (organza, chiffon): Require subsurface light transport modeling to capture how light passes through and diffuses within the fabric — something standard opaque-surface simulations miss entirely.

- Pile fabrics (velvet, bouclé): Need micro-geometry simulation. Sattler et al.'s Bidirectional Texture Function (BTF) approach captures view- and illumination-dependent appearance by mapping fabric probes onto 3D garment models, enabling directional nap behavior that standard BRDF models can't reproduce.

- Structured fabrics (denim, canvas): Must retain shape with limited drape. AI assigns stiffness parameters and bending resistance to maintain each fabric's characteristic body.
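For sheers, an approximation far simpler than full subsurface transport already shows why layering matters. The sketch below applies the Beer–Lambert law: each layer transmits a fraction exp(-σd) of the light behind it, so stacked layers multiply. The absorption coefficient `sigma`, the thickness, and the colors are hypothetical values, and the model ignores scattering inside the fabric, which is exactly what fuller subsurface methods add.

```python
import math


def layer_transmittance(sigma, thickness):
    """Fraction of light passing through one fabric layer (Beer-Lambert law)."""
    return math.exp(-sigma * thickness)


def see_through_color(background, fabric_color, sigma, thickness, layers=1):
    """Blend what lies behind `layers` stacked sheer layers with the fabric's own color.
    Ignores in-fabric scattering; a rough approximation only."""
    t = layer_transmittance(sigma, thickness) ** layers
    return tuple(t * bg + (1.0 - t) * fc for bg, fc in zip(background, fabric_color))
```

Where chiffon gathers into three overlapping layers, `see_through_color(..., layers=3)` moves much closer to the fabric's own color than a single layer does, which is why gathered sheers look more opaque than flat panels.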

Colorway and Pattern Rendering

When a fabric exists in 12 colorways or as a large-scale print, AI can render every variant on-model from a single garment geometry pass—eliminating the need to reshoot the same style across colors. For brands launching dozens of SKUs per season, that collapses weeks of reshoots into a single production run.
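Under a strong simplifying assumption, the colorway step can be sketched as intrinsic recoloring: if each pixel of a matte, single-color garment is its base color multiplied by a shading term, the shading (folds, shadows) from one render can be reused for every other colorway. This is a hypothetical illustration, not how any named platform does it. Specular highlights, sheen, and printed patterns break the assumption and need the material-aware rendering described earlier.

```python
def recolor(render, base_albedo, new_albedo):
    """Re-tint a render of a matte, single-color garment.

    Assumes each pixel = albedo * shading per channel (a Lambertian assumption),
    so dividing out the original albedo recovers the shading, which is then
    multiplied by the new colorway.
    """
    out = []
    for pixel in render:
        channels = []
        for value, old, new in zip(pixel, base_albedo, new_albedo):
            shading = value / old if old > 0 else 0.0
            channels.append(min(1.0, shading * new))  # clamp to the displayable range
        out.append(tuple(channels))
    return out
```

Because the expensive part (drape and shading) is computed once, each additional colorway costs only a per-pixel multiply under this assumption.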

Current Limitations

These capabilities have real boundaries. Current AI fabric rendering struggles with fabric friction, because the graph-based models lack the features needed to describe this property accurately. AI-generated video can also show product warping when models move, which rules it out for most commercial use cases today.

Hybrid pipelines — pairing physics-based simulation with generative AI — are the current workaround, alongside mandatory human review before any content goes live.

The Business Impact: From Packshots to Photorealistic On-Model Imagery

Workflow Transformation

The packshot-to-on-model pipeline works like this: A brand uploads a flat lay or ghost mannequin image, the AI maps the garment onto a selected virtual model with accurate fabric rendering, and the output is a publish-ready on-model image at up to 4K resolution.

Platforms like MetaModels.ai enable this conversion without model bookings, studio logistics, or royalty fees. The process eliminates traditional photography overhead while maintaining photorealistic output quality.

Cost and Speed Advantage

Reported savings vary. AI-generated imagery has been reported to reduce e-commerce production costs by up to 70% compared with traditional studio shoots, while other estimates put AI-generated PDP imagery at 10–20% of traditional photography costs, with turnaround measured in hours instead of weeks.
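The percentages are easier to judge against a concrete catalog. The inputs below are hypothetical, chosen for illustration: 200 SKUs, 3 colorways each, 4 shots per variant, and £150 per shot (inside the £100–500 range from the TL;DR). The AI share uses the 10–20% range quoted in this section.

```python
def traditional_cost(skus, colorways, shots_per_variant, cost_per_shot):
    """Every SKU-colorway variant needs its own set of shots."""
    return skus * colorways * shots_per_variant * cost_per_shot


def ai_cost_range(traditional, low_share=0.10, high_share=0.20):
    """AI-generated imagery priced at 10-20% of the traditional cost."""
    return traditional * low_share, traditional * high_share


studio = traditional_cost(skus=200, colorways=3, shots_per_variant=4, cost_per_shot=150)
low, high = ai_cost_range(studio)
print(f"Studio: £{studio:,}  AI: £{low:,.0f}-£{high:,.0f}")
# prints "Studio: £360,000  AI: £36,000-£72,000"
```

The multiplication is the point: adding one colorway to every SKU raises the studio bill by a third, whereas a single geometry pass reused across colors avoids most of that growth.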

Adoption is accelerating: Hugo Boss has been using AI imagery across e-commerce platforms since 2023. Peek & Cloppenburg, Etro, and Revolve have actively adopted AI for e-commerce content.

Catalog Scalability Benefit

With AI rendering, a brand can produce on-model imagery for an entire seasonal collection, across all fabrics, silhouettes, colorways, and size ranges, at once. This is especially valuable for e-commerce platforms that need fresh imagery at launch, not weeks after.

Fabric Rendering Quality and Returns

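Accurate product imagery matters. Commonly cited estimates put online returns at 30% or more of all products ordered, while other surveys rank clothing as the highest-return category at 24%. The two figures come from different sources and are not directly comparable, but both point to a costly problem.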

Critically, 22% of consumers return products because the product "looks different" in real life than it did on the website. When shoppers see how a fabric actually drapes and fits on a model body, they develop more accurate purchase expectations—reducing returns driven by fabric appearance or fit surprises.

3D product visualization has been reported to reduce returns by 40%, which suggests that accurate fabric representation has a measurable impact.

Inclusive Representation as a Compounding Benefit

75% of consumers globally say that a brand's diversity and inclusion reputation influences their purchase decisions, according to Kantar's Brand Inclusion Index survey of 23,000+ people across 18 countries.

AI model libraries that include diverse body types, ethnicities, and demographics mean fabric rendering accuracy can be validated across the full range of consumers a brand actually serves—not just one body shape.

In practical terms, this delivers two compounding advantages:

- Meets consumer demand for representation without booking multiple models per SKU

- Confirms drape, fit, and fabric behavior across body types before a product goes live

What to Look for in an AI Fabric Rendering Solution

Fabric Accuracy Benchmarks

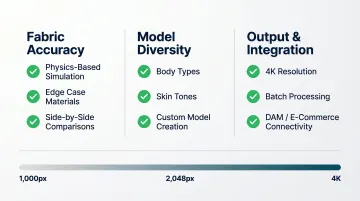

Evaluate platforms on how well they handle your specific fabric types — not just cotton basics, but edge cases like sheers, metallics, and heavy outerwear. Ask for side-by-side comparisons with actual garments before committing.

The core question: does the platform use physics-based simulation that ingests CAD patterns and assigns real-world physical properties, or pure generative AI that optimizes for visual appeal but may produce unrealistic fit? The distinction matters for catalog accuracy.

Model Diversity and Customisation

Fabric rendering accuracy is only useful if it holds across your full customer base. Look for platforms with a library of AI models spanning body types, skin tones, and demographics — plus the ability to create custom models that match your brand's specific aesthetic or target customer.

Output Quality and Workflow Integration

Look for platforms that produce ready-to-post imagery (minimum 1080p, ideally up to 4K), support batch processing for large catalogs, and connect directly to your existing e-commerce and digital asset management (DAM) workflows without requiring manual rework at each step.

E-commerce resolution standards to meet:

- Amazon requires images at least 1,000 pixels on the longest side for zoom functionality

- Shopify recommends square product images at 2,048 x 2,048 pixels

- Maximum upload sizes typically cap at 5,000 x 5,000 pixels
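A pre-upload check against those numbers is easy to automate. The sketch below encodes the three guidelines as listed; reading Shopify's recommendation as "square and at least 2,048 px" is an assumption, the function names are hypothetical, and platform limits change, so current documentation should be checked before relying on it.

```python
def check_resolution(width, height):
    """Compare an image's pixel dimensions against the guidelines listed above."""
    return {
        "amazon_zoom": max(width, height) >= 1000,  # longest side at least 1,000 px
        "shopify_square": width == height and width >= 2048,  # square, at least 2,048 px
        "within_5000_cap": width <= 5000 and height <= 5000,
    }


def fit_within_cap(width, height, cap=5000):
    """Scale dimensions down (keeping the aspect ratio) so neither side exceeds `cap`."""
    longest = max(width, height)
    if longest <= cap:
        return width, height
    return width * cap // longest, height * cap // longest
```

A 4K UHD frame (3840 x 2160) passes the Amazon zoom and upload-cap checks but is not square, so it would need a square crop or padding to follow the Shopify recommendation.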

For brands that need to meet these standards consistently at scale, MetaModels.ai delivers ready-to-publish content up to 4K resolution across all subscription tiers, with human fashion specialist review on every output to confirm garment accuracy.

Frequently Asked Questions

How does AI fabric rendering on models differ from traditional packshot photography?

Packshots show the garment flat or on a mannequin. AI fabric rendering places the garment on a realistic virtual model with accurate drape and light behavior, producing on-model imagery without a physical photoshoot or studio booking.

Can AI accurately render different fabric types like silk, denim, or knitwear on a model?

AI systems trained on material-specific physics datasets replicate the distinct light behavior and drape characteristics of each fabric type. Very complex materials — metallics, sheers, heavily embellished outerwear — may require additional fine-tuning or human review.

How does real-time fabric draping technology work for e-commerce imagery?

AI maps garment geometry onto a virtual human model and simulates how the fabric falls and folds based on its physical properties — weight, stiffness, elasticity. The result is a photorealistic image ready for e-commerce use.

Does AI-rendered fabric on models look realistic enough for online shoppers?

Leading platforms can produce imagery that is difficult to distinguish from studio photography, especially when combined with human quality review. Accurate fabric texture and drape give shoppers a true sense of the garment, which supports purchase confidence.

What types of garments benefit most from AI fabric rendering on models?

Fluid, draped garments (dresses, blouses, knitwear) show the greatest visual difference between a flat packshot and on-model AI rendering. These categories benefit most in e-commerce because shoppers need to see how the fabric moves and drapes on a body.

How does accurate fabric rendering on models help reduce e-commerce return rates?

22% of consumers return products because they "look different" in real life than they did on the website. When shoppers can see how a fabric actually drapes and fits on a model body, that gap narrows, reducing returns driven by appearance or fit surprises.