Introduction

When a linen shirt appears creaseless or a chiffon blouse drapes perfectly taut, shoppers instinctively register something is wrong—even if they can't articulate it. This wrinkle realism gap is one of the most persistent flaws in AI-generated fashion imagery, and it undermines shopper trust and inflates return rates in ways that cut into real revenue.

Research from Stylitics found that shoppers specifically flagged "wrinkle-free fabrics that appeared unnatural" as a trust-eroding detail in AI fashion imagery, even when they couldn't identify other AI artifacts. The same survey revealed that 37% of shoppers become more careful about sizing and 30% expect to return the product when they learn AI imagery was used.

That trust gap widens when wrinkle rendering fails to reflect how fabric actually behaves — and no amount of production speed or cost savings offsets the damage of a high return rate.

Wrinkle accuracy has become a technical and commercial problem that separates content that converts from content that erodes brand equity. This article explores why wrinkle realism matters, what technology is solving it, and how brands should evaluate AI platforms for fabric accuracy.

TLDR

- Unrealistic wrinkle rendering erodes shopper trust and drives defensive shopping behavior—37% become more sizing-cautious when AI is disclosed

- Physics-based simulation models how fabric actually drapes on a body, producing structurally correct wrinkles rather than surface-level texture guesses

- Elastic and knit fabrics are the hardest to simulate accurately: stretch-force simulation errors for knits average 17.59%, making fabric-specific approaches essential

- Human review combined with AI simulation is the current standard for e-commerce-ready garment accuracy

Why Wrinkle Accuracy Is the Hidden Metric in AI Fashion Imagery

Wrinkles aren't a cosmetic detail; they're visual proof that fabric is behaving according to physics. The Stylitics survey of 411 shoppers confirmed that people notice when that proof is missing: respondents flagged "wrinkle-free fabrics that appeared unnatural" as a specific trust signal, criticizing AI images that "make things look too perfect."

The business risk is quantifiable. The National Retail Federation reported $849.9 billion in U.S. merchandise returns in 2025, with size and fit issues causing roughly 70% of fashion returns according to McKinsey data. When AI imagery overly smooths wrinkles or eliminates drape cues, shoppers lose the visual signals they use to assess fit and fabric weight—increasing the likelihood of "not as described" returns.

The trust gap becomes measurable when AI is disclosed. Stylitics data shows shoppers respond to AI model disclosure by:

- Scrutinizing sizing more carefully (37%)

- Checking return policies before purchasing (37%)

- Anticipating a return before they even buy (30%)

Poor wrinkle rendering widens this gap further.

The Glance Test vs. Purchase Confidence

Consider a packshot of a relaxed-fit cotton shirt placed on an AI model. If the fabric appears stiff and creaseless, shoppers lose confidence in how the garment will look on their own body — and an image that passes a quick glance fails to close the sale.

For fashion brands producing AI imagery at scale, wrinkle accuracy separates content that converts from content that undermines brand trust. The higher the price point or the more texture-dependent the fabric, the more this distinction matters.

Fabric behavior is one of the hardest visual attributes to generate reliably, even for leading general-purpose image generators. Pusan National University research found that DALL-E 3 implemented fashion design prompts correctly only 67.6% of the time, with fabric texture and abstract design concepts ranking among the most persistent failure points.

The Technology Powering Realistic Wrinkle Rendering

Two dominant technical approaches power AI wrinkle simulation: purely generative models that learn wrinkle patterns from image datasets, and physics-based simulation that models fabric as a material subject to real forces. The fundamental difference lies in how each approach "decides" where a wrinkle goes.

Physics-Based Simulation: Material Point Method

The Material Point Method (MPM) is one of the most physically accurate approaches available for garment dynamics. KAIST's MPMAvatar research demonstrates how MPM divides fabric into particle-level points and calculates deformation based on material properties, shape, and external forces.

How MPM works:

- Treats fabric as a continuum with direction-dependent physical properties

- Accounts for how fabric responds differently to in-plane stretching than to forces along its surface normal

- Uses collision handling algorithms to manage contact with complex body meshes

- Generates wrinkles based on actual force calculations, not learned patterns

This produces more consistent and physically plausible wrinkles than pattern-matching from training images alone. Critically, MPMAvatar achieves zero-shot generalization: it generates realistic garment motion for poses and interactions it has never seen in training. For AI fashion imagery, this means brands are not limited to systems that handle only a narrow range of poses or fabric interactions.
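The particle-based idea behind MPM can be illustrated with a minimal sketch of its first step, the particle-to-grid transfer, in which each material point spreads its mass onto nearby grid nodes before forces are solved there. This is a one-dimensional toy with linear weights, not MPMAvatar's solver; the function name `particle_to_grid_mass` and all of its parameters are assumptions made for illustration.

```python
import math

def particle_to_grid_mass(positions, masses, n_nodes, dx):
    """Scatter particle mass onto a 1D grid using linear (tent) weights.

    Hypothetical helper for illustration; real cloth MPM works in 3D and
    also transfers momentum and evaluates an anisotropic material model.
    """
    grid_mass = [0.0] * n_nodes
    for x, m in zip(positions, masses):
        base = int(math.floor(x / dx))   # grid node immediately to the left
        frac = x / dx - base             # fractional offset in [0, 1)
        # The two neighbouring nodes share the mass; weights sum to 1.
        for offset, weight in ((0, 1.0 - frac), (1, frac)):
            node = base + offset
            if 0 <= node < n_nodes:      # mass outside the grid is dropped
                grid_mass[node] += weight * m
    return grid_mass

# Two made-up particles on a four-node grid with unit spacing.
print(particle_to_grid_mass([0.25, 1.5], [2.0, 4.0], n_nodes=4, dx=1.0))
```

Because the two weights always sum to one, the total mass on the grid equals the total particle mass for particles inside the grid, which makes a quick sanity check for any transfer scheme.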

Gaussian Splatting for Multi-View Fabric Rendering

Physics simulation determines where wrinkles form. Gaussian Splatting handles how they look across angles, reconstructing 3D spatial representations of fabric from multi-view images. This is critical for e-commerce, where the same garment image must read accurately whether a shopper views it from the front, side, or at an angle.

Stanford and Meta's PGC (Physics-Based Gaussian Cloth) framework embeds Gaussians in mesh structures: Gaussians capture near-field details like fabric fuzziness, pilling, and fly-away fibers, while the mesh encodes far-field albedo.

Garments recovered from a single static pose generalize to novel motion via physics-based simulation. Brands can scale imagery across pose variations without additional re-capture sessions.

Deep Learning for Fabric-Specific Behavior

Convolutional neural networks predict fabric-specific behavior by training on distinct material types. Training data diversity determines whether the AI generates accurate material-specific wrinkles or applies a generic average. Each fabric has its own mechanical logic:

- Denim holds sharp, angular creases at stress points

- Silk drapes in smooth, flowing folds with minimal surface texture

- Knits stretch and conform rather than forming rigid folds

Zero-shot capability represents a major milestone. ETH Zurich and Adobe Research's transformer-based network with geodesic attention generalizes to unseen body motions and garment types, handling both tight-fitting and loose clothing without retraining. For fashion brands launching new product lines, that means accurate wrinkle rendering from the first image, with no additional model training required.

Fabric-Specific Wrinkle Challenges AI Must Overcome

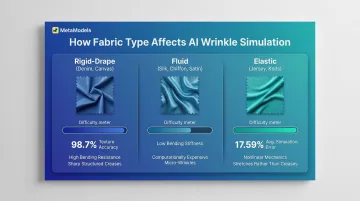

Not all fabrics wrinkle the same way. This is one of the core challenges for AI systems. Fabrics fall into three behavioral categories:

| Fabric Category | Wrinkle Behavior | Simulation Challenge |
|---|---|---|
| Rigid-drape (denim, canvas) | Hold sharp, structured creases | High bending resistance creates large folds; texture classification achieves 98.7% accuracy but drape simulation remains difficult |
| Fluid (silk, chiffon, satin) | Form soft, flowing folds | Extremely low bending stiffness produces fine, closely spaced wrinkles that are computationally expensive to resolve |
| Elastic (jersey, knits) | Stretch and recover rather than crease | Nonlinear, anisotropic mechanics; average stretch force error of 17.59% |

The Hardest Fabric Categories

Research from Hong Kong Polytechnic University quantifies the difficulty. Knitted and elastic fabrics present the greatest challenge because their mechanical behavior is nonlinear and anisotropic (the same fabric behaves differently depending on the direction and magnitude of force applied). Simulation errors average 17.59% ± 8.33% for stretch force and 16.84% for compression.
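A figure such as "17.59% ± 8.33%" is typically a mean and spread of per-sample percentage errors between simulated and measured forces. The sketch below shows one way to compute such a summary. The exact metric used in the cited research is not stated here, so the absolute-percentage-error definition, the function names, and the sample values are all assumptions.

```python
from statistics import mean, pstdev

def percent_errors(simulated, measured):
    """Absolute percentage error of each simulated force against its measurement.

    Assumes every measured value is nonzero.
    """
    if len(simulated) != len(measured):
        raise ValueError("simulated and measured must have the same length")
    return [abs(s - m) / abs(m) * 100.0 for s, m in zip(simulated, measured)]

def summarize_error(simulated, measured):
    """Return (mean, population standard deviation) of the percentage errors."""
    errors = percent_errors(simulated, measured)
    return mean(errors), pstdev(errors)

# Made-up stretch forces in newtons, for illustration only.
measured_force = [10.0, 20.0]
simulated_force = [12.0, 18.0]
avg, spread = summarize_error(simulated_force, measured_force)
print(f"{avg:.2f}% ± {spread:.2f}%")
```

Running the same summary per fabric category is a simple way to compare how a platform handles knits against woven or fluid fabrics.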

Thin, lightweight fabrics like chiffon create unpredictable micro-folds as they interact with body movement. Structured tailoring fabrics demand precise crease placement — trouser breaks, lapel rolls — that follow specific construction conventions rather than pure physics. Generic AI trained on limited fabric datasets generates "average" wrinkle behavior: visually plausible, but wrong for specific materials.

Platforms that ingest brand-specific fabric data or support custom material simulation profiles are the practical answer. Without that capability, garment accuracy stalls at plausible-looking approximations rather than true material fidelity.

How Human Review Elevates AI Wrinkle Quality

AI handles generation at scale, but human review catches garment-specific inaccuracies that automated systems miss. This hybrid model serves as the quality control layer bridging AI output and brand-ready imagery.

What Human Reviewers Check

Fashion specialists trained in wrinkle accuracy evaluate three core elements:

- Wrinkle placement against garment construction — seam lines physically prevent folds in certain locations that AI may ignore

- Fold behavior matching the actual fabric — a silk blouse drapes differently than denim, and the render needs to reflect that

- Stress points at pose-body intersections — shoulders, elbows, and hip areas where tension must be visualized accurately

Platforms like MetaModels.ai use human-reviewed AI images to ensure garment accuracy. Every image undergoes review by fashion specialists who verify color, shape, proportions, and fabric behavior before delivery.

The Cost-Benefit Case

The numbers behind hybrid AI-human workflows are hard to ignore:

- BoF and McKinsey project 30%+ productivity gains over the next five years from AI-driven fashion content production

- Stylitics documented luxury retailer Milaner achieving a 157% conversion rate increase and 40% engagement boost from AI on-model imagery

- 71% of shoppers cannot distinguish high-quality AI imagery from traditional photography — provided fabric texture, wrinkles, and fit are rendered accurately

That last point matters most. Human review is what closes the gap between "close enough" and conversion-ready. When wrinkle accuracy slips, so does brand trust — and the conversion rate follows.

Choosing an AI Fashion Platform That Gets Wrinkles Right

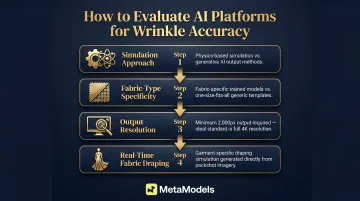

Technical Evaluation Criteria

Brands should evaluate platforms across four technical dimensions:

Simulation approach — Does the platform use physics-based simulation (MPM, FEM) or purely generative rendering? Physics-based systems produce structurally correct wrinkles for specific garment and fabric types.

Fabric-type specificity — Does it have fabric-specific models or a one-size approach? Generic wrinkle templates fail for specialized materials.

Output resolution — Wrinkle detail is lost below certain thresholds. For e-commerce, images should exceed 2,000 pixels on the longest side. Amazon recommends 1,600px minimum for zoom functionality; Shopify suggests 2,048 x 3,072px for fashion. True 4K resolution (3,840 x 2,160 or higher) preserves fine fabric details.

Real-time fabric draping — Can the platform simulate how a specific garment drapes on a model body based on its actual packshot, rather than applying generic wrinkle textures? Platforms designed specifically for fashion e-commerce — such as MetaModels.ai, with its real-time fabric draping technology and human-verified imagery pipeline — address this directly.
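The resolution thresholds listed above can be turned into a simple automated check on delivered images. This is a minimal sketch that takes pixel dimensions directly; the function name `resolution_report` and the returned keys are hypothetical, and the thresholds are the ones quoted in this article rather than values read from any marketplace API.

```python
AMAZON_ZOOM_MIN_PX = 1600    # longest side, per the Amazon guidance quoted above
ECOMMERCE_TARGET_PX = 2000   # longest side, this article's e-commerce threshold
UHD_4K = (3840, 2160)        # "true 4K" dimensions quoted above

def resolution_report(width, height):
    """Compare image dimensions in pixels against the article's thresholds."""
    longest = max(width, height)
    shortest = min(width, height)
    return {
        "zoom_ready": longest >= AMAZON_ZOOM_MIN_PX,
        "exceeds_ecommerce_target": longest > ECOMMERCE_TARGET_PX,
        # Orientation-agnostic: a portrait 2160 x 3840 image also counts as 4K.
        "is_4k_or_higher": longest >= max(UHD_4K) and shortest >= min(UHD_4K),
    }

# The Shopify fashion size mentioned above passes the first two checks only.
print(resolution_report(2048, 3072))
```

A check like this only confirms pixel count. Whether wrinkle detail actually survives at that size still needs a visual review under zoom.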

The Evaluation Process

Once you've identified your criteria, put them to the test. Request sample images across multiple fabric types — at minimum, a fluid fabric, a structured fabric, and a knit. Evaluate wrinkle realism under zoom at the resolution level your PDPs will display. A/B test conversion and return rates against traditional imagery.
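For the A/B comparison, one common way to judge whether a difference in conversion or return rate is more than noise is a two-proportion z-test. The sketch below is one possible approach, not a prescribed methodology; the function name and the sample counts are made up for illustration.

```python
import math

def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Two-sided z-test for a difference between two rates.

    Returns (z, p_value). Assumes both groups are non-empty and the
    pooled rate is strictly between 0 and 1.
    """
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts: A = traditional imagery, B = AI imagery.
z, p = two_proportion_z_test(120, 1000, 150, 1000)
print(f"z = {z:.3f}, p = {p:.4f}")
```

The same function works for return rates by passing returned orders as the "success" counts. Small samples or many simultaneous comparisons call for more careful statistics than this sketch provides.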

Platforms with fully automated processing plus manual editing options allow brands to course-correct without starting over. This flexibility matters most when specific garments require wrinkle adjustments post-generation.

Transparency and Disclosure

Brands that label AI-generated imagery build shopper trust. In the Stylitics survey, 59% of shoppers wanted clear labeling and interpreted it as a signal of honesty. Wrinkle accuracy is part of this trust equation: disclosed AI imagery that is demonstrably accurate strengthens credibility rather than undermining it.

The regulatory landscape is crystallizing. Three frameworks are already in effect or imminent:

- IAB AI Transparency Framework (released January 2026)

- EU AI Act Article 50, mandating transparency for AI-generated content

- New York State disclosure law for AI-generated human likenesses in commercial ads, effective June 2026

Build disclosure protocols now — requirements will only tighten from here.

Frequently Asked Questions

Why do AI-generated fashion images often show unrealistic wrinkles?

Most basic AI image generators learn wrinkle patterns statistically from training images rather than simulating actual physics. They produce "average" fabric behavior that looks plausible at a glance but fails to reflect how a specific fabric actually drapes, folds, or creases under real-world conditions.

How does fabric type affect AI wrinkle rendering accuracy?

Different fabrics behave according to distinct physical properties—rigid fabrics like denim hold sharp creases, fluid fabrics like silk form soft flowing folds, and knits stretch rather than crease. AI systems not trained on fabric-specific simulation data will apply generic wrinkle behavior that looks wrong to trained eyes and shoppers alike.

Can AI-generated wrinkle accuracy match professional fashion photography?

Advanced platforms combining physics-based simulation with human quality review are closing this gap significantly. Most shoppers in recent surveys cannot distinguish high-quality AI imagery from traditional photography, though execution quality varies widely between platforms.

How does poor wrinkle accuracy affect e-commerce return rates?

Inaccurate fabric rendering means shoppers receive garments that don't match what they saw in product images. Research shows 30% of shoppers anticipate returns when they know AI imagery was used, and improved wrinkle accuracy can help narrow that gap by making images reflect how garments actually look and fit.

What should brands look for when evaluating AI fashion tools for wrinkle realism?

Evaluate platforms against three criteria before committing:

- Simulation method: Physics-based or fabric-specific (not just generative rendering)

- Output resolution: 4K or equivalent, minimum 2,000px to render wrinkle detail

- Human review: A garment accuracy validation step before images go live

How does physics-based AI simulation differ from standard image generation for fabric rendering?

Where standard generative AI predicts pixels from training data patterns — plausible, but not physically grounded — physics-based simulation calculates fabric movement according to actual material properties and forces. The result is wrinkles that are structurally correct for a specific garment and fabric type, not just statistically average.