Introduction

A fashion brand invests in AI-generated catalog images, only to discover that the model's face shifts slightly across batches, skin tones render differently under varying lighting, and proportions drift between collections. What looked polished at the 20-SKU test phase becomes visually jarring at 200 SKUs—especially when images appear side-by-side on grid-view product pages. That's the consistency gap that breaks AI adoption at scale.

Maintaining a unified visual identity across hundreds of catalog SKUs requires controlling three elements simultaneously: model identity, set and environment, and garment rendering accuracy. These aren't separate technical problems—they interact, and solving one without the others still leaves your catalog looking fragmented.

TLDR:

- Visual inconsistency erodes shopper trust and brand identity even when individual images look good

- Catalog consistency depends on model identity, environment, and garment accuracy working together

- Reusable model libraries—not regenerating from scratch—enable sustainable catalog-scale consistency

- Human quality review remains essential; pure AI automation can't fully replace it

Why Visual Consistency Is the Hidden Driver of Catalog Performance

Visual consistency operates at the catalog level, not the image level. Individual image quality—no matter how high—cannot compensate for inconsistency across a product grid.

56% of users immediately explore product images as their first action on a product detail page, yet 25% of e-commerce sites provide insufficient image quality for visual evaluation. When model appearance, proportions, or lighting shifts between product pages, shoppers register subtle doubt even if they can't articulate why. Baymard Institute's usability testing found that users extend disappointment with poor images to the entire brand, perceiving the business as "cheap" or "unprofessional."

The conversion impact is measurable. Products with professional-quality photos show approximately 33% higher conversion rates compared to those with low-quality images. A documented rebrand using visual consistency tools produced a 39.7% increase in search visibility and a 13.5% jump in conversion rates, demonstrating that systematic visual consistency outperforms individually optimized assets.

Returns tell the same story. 22% of e-commerce returns are attributed to products looking different in person than they did online. Beyond that, 43% of consumers returned a product in the past year because pre-purchase information wasn't clear or accurate.

The National Retail Federation puts average retail return rates near 17%, costing the industry close to $900 billion annually. Garment image accuracy isn't a design preference — it directly affects bottom-line profitability.

A catalog is not a collection of individual images. It's a cumulative visual statement. When every SKU appears shot on a different-looking model under different lighting, the brand feels like a marketplace rather than a label. Consistency guides customers naturally through the catalog. Every visual inconsistency chips away at trust and brand recognition.

What Makes Consistency Hard to Scale with AI-Generated Models

AI image generation is probabilistic by nature. Without anchoring references, each new generation introduces variation in facial features, body proportions, skin tone rendering, and pose—even when given similar prompts. Unlike a traditional studio shoot, there's no fixed subject to return to.

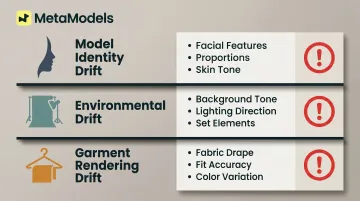

Three common points of drift break catalog consistency:

Model identity drift: Facial features, proportions, and skin tone shift between generations. The ConsistentID project traces this to coarse visual embeddings, cross-attention bleed between facial regions, and text-image prompt conflicts that erode identity over successive outputs.

Environmental drift: Background tone, lighting direction, and set elements shift between batches. AI struggles to accurately replicate nuanced lighting and the way light interacts with fabric textures, producing subtle but compounding inconsistencies.

Garment rendering drift: Fabric drape, fit, and color accuracy vary with input image quality and prompt phrasing. A VTONQA benchmark across 8,132 images from 11 virtual try-on models found four recurring artifact types: garment distortion, body inconsistency, blurring, and incomplete clothing transfer.

Prompt-only approaches fail at scale. Writing detailed text prompts can guide general aesthetics but cannot reliably reproduce the same model face, proportions, or set details across hundreds of images. Consistency requires structural reference anchoring, not just descriptive language.

Small inconsistencies that seem minor for a 20-SKU test become visually jarring across a 200-SKU catalog—especially on grid-view product pages where every image sits directly next to another.

The Three Pillars of Consistent AI Model Images for Catalogs

Pillar 1: Model Identity Anchoring

Model consistency starts with establishing a definitive base model image—a "reference anchor"—that captures the face, body type, age range, and aesthetic you want to maintain across all catalog images. This base image should be treated as a brand asset, not a one-off generation.

Two technical methods for using this anchor:

Re-dressing the same model using virtual try-on or packshot-to-model workflows. This is the fastest approach and maintains pose and proportions exactly. The same model is digitally "re-dressed" with each new garment, preserving complete visual consistency across SKUs.

Using the base image as a face reference for generating new poses and scenes. This allows variation in pose, angle, and context while preserving identity. Tools like IP-Adapter and ConsistentID support it by conditioning each generation on the reference image, so facial features stay fixed while pose and styling change.

When to use each approach:

- Use re-dressing when you need maximum consistency and speed across a large catalog with similar styling

- Use face reference when you need pose variation, different styling contexts, or lifestyle scenes while maintaining brand model identity
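Whichever method you use, it helps to measure how far each output has moved from the anchor. The sketch below is one possible check, not a prescribed method: it assumes you already have a face embedding (a numeric vector) for the anchor and for each generated image from a face-recognition model of your choice. The function names, the toy vectors, and the 0.8 threshold are all illustrative assumptions.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)

def flag_identity_drift(anchor_embedding, generated, threshold=0.8):
    """Return the SKU ids whose face embedding is too far from the anchor.

    `generated` maps a SKU id to that image's face embedding.
    The threshold is a placeholder and would need tuning on real data.
    """
    return [
        sku for sku, emb in generated.items()
        if cosine_similarity(anchor_embedding, emb) < threshold
    ]

# Toy 3-dimensional vectors standing in for real face embeddings.
anchor = [1.0, 0.0, 0.0]
batch = {
    "SKU-001": [0.9, 0.1, 0.0],  # points almost the same way as the anchor
    "SKU-002": [0.2, 0.9, 0.3],  # points in a clearly different direction
}
drifted = flag_identity_drift(anchor, batch)
print(drifted)
```

Images that land on the flagged list are candidates for regeneration from the anchor rather than manual retouching.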

Pillar 2: Set and Environment Consistency

Background and environmental consistency is as important as model identity for catalog cohesion. The same studio lighting temperature, backdrop color, and prop styling should repeat across all SKUs within a collection or season.

Encode the environment as a reference image where possible, not only as a prompt. Generic prompts like "studio background" produce drift. When a prompt is the only option, make it specific, for example "soft grey seamless backdrop with diffused overhead lighting, no props". Precise environment definitions, whether reference images or detailed prompts, produce more reproducible results.

Practical guidance: Save and document your signature set/environment reference image as a brand asset. Apply it consistently to each generation session. If environmental drift appears, regenerate from the original reference — editing drifted outputs compounds the inconsistency.
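Environmental drift can also be spotted numerically. As a rough sketch, assuming you can sample a handful of backdrop pixels from the reference image and from each new output (the sampling step depends on your image library and is left out), you could compare their average color. The function names and the tolerance of 10 are hypothetical, and plain RGB distance is a crude stand-in for a perceptual color-difference metric.

```python
def mean_rgb(pixels):
    """Average color of a list of (r, g, b) tuples."""
    n = len(pixels)
    if n == 0:
        raise ValueError("no pixels sampled")
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))

def backdrop_matches(reference_pixels, candidate_pixels, tolerance=10.0):
    """True if the candidate backdrop's mean color is within `tolerance`
    (Euclidean distance in 0-255 RGB space) of the reference backdrop."""
    ref = mean_rgb(reference_pixels)
    cand = mean_rgb(candidate_pixels)
    distance = sum((r - c) ** 2 for r, c in zip(ref, cand)) ** 0.5
    return distance <= tolerance

# Illustrative samples: a neutral grey reference, one matching output,
# and one output whose backdrop has shifted warm.
reference = [(200, 200, 200), (202, 202, 202)]
on_spec = [(198, 199, 200), (204, 203, 202)]
warm_shift = [(220, 200, 180)]

print(backdrop_matches(reference, on_spec))
print(backdrop_matches(reference, warm_shift))
```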

Pillar 3: Garment Accuracy and Fabric Rendering

Garment accuracy is the pillar most directly tied to return rates and shopper trust. When the rendered garment doesn't match what arrives at the door — wrong drape, off color, poor fit — return rates climb.

Input image quality for the garment directly determines rendering accuracy. Clear, well-lit flat-lay or packshot images showing all detail are essential. Poor input quality compounds errors across the generation pipeline.

Getting garment rendering right requires evaluating three things when choosing a platform:

- Input image quality — clear flat-lays or packshots with full detail visible

- Physics-based draping — systems that model how fabric actually falls, not just texture-map a flat image onto a silhouette

- Human review checkpoints — catches garment accuracy errors before images go live

Real-time fabric draping technology is where the biggest accuracy gaps appear between platforms. Physics-based draping systems that model actual fabric behavior have reported 0.38 cm mean absolute error in body measurement and 87.4% accuracy in style matching. Lower-tier tools instead texture-map a flat product image onto a model silhouette, which cannot capture how a specific fabric actually falls.

The VTONQA benchmark shows how much room for error remains: overall quality scores range from 26.26 to 66.35 on a 0-100 scale, with lower-body garments significantly harder to render accurately. Even the best-performing system averages only about 66 out of 100. An average score is not a failure rate, but it does indicate that a meaningful share of generated images will fall short of catalog quality.

A Practical Workflow for Catalog-Scale Consistency

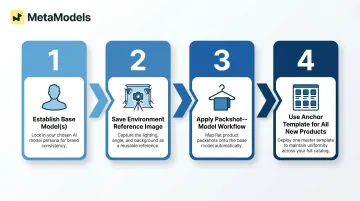

Sequential setup process:

- Establish and save your brand's base model(s) — one generation per model type (e.g., women's, men's, plus-size, children's)

- Establish and save your signature set/environment reference image — document lighting, backdrop color, and styling elements

- Use packshot-to-model workflows to apply each new garment to the saved model, using both the garment image and the environment reference as inputs

- Treat the saved model and environment references as a stable template: new products are added to the template, not regenerated from scratch

Batch processing advantage:

Once the anchor template is established, entire collections can be processed in bulk. Upload all packshots, apply the same model and set references, and generate at scale without manually configuring each SKU.
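A minimal sketch of what "apply the same model and set references" can look like in practice is a job manifest: one entry per packshot, every entry pointing at the same two anchor files. The `GenerationJob` class, the `build_batch` function, the `.png` naming convention, and the use of the filename as the SKU are assumptions for illustration. Nothing here calls a real generation API.

```python
from dataclasses import dataclass
from pathlib import Path

@dataclass(frozen=True)
class GenerationJob:
    sku: str
    garment_image: Path
    model_reference: Path
    set_reference: Path

def build_batch(packshot_dir, model_reference, set_reference):
    """Create one job per packshot, all sharing the same anchor references."""
    jobs = []
    # Sorting keeps the manifest order stable between runs.
    for packshot in sorted(Path(packshot_dir).glob("*.png")):
        jobs.append(GenerationJob(
            sku=packshot.stem,  # assumes files are named like SKU-001.png
            garment_image=packshot,
            model_reference=Path(model_reference),
            set_reference=Path(set_reference),
        ))
    return jobs
```

Because the anchor paths are passed once and copied into every job, no individual SKU can silently pick up a different model or set.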

The cost and throughput advantages are significant. AI-generated images run approximately $0.50–$1.50 per image, compared to $5,000+ for a single traditional photoshoot, with output reaching up to 2,000 final images per day. Brands applying this approach have reported:

- Up to 70% reduction in traditional production workflows

- Thousands of SKUs processed within a 24-hour turnaround

- 90% time savings compared to manual editing

- 100% consistency in backgrounds and image quality

Those numbers only hold if the underlying model and environment stay consistent — which is where platform infrastructure matters. MetaModels.ai provides a ready-to-use library of diverse AI models across ethnicities, body types, and demographics, alongside custom model creation matched to a brand's specific identity. Build the anchor template once, and it carries through every new collection without reconfiguration.

Building a Reusable AI Model Library for Your Brand

A model library is a brand asset — treat it like one. At its simplest, it's a saved collection of base model images paired with reference images and prompt structures that reliably reproduce the intended look. Each entry should document:

- The model's face, body type, and styling direction

- Approved reference images used as anchors

- Prompt structures that have produced consistent results

- Environmental and lighting standards tied to that model

Document it, version it, and make it accessible to everyone producing catalog content.
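One way to make "document it, version it" concrete is to store each library entry as a small structured record. The sketch below is an assumption about how such a record might look, not a standard format: the field names mirror the list above, and `revised` returns a new entry with the version number increased so earlier versions stay untouched.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ModelLibraryEntry:
    model_id: str
    version: int
    description: str  # face, body type, and styling direction
    anchor_images: list = field(default_factory=list)
    prompt_templates: list = field(default_factory=list)
    environment_notes: str = ""  # lighting and backdrop standards

    def to_json(self):
        """Serialize the entry so it can be stored and shared."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    def revised(self, **changes):
        """Return a new entry with the changes applied and the version bumped."""
        data = asdict(self)  # deep copy, so the original lists are not shared
        data.update(changes)
        data["version"] = self.version + 1
        return ModelLibraryEntry(**data)

# Hypothetical entry for a women's-wear base model.
entry = ModelLibraryEntry(
    model_id="womens-01",
    version=1,
    description="Base model for the women's line",
    anchor_images=["anchors/womens-01-front.png"],
    environment_notes="Soft grey seamless backdrop, diffused overhead light",
)
updated = entry.revised(
    anchor_images=entry.anchor_images + ["anchors/womens-01-side.png"]
)
```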

Brands representing diverse customer bases need multiple models in their library — and this is where consistency standards earn their value. Apply the same environmental treatment, lighting approach, and garment accuracy requirements to every model you add. The catalog holds together visually even when featuring different people because the production standards stay constant across all of them.

When a generation starts to drift — face looks different, proportions shift — regenerate from the original anchor references rather than editing the drifted image. Preserving the anchor library matters more than salvaging any single output.

Quality Control: The Step Most Brands Skip

Even well-anchored AI systems occasionally produce images with subtle garment inaccuracies—wrong button count, unnatural drape, slightly off color—that won't be obvious at the prompt stage but will be noticed by customers. At 200+ SKUs, a small error rate produces meaningful quality issues.
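A quick calculation shows why a small error rate matters at this scale. The 1% rate and the one-image-per-SKU count below are illustrative assumptions, not measured figures, and the second function assumes errors occur independently from image to image.

```python
def expected_flawed(images, error_rate):
    """Expected number of flawed images in a batch."""
    return images * error_rate

def prob_at_least_one_flaw(images, error_rate):
    """Chance that a batch contains at least one flawed image,
    assuming each image fails independently at the same rate."""
    return 1 - (1 - error_rate) ** images

images = 200       # one image per SKU, for illustration
error_rate = 0.01  # hypothetical 1% per-image error rate

expected = expected_flawed(images, error_rate)
p_any = prob_at_least_one_flaw(images, error_rate)
print(f"expected flawed images: {expected:.1f}")
print(f"chance of at least one flaw: {p_any:.0%}")
```

Under these assumptions the batch is expected to contain about two flawed images, and the chance that it contains none is small.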

Human review is the quality control layer that separates catalog-ready AI imagery from quick-generation outputs. Each generated image needs to be checked against the original garment for accuracy before it goes live — not as a final polish step, but as a core part of the production pipeline.

MetaModels.ai builds this verification into every order as a standard step, ensuring garment accuracy is confirmed before delivery.

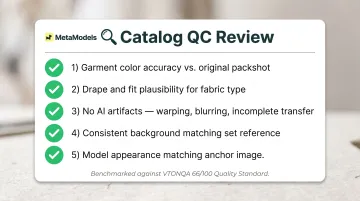

A reliable quality review covers:

- Garment color accuracy against original packshot

- Drape and fit plausibility for the fabric type

- No visible AI artifacts (warping, blurring, incomplete clothing transfer)

- Consistent background matching the set reference

- Model appearance matching the anchor image
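The checklist above can be recorded per image so that nothing goes live with an unchecked item. This is a sketch of one possible structure; the class and field names are hypothetical and simply mirror the five review points.

```python
from dataclasses import dataclass, fields

@dataclass
class ReviewChecklist:
    color_matches_packshot: bool = False
    drape_and_fit_plausible: bool = False
    no_visible_artifacts: bool = False
    background_matches_set_reference: bool = False
    model_matches_anchor: bool = False

    def failed_checks(self):
        """Names of the checks that have not passed."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    @property
    def approved(self):
        """An image is approved only when every check has passed."""
        return not self.failed_checks()

# Example: everything passes except the model identity check.
review = ReviewChecklist(
    color_matches_packshot=True,
    drape_and_fit_plausible=True,
    no_visible_artifacts=True,
    background_matches_set_reference=True,
    model_matches_anchor=False,
)
print(review.approved, review.failed_checks())
```

Defaulting every field to `False` means an image is treated as unapproved until a reviewer explicitly confirms each point.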

These checks matter more than most teams expect. The VTONQA study found that even the best-performing of the virtual try-on models it evaluated averages only about 66 out of 100 on overall quality, and lower-body garments remain a consistent weak point. Without structured human review, the resulting errors will reach your catalog.

Frequently Asked Questions

How accurate is AI in product photography?

With high-quality garment inputs, fabric draping technology, and human review, AI can achieve near-photorealistic accuracy. Fully automated pipelines without quality checks still risk subtle garment or fit errors.

How do you keep the same AI model consistent across a full catalog?

Use a single base model image as a permanent reference anchor for every new generation. Apply it through re-dressing (virtual try-on) for same-pose consistency or face reference for varied poses, rather than generating a new model from prompts each time.

Can AI-generated model images replace traditional photoshoots for fashion catalogs?

AI can handle the majority of catalog SKU imagery at a fraction of traditional photoshoot cost, but brand-defining hero content and campaign imagery often still benefit from traditional shoots.

What image quality do I need to upload for consistent AI catalog results?

Garment inputs should be sharp, well-lit, and show the full product without cutoffs. Flat-lay or mannequin packshots on a plain background tend to produce the most accurate AI model renderings.

How do fashion brands maintain model diversity while keeping catalog consistency?

Brands should build a model library with multiple anchor models representing different demographics, ethnicities, and body types—each held to the same environmental and quality standards—so the catalog feels unified in aesthetic even when featuring varied representation.

Are AI-generated catalog images accepted on major e-commerce platforms?

Most major platforms, including Shopify, Amazon, and Etsy, accept AI-generated product images provided they accurately represent the actual product. Honesty in product representation, not the generation method, is what platform policies govern.