Inconsistent model representation undermines brand identity. When looks, body types, skin tones, and styling change from shoot to shoot, the brand reads as fragmented rather than cohesive, even when the clothing itself is perfect. That visual inconsistency quietly erodes the recognition and trust that drive purchasing decisions.

This guide explains exactly what AI virtual model tools are, how they work to maintain visual consistency, and why they have become practical infrastructure for brand-conscious fashion teams managing large catalogs, frequent drops, and multi-channel distribution.

TL;DR

- AI virtual model tools generate photorealistic model imagery from packshots without physical photoshoots, locking in brand-consistent visuals at scale

- Configure the model persona, styling variables, and output format once, and those parameters apply to every image batch automatically

- Brands can represent diverse body types, ethnicities, and demographics without the coordination cost of booking multiple models for separate shoots

- Human fashion specialists review every output for garment accuracy and quality before images go live

- Fashion teams scale content across e-commerce, social media, ads, and lookbooks without visual drift — regardless of output volume

What Are AI Virtual Model Tools?

AI virtual model tools are software platforms that use generative AI to place clothing and apparel onto photorealistic virtual human figures, producing model imagery without physical photoshoots. Instead of booking talent, renting studios, and coordinating styling teams, brands upload packshots and receive on-model images ready for publication.

Traditional model photography introduces visual variation at every stage: different models across shoots, changing stylist interpretations, variable lighting conditions, and inconsistent post-production editing. These cumulative variations make maintaining consistency across a large catalog expensive and difficult to sustain.

The numbers make the scale of the problem clear. A mid-sized brand averaging 300 styles across 4 collections produces approximately 4,800 SKUs annually, each requiring multiple images. Traditional shoots for this volume run £5,000 to £20,000 per collection, with model day rates alone costing £500 to £2,000.

AI virtual model tools are distinct from generic AI image generators like Midjourney or DALL-E. They are purpose-built for fashion, with specific features for garment draping accuracy, body representation, and brand-matched styling, rather than open-ended image generation from text prompts.

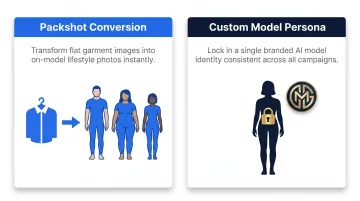

Tools differ by workflow, falling into two broad approaches:

- Packshot conversion: Flat product images are placed directly onto AI models from a curated library, covering diverse ethnicities, demographics, and body types

- Custom model personas: Brands design and lock a proprietary virtual model to their visual identity, enabling tighter consistency across all channels

Commercially available platforms include Lalaland.ai, WearView, Photoroom, Claid.ai, and MetaModels.ai.

Adoption is already underway at scale. Zara began using AI to generate new images of real-life models in different outfits, and H&M created digital twins for marketing campaigns.

How AI Virtual Model Tools Build Consistent Brand Identity

Consistency from AI virtual model tools comes from a defined sequence of configuration and generation stages, each controlling a different variable in the brand's visual output. Walking through that sequence shows where brand identity gets locked in and why it holds.

Model Selection and Brand Configuration

A brand begins by setting up its visual identity within the tool: selecting or creating the AI model persona that will represent the brand. This involves specifying physical characteristics, skin tone, body type, posture, and overall aesthetic that align with the brand's positioning.

This configuration stage is where brand decisions become technical parameters. Once locked in, the same model characteristics are applied to every image generated, removing the shoot-to-shoot variation that undermines visual consistency with real models.

MetaModels.ai's approach here gives brands two options:

- Curated library selection: Choose from pre-built AI models spanning diverse ethnicities, demographics, and body types

- Custom model creation: Commission a model built specifically to match brand identity, including custom styling, age range, and aesthetic tone

The two options suit different stages of brand maturity: library selection for brands still exploring the platform, custom creation for brands with a fully defined visual identity.
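To make the configuration stage concrete, here is a minimal Python sketch of how a locked persona might be represented and applied to a batch. The names `ModelPersona`, `GenerationJob`, and `build_batch` and their fields are hypothetical illustrations, not MetaModels.ai's or any other platform's API; the point is only that a frozen record, reused for every job, keeps each request on identical model characteristics.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelPersona:
    """Hypothetical persona record: brand decisions captured as fixed parameters."""
    persona_id: str
    skin_tone: str
    body_type: str
    posture: str
    aesthetic: str


@dataclass(frozen=True)
class GenerationJob:
    """Hypothetical render request pairing one packshot with the locked persona."""
    packshot_path: str
    persona: ModelPersona
    output_format: str


def build_batch(persona: ModelPersona, packshots: List[str],
                output_format: str = "jpeg") -> List[GenerationJob]:
    # Every job references the same persona object, so each image in the
    # batch is requested with identical model characteristics.
    return [GenerationJob(path, persona, output_format) for path in packshots]


# Usage: configure once, then reuse for any number of packshots.
persona = ModelPersona("house-model-01", "medium-deep", "size 12", "relaxed stance", "minimal")
jobs = build_batch(persona, ["tee_black.png", "tee_white.png", "chino_sand.png"])
```

Making the record frozen means a change of look has to be made deliberately, by creating a new persona (for example with `dataclasses.replace`), rather than by editing one in place partway through a catalog.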

Garment Application and Real-Time Rendering

The core operation maps the uploaded garment (whether a flat packshot or ghost mannequin image) onto the selected model using real-time fabric draping technology. The AI simulates how the material falls, folds, and moves on the body, preserving critical garment details including color, shape, texture, print, and proportions.

Accurate fabric rendering shows the product at its best in every image. Garment presentation becomes a repeatable standard rather than something that shifts with stylist judgment or model fit.

For specialized applications, the rendering technology handles:

- Stretch fabrics and compression fits for sportswear

- Moisture-wicking textures for activewear

- Shoulder fit, lapel detail, and trouser break for menswear

- Embellishment detail and color accuracy for luxury brands

Quality Control and Human Review

AI generation is followed by a review stage where human fashion specialists check outputs for garment accuracy, visual coherence, and brand compliance. They catch misrepresented fabric details, incorrect drape, or off-brand presentation before images are approved.

Without this step, AI-generated images can introduce subtle errors — stretched patterns, misplaced seams, unnatural fabric behavior — that quietly erode the professional perception the brand is building. Human review keeps AI speed from coming at the cost of accuracy.

MetaModels.ai reviews every AI image and video for details including color accuracy, shape, proportions, and garment-specific elements before delivery, ensuring that quality remains consistent regardless of production volume.
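The review stage can be pictured as an approval gate. The sketch below assumes a hypothetical checklist built from the criteria named above (color accuracy, shape, proportions, garment details, brand compliance); it is not any platform's actual rubric, and in practice the pass/fail judgments come from the human specialists, with code only recording and enforcing them.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical checklist modeled on the criteria named above.
REVIEW_CHECKS = ("color_accuracy", "shape", "proportions", "garment_details", "brand_compliance")


@dataclass
class ReviewResult:
    image_id: str
    checks: Dict[str, bool] = field(default_factory=dict)  # reviewer verdict per check
    notes: List[str] = field(default_factory=list)         # e.g. "stretched print at hem"

    def approved(self) -> bool:
        # Approved only if every required check was recorded as passing;
        # a check that was never recorded counts as a failure.
        return all(self.checks.get(name, False) for name in REVIEW_CHECKS)


def split_for_delivery(results: List[ReviewResult]) -> Tuple[List[str], List[str]]:
    """Separate image IDs into (approved for delivery, sent back for correction)."""
    approved = [r.image_id for r in results if r.approved()]
    rejected = [r.image_id for r in results if not r.approved()]
    return approved, rejected
```

Treating a missing check as a failure means an image that skipped part of the review is held back rather than delivered.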

Scalable Output Across Brand Channels

The process ultimately produces a library of brand-consistent model images, ready to deploy across e-commerce product pages, social media, ads, and lookbooks — all featuring the same model persona, styling aesthetic, and image quality standard.

When customers see the same model presentation at every touchpoint, the brand reads as cohesive and intentional, and that visual consistency builds recall and trust over time. MetaModels.ai delivers ready-to-post content at up to 4K resolution with end-to-end production management, maintaining that standard as brands scale across products, regions, and seasons.

Why Brand Visual Consistency Is a Business Advantage

Visual consistency directly affects brand recall. A 5-year longitudinal study by System1 and the IPA, covering 56 brands, 4,000+ ads, and 600,000 respondents, found that consistent brands produce 27% more "very large brand effects"—significant uplifts in awareness, favorability, and action intent. That same study found consistent brands report double the "very large profit gains" of inconsistent ones.

A brand that looks identical whether a customer encounters it on Instagram, a product detail page, or a digital ad signals operational competence and quality. Visual fragmentation—mismatched models, inconsistent styling—signals the opposite, weakening both trust and perceived brand value. Research by Lucidpress and Demand Metric found consistent branding can increase revenue by up to 33%, with 68% of respondents citing it as a driver of at least 10% revenue growth.

Maintaining a fixed AI model persona removes the per-shoot cost of sourcing models who match the brand's aesthetic, along with the post-production time needed to reconcile visual differences across multiple models. Standard e-commerce shoots run £1,200 to £8,000 per day, with per-image costs reaching £187 once studio hire, models, styling, and post-production are factored in. AI generation removes most of these recurring shoot costs, freeing the creative team for strategy, campaigns, and brand development.
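The per-image range implied by these day rates can be checked with simple arithmetic. This sketch assumes the roughly 30 usable images per production day cited in the speed comparison later in this guide and spreads the whole day rate evenly across them; real budgets also vary with shot count, retouching depth, and location.

```python
def per_image_cost(day_rate_gbp: float, usable_images_per_day: int) -> float:
    """Spread a full shoot-day rate evenly across the usable images it yields."""
    return day_rate_gbp / usable_images_per_day


IMAGES_PER_DAY = 30  # assumption: usable images from one full production day

low = per_image_cost(1_200, IMAGES_PER_DAY)   # 40.0
high = per_image_cost(8_000, IMAGES_PER_DAY)  # about 266.67

# Day rate that would produce the quoted £187 per image at the same output.
implied_day_rate = 187 * IMAGES_PER_DAY       # 5610
```

At 30 images a day, £1,200 to £8,000 works out to roughly £40 to £267 per image, and the £187 figure corresponds to a day rate of about £5,610.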

Where AI Virtual Model Tools Fit Across Brand Touchpoints

AI virtual model tools sit at the content production stage of the brand workflow, after garments are photographed and before content is published. They bridge raw product assets and channel-ready visual content.

The same model imagery, or slight variations of it, can be adapted for:

- E-commerce product pages: Standardized model presentation optimized for conversion

- Social media: Lifestyle-oriented crops and compositions formatted for Instagram, Facebook, Pinterest, and TikTok

- Paid ads: Multiple size and format variations for testing ad creatives

- Lookbooks: Editorial-style sequences maintaining brand aesthetic

This multi-channel application is particularly valuable for teams managing large catalogs or multiple product drops. Rather than scheduling separate shoots per collection, brands can generate consistent model imagery for entire seasonal catalogs in parallel, without timelines growing to match.
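Adapting one master image to each channel's aspect ratio is, at its simplest, a cropping calculation. The Python sketch below computes the largest centered crop for each channel; the channel names and aspect ratios in `CHANNEL_ASPECTS` are illustrative assumptions, so check each platform's current image specifications before relying on them.

```python
from fractions import Fraction
from typing import Dict, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels

# Illustrative aspect ratios (width, height) per channel; assumptions, not platform specs.
CHANNEL_ASPECTS: Dict[str, Tuple[int, int]] = {
    "ecommerce_pdp": (3, 4),
    "instagram_feed": (4, 5),
    "tiktok": (9, 16),
    "pinterest": (2, 3),
    "square_ad": (1, 1),
}


def center_crop_box(width: int, height: int, aspect_w: int, aspect_h: int) -> Box:
    """Largest centered crop of a width x height image with ratio aspect_w:aspect_h."""
    if Fraction(width, height) > Fraction(aspect_w, aspect_h):
        # Source is wider than the target: keep the full height, trim the sides.
        crop_w, crop_h = height * aspect_w // aspect_h, height
    else:
        # Source is taller than (or equal to) the target: keep the full width, trim top and bottom.
        crop_w, crop_h = width, width * aspect_h // aspect_w
    left = (width - crop_w) // 2
    top = (height - crop_h) // 2
    return (left, top, left + crop_w, top + crop_h)


def plan_channel_crops(width: int, height: int) -> Dict[str, Box]:
    # Every channel crop is derived from the same master image, so the model
    # persona and styling are identical across all outputs.
    return {ch: center_crop_box(width, height, aw, ah) for ch, (aw, ah) in CHANNEL_ASPECTS.items()}


# Usage: plan crops for a hypothetical 3000 x 4000 portrait master image.
crops = plan_channel_crops(3000, 4000)
```

A centered crop is the simplest choice; for full-length model shots, a subject-aware crop that keeps the head and feet in frame would usually be preferable.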

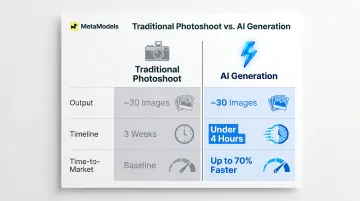

The speed advantage is concrete. Traditional photoshoots produce roughly 30 usable images from a full production day, with about 3 weeks from scheduling to delivery. AI generation reaches the same output in under 4 hours; across the full workflow, platform data puts the reduction in time-to-market at up to 70%.

| Metric | Traditional Photoshoot | AI Generation |
|---|---|---|
| Output | ~30 images | ~30 images |
| Timeline | ~3 weeks | Under 4 hours |
| Time-to-market | Baseline | Up to 70% faster |

Conclusion

AI virtual model tools build brand consistency by turning the variables that traditionally caused visual fragmentation—model availability, shoot conditions, stylist choices—into configurable, repeatable parameters that the brand controls from the start.

Fashion teams and e-commerce marketers who understand how these tools work at each stage, from model configuration through fabric rendering to human-reviewed output, are better positioned to deploy them as core production systems rather than cost-saving shortcuts. Because visual consistency shapes brand recall, trust, and revenue, locking it in through repeatable tooling is what separates brands that scale cleanly from those that drift.

Frequently Asked Questions

What is an AI virtual model tool?

An AI virtual model tool is software that generates realistic model imagery by placing apparel onto virtual human figures using generative AI. It eliminates the need for physical photoshoots, producing catalog-ready images from packshots in minutes.

How do AI virtual model tools ensure the same model appears consistently across different product images?

Consistency is maintained by locking a brand-configured model persona (physical attributes, posture, aesthetic parameters) into the system. Every image batch applies the same characteristics regardless of volume, removing the variation that comes with booking different real models.

Can AI virtual models be customized to reflect a specific brand's aesthetic or values?

Most tools let brands either select from curated, diverse model libraries or commission fully custom personas. Custom models can be built to match aesthetic tone, demographic representation, age range, body type, and visual positioning.

Are AI-generated model images legally safe to use in commercial campaigns?

Because the models are synthetic, AI virtual model platforms are generally built for commercial use without model releases or royalty obligations, although usage rights still depend on each platform's license terms. Regulations are evolving: the EU AI Act mandates synthetic content labeling from August 2026, and New York requires advertiser disclosure of synthetic performers from June 2026.

How do AI virtual model tools compare to traditional photoshoots for maintaining brand consistency?

Traditional photoshoots introduce variation through changing talent, stylists, and shooting conditions, making consistency at scale difficult to sustain. AI tools lock the core visual variables (model persona, styling, and output format) into repeatable parameters, producing more consistent output than conventional production while cutting recurring model and studio costs.

Which types of fashion and e-commerce brands benefit most from AI virtual model tools?

Brands with large product catalogs, frequent seasonal drops, multi-channel distribution needs, or limited production budgets benefit most. These are exactly the situations where traditional shoots become operationally difficult and expensive, and where AI tools deliver the clearest returns.