Introduction

In July 2025, H&M released a collection of denim campaign images set against the backdrop of London, Paris, Milan, New York, and Stockholm. The models looked effortlessly natural, styled in global fashion capitals with authentic lighting and expressions. For most viewers scrolling through social media, the images appeared completely ordinary—until they learned the truth: almost none of it was real.

The models weren't physically present in those cities. They weren't wearing those clothes during a traditional photoshoot. The entire campaign was created using "digital twins"—AI-generated replicas of 30 real models, built by feeding thousands of photographs into machine learning algorithms. As PetaPixel noted, the images were so realistic that "most people will scroll past without ever realizing what they just looked at was synthetic."

H&M's campaign isn't an outlier. Major retailers including Zara, Zalando, and Levi's have moved past experimentation — AI imagery is now embedded in their production pipelines at scale. The technology promises cost reduction, content scalability, and personalization, but it also raises hard questions about job displacement, model consent, and the future of creative work. Understanding how H&M built this system — and what it got right and wrong — matters for any brand weighing the same path.

TLDR

- H&M built AI digital twins from multi-angle photographs of 30 consenting real models, training machine learning systems to replicate their likenesses

- The business case centers on cost reduction, speed, scalability, and personalization — practical production advantages over traditional shoots

- Digital twins replicate specific real people (with consent), unlike fully synthetic AI models

- Critics warn of job losses for photographers, makeup artists, and hair stylists who rely on campaign work

- Zara, Zalando, Mango, and Levi's are already operating AI model imagery at production scale

What Are H&M's AI Digital Twins?

Defining Digital Twins in Fashion

A digital twin in fashion is an AI-generated replica of a specific, real model — built from the likeness of an actual person who consented to the process. H&M's program works this way. Generic AI models, by contrast, are entirely fictional personas with no real-world counterpart.

That distinction has concrete consequences. Digital twins preserve a real person's appearance, identity, and brand equity, which means they carry legal and commercial weight that synthetic personas do not.

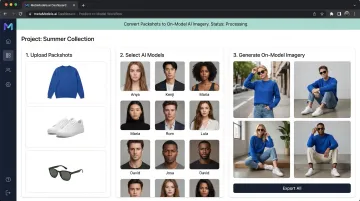

Digital twins require consent agreements, compensation terms, and ongoing likeness rights management. Platforms like MetaModels.ai take a different approach — using fully synthetic personas that require no talent releases or royalty payments.

What the Campaign Actually Looked Like

The campaign was a collaboration between H&M and Swedish photographer Johnny Kangasniemi. Key details:

- Collections: Seasonal denim, shot across New York, Paris, Milan, London, and Stockholm

- Scale: 30 models participated, each contributing their likeness under agreed terms

- Output: Imagery polished enough to be indistinguishable from traditional fashion photography

H&M's Chief Creative Officer, Jörgen Andersson, framed the initiative as a creative evolution: "It's not a question of man versus machine, I think it's man and machine. What the machine can do is basically amplify human creativity." The brand emphasized that AI is additive — traditional photography would continue alongside it.

Model Vanessa Moody, one of the 30 participants, described the collaboration as "professional, collaborative, and transparent," suggesting the process was conducted with clear terms and compensation.

Why the Images Are So Disruptive Culturally

PetaPixel noted that "most people will scroll past without ever realizing what they just looked at was synthetic." This invisibility is the core disruption. When AI-generated content becomes indistinguishable from reality, it raises questions about consumer trust, content authenticity, and disclosure obligations. If brands can create photorealistic imagery without physical shoots, what happens to the industry's understanding of "real" advertising?

How H&M Creates Digital Twins: The Technical Process

Step 1 — Data Capture

The process begins with an extensive photoshoot of the real model. According to PetaPixel's reporting, this involves "taking numerous photos of the model from different angles and in different lighting." This dataset—hundreds or thousands of images—serves as the training material for the AI model.

Step 2 — Machine Learning Training

H&M feeds this dataset into a generative AI model (likely a diffusion-based architecture; H&M hasn't disclosed specifics). The algorithm learns to reproduce the model's likeness across new poses, outfits, settings, and lighting scenarios that were never captured in the original shoot.

Step 3 — Image Generation and Iteration

Once trained, the system can generate new scenes as needed. A simple text prompt can place the digital twin on a Paris street corner at golden hour, with no physical travel, set construction, or reshooting required. Creative direction (styling, composition, mood) remains guided by human art directors throughout.
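To make the idea of a prompt-driven scene concrete, here is a minimal sketch of how an art director's brief could be turned into a text prompt. H&M has not published its tooling, so everything here is an assumption for illustration: the `SceneBrief` fields, the `twin_017` identifier, and the prompt wording are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneBrief:
    """Hypothetical creative brief; field names are illustrative only."""
    twin_id: str    # made-up identifier for a trained digital twin
    garment: str
    location: str
    lighting: str
    mood: str


def build_prompt(brief: SceneBrief) -> str:
    """Flatten the brief into a single text prompt for an image generator."""
    return (
        f"{brief.twin_id} wearing {brief.garment}, "
        f"{brief.location}, {brief.lighting}, {brief.mood} mood, "
        "editorial fashion photograph"
    )


brief = SceneBrief(
    twin_id="twin_017",
    garment="straight-leg denim jeans",
    location="Paris street corner",
    lighting="golden hour",
    mood="relaxed",
)
prompt = build_prompt(brief)
print(prompt)
```

Keeping the brief as structured fields rather than free text means the same garment can be re-rendered in another city or lighting setup by changing a single value, which is the kind of iteration this step describes.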

Step 4 — Human Review

Every image undergoes quality control. Human reviewers check garment accuracy, color fidelity, and brand consistency before images go live. AI can generate images quickly, but human oversight ensures the outputs meet commercial and creative standards — which is ultimately what makes the digital twin viable at H&M's scale.
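The review gate described above can be sketched as a simple approval rule: an image is published only if every required check was run and passed. This is an illustrative sketch, not H&M's actual system. The check names mirror the three criteria named in the text, while the `GeneratedImage` type and the image IDs are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Check names taken from the review criteria described in the text.
REQUIRED_CHECKS = ("garment_accuracy", "color_fidelity", "brand_consistency")


@dataclass
class GeneratedImage:
    image_id: str
    # Maps a check name to the human reviewer's verdict (True = passed).
    review: dict[str, bool] = field(default_factory=dict)


def is_approved(image: GeneratedImage) -> bool:
    """Approve only if every required check was recorded and passed."""
    return all(image.review.get(check) is True for check in REQUIRED_CHECKS)


def split_by_review(images: list[GeneratedImage]) -> tuple[list[str], list[str]]:
    """Return (approved_ids, rejected_ids), preserving input order."""
    approved = [img.image_id for img in images if is_approved(img)]
    rejected = [img.image_id for img in images if not is_approved(img)]
    return approved, rejected


batch = [
    GeneratedImage("paris_001", {"garment_accuracy": True, "color_fidelity": True, "brand_consistency": True}),
    GeneratedImage("milan_002", {"garment_accuracy": True, "color_fidelity": False, "brand_consistency": True}),
    GeneratedImage("london_003", {"garment_accuracy": True}),  # review incomplete
]
approved, rejected = split_by_review(batch)
print("approved:", approved)
print("rejected:", rejected)
```

Treating a missing check the same as a failed one is a deliberate choice in this sketch: an image that nobody finished reviewing should not go live by default.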

The Business Case: Why H&M Made This Move

Cost Reduction

Traditional fashion shoots require coordinating models, photographers, makeup artists, hair stylists, location scouts, travel, and studio time, often across multiple international cities. Forbes reports that professional fashion shoots cost £8,000-£24,000 per day in the UK, with luxury campaigns exceeding £40,000. After the initial data-capture shoot, AI removes most of these recurring costs: new images need no travel, no crew, and no studio booking.
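As a rough worked example of what those day rates imply, the sketch below estimates the shoot-day cost of a five-city campaign. The assumptions are loud ones: one shoot day per city, the quoted UK day rates applied to every city, and no travel or post-production costs included. It illustrates the arithmetic only and is not a figure H&M has reported.

```python
# Day-rate range quoted in the text (GBP per shoot day, UK figures).
DAY_RATE_LOW = 8_000
DAY_RATE_HIGH = 24_000


def shoot_cost_range(cities: int, days_per_city: int) -> tuple[int, int]:
    """Return (low, high) total cost in GBP for the given number of shoot days."""
    shoot_days = cities * days_per_city
    return shoot_days * DAY_RATE_LOW, shoot_days * DAY_RATE_HIGH


# Assumption: five cities, one shoot day each.
low, high = shoot_cost_range(cities=5, days_per_city=1)
print(f"£{low:,} to £{high:,}")  # £40,000 to £120,000
```

Under these assumptions, shoot days alone come to between £40,000 and £120,000 for a single five-city campaign, before travel is counted.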

Speed and Scalability

AI allows H&M to generate new visual content in hours rather than weeks. This enables real-time response to seasonal trends, regional campaigns, and e-commerce catalog updates at a scale no physical studio can match. Zalando reported that approximately 70% of its editorial campaign assets were AI-generated in Q4 2024, with content produced in under 24 hours.

Personalization and Inclusivity

AI also creates direct revenue opportunities. Brands can show the same product on different model types — varying age, body shape, and skin tone — depending on who is viewing the product page. Levi's cited diversity as a motivation for its partnership with Lalaland.ai, aiming to create "a more inclusive, personal and sustainable shopping experience."

The financial upside is substantial. McKinsey estimates generative AI could add £120 billion to £220 billion to apparel and luxury operating profits over the next 3-5 years — and companies excelling at personalization already see 40% higher revenues than those that don't.

Brand Control and Consistency

Digital twins give H&M precise control over how garments appear in every image. Physical shoots across different crews inevitably introduce variation: inconsistent lighting, unexpected fit differences, expression mismatches. With AI-generated imagery, those variables can be specified up front and held constant:

- Garment fit and draping stay consistent across every SKU

- Lighting and color treatment are locked to brand standards

- Expression and pose can be specified and replicated at scale

H&M Isn't Alone: The Broader Industry Trend

The Convergence of Major Brands

H&M is not experimenting in isolation. Multiple major retailers have publicly confirmed AI model imagery programs:

- Zalando: Reported that approximately 70% of editorial campaign assets were AI-generated in Q4 2024, with content produced in under 24 hours

- Zara: Uses AI to create new images of real models in different outfits to speed production and "complement existing processes"

- Mango: Released its first fully AI-generated campaign in July 2024 for its Teen line, available in 95 markets

- Levi's: Partnered with Lalaland.ai in March 2023 for body-inclusive AI avatars

These aren't copycat moves. Brands as different as Levi's and Zalando, operating in different markets and price points, have independently reached the same conclusion: AI-generated imagery fits their production needs now.

What the Data Shows

The numbers confirm what the brand announcements suggest. Zalando's 70% figure stands out as the clearest benchmark available — a majority of editorial assets for one of Europe's largest fashion platforms now come from AI pipelines. McKinsey research estimates up to 25% of AI's total value in fashion comes from the creative side, not backend logistics. The production and creative teams are where the transformation is actually landing.

The Controversy: Photographers, Models, and Ethics

The Core Fear from Industry Professionals

Digital twins reduce the recurring need for photoshoots. Photographers, makeup artists, and hair stylists who rely on repeat campaign work are understandably concerned. On Instagram, a commenter challenged photographer Kangasniemi directly: "H&M studio used to hire lots of different photographers to do e-commerce / lookbooks, so now by using AI, clearly they are starting to be replaced unless they are still using photographers' images in the mock-ups and paying them?"

Sara Ziff, founder of the Model Alliance, told CNN that the initiative "has the potential to replace a host of fashion workers—including make-up artists, hair stylists, and other creative artists in our community."

Consent and Compensation Questions

H&M's model Vanessa Moody described the process as "professional, collaborative, and transparent," suggesting models were compensated and consented to likeness use. The BBC reported that models would be "compensated for use of their digital twins in a similar way to current arrangements"—paid based on rates agreed upon by their agents.

What remains unclear publicly:

- Specific contract terms and fee structures

- Percentage splits or usage-based royalty models

- Whether compensation scales with the volume of AI-generated images produced

No industry-wide standard for AI likeness compensation currently exists — leaving both models and brands navigating uncharted legal territory.

The Counter-Argument: Technology as Amplifier

Photographer Johnny Kangasniemi framed AI as "an extra tool" rather than a replacement. He argued, "It's not something that I see is going to replace photography in any sense."

Digital cameras didn't kill photography — they shifted who did it and how. AI may follow the same pattern. The real concern isn't total replacement; it's the erosion of routine shoot volume, which is where many working photographers and crew earn their living.

What Fashion Brands Can Learn From H&M's Approach

The Strategic Question Is No Longer "If" But "How"

AI model imagery has moved from experimental to operational. The real question now is which implementation path fits your brand's scale, budget, and existing workflow.

The Investment Gap: Digital Twins vs. AI Model Platforms

H&M's approach—building bespoke digital twins of real models—requires significant investment and infrastructure that most mid-market or independent brands cannot replicate. It involves:

- Extensive photoshoots to capture training data

- Partnerships with tech firms (H&M worked with Swedish firm Uncut)

- Legal frameworks for model consent and compensation

- Ongoing quality control and human review systems

For brands without enterprise budgets, AI model platforms offer a more accessible alternative. Services like MetaModels.ai convert existing packshot photography into on-model AI imagery using curated libraries of diverse AI models. These platforms deliver cost-effective, human-reviewed visuals at volume—without building proprietary digital twins or managing model agreements.

The Decision Framework for Brands

| Brand Tier | Best Fit Approach | Key Benefit |
|---|---|---|
| Large enterprise | Custom digital twin pipelines | Full control over model identity and exclusivity |
| Mid-market / independent | AI model platforms (e.g., MetaModels.ai) | Ready-to-post content, diverse representation, no royalties |

Mid-market and independent brands in particular gain a practical edge: professional on-model imagery from packshots, at a fraction of traditional studio costs, without negotiating likeness rights with individual models.

Frequently Asked Questions

What exactly is an AI digital twin in fashion?

An AI digital twin is a machine-generated replica of a specific real person, created by training algorithms on extensive photographs of that individual. This allows brands to place the person's likeness in new scenes, outfits, and settings without additional physical shoots.

How is a digital twin different from a generic AI-generated model?

Digital twins replicate a specific real person's appearance and identity, requiring consent and compensation. Generic AI models are entirely synthetic personas—fictional characters with no real-world counterpart—requiring no talent releases or royalty agreements.

Are H&M's digital twins replacing real photographers and models?

H&M frames the technology as additive, stating it will continue traditional photography. However, critics note that AI reduces the volume of repeat shoot work, which is a genuine concern for professionals who rely on campaign frequency for income.

Is it ethical for brands to create AI digital twins of real people?

Ethics depend heavily on consent, transparency, and fair compensation. H&M's model Vanessa Moody publicly described the collaboration as professional and transparent, but industry-wide standards for AI likeness agreements do not yet exist.

Which other fashion brands are using AI-generated models?

Zara, Zalando, Mango, and Levi's are prominent examples. Zalando reported that approximately 70% of its editorial campaign assets were AI-generated in Q4 2024. Each brand approaches the technology differently, whether for multi-outfit visualization, marketplace automation, or diversity-focused campaigns.

Can smaller brands use AI fashion model imagery without building their own digital twins?

Yes. Platforms offering AI model libraries let brands generate on-model visuals from packshot photography without custom model training. This makes professional-quality imagery accessible at any production scale, not just for enterprise budgets.