Introduction

Zara conducts one photoshoot with a real model — then uses AI to dress that same model in hundreds of different outfits across multiple collections, eliminating the need to re-book, re-style, and re-shoot. This isn't a future prediction. Reuters confirmed in December 2025 that Zara was already using generative AI operationally to digitally dress real models in new clothing collections.

That shift is driven by a straightforward cost problem. Industry estimates place conventional fashion photoshoots at $10,000 to $30,000 per day, with luxury productions exceeding $50,000.

For a retailer like Zara — introducing thousands of new designs annually across 97 physical markets and 214 online markets — slow content production directly drags on revenue. When a product is ready to sell but imagery isn't, the digital shelf sits empty.

This article breaks down Zara's three specific AI initiatives, how each workflow functions in practice, and what it means for fashion brands trying to keep pace with faster content production cycles.

TLDR

- Zara digitally re-dresses real models in new outfits using existing photoshoot assets, with model consent and equivalent pay

- Zara's virtual fitting room generates a personalized customer avatar in ~2 minutes from two photos, live in 43 markets

- Inditex has integrated AI as operational infrastructure — scaled across workflows, not piloted in isolation

- H&M announced 30 AI "digital twins" of real models (who retain rights to them); Zalando cut imagery costs by up to 90% and production time from 6–8 weeks to 3–4 days

- 58% of British photographers had lost assignments to AI by February 2025, up from 30% five months earlier

What Zara Is Actually Doing With AI: Three Distinct Initiatives

AI-Generated Model Imagery

Zara's parent company Inditex contacts real models to obtain explicit consent before reusing their existing photoshoot assets. Generative AI then overlays entirely new clothing collections onto those approved photographs, placing models in new outfits and even new settings without a new physical shoot.

Models reportedly receive payment equivalent to what they would earn from an additional photoshoot session. An Inditex spokesperson confirmed: "We work collaboratively with our valued models - agreeing any aspect on a mutual basis - and compensate in line with industry best practice."

In practice, a single photoshoot becomes the base asset for dozens or hundreds of visual variations. AI extends that asset across:

- Different garments and full outfit combinations

- New backgrounds and campaign settings

- E-commerce pages, social media, and marketing materials

This is especially valuable during high-rotation periods like seasonal sales, when Zara needs to visualise hundreds of SKUs rapidly.

The Virtual Fitting Room

Zara launched a virtual fitting room feature progressively via the Zara app starting mid-December 2025. Users submit a selfie and a full-body photograph; AI processes these inputs and generates a personalised avatar in approximately two minutes, delivering both a high-resolution photo and a 360-degree video of that avatar wearing selected items.

Rollout timeline:

- Initial launch (December 2025): Mexico, UK, Germany, Netherlands, Italy

- Early 2026: Spain

- April 2026: 43 markets total

- Mid-2026: United States

The feature surpassed 7 million sessions within weeks of launch. A Zara spokesperson stated the goal is to "turn online shopping into a social and interactive experience" by allowing users to build outfits and share looks with friends.

The business case is straightforward: customers who can assess fit before purchasing return fewer items, and "total look" suggestions push average order value higher. Zara reportedly cut its return rate by approximately 10% where the feature is active, a meaningful margin gain at Inditex's EUR 38.6 billion revenue scale.

The AI Search and Styling Assistant

Inditex confirmed that a ChatGPT-like conversational assistant will be added to the Zara online platform, though the feature had not been deployed as of early 2026. Planned capabilities include image-based product search (users search by uploading a photo), real-time product availability checks, and outfit combinations for specific occasions.

Tiziana Pandolfi, Zara's head of e-commerce, emphasized that the goal is "not to show off technology but to make shopping easier and more enjoyable for customers." Taken together, these three initiatives show a retailer treating AI as infrastructure — not a marketing headline — built into every layer of how products are visualised, discovered, and sold.

How Zara's AI Fashion Imagery Workflow Works

A conventional photoshoot takes place with a real model under standard production conditions. The resulting approved photographs serve as the base asset library — the model's likeness, poses, lighting conditions, and body geometry are all captured once and then made available for AI extension.

Generative AI uses these base photographs to map new garments onto the model's body, accounting for body shape, lighting, fabric drape, and texture behavior. The output reads as a legitimate photograph — not an obvious digital overlay. The model is real; only the clothing is AI-generated.

Once the AI renders the image, human review takes over. Inditex's stated position is that AI supports creative teams rather than supplanting creative judgment. Edited images go through brand consistency and accuracy checks before publication, ensuring garment details, color representation, and styling remain commercially accurate.
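The three stages described above (approved base asset, AI rendering, human review) can be sketched as a small pipeline. This is an illustrative sketch only: the class names, fields, and functions are hypothetical, the generative step is a stand-in stub, and nothing here reflects Inditex's actual systems.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BaseAsset:
    """One approved photograph from the original shoot (hypothetical schema)."""
    photo_id: str
    model_id: str
    consent_granted: bool  # model has agreed to AI reuse of this photo


@dataclass
class RenderedImage:
    """A candidate image of one garment mapped onto one base photograph."""
    photo_id: str
    sku: str
    approved: bool = False  # flipped to True only by a human reviewer


def render_variations(asset: BaseAsset, skus: list[str]) -> list[RenderedImage]:
    """Stand-in for the generative step: one candidate image per garment."""
    if not asset.consent_granted:
        raise PermissionError(f"no AI-reuse consent for photo {asset.photo_id}")
    return [RenderedImage(asset.photo_id, sku) for sku in skus]


def publishable(images: list[RenderedImage]) -> list[RenderedImage]:
    """Only images that passed human review may be published."""
    return [img for img in images if img.approved]


asset = BaseAsset("shoot-001-frame-12", "model-A", consent_granted=True)
candidates = render_variations(asset, ["SKU-1", "SKU-2", "SKU-3"])
candidates[0].approved = True  # reviewer signs off on two of the three renders
candidates[2].approved = True
print([img.sku for img in publishable(candidates)])  # prints ['SKU-1', 'SKU-3']
```

The design point the sketch tries to capture is that consent is checked before any rendering happens, and that publication depends on an explicit human approval rather than on the render succeeding.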

This approach sets Zara apart from competitors. H&M creates AI "digital twins" of real models, while Zara edits new clothing onto approved photographs of the model, with their explicit consent.

| Approach | Zara | H&M |
|---|---|---|
| Model type | Real models in approved photographs | AI "digital twins" of real models |
| Consent model | Explicit consent before existing images are reused | Models retain rights to their twin and can license it |
| Compensation | Equivalent to an additional photoshoot | Payment each time the twin is used |
| Brand authenticity | High: real photograph retained | Lower: the model's likeness is synthetic |
| Repeat photoshoots | Eliminated | Eliminated |

The tradeoff comes down to authenticity versus full automation. Zara preserves model representation and consumer trust; H&M pushes further toward synthetic imagery, while still paying models for each use of their twin.

The Business Case: Why Fast Fashion Needs AI-Powered Content at Scale

The Content Volume Problem

Zara introduces approximately 10,000 new designs per year and distributes 5.2 million clothing articles per week. Inditex operates approximately 5,500 stores worldwide across 97 physical markets and 214 online markets. The company recorded over 8 billion online platform visits and 218 million active app accounts in FY2024.

Traditional fashion photoshoots cost $10,000 to $30,000 per day. Model day rates range from $500 to $5,000. Agency creative fees for campaign-level concepts range from $10,000 to $100,000. At Zara's SKU volume, traditional production cannot sustain the continuous content demand economically.

Speed-to-Market Imperative

Zara's competitive model depends on moving from trend identification to shelf faster than competitors. Content production is a genuine bottleneck — when a new collection or product variant is physically ready but imagery is not, the product cannot sell online.

Zalando's experience quantifies this problem: before AI adoption, editorial imagery production took 6 to 8 weeks. With generative AI, this dropped to 3 to 4 days, with costs reduced by up to 90%. Zalando can now respond to social media fashion trends in under 24 hours, compared to a previous 2 to 4 week window.

AI compresses the cycle between physical inventory readiness and digital sales launch. For fast fashion, this directly impacts revenue — every day a product sits without imagery is lost sales opportunity.
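To put the Zalando figures quoted above in proportion, the drop from 6 to 8 weeks to 3 to 4 days can be expressed as a speedup range. The short sketch below assumes the quoted weeks are calendar weeks of seven days; the function name is illustrative.

```python
DAYS_PER_WEEK = 7  # assumption: the weeks quoted are calendar weeks


def speedup_range(before_days, after_days):
    """Return (slowest, fastest) speedup for two (low, high) duration ranges."""
    before_low, before_high = before_days
    after_low, after_high = after_days
    # Slowest case: shortest old timeline against the longest new one.
    # Fastest case: longest old timeline against the shortest new one.
    return before_low / after_high, before_high / after_low


before = (6 * DAYS_PER_WEEK, 8 * DAYS_PER_WEEK)  # 6 to 8 weeks
after = (3, 4)                                   # 3 to 4 days
low, high = speedup_range(before, after)
print(f"Production is roughly {low:.1f}x to {high:.1f}x faster")
# prints "Production is roughly 10.5x to 18.7x faster"
```

In other words, the reported change is an order-of-magnitude compression of the production cycle, not an incremental gain.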

Integration With Data-Driven Operations

Zara already uses machine learning for demand forecasting, inventory allocation, and consumer behaviour analysis through its Inditex Open Platform (IOP), operational since 2018. The retailer manages inventory across 5,500+ stores using RFID and AI-driven allocation algorithms.

AI-generated content connects each stage of this system directly:

- Better imagery drives higher conversion rates

- Conversion data sharpens demand forecasting accuracy

- Improved forecasts optimise inventory allocation across stores

Each content cycle improvement feeds back into the wider operational system, making speed of imagery production an operational — not just marketing — variable.

Inditex has published no specific figures on cost savings from the AI imagery initiative. That absence is telling — organisations typically stop framing a capability as innovation once it becomes standard infrastructure.

Not Just Zara: The Fashion Industry Is Moving in This Direction

H&M: Digital Twins with Model Consent

In March 2025, H&M announced plans to create 30 AI "digital twins" of real models for use in marketing content. Models retain rights to their own digital twins, can license them to any brand, and receive payment each time the twin is used. H&M released the first images on July 2, 2025 — for the Pre-Spring 2025 collection featuring models Ayan and Jada — with Creative Advisor Ann-Sofie Johansson describing it as "a great example of how we can use technology to enhance our creativity and explore new ways of working."

Zalando: 90% Cost Reduction, 3-Day Turnarounds

Zalando went further with measurable results. Using generative AI, the retailer:

- Cut editorial imagery costs by up to 90%

- Reduced production timelines from 6-8 weeks to 3-4 days

- Produced approximately 70% of its Q4 2024 editorial campaign images with AI enhancement

- Recorded 10% higher engagement on AI-enhanced content

- Saw returns drop 10% where AI-powered size advice was available

VP of Content Solutions Matthias Haase framed the shift plainly: "We are using AI to be able to be reactive" — and noted that creative teams now effectively operate with "six hands instead of two."

Zalando calls this evolution "emotional commerce": moving beyond transactional convenience toward content that inspires, builds confidence, and makes shopping feel less like a chore.

The Workforce Warning Behind the Numbers

The business gains don't come without tradeoffs. The UK's Association of Photographers (AOP) has formally warned that AI adoption across major fashion retailers will materially reduce assignment volumes for photographers, models (for secondary shoots), makeup artists, stylists, and production crews. For brands evaluating this shift, that context matters: the decisions being made now will shape how the industry works for years ahead.

The Ethical Debate: Model Rights, Job Displacement, and Consumer Trust

Consent and Compensation Structure

Models receive explicit agreements and equivalent payment for the AI use of their images. The photographers, makeup artists, stylists, and production teams who would have been hired for a second physical shoot receive nothing — because that shoot no longer happens.

This creates an asymmetric impact across the creative supply chain, even within a framework that treats models fairly. The AOP's February 2025 findings spell out the scale:

- 58% of UK photographers had lost assignments to generative AI (up from 30% in September 2024)

- Average annual income losses reached £35,000 per photographer

- Each cancelled photoshoot affects up to 10 additional workers — assistants, stylists, and makeup artists

Consumer Perception Risk

McDonald's Netherlands released an AI-generated Christmas ad on December 6, 2025. Viewers called it "creepy," "god-awful," and "poorly edited." Specific complaints centred on "uncanny-looking characters" and disjointed stitching of clips. McDonald's removed the video three days later (December 9), stating the moment served as "an important learning" as the company explored "the effective use of AI."

Consumer acceptance of AI-generated imagery is not guaranteed — and fashion brands face higher scrutiny than fast food. Shoppers evaluating clothing make purchase decisions based on how a garment looks on a body, making visual quality directly tied to conversion. When AI output looks off, the brand damage compounds: it's not just a failed ad but a failed product presentation.

Inditex's Official Positioning

Inditex officially positions AI as being used "only to complement existing processes." Tiziana Pandolfi emphasized that the goal is not to show off technology but to make shopping easier and more enjoyable. That framing matters less as a PR position and more as an operational commitment. At Zara's volume — hundreds of new styles weekly across global markets — even a 20% shift toward AI-generated imagery represents thousands of images per month that previously required physical production. Whether "complementing" remains accurate depends entirely on where that proportion lands a year from now.
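The scale of a 20% shift depends heavily on how many images each design needs, which the article does not state. The sketch below combines the roughly 10,000 new designs per year cited earlier with a few hypothetical images-per-design values, purely to show how the monthly total moves.

```python
DESIGNS_PER_YEAR = 10_000  # figure cited earlier in the article
AI_SHARE = 0.20            # the 20% shift discussed above


def monthly_ai_images(designs_per_year, images_per_design, ai_share):
    """Images per month that would move from physical shoots to AI."""
    return designs_per_year * images_per_design * ai_share / 12


for images_per_design in (3, 6, 12):  # hypothetical values, not Inditex data
    count = monthly_ai_images(DESIGNS_PER_YEAR, images_per_design, AI_SHARE)
    print(f"{images_per_design:>2} images per design -> {count:,.0f} AI images per month")
```

Under these assumptions the monthly total reaches 1,000 at six images per design and 2,000 at twelve, so the "thousands of images per month" figure holds once each design needs around a dozen images across angles, channels, and markets.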

What This Means for Fashion Brands Beyond Zara

The New Content Velocity Benchmark

When a retailer operating at Zara's scale integrates AI imagery into its production infrastructure — not as a pilot but as a routine workflow — it sets a new content velocity benchmark. The market data reflects the scale of what's shifting:

- McKinsey projects generative AI could add $150–$275 billion to apparel sector operating profits within 3–5 years

- Precedence Research forecasts the AI fashion market will reach $60 billion by 2034, growing at ~40% annually

- Gartner reports 91% of retail IT leaders are prioritising AI implementation by 2026

- Average GenAI spend in 2024 was $1.9 million — yet fewer than 30% of AI leaders report CEO satisfaction with returns

Adoption is near-universal in intent. Execution quality is where brands diverge.
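As a sanity check on the market forecast quoted above, compound growth can be run backwards to see what starting market size a $60 billion endpoint at roughly 40% annual growth implies. The forecast's base year is not given here, so the horizons below are assumptions.

```python
def implied_start_value(end_value, annual_growth, years):
    """Starting value that compounds to end_value after `years` at annual_growth."""
    return end_value / (1 + annual_growth) ** years


for years in (8, 9, 10):  # hypothetical forecast horizons
    start = implied_start_value(60.0, 0.40, years)
    print(f"{years} years of 40% growth implies a starting market of ${start:.1f}B")
```

For any of these horizons the implied starting market is in the low single-digit billions of dollars, which shows how much of the headline figure rests on the growth rate being sustained.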

The Access Gap

Zara built this capability as part of an enterprise-level technology infrastructure. Smaller fashion brands face the same content challenges — high SKU volumes, multi-market visual needs, seasonal refresh cycles — without the in-house resources to match.

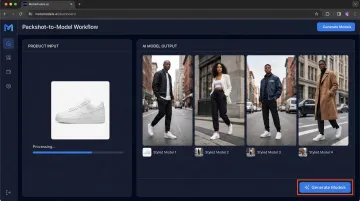

MetaModels.ai addresses this gap directly: converting packshots to AI model imagery using a curated library of diverse AI models, real-time fabric draping, and human-reviewed output. Brands get the same content scalability without a single photoshoot, starting from £20 per image rather than £10,000+ per photoshoot day.

The practical implication: content velocity is no longer gated by capital. A direct-to-consumer brand with 500 SKUs can now match the visual presentation standards of a multinational retailer at a fraction of the cost.
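The two prices quoted above imply a simple break-even point. The sketch below uses only the article's floor figures (£20 per image, £10,000 per shoot day) and ignores everything else, such as retouching, revisions, or volume discounts.

```python
import math


def break_even_images(shoot_day_cost, per_image_cost):
    """Smallest image count at which a shoot day is no more expensive per image."""
    return math.ceil(shoot_day_cost / per_image_cost)


print(break_even_images(10_000, 20))  # prints 500
```

On these figures, a physical shoot day would need to yield at least 500 usable images to match the per-image price. Both numbers are starting prices, so the real threshold will differ from brand to brand.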

The Strategic Question

For most fashion brands, the decision to adopt AI content production is effectively made. The real work is in how: building workflows that are brand-consistent, ethically grounded, and built to last. Brands that establish clear practices now — covering consent, quality control, model diversity, and consumer transparency — won't just keep pace. They'll set the standard.

Frequently Asked Questions

How does Zara use AI in its model photography?

Zara uses generative AI to digitally dress real models in new clothing collections using existing approved photoshoot images, with model consent and equivalent compensation. This eliminates the need for repeated physical photo sessions while preserving the authenticity of real human models.

Do Zara models consent to having their images edited by AI?

Yes. Zara seeks explicit model permission before applying AI edits to their images, and compensates them at a rate equivalent to what they would receive for an additional photoshoot. Inditex's official policy is to work collaboratively with models on a mutual basis.

How does Zara's virtual fitting room work?

Users submit a selfie and full-body photo via the Zara app. The AI generates a personalised avatar within approximately two minutes, shown in high resolution and 360-degree video wearing selected garments. The feature is available in 43 markets as of April 2026.

Is Zara replacing human models with AI?

No. Zara's approach keeps real human models central to the process — AI is used to extend existing model images into new outfit variations, not to generate synthetic or fully AI-created model personas. Inditex officially positions AI as complementing, not replacing, human creative work.

What other fashion brands are using AI for model imagery?

H&M announced 30 AI "digital twins" of real models for marketing use, with models owning their digital likenesses and receiving payment per use. Zalando cut editorial imagery costs by up to 90% with generative AI, a result that signals how quickly the economics of fashion content are shifting.

Can smaller fashion brands adopt AI model imagery like Zara does?

Yes. While Zara operates at enterprise scale, AI fashion imagery platforms are now accessible to brands of all sizes. MetaModels.ai, for example, converts packshots into styled model photography using a diverse AI model library with human-reviewed output — no in-house tech team or physical photoshoot required.