Introduction

AI fashion models are photorealistic, computer-generated figures that display clothing and accessories without physical model bookings or studio shoots. They slot directly into e-commerce workflows, producing on-model imagery at a fraction of traditional photography costs.

For brands managing growing catalogs, that cost gap compounds fast. Traditional shoots struggle to keep pace with:

- Content production costs that multiply with every new SKU

- Catalog volume that outpaces studio scheduling

- Turnaround times that delay product launches

- Competitive pressure to show on-model imagery across every listing

A brand launching a modest seasonal collection of 50 SKUs needs listing images, lifestyle shots, color variants, and multi-angle views. That volume makes traditional photoshoots too costly and too slow to run at seasonal frequency.

This guide covers what AI fashion models are, why e-commerce brands are adopting them, how the process works end-to-end, and what factors affect output quality, including where the approach has real limitations.

TL;DR

- AI fashion models use generative AI to place clothing on photorealistic virtual figures, turning flat packshots into on-model e-commerce visuals — no model bookings, no studio costs

- Output quality depends heavily on input image quality, prompt precision, garment complexity, and whether human review is built into the workflow

- The approach scales well for catalog and product-page production, though lifestyle, editorial, and complex drape scenarios often still benefit from human review

- Brands adopting AI model workflows can cut per-image production costs by roughly 95-99% while compressing turnaround from days to hours

What Are AI Fashion Models?

AI fashion models are software-generated photorealistic human figures produced by generative AI systems trained on large datasets of fashion imagery. These systems simulate how clothing looks when worn—including drape, shadow, and fabric behavior—without requiring physical models or studio shoots.

How this differs from related terms:

- AI fashion models produce on-model imagery from product photos

- Virtual try-on focuses on fitting a specific garment to a shopper's own image

- AI image editing enhances existing photos but doesn't generate the model figure itself

Market Adoption and Growth

Adoption is moving fast. Key figures show the scale of the shift:

- The fashion technology market reached $189 billion in 2024 and is projected to reach $273 billion by 2030 at a 6.3% compound annual growth rate

- Generative AI could add $150–275 billion to apparel, fashion, and luxury operating profits within 3–5 years, according to McKinsey

- 63% of fashion executives cite generative AI as a top priority for 2025, with 25% already using it for creative design and marketing

Why E-Commerce Brands Are Adopting AI Fashion Models

The Cost Gap

Traditional fashion photography carries substantial line-item costs across every shoot:

- Photographer day rate: £1,000–3,500

- Model fees: £200–4,000

- Studio rental: £300–2,500

- Hair and makeup: £400–1,200

- Wardrobe styling: £400–1,000

- Retouching: £20–200 per image

Total daily production costs range from £5,000–25,000, translating to £150–1,500 per finished on-model image.

AI-generated photography costs approximately £0.50-5.00 per image—a reduction of roughly 95-99% compared to traditional methods. For a brand managing 500 SKUs, annual photography costs can drop from £125,000-250,000 to a small fraction of that amount.
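The savings percentage follows directly from the per-image ranges. Below is a minimal Python sketch that assumes the £150-1,500 (traditional) and £0.50-5.00 (AI) ranges quoted above. The function names are illustrative. The catalog example also assumes 4 images per SKU (listing, lifestyle, color variant, alternate angle), which is a hypothetical figure.

```python
# Per-image cost ranges quoted above, in GBP: (min, max).
TRADITIONAL_PER_IMAGE = (150.0, 1500.0)
AI_PER_IMAGE = (0.50, 5.00)


def reduction_range(traditional, ai):
    """Return (smallest, largest) percentage saving across the two cost ranges."""
    # Smallest saving: cheapest traditional image vs. most expensive AI image.
    low = 1 - ai[1] / traditional[0]
    # Largest saving: most expensive traditional image vs. cheapest AI image.
    high = 1 - ai[0] / traditional[1]
    return round(low * 100, 2), round(high * 100, 2)


def catalog_cost(skus, images_per_sku, per_image):
    """Total (min, max) cost for a catalog, given a (min, max) per-image cost."""
    n_images = skus * images_per_sku
    return n_images * per_image[0], n_images * per_image[1]


print(reduction_range(TRADITIONAL_PER_IMAGE, AI_PER_IMAGE))  # (96.67, 99.97)
# Hypothetical 50-SKU collection with 4 images per SKU (200 images).
print(catalog_cost(50, 4, TRADITIONAL_PER_IMAGE))  # (30000.0, 300000.0)
print(catalog_cost(50, 4, AI_PER_IMAGE))  # (100.0, 1000.0)
```

If you compare the extremes of each range, the saving comes out at about 96.7% to 99.97%. That matches the rough 95-99% figure. The output can only be as reliable as the input ranges.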

The Scale Problem

At 50-200 SKUs per season, each needing listing images, lifestyle shots, color variants, and multi-angle views, the asset count quickly reaches the hundreds. That volume makes traditional photoshoots financially and practically unviable at seasonal frequency.

Brands that rely exclusively on traditional photography for catalog-scale content face predictable bottlenecks:

- Delayed launches waiting for studio availability

- Inconsistent visual identity across SKUs

- High per-image costs that don't scale

- Dependency on model and studio booking windows

Model Diversity Drives Conversion

Research from the University of Bath, University of Groningen, and Vrije Universiteit Amsterdam found that size-inclusive model photography mitigates fit risk in online fashion retailing. Thin-size models hindered purchase decisions by making it difficult for customers of different body types to assess garment fit. The peer-reviewed study concluded that "not one of our studies shows that own-size model photography negatively affects purchase decisions."

Nielsen Norman Group research found that shoppers noticed and appreciated models of different sizes, ages, and races, reporting feeling more confident in their purchasing decisions when they saw many different people wearing a product. Platforms like MetaModels.ai offer curated libraries with diverse ethnicities, demographics, and body types — making representation achievable without booking multiple models for every shoot.

The Return Rate Problem

Online apparel returns average 20-26% globally, with fit-related issues driving 53% of those returns. On-model images that accurately represent how a garment fits and drapes reduce customer disappointment by helping shoppers visualize fit on bodies similar to their own.
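Combining the two figures shows what share of all orders comes back because of fit. This is a quick worked calculation that assumes the 53% fit share holds across the whole 20-26% return range. The two statistics may come from different samples, so treat the result as an estimate.

```python
def fit_return_share(return_rate, fit_share):
    """Fraction of all orders that are returned for fit-related reasons."""
    return return_rate * fit_share


for rate in (0.20, 0.26):
    share = fit_return_share(rate, 0.53)
    print(f"{rate:.0%} returns -> {share:.1%} of orders returned for fit")
# 20% returns -> 10.6% of orders returned for fit
# 26% returns -> 13.8% of orders returned for fit
```

On these assumptions, roughly one order in eight to ten is returned for fit. That is the slice more accurate on-model imagery aims to shrink.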

The National Retail Federation forecasts $890 billion in US retail returns for 2025, with clothing ranking as the most returned online category. Accurate product imagery directly targets the most costly return driver in fashion e-commerce.

How AI Fashion Models Work

The Core Process

The process takes a product image as input, uses generative AI to analyze the garment's structure, shape, and material properties, and composites the clothing onto a selected virtual model figure with realistic lighting, shadow, and drape simulation.

Inputs required:

- The product image (packshot, flat lay, or ghost mannequin)

- The selected or custom AI model

- Creative parameters (pose, background, lighting mood)

- Brand-specific style or resolution requirements
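The four inputs can be captured as one structured request, so that nothing is left implicit. The following is a hypothetical sketch: the class names, fields, and example values are assumptions for illustration and do not correspond to any platform's real API.

```python
from dataclasses import dataclass, field
from enum import Enum


class InputType(Enum):
    PACKSHOT = "packshot"
    FLAT_LAY = "flat_lay"
    GHOST_MANNEQUIN = "ghost_mannequin"


@dataclass
class CreativeParams:
    pose: str
    background: str
    lighting_mood: str


@dataclass
class GenerationRequest:
    """Hypothetical bundle of the four inputs listed above."""
    product_image: str  # path or URL to the product image
    input_type: InputType
    model_id: str  # selected library model or custom model reference
    creative: CreativeParams
    brand_requirements: dict = field(default_factory=dict)  # style or resolution rules

    def missing_inputs(self):
        """Names of required inputs that were left empty."""
        missing = []
        if not self.product_image:
            missing.append("product_image")
        if not self.model_id:
            missing.append("model_id")
        for name in ("pose", "background", "lighting_mood"):
            if not getattr(self.creative, name):
                missing.append(name)
        return missing


req = GenerationRequest(
    product_image="sku-1042-front.jpg",
    input_type=InputType.GHOST_MANNEQUIN,
    model_id="library-model-07",
    creative=CreativeParams(pose="three-quarter turn", background="", lighting_mood="soft studio"),
    brand_requirements={"min_width_px": 2000},
)
print(req.missing_inputs())  # ['background']
```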

The AI interprets fabric weight and texture, maps the garment geometry onto the model's body proportions, and renders fabric behavior — folds, draping, stretch — in a way that appears physically realistic rather than digitally painted.

Advanced platforms like MetaModels.ai use real-time fabric draping technology and include a human-review step to verify garment accuracy before delivery, addressing one of the most common failure points in fully automated AI workflows.

Step 1: Input Preparation

The quality and completeness of the product image determines the ceiling of the AI output. Ideal input images include:

- Full garment visibility with no cut-off edges

- Clean background without heavy compression artifacts

- Good lighting that shows fabric texture

- Multiple angles where possible

- Sharp focus throughout the garment

Poor inputs—blurry, low-resolution, or poorly lit product photos—produce models wearing indistinct or distorted garments regardless of how sophisticated the AI is. The AI cannot recover garment detail that doesn't exist in the source image.
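You can run a rough automated pre-check to catch low resolution and blur before generation. The minimal sketch below assumes the image has already been decoded into a grayscale 2D list of 0-255 values; in practice an imaging library would handle that step. It measures sharpness with the variance of a Laplacian filter, which is a standard focus measure. The `min_side` and `min_sharpness` thresholds are hypothetical placeholders that would need tuning per catalog.

```python
def laplacian_variance(gray):
    """Variance of a 3x3 Laplacian over a grayscale image (rows of 0-255 values).

    Low values mean little edge detail, a common sign of blur.
    """
    h, w = len(gray), len(gray[0])
    if h < 3 or w < 3:
        raise ValueError("image must be at least 3x3 pixels")
    responses = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = (gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
                   - 4 * gray[y][x])
            responses.append(lap)
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def check_input(gray, min_side=1000, min_sharpness=100.0):
    """Return a list of problems found; the thresholds are illustrative only."""
    problems = []
    h, w = len(gray), len(gray[0])
    if min(h, w) < min_side:
        problems.append(f"resolution too low ({w}x{h})")
    if laplacian_variance(gray) < min_sharpness:
        problems.append("image looks blurry (low edge detail)")
    return problems


flat = [[128] * 8 for _ in range(8)]  # no detail at all
checker = [[255 if (x + y) % 2 else 0 for x in range(8)] for y in range(8)]  # sharp edges
print(check_input(flat, min_side=4))  # ['image looks blurry (low edge detail)']
print(check_input(checker))  # ['resolution too low (8x8)']
```

A check like this can only flag missing detail. It cannot add detail back, which is exactly why the source image has to be right.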

Step 2: Model and Scene Selection

Users select or configure the AI model and creative context by:

- Choosing from a curated model library or defining custom model attributes (body type, ethnicity, age, pose)

- Setting scene parameters (studio, lifestyle, editorial backdrop)

- Specifying styling elements

Platforms with large curated libraries offer more diversity options than those offering only generic defaults.

Step 3: AI Generation and Quality Review

The AI renders the final image, which should then be evaluated for accurate garment representation — correct color, fit, and fabric behavior.

Automated-only pipelines carry a higher risk of subtle errors that erode customer trust. Platforms that incorporate human fashion specialists to review color accuracy, shape, proportions, and garment details before delivery reduce that risk. The result: images that reflect the actual product rather than an AI interpretation of it.
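The human-review step works as a publishing gate: no image ships until a reviewer has explicitly passed each check. Below is a hypothetical sketch of that gate. It uses the four checks named above (color accuracy, shape, proportions, garment details); the class and function names are illustrative assumptions.

```python
from dataclasses import dataclass, field

REVIEW_CHECKS = ("color_accuracy", "shape", "proportions", "garment_details")


@dataclass
class GeneratedImage:
    sku: str
    path: str
    review: dict = field(default_factory=dict)  # check name -> True (pass) / False (fail)


def ready_to_publish(image):
    """True only if a reviewer has explicitly passed every check."""
    return all(image.review.get(check) is True for check in REVIEW_CHECKS)


def publishable(images):
    """Filter a batch down to images cleared for the storefront."""
    return [img for img in images if ready_to_publish(img)]


passed = GeneratedImage("SKU-1", "sku-1.png", {c: True for c in REVIEW_CHECKS})
color_fail = GeneratedImage("SKU-2", "sku-2.png", {**{c: True for c in REVIEW_CHECKS},
                                                   "color_accuracy": False})
unreviewed = GeneratedImage("SKU-3", "sku-3.png")
print([img.sku for img in publishable([passed, color_fail, unreviewed])])  # ['SKU-1']
```

Treating "not yet reviewed" the same as "failed" is a deliberate choice. It keeps unchecked output off the storefront by default.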

Key Factors That Affect AI Fashion Model Quality

Input Image Quality

As covered in Step 1, the source image sets the quality ceiling. The AI cannot recover garment detail that isn't there, so sharp, well-lit product shots with the full garment visible are the starting point for every other quality factor.

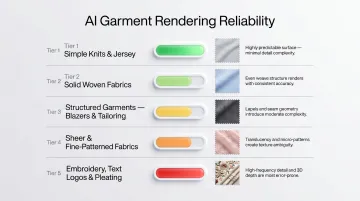

Garment Complexity

Simple knits and jersey fabrics render most reliably: their drape behavior is predictable and their surface texture is uniform. Structured and detail-heavy garments are a different story:

- Structured garments (blazers, tailored trousers) require the AI to understand how fabric holds shape rather than drapes freely

- Sheer fabrics suffer from aliasing and ghosting artifacts where the AI struggles to represent transparency accurately

- Fine patterns (small polka dots, intricate lace, thin stripes) may simplify into grey blobs or distorted zigzags

- Embroidery and pleating strain current model resolution because of their fine textural detail

- Text and logos often become garbled when fabric is wrinkled or draped

Research published in the Cureus Journal identifies self-occlusion (crossed arms, turned bodies), high-frequency patterns, and long/loose garments as specific technical limitations in current AI fashion model generation.
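One way to act on these limitations is to flag high-risk garments for closer review before generation. The triage sketch below is hypothetical. Its feature tags are assumptions that a team would assign per SKU, not attributes any AI tool detects automatically. The notes paraphrase the failure modes listed above.

```python
# Risk features from the list above, mapped to the failure mode each tends to cause.
RISK_FEATURES = {
    "structured": "may not hold its shape correctly",
    "sheer": "aliasing or ghosting in transparent areas",
    "fine_pattern": "pattern may blur or distort",
    "embroidery": "fine texture may be lost",
    "pleating": "fine texture may be lost",
    "text_or_logo": "lettering may garble where fabric folds",
}


def review_notes(garment_features):
    """Return notes for any risk features present; an empty list means standard review."""
    return [f"{feat}: {RISK_FEATURES[feat]}"
            for feat in sorted(garment_features) if feat in RISK_FEATURES]


print(review_notes({"jersey"}))  # []
print(review_notes({"text_or_logo", "sheer"}))
```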

Model and Brand Consistency Across a Catalog

When different model attributes, lighting treatments, or background styles are applied across SKUs without a unifying visual framework, the storefront looks inconsistent — even if individual images look polished.

This is a workflow and planning issue, not just a technology issue. Brands should define and lock down these parameters before generation begins, not after reviewing the first batch of outputs.
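Locking the parameters down can be as simple as defining them once as an immutable preset, then checking every SKU's recorded settings against it. Below is a hypothetical sketch. The preset fields and values are assumptions, and a frozen dataclass raises an error if code later tries to modify the preset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StylePreset:
    """Catalog-wide visual parameters, fixed before any generation run (hypothetical fields)."""
    model_id: str
    lighting: str
    background: str
    width_px: int
    height_px: int


def inconsistent_skus(preset, outputs):
    """Return SKUs whose recorded generation settings differ from the locked preset."""
    return sorted(sku for sku, used in outputs.items() if used != preset)


LOCKED = StylePreset("library-model-07", "soft studio", "light grey seamless", 2000, 2500)
outputs = {
    "SKU-001": LOCKED,
    "SKU-002": LOCKED,
    "SKU-003": StylePreset("library-model-07", "hard flash", "light grey seamless", 2000, 2500),
}
print(inconsistent_skus(LOCKED, outputs))  # ['SKU-003']
```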

Common Misconceptions About AI Fashion Models

Misconception 1: "AI output is always ready to publish"

AI-generated images require review against the physical product before going live. Subtle color shifts, fabric smoothing, or incorrect fit representation can mislead shoppers and increase returns.

Fully automated pipelines without a human review step are a risk, not an efficiency gain. Platforms that incorporate fashion specialist review catch errors before they reach customers.

Misconception 2: "Any product photo is a good enough input"

Teams often assume the AI will compensate for weak source images, which leads to poor outputs and repeat regenerations. The AI amplifies what is in the input—it doesn't reconstruct missing detail.

Clean, well-lit packshots produce stronger outputs and cut down on repeat regenerations.

Misconception 3: "AI fashion models are only for large brands with big catalogs"

While scale amplifies the ROI, small brands and independent designers benefit from eliminating per-shoot fixed costs. A single-SKU startup and a 500-SKU retailer both gain from on-demand model imagery.

When the investment may not make sense:

- Very low-volume, one-off shoots where a simple flat lay already converts well

- Product categories where fit-on-body isn't the key visual (hard goods, footwear closeups, accessories)

- Brands whose entire value proposition is authentic behind-the-scenes content from real shoots

Cost and catalog size aren't the only considerations, though. Even with strong inputs, output quality depends on how clearly the creative direction is defined.

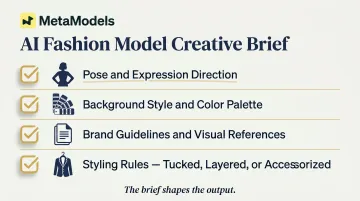

Misconception 4: "AI models eliminate the need for any creative direction"

The quality of the output still depends on how well creative parameters are defined upfront. Vague or absent instructions on pose, background, and styling produce generic results that don't reflect brand identity.

Treat AI fashion model generation the same way you'd brief a traditional shoot:

- Define pose and expression direction

- Specify background style and color palette

- Provide brand guidelines and visual references

- Set styling rules (tucked, layered, accessorized, etc.)

The brief shapes the output. The more specific it is, the more the final image looks like your brand — not a default.
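The sketch below shows one hypothetical way a structured brief could be turned into a generation prompt. The field names, generic defaults, and prompt format are illustrative assumptions, not any platform's actual behavior. The sketch reports which fields fell back to generic defaults, because those gaps are where off-brand results tend to come from.

```python
GENERIC_DEFAULTS = {
    "pose": "neutral standing pose",
    "expression": "neutral expression",
    "background": "plain white background",
    "styling": "garment shown on its own",
}


def brief_to_prompt(brief):
    """Build a prompt from a brief dict.

    Returns (prompt, fields that fell back to generic defaults).
    """
    defaulted = [key for key in GENERIC_DEFAULTS if not brief.get(key)]
    values = {key: brief.get(key) or GENERIC_DEFAULTS[key] for key in GENERIC_DEFAULTS}
    prompt = (f"Pose: {values['pose']}. Expression: {values['expression']}. "
              f"Background: {values['background']}. Styling: {values['styling']}.")
    return prompt, defaulted


brief = {
    "pose": "walking mid-stride",
    "background": "warm terracotta wall, muted earth-tone palette",
    "styling": "shirt half-tucked, gold hoop earrings",
}
prompt, defaulted = brief_to_prompt(brief)
print(defaulted)  # ['expression']
```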

Frequently Asked Questions

What is an AI fashion model?

An AI fashion model is a photorealistic virtual human figure generated by AI, used to display clothing on a human form without booking a physical model. It's produced from a product image input and configurable by pose, body type, and scene.

How much does using AI fashion models cost compared to traditional model photography?

Traditional on-model photography costs £150-1,500 per retouched image, while AI-generated images cost approximately £0.50-5.00 per image, a reduction of roughly 95-99%. For a 100-SKU catalog requiring 3 images each (300 images), costs drop from £45,000-450,000 to £150-1,500.

Can AI fashion models accurately represent fabric texture and garment fit?

Advanced platforms using fabric draping technology and human review can represent texture and fit accurately, but accuracy depends on input image quality and garment complexity. Simpler fabrics render more reliably than complex structured or sheer pieces.

Are AI-generated fashion model images accepted on platforms like Amazon and Shopify?

Yes, AI-generated images are accepted on major e-commerce platforms provided they meet resolution and content requirements. Amazon permits AI-generated images if they accurately represent the product. The brand remains responsible for ensuring accuracy.

What type of input images produce the best AI fashion model results?

Sharp, well-lit product shots with the full garment visible, minimal background clutter, and multiple angles where possible. These factors directly determine how accurately the AI can interpret and render the garment.

How do I maintain visual consistency across a large product catalog using AI models?

Consistency requires standardizing these attributes across all SKUs before generation: model appearance, lighting treatment, background type, and resolution. Platforms with brand-level style controls or human-reviewed production management make catalog-wide consistency easier to achieve at scale.