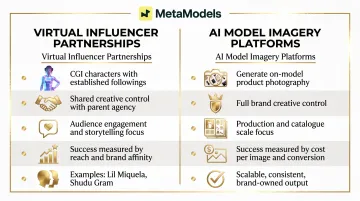

"Digital model collaboration" covers two distinct but related approaches: virtual influencer partnerships (CGI characters with established social followings like Lil Miquela or Shudu Gram) and AI model imagery platforms (services that generate product photography featuring AI models without physical shoots). Both eliminate traditional photoshoot logistics, but they serve fundamentally different brand objectives. This article explains how brands use each approach to drive measurable results, what the collaboration process actually involves, and where most brands go wrong.

TL;DR

- Brand-digital model collaboration uses CGI virtual influencers or AI-generated imagery to produce content at scale — no physical shoots required

- Brands choose digital models for cost savings (up to 90% vs. traditional shoots), SKU scalability, creative control, and inclusive representation

- Collaboration follows a clear flow: define objective → select or create model → produce and review content → deploy

- Virtual influencer partnerships and AI imagery platforms serve different goals and require distinct strategies

- Common misconceptions about digital models — especially around creative direction — lead to failed implementations

What Is a Brand-Digital Model Collaboration?

Brand-digital model collaboration takes two primary forms, each with distinct operational requirements and use cases.

| Type | How It Works | Brand Control |
|---|---|---|
| Virtual influencer partnerships | Brands license CGI characters with established followings (Lil Miquela, Shudu Gram, Noonoouri) to create content targeting the character's audience | Shared: the influencer's parent company retains creative control over the character |
| AI model imagery services | Platforms like MetaModels.ai convert product packshots into photorealistic on-model photography without a physical shoot | Full: brands select models, direct poses, and specify output channels |

Virtual influencer partnerships work similarly to human influencer marketing: brands negotiate licensing deals, align content briefs with the character's persona, and reach an existing audience. AI imagery services operate more like a production subscription — brands submit packshots and receive styled model photography at scale.

What "Collaboration" Means Operationally

Virtual influencer campaigns require audience alignment research, brand-character fit assessment, and multi-step content approval. Brud (which manages Lil Miquela) is a good example: the agency holds final say over the character's identity, so brands negotiate within those creative boundaries.

AI imagery platforms shift that dynamic entirely. Brands maintain full creative control — selecting models, directing poses, specifying output channels — but the quality of output depends on brief clarity. Precise direction produces precise results.

Both approaches eliminate physical logistics — no travel, no studio rental, no scheduling conflicts. The trade-off is a shift in where attention goes: toward brief clarity, quality verification, and (for AI imagery) technical accuracy checks for fabric draping and garment fit.

Why Brands Are Turning to Digital Models

Cost and Speed Advantage

Traditional fashion photoshoots involve model booking fees, photographer costs, studio rental, styling, travel logistics, and post-production retouching. Specific costs vary widely by market and production scale. As an upper reference point, WWD documented in 2005 that a single magazine cover production started at approximately $100,000, although that figure reflects high-end editorial work rather than standard e-commerce photography.

AI-generated imagery platforms eliminate most of these cost components entirely. MetaModels.ai, for example, offers per-image pricing starting from ₹20 (approximately $0.24), with subscription plans providing 20-300 image credits monthly at tiered rates. This represents cost reductions of 80-90% compared to traditional production methods.
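The percentage saving depends entirely on what a brand currently pays per finished image, so it is worth running the arithmetic with real quotes. The sketch below shows one way to do that. The function names and all input figures are hypothetical placeholders for illustration and are not taken from any platform's pricing.

```python
def per_image_cost(total_cost: float, image_count: int) -> float:
    """Average cost of one finished image."""
    if image_count <= 0:
        raise ValueError("image_count must be positive")
    return total_cost / image_count


def cost_reduction_pct(traditional_per_image: float, ai_per_image: float) -> float:
    """Percentage saved per image when moving from traditional to AI imagery."""
    if traditional_per_image <= 0:
        raise ValueError("traditional_per_image must be positive")
    return (1 - ai_per_image / traditional_per_image) * 100


# Hypothetical inputs for illustration only; substitute real quotes.
traditional = per_image_cost(total_cost=5_000.0, image_count=100)  # 50.0 per image
ai = 5.0  # assumed all-in AI cost per image, including review time
saving = cost_reduction_pct(traditional, ai)
print(f"Saving per image: {saving:.0f}%")
```

Including review and revision time in the AI figure keeps the comparison honest, since the traditional figure already bundles retouching.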

Speed matters too. Traditional campaigns require 2-4 weeks from planning through final retouched images. AI platforms can generate catalogue-scale imagery in hours, enabling brands to respond to trends faster and refresh product visuals across seasons without production bottlenecks.

Scalability Across SKUs and Markets

The global market for AI product photography tools in e-commerce was valued at $450 million in 2024 and is projected to reach $5 billion by 2035, driven primarily by brands managing large product catalogues.
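To put that projection in annual terms, the implied compound annual growth rate can be worked out from the two figures quoted above. This is a minimal sketch: the function name is an assumption, and the calculation simply assumes smooth compounding between 2024 and 2035.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and span."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the market projection quoted in the text.
growth = implied_cagr(start_value=450e6, end_value=5e9, years=2035 - 2024)
print(f"Implied annual growth: {growth:.1%}")
```

Under that assumption the projection works out to somewhere between 24 and 25 percent growth per year.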

E-commerce retailers launching hundreds of seasonal SKUs cannot economically photograph every item on human models. Fast fashion brands refreshing inventory weekly face even tighter constraints. Digital models solve this by enabling simultaneous content production across styles, colourways, and market-specific imagery.

Mango's 2024 AI campaign for its Teen line demonstrated this scalability — the brand generated an entire campaign using AI imagery and deployed it across 95 markets simultaneously. Traditional production would have required region-specific shoots or costly rights extensions.

Diversity and Inclusive Representation

Consumer expectations around representation have shifted from nice-to-have to commercial necessity. Deloitte's 2024 Digital Media Trends study found that 71% of entertainment spending among Black, Hispanic/Latinx, and LGBTQIA+ audiences is driven by feelings of inclusivity. These groups collectively drive more than a third of the U.S. media and entertainment market.

AI model libraries offer a curated range of models across ethnicity, body type, age, and styling. This lets brands represent diverse audiences, and match specific brand identities, without the logistical complexity of casting across these dimensions for every shoot.

This doesn't eliminate the importance of authentic representation — brands still need human creative direction to ensure diversity feels genuine, not tokenistic. But it removes production barriers that previously made inclusive casting prohibitively expensive at scale.

Creative Control and Brand Consistency

Human models bring scheduling conflicts, reputational risks, and natural variance in appearance between shoots. Digital models carry none of these constraints.

Brands maintain full visual control over styling, lighting, and model identity across all content outputs. A custom AI model can represent the same brand aesthetic consistently across hundreds of SKUs, multiple seasons, and different markets — eliminating the variance that comes from working with different models or the same model across different shoots.

This consistency supports brand recognition. The 3-7-27 rule in branding suggests it takes 3 impressions for consumers to recognise a brand, 7 to remember it, and 27 to build trust. When every piece of digital model content shares the same model aesthetic, styling cues, and brand elements, each impression reinforces the last, which shortens that familiarity curve.

Industry Adoption as Validation

Digital model use has moved beyond niche experimentation:

- Balmain launched a "virtual army" campaign in September 2018 featuring three CGI models: Shudu, plus Margot and Zhi, who were created exclusively for the campaign

- Samsung recruited virtual influencer Lil Miquela for its global #TeamGalaxy campaign in 2019, alongside Steve Aoki and Millie Bobby Brown

- Mango created its first campaign generated entirely by generative AI for its Teen collection in July 2024, available across 95 markets

- H&M announced plans in March 2025 to create AI "twins" of 30 models for use in social media posts and marketing imagery

Fast fashion, luxury, and mid-market brands are all committing resources here — which means the question for most brands is no longer whether to use digital models, but how.

How the Brand-Digital Model Collaboration Process Works

The end-to-end process moves through five stages: define the objective, select or create the model, brief and produce content, review for quality, then deploy and optimise. Each stage builds on the last — skipping quality review, in particular, puts both output quality and brand safety at risk.

Step 1: Define the Collaboration Objective

Brands must first determine the primary use case. The choice between virtual influencers and AI imagery platforms hinges on one question: Are you building audience engagement or producing product content?

Social media storytelling and audience engagement point toward virtual influencer partnerships. These collaborations leverage the influencer's existing following, personality, and narrative. Success metrics focus on reach, engagement, and brand affinity.

Product imagery, e-commerce listings, lookbooks, or campaign visuals point toward AI imagery platforms. These tools solve production problems — generating on-model product photography at scale without physical shoots. Conversion rates, production efficiency, and cost per image are the measures that matter here.

Confusing the two creates real problems. A virtual influencer partnership won't efficiently produce 200 SKU images for a catalogue. An AI imagery platform won't build audience engagement through storytelling.
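The routing rule described in this step can be written down as a small lookup. The sketch below is illustrative only: the enum, the goal labels, and the function name are assumptions made for this example, while the metrics per approach are the ones named in the text.

```python
from enum import Enum


class Approach(Enum):
    VIRTUAL_INFLUENCER = "virtual influencer partnership"
    AI_IMAGERY = "AI imagery platform"


# Goal labels are illustrative; adapt them to your own planning vocabulary.
ENGAGEMENT_GOALS = {"social storytelling", "audience engagement"}
PRODUCTION_GOALS = {"product imagery", "e-commerce listings", "lookbooks", "campaign visuals"}

SUCCESS_METRICS = {
    Approach.VIRTUAL_INFLUENCER: ["reach", "engagement", "brand affinity"],
    Approach.AI_IMAGERY: ["conversion rate", "production efficiency", "cost per image"],
}


def recommend_approach(goal: str) -> Approach:
    """Map a campaign goal to the approach that fits it."""
    key = goal.strip().lower()
    if key in ENGAGEMENT_GOALS:
        return Approach.VIRTUAL_INFLUENCER
    if key in PRODUCTION_GOALS:
        return Approach.AI_IMAGERY
    raise ValueError(f"Unrecognised goal: {goal!r}")


choice = recommend_approach("E-commerce listings")
print(choice.value, "->", ", ".join(SUCCESS_METRICS[choice]))
```

Raising an error on an unrecognised goal, instead of defaulting to one approach, forces the objective to be stated explicitly before any platform is chosen.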

Step 2: Select or Create the Digital Model

For virtual influencer partnerships: Brands assess a virtual influencer's audience demographics, content personality, and brand alignment before approaching a licensing deal. The global virtual influencer market was valued at $6.06 billion in 2024 and is projected to reach $45.88 billion by 2030, with fashion and lifestyle accounting for the largest revenue share.

Evaluation criteria mirror human influencer assessment:

- Reach — audience size and demographic fit

- Relevance — content alignment with brand values

- Resonance — ability to drive meaningful engagement

Virtual influencers like Lil Miquela command premium rates because their audience trusts the character's recommendations.

For AI imagery platforms: Brands either choose from curated model libraries or commission custom AI models built to match brand visual identity. Platforms like MetaModels.ai allow brands to customise models across ethnicity, body type, age range, and styling — with no ongoing licensing fees or recurring model costs.

Custom model creation typically requires visual references, demographic criteria, and brand guideline documentation. The platform builds a model that can be reused across unlimited content outputs, maintaining consistent brand aesthetic.

Step 3: Brief, Produce, and Review

Content production requires a precise brief covering:

- Garment presentation requirements (how the product should be styled and displayed)

- Model pose and expression (appropriate for the product type and channel)

- Background and environment (studio white, lifestyle setting, or custom scene)

- Lighting direction (natural, studio, dramatic)

- Intended output channel (e-commerce product page, social media, lookbook, paid advertising)
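One way to keep briefs complete is to capture the five elements above in a fixed structure and check for gaps before submitting. The following is a minimal sketch that assumes a brief is tracked in code; the class and field names are hypothetical and do not correspond to any platform's API.

```python
from dataclasses import dataclass, fields


@dataclass
class ContentBrief:
    garment_presentation: str  # how the product should be styled and displayed
    pose_and_expression: str   # appropriate for the product type and channel
    background: str            # studio white, lifestyle setting, or custom scene
    lighting: str              # natural, studio, or dramatic
    output_channel: str        # product page, social media, lookbook, paid advertising

    def missing_fields(self) -> list[str]:
        """Names of brief elements that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


brief = ContentBrief(
    garment_presentation="Jacket worn open over a plain white tee",
    pose_and_expression="Standing, three-quarter turn, neutral expression",
    background="Studio white",
    lighting="",  # left blank on purpose to show the check
    output_channel="E-commerce product page",
)
print(brief.missing_fields())
```

A brief that reports any missing fields is sent back to the creative team, not passed to production with gaps.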

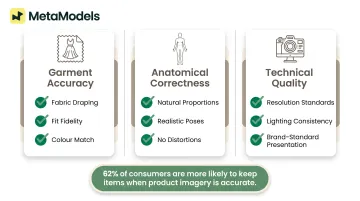

Human review of AI-generated imagery is essential at this stage. Quality checks must verify:

- Garment accuracy — fabric draping, fit, and colour match the actual product

- Anatomical correctness — no distorted proportions or unnatural body positions

- Technical quality — resolution, lighting consistency, and brand-standard presentation
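The three checks above can be recorded as a simple pass or fail gate so that no image is published without a reviewer signing off on each one. This is a sketch under the assumption that a human reviewer supplies the three judgements; the class and method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewResult:
    garment_accurate: bool      # draping, fit, and colour match the actual product
    anatomy_correct: bool       # no distorted proportions or unnatural positions
    technical_quality_ok: bool  # resolution, lighting consistency, brand standard

    def failed_checks(self) -> list[str]:
        """Names of the checks the reviewer marked as failed."""
        return [name for name, passed in vars(self).items() if not passed]

    def approved(self) -> bool:
        """An image is publishable only when every check passes."""
        return not self.failed_checks()


review = ReviewResult(garment_accurate=True, anatomy_correct=False, technical_quality_ok=True)
if not review.approved():
    print("Send back for regeneration:", ", ".join(review.failed_checks()))
```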

Research shows that 62% of consumers are more likely to keep items when product information (including imagery) is accurate, whilst 43% of UK consumers returned a product specifically because pre-purchase information was incorrect. Quality review directly impacts return rates and customer satisfaction.

Key Factors That Affect Collaboration Success

Brand-Model Fit and Audience Alignment

The digital model's aesthetic, perceived identity, and demographic representation must match the brand's target customer. A mismatch in visual tone or audience demographics will undermine campaign effectiveness even if the imagery is technically flawless.

Two practical alignment checks apply here:

- Virtual influencers: Evaluate whether the character's personality and audience match brand values. Lil Miquela's audience skews young, diverse, and tech-forward — a fit for Gen Z-oriented brands, less so for heritage luxury labels targeting older demographics.

- AI-generated imagery: Select or create models whose appearance reflects the actual target customer. A brand serving plus-size shoppers needs models that authentically represent that demographic, not standard-size models with superficial adjustments.

Quality Assurance and Technical Accuracy

AI-generated images must accurately represent fabric texture, garment fit, and product detail. This is a commercial priority, not just an aesthetic one.

The scale of the problem is significant: the National Retail Federation reports the average retail return rate approaching 17%, costing the industry close to $900 billion annually. Akeneo's 2026 global study found that 43% of UK consumers returned products because pre-purchase information was incorrect.

Inaccurate product imagery directly contributes to higher e-commerce return rates. If an AI-generated image shows a garment in the wrong colour, with incorrect draping, or with distorted proportions, customers receive a product that doesn't match their expectations. Quality review must verify that fabric texture renders accurately, garment fit appears realistic, and colour representation matches the actual product. Platforms that include human review by fashion specialists — like MetaModels.ai's mandatory quality checks before delivery — reduce this risk materially.

Transparency and Ethical Compliance

Brands face growing consumer expectations and regulatory signals around disclosing AI-generated imagery.

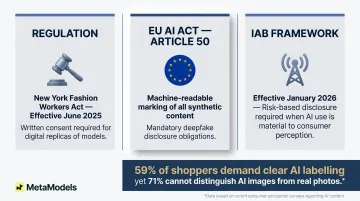

Regulatory developments:

- New York's Fashion Workers Act, signed into law in December 2024 and effective June 2025, requires separate written consent for creating or using a model's digital replica

- The EU AI Act Article 50 requires providers of AI systems generating synthetic content to mark outputs in machine-readable formats and deployers to disclose deepfake content as artificially generated

- The IAB released its first AI Transparency and Disclosure Framework in January 2026, recommending risk-based disclosure when AI use is material to consumer perception

Consumer attitudes:

Stylitics' survey of 411 shoppers found that 59% want clear labelling of AI-generated imagery (such as "Virtual Model" disclaimers), whilst 71% could not distinguish AI-generated images from real photos. Proactive transparency reduces reputational risk and builds trust with audiences increasingly aware of AI content.

Common Misconceptions and Pitfalls

Misconception: Digital Models Eliminate the Need for Creative Direction

Digital models don't replace creative teams — they shift where creative input is applied. Art directors, brand strategists, and stylists remain essential to brief the process, review outputs, and ensure visual coherence.

The AI generates the image, but humans define what "right" looks like. Without clear creative direction specifying model aesthetic, styling, pose, and brand alignment, AI platforms produce technically correct but off-brand and ineffective imagery.

Brands that treat digital model platforms as fully automated solutions consistently produce generic, off-brand content that doesn't convert.

Misconception: All Digital Models Are Interchangeable

Virtual influencer characters (narrative-driven, audience-focused) and AI model imagery (product-focused, production-tool-oriented) serve fundamentally different functions. Treating them as the same category leads to wrong platform choices and misaligned campaign expectations.

A brand that needs 500 product images for an e-commerce catalogue shouldn't approach a virtual influencer. A brand building long-term audience engagement through storytelling shouldn't rely solely on AI-generated product photography.

Pitfall: Skipping or Outsourcing the Quality Review Step

Publishing AI imagery with anatomical errors, garment distortions, or inaccurate colour representation directly damages brand credibility. In e-commerce contexts, it increases product returns and customer complaints.

Brands that skip human review to save time risk publishing flawed imagery, which can wipe out the efficiency gains digital models provide. Quality review should cover:

- Garment accuracy — fabric draping, fit, and colour fidelity

- Anatomical correctness — proportions and natural pose

- Brand-standard presentation — lighting, styling, and overall aesthetic

Skipping any of these checks trades short-term speed for long-term credibility loss.

Frequently Asked Questions

What are some examples of brand collaborations with digital models?

Notable cases include Balmain's 2018 campaign with Shudu Gram and two exclusive virtual models (Margot and Zhi), Samsung's partnership with Lil Miquela for the #TeamGalaxy campaign in 2019, and H&M's announcement in March 2025 to create AI digital twins of 30 models for marketing content.

What are the 3 R's of influencer marketing?

The 3 R's — Reach, Relevance, and Resonance — apply to digital model collaborations too. Brands evaluate whether a virtual influencer's audience size, content fit, and emotional connection with followers align with campaign goals.

What is the 3-7-27 rule of branding?

The rule describes how repeated brand exposure builds recognition: 3 impressions for consumers to recognise a brand, 7 to remember it, and 27 to build trust. A consistent visual identity across digital model content (same model aesthetic, styling, and brand cues) speeds up both familiarity and trust.

Are AI-generated digital models the same as virtual influencers?

No. Virtual influencers are CGI characters with social media personas and followings used for storytelling and brand partnerships. AI-generated model imagery refers to photorealistic AI models used in product photography and e-commerce. They serve different purposes and require different collaboration approaches.

How do brands maintain quality and accuracy when using AI model imagery?

Quality comes down to a detailed content brief covering garment presentation and channel requirements, choosing the right AI model for the product type, and human review of outputs — checking draping, fit accuracy, and brand-standard presentation before publishing.