Introduction

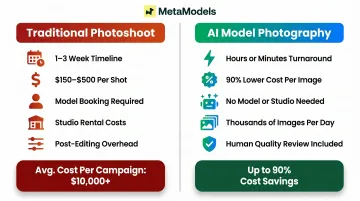

Apparel product photography has long been the most resource-intensive bottleneck in e-commerce operations. A single-day fashion shoot for just 10 outfits typically costs $2,750, with traditional on-model images running $150-$500 per shot before factoring in retouching, studio rental, and coordination overhead. For brands managing hundreds of SKUs, annual photography budgets routinely reach $125,000-$250,000.

Those costs compound against a fast-growing market. Fashion e-commerce has reached $997 billion globally, with online sales projected to exceed $1.6 trillion by 2030. Product listings with 5+ images convert at 2x the rate of single-image listings — yet traditional photoshoots can't scale at the pace modern e-commerce demands.

The representation challenge adds another layer: brands increasingly need the same garment shown across multiple model demographics, body types, and styling variations to meet consumer expectations for inclusive imagery.

AI model photography is changing this workflow. While most brands have heard of the technology, few understand how it actually works technically — from garment input to final publication-ready image. This guide breaks down the full technical process in practical terms.

TL;DR

- AI t-shirt model photography places real garment images onto AI-generated models — no physical photoshoot needed

- The workflow transforms a packshot or flat-lay into polished on-model photos ready for e-commerce and social media

- Garment fitting algorithms and fabric rendering map texture, drape, and shape onto virtual model bodies

- Brands control model diversity, body type, and styling for inclusive, on-brand imagery at scale

- MetaModels.ai applies human review to every output, catching garment inaccuracies before delivery

What Is AI T-Shirt Model Photography?

AI t-shirt model photography uses generative AI and computer vision to composite real garment images onto AI-generated human models, producing photos that look like professional studio shots—without models, photographers, or physical shoots.

Brands typically need dozens to hundreds of on-model photos per season. Booking models, coordinating studios, and editing shoots is expensive and slow—a weeks-long process that AI model photography compresses to hours or minutes.

It's worth distinguishing what this technology doesn't include:

- AI t-shirt graphic design tools (which generate artwork to print on shirts)

- Flat-lay photography automation

- Simple background removal or retouching software

Each serves a different function in the production pipeline.

Two main workflow types cover most use cases: packshot-to-model conversion (uploading an existing flat or hanger shot and having AI dress a model in it) and direct product upload. The process adapts based on input quality and garment style, but the core technical steps remain the same.

How AI T-Shirt Model Photography Works — Step by Step

The process follows a defined sequence of stages, each building on the last to produce a garment-accurate, photorealistic model image.

Image Input and Garment Preparation

The workflow begins when a brand uploads a product image—typically a flat-lay, packshot, or hanger shot. The AI system extracts the garment's visual data including shape, surface texture, color, print details, and silhouette.

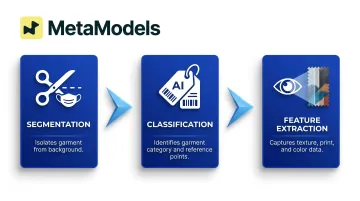

AI preprocessing works in three phases:

- Segmentation — The system segments the garment from the background using refined binary masks that isolate the front-facing relevant areas while excluding internal sections like inner collars or sleeves

- Classification — The AI identifies garment category (crew-neck tee, oversized fit, polo, etc.) and maps reference points that guide fabric draping

- Feature extraction — Computer vision algorithms capture texture patterns, print details, and color values that must be preserved in the final output
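
The segmentation phase above can be pictured with a toy example. This is only a sketch: production systems use learned segmentation models, whereas the code below simply thresholds against a known neutral background. The names `binary_mask` and `bounding_box`, the tolerance value, and the 4×4 sample image are all illustrative assumptions.

```python
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), 0-255 per channel


def binary_mask(image: List[List[Pixel]], background: Pixel,
                tolerance: int = 30) -> List[List[int]]:
    """Return 1 where a pixel differs from the background color, else 0."""
    def is_garment(p: Pixel) -> bool:
        # Largest per-channel difference from the background color.
        return max(abs(c - b) for c, b in zip(p, background)) > tolerance
    return [[1 if is_garment(p) else 0 for p in row] for row in image]


def bounding_box(mask: List[List[int]]) -> Optional[Tuple[int, int, int, int]]:
    """Smallest inclusive (top, left, bottom, right) box around mask pixels."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for row in mask for c, v in enumerate(row) if v]
    if not rows:
        return None  # nothing was classified as garment
    return rows[0], min(cols), rows[-1], max(cols)


WHITE: Pixel = (255, 255, 255)
RED: Pixel = (200, 30, 40)

# A tiny stand-in for a packshot: a red garment on a white background.
packshot = [
    [WHITE, WHITE, WHITE, WHITE],
    [WHITE, RED,   RED,   WHITE],
    [WHITE, RED,   RED,   WHITE],
    [WHITE, WHITE, WHITE, WHITE],
]

mask = binary_mask(packshot, background=WHITE)
print(mask)                # [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
print(bounding_box(mask))  # (1, 1, 2, 2)
```

A threshold like this only works on clean, evenly lit backgrounds, which is one reason neutral packshots make better inputs.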

Professional packshots at 2000×2000 pixels or higher with neutral lighting give the AI the most accurate data to work from—and produce the most reliable output.
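
A brand could screen uploads against the 2000×2000 guideline before submitting them. The following is a minimal sketch, assuming image dimensions are already known; `Packshot`, `meets_guideline`, and the sample filenames are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple

MIN_SIDE_PX = 2000  # guideline quoted in the text; a default, not a hard rule


@dataclass(frozen=True)
class Packshot:
    filename: str
    width: int
    height: int


def meets_guideline(shot: Packshot, min_side: int = MIN_SIDE_PX) -> bool:
    """True when both sides are at least `min_side` pixels."""
    return min(shot.width, shot.height) >= min_side


def split_by_resolution(shots: List[Packshot]) -> Tuple[List[Packshot], List[Packshot]]:
    """Partition uploads into (meets guideline, below guideline)."""
    ok = [s for s in shots if meets_guideline(s)]
    low = [s for s in shots if not meets_guideline(s)]
    return ok, low


uploads = [
    Packshot("tee-front.png", 2400, 3000),
    Packshot("tee-back.png", 1500, 2000),
]
ready, below_guideline = split_by_resolution(uploads)
print([s.filename for s in ready])            # ['tee-front.png']
print([s.filename for s in below_guideline])  # ['tee-back.png']
```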

AI Garment Fitting and Fabric Rendering

This step handles the actual garment placement. Using a combination of pose estimation, body mesh modeling, and diffusion-based image synthesis, the AI places the garment onto the selected model body, adjusting fit, fold, and drape in real time.

The technical pipeline involves:

Pose estimation and body mesh modeling — Systems use DensePose and SMPL (Skinned Multi-Person Linear) body models to map 2D images to 3D body mesh coordinates. This creates a semantic body segmentation map that outlines the target body structure and identifies key regions for garment placement.

Garment warping and alignment — Dual parallel encoders extract garment texture/shape features and body features, then fuse them to align the garment with the target body pose. Pose-garment keypoints guide the inpainting process to represent the warped garment shape following the person's pose.

Texture-preserving diffusion — Advanced platforms employ Texture-Preserving Diffusion (TPD) models specifically designed to maintain high fidelity of garment textures, prints, and patterns during synthesis. This ensures logos, graphics, and fabric details remain sharp and accurate.
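
To give a feel for keypoint-guided alignment, here is a deliberately simplified sketch. It is not how the learned warping described above works: it only computes a rigid similarity transform (uniform scale, rotation, translation) that maps two garment keypoints onto two body keypoints. The keypoint coordinates and function names are hypothetical.

```python
from typing import Tuple

Point = Tuple[float, float]


def similarity_from_two_points(src_a: Point, src_b: Point,
                               dst_a: Point, dst_b: Point) -> Tuple[complex, complex]:
    """Return (a, b) such that z -> a*z + b maps src_a to dst_a and src_b to dst_b.

    Points are treated as complex numbers, so `a` encodes uniform scale
    plus rotation and `b` encodes translation.
    """
    p0, p1 = complex(*src_a), complex(*src_b)
    q0, q1 = complex(*dst_a), complex(*dst_b)
    if p0 == p1:
        raise ValueError("source keypoints must be distinct")
    a = (q1 - q0) / (p1 - p0)
    b = q0 - a * p0
    return a, b


def apply(transform: Tuple[complex, complex], point: Point) -> Point:
    """Apply the similarity transform to a single 2D point."""
    a, b = transform
    z = a * complex(*point) + b
    return z.real, z.imag


# Hypothetical keypoints: shoulder seams in the packshot, and the shoulder
# positions a pose estimator might report for the target model.
garment_left, garment_right = (100.0, 50.0), (300.0, 50.0)
body_left, body_right = (220.0, 180.0), (320.0, 180.0)

t = similarity_from_two_points(garment_left, garment_right, body_left, body_right)
hem_center = apply(t, (200.0, 350.0))
print(hem_center)  # where the garment's hem center lands on the model image
```

A rigid transform like this cannot bend fabric around a body, which is why real pipelines rely on dense warping and diffusion-based synthesis instead.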

The AI doesn't just overlay an image — it simulates how a cotton tee would pull at the shoulders, bunch at the waist, or hang loosely on a specific body type.

MetaModels.ai applies this fabric draping approach to ensure the garment's original design — including prints, seams, and color — is faithfully represented on the model, not distorted or blurred by the compositing process.

On the VITON-HD benchmark dataset, state-of-the-art systems report LPIPS scores of 0.0581, SSIM scores of 0.8942, and FID scores of 11.24. Lower is better for LPIPS and FID, and higher is better for SSIM (which tops out at 1.0), so these figures indicate high perceptual similarity to real photographs.
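
Of the metrics mentioned, SSIM is the simplest to compute by hand. The sketch below is a simplification: benchmark SSIM is averaged over local sliding windows, while this version computes a single global score over two grayscale pixel lists using population statistics. The function name and sample values are illustrative.

```python
from statistics import fmean
from typing import Sequence


def global_ssim(x: Sequence[float], y: Sequence[float],
                dynamic_range: float = 255.0) -> float:
    """Single-window SSIM between two equally sized grayscale images.

    Uses the standard stabilizing constants C1 = (0.01 L)^2 and
    C2 = (0.03 L)^2, where L is the dynamic range of pixel values.
    """
    if len(x) != len(y) or len(x) == 0:
        raise ValueError("inputs must be non-empty and the same length")
    c1 = (0.01 * dynamic_range) ** 2
    c2 = (0.03 * dynamic_range) ** 2
    mu_x, mu_y = fmean(x), fmean(y)
    var_x = fmean([(a - mu_x) ** 2 for a in x])
    var_y = fmean([(b - mu_y) ** 2 for b in y])
    cov = fmean([(a - mu_x) * (b - mu_y) for a, b in zip(x, y)])
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


reference = [10, 50, 90, 130]
inverted = [130, 90, 50, 10]   # same pixel values, opposite structure

print(global_ssim(reference, reference))  # identical images score 1.0 (up to rounding)
print(global_ssim(reference, inverted))   # structurally different, scores lower
```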

Quality Control and Human Review

AI generation isn't deterministic — each output can vary, which means occasional garment inaccuracies, misaligned prints, or unnatural folds. Quality-focused platforms address this with a dedicated human review layer.

Trained reviewers — typically fashion specialists with experience in garment construction and photography — check each generated image for:

- Garment accuracy and proportion errors

- Print alignment and pattern continuity

- Fabric texture fidelity

- Color accuracy against the original packshot

- Visual artifacts or unnatural draping
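
Some of these checks can be pre-screened automatically before a reviewer looks at the image. As one example, the sketch below flags color drift between the packshot and the generated output. It is an assumption-heavy illustration: it compares mean RGB values with plain Euclidean distance, whereas a production check would more likely use a perceptual color space, and the threshold of 12 is an arbitrary placeholder.

```python
from math import dist
from typing import List, Tuple

Pixel = Tuple[int, int, int]


def mean_rgb(pixels: List[Pixel]) -> Tuple[float, float, float]:
    """Average color of a list of garment pixels."""
    n = len(pixels)
    return (
        sum(p[0] for p in pixels) / n,
        sum(p[1] for p in pixels) / n,
        sum(p[2] for p in pixels) / n,
    )


def color_drift(reference: List[Pixel], output: List[Pixel]) -> float:
    """Euclidean distance between the mean colors of two pixel sets."""
    return dist(mean_rgb(reference), mean_rgb(output))


def needs_color_review(reference: List[Pixel], output: List[Pixel],
                       threshold: float = 12.0) -> bool:
    """Flag the image for a reviewer when drift exceeds the threshold."""
    return color_drift(reference, output) > threshold


packshot_pixels = [(200, 30, 40)] * 4
close_output = [(210, 30, 40)] * 4    # drift of 10
shifted_output = [(200, 50, 40)] * 4  # drift of 20

print(needs_color_review(packshot_pixels, close_output))    # False
print(needs_color_review(packshot_pixels, shifted_output))  # True
```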

MetaModels.ai implements this quality assurance step for every image, with human fashion specialists acting as a final filter between AI output and brand use. This review process catches issues before delivery and ensures garment details including color, shape, and proportions meet professional standards.

Commercial platforms like Stylitics report using this two-tier approach—automated AI quality agents catch obvious artifacts and hallucinations, then human reviewers apply final brand-level quality control. Some providers offer 100% quality guarantees backed by this hybrid review process.
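
The two-tier idea can be sketched as a simple routing rule. This is not any vendor's actual pipeline: the `artifact_score` field, the 0.5 cutoff, and the type names are hypothetical stand-ins for whatever an automated quality agent would report.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Verdict(Enum):
    REGENERATE = "regenerate"      # tier 1 rejected the image outright
    HUMAN_REVIEW = "human_review"  # tier 1 passed it on to a reviewer


@dataclass(frozen=True)
class GeneratedImage:
    sku: str
    artifact_score: float  # hypothetical: 0.0 = clean, 1.0 = heavily corrupted


def triage(image: GeneratedImage, reject_above: float = 0.5) -> Verdict:
    """Tier 1: discard obvious failures; everything else goes to a human."""
    if image.artifact_score > reject_above:
        return Verdict.REGENERATE
    return Verdict.HUMAN_REVIEW


def route(images: List[GeneratedImage]) -> Dict[Verdict, List[str]]:
    """Group SKUs by the verdict the automated tier assigned."""
    queues: Dict[Verdict, List[str]] = {v: [] for v in Verdict}
    for image in images:
        queues[triage(image)].append(image.sku)
    return queues


batch = [GeneratedImage("TEE-001", 0.8), GeneratedImage("TEE-002", 0.1)]
queues = route(batch)
print(queues[Verdict.REGENERATE])    # ['TEE-001']
print(queues[Verdict.HUMAN_REVIEW])  # ['TEE-002']
```

Note that no image skips the human tier here: the automated check only removes clear failures so reviewers spend time on plausible candidates.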

Final Image Output

The output stage delivers a ready-to-publish image of the garment on the selected AI model, available at up to 4K resolution and formatted for e-commerce product pages, social media, digital ads, or lookbooks.

Integration into downstream brand workflows is straightforward. Export images directly into:

- Product listing tools (Amazon, Shopify, Myntra, Flipkart)

- Creative asset libraries and digital asset management systems

- Ad platforms (Facebook Ads Manager, Google Ads, Pinterest)

- Email marketing templates

- Seasonal lookbooks and line sheets
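
Each destination usually wants a different image size, so a master file is typically downscaled per channel. The sketch below shows the aspect-ratio arithmetic only. The channel names and pixel limits are made-up placeholders, not actual platform requirements, which should be checked against each platform's current documentation.

```python
from typing import Dict, Tuple

Size = Tuple[int, int]  # (width, height) in pixels


def fit_within(width: int, height: int, max_side: int) -> Size:
    """Scale down so the longer side is at most `max_side`.

    Preserves aspect ratio and never upscales.
    """
    longest = max(width, height)
    if longest <= max_side:
        return width, height
    return (
        max(1, round(width * max_side / longest)),
        max(1, round(height * max_side / longest)),
    )


# Hypothetical per-channel limits, for illustration only.
CHANNEL_MAX_SIDE: Dict[str, int] = {
    "product_page": 2048,
    "social": 1080,
    "email": 600,
}


def export_plan(width: int, height: int) -> Dict[str, Size]:
    """Target size for each channel, derived from one master image."""
    return {
        channel: fit_within(width, height, limit)
        for channel, limit in CHANNEL_MAX_SIDE.items()
    }


plan = export_plan(3000, 4000)
print(plan)
# {'product_page': (1536, 2048), 'social': (810, 1080), 'email': (450, 600)}
```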

The end-to-end timeline is dramatically shorter than traditional shoots. A conventional photoshoot takes 1-3 weeks from booking to final edited images; AI model photography delivers publication-ready content in hours or minutes for most SKUs.

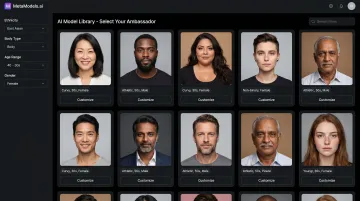

The AI Model Layer: Diversity, Customization, and Brand Fit

One of the most strategically important elements of AI model photography is the model selection layer. Brands aren't limited to a single generic model—they can access curated libraries of AI models that vary by ethnicity, body type, age, and gender, making inclusive representation achievable without extra shoot costs.

Customization works on two levels. MetaModels.ai offers a pre-built library spanning diverse demographics — ethnicity, body type, age range, and gender — so brands can match their visual identity to their target audience without casting. For deeper alignment, brands can create fully custom AI models trained on specific aesthetics or personas, ensuring visual consistency across all product imagery without managing ongoing talent contracts.

The commercial rights picture is straightforward. Because AI models are fully synthetic, there are no image licensing fees, model release forms, usage restrictions, or royalty costs. Brands own the output and can use it across channels without legal constraints or recurring payments. Traditional model photography ties usage rights to contracts, territories, and renewal fees — none of that applies here.

Inclusive representation also has a direct revenue connection:

- Harvard Business Review research found 64% of consumers took action after seeing a diverse or inclusive ad — rising to 75% for Black consumers and 85% for Latinx consumers

- Vogue Business survey data shows 67% of consumers are more likely to buy from brands that feature a range of body sizes in marketing

The model layer also controls styling variables — pose, background, lighting mood — that shape how consumers perceive the final image. That level of control, applied consistently across hundreds of SKUs, is where AI model photography creates its most practical advantage.
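
To see how quickly these choices multiply across a catalog, consider enumerating every combination of SKU, model, pose, and background. The sketch below is illustrative: `ShotSpec`, the SKU codes, and the model identifiers are hypothetical and do not correspond to any platform's API.

```python
from dataclasses import dataclass
from itertools import product
from typing import List, Sequence


@dataclass(frozen=True)
class ShotSpec:
    sku: str
    model_id: str
    pose: str
    background: str


def plan_shots(skus: Sequence[str], model_ids: Sequence[str],
               poses: Sequence[str], backgrounds: Sequence[str]) -> List[ShotSpec]:
    """One ShotSpec per combination of the four styling variables."""
    return [ShotSpec(*combo)
            for combo in product(skus, model_ids, poses, backgrounds)]


shots = plan_shots(
    skus=["TEE-001", "TEE-002"],
    model_ids=["model-a", "model-b", "model-c"],
    poses=["front", "three-quarter"],
    backgrounds=["studio-white"],
)
print(len(shots))  # 2 * 3 * 2 * 1 = 12
print(shots[0])
```

Holding pose and background fixed while varying only the model keeps a product page visually consistent, which is the kind of control the paragraph above describes.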

Where Apparel Brands Apply AI T-Shirt Model Photography

E-Commerce Product Pages

The primary use case is e-commerce product pages, where on-model imagery consistently outperforms flat-lay or mannequin shots in conversion rate. Industry data from Shopify indicates that product listings with 5+ images convert at 2x the rate of single-image listings, and 56% of online shoppers interact with product images before anything else on a page.

Fashion products specifically show a strong conversion lift with on-model photography—industry sources cite 20-30% higher conversion rates for on-model imagery compared to ghost mannequin shots, though exact figures vary by category and target demographic.

Secondary Applications

Social media content — Instagram, Facebook, Pinterest, and TikTok feed content where brands need fresh creative assets daily without the budget for continuous photoshoots

Paid ad creative — A/B testing multiple model variations, backgrounds, and styling options to optimize ad performance across platforms

Seasonal lookbooks — Quickly refreshing catalog imagery for new collections without scheduling full production shoots

Size-inclusive product pages — Showing the same garment on multiple body types to help customers visualize fit, addressing a critical pain point where 45% of all consumers feel alienated from fashion brands due to sizing

Which Brands Benefit Most

DTC brands with large catalogs — New direct-to-consumer brands need consistent, professional imagery fast. AI model photography lets them compete with established players on visual quality without matching their photography budgets.

Print-on-demand businesses — POD platforms offer 1,300+ customizable products, with each design variant creating a unique SKU that would traditionally require individual photography. Shooting every variant individually is cost-prohibitive at scale; generating on-model images from flat garment artwork makes that coverage practical.

Enterprise retailers refreshing seasonal content — Brands like Le Coq Sportif, ECCO, and Zalando are replacing up to 70% of traditional production workflows with AI-generated imagery, producing thousands of images weekly at 90% lower cost per image.

The technology performs best for standard apparel categories like t-shirts, polos, hoodies, and athleisure where garment geometry is well-defined and fabric behavior is predictable. Highly complex garments with intricate 3D details or heavily textured fabrics may require additional quality review to ensure accurate representation.

Conclusion

AI t-shirt model photography works by extracting garment data from a product image, fitting it onto an AI-generated model through pose-aware garment warping and fabric rendering, and delivering a reviewed, finished photo ready for listing or campaign use, all without a physical shoot. The technical process combines computer vision segmentation, pose estimation, texture-preserving diffusion models, and human quality control to produce images that match professional studio photography standards.

Brands that understand the underlying process are better equipped to prepare clean input assets, choose the right platform, and build a content workflow that actually delivers at volume. As the fashion e-commerce market approaches $1.6 trillion and consumer expectations for diverse representation keep rising, the question for most brands isn't whether to adopt AI model photography—it's how quickly they can operationalize it.

Frequently Asked Questions

Can AI design a T-shirt for me?

Not with AI model photography. AI graphic design tools (which generate artwork to print on shirts) are separate from AI model photography (which shows an existing garment on an AI-generated model). The two serve different parts of the product workflow.

Can I sell t-shirts with AI-generated images?

Yes. Images generated through AI model photography are commercially safe for product listings, ads, and marketing. Most platforms provide them with no royalties, model licensing fees, or usage restrictions — brands retain full commercial rights.

How accurate is AI model photography at showing fabric texture and drape?

Accuracy depends on input image quality and the platform's rendering capabilities. Advanced platforms model how fabric drapes on the selected body to preserve texture, print, and fit details, and human review is there to catch remaining inaccuracies before final delivery.

Do I need a photographer or studio to use AI model photography?

No physical photographer, studio, or model is required. Brands only need a product image—typically a flat-lay or packshot—to upload. The platform handles garment fitting, model selection, background customization, and quality review.

How long does it take to generate AI model photos for a t-shirt collection?

Turnaround depends on platform and volume. What traditionally takes days or weeks — model booking, shooting, editing — shrinks to hours or minutes for most SKUs. Enterprise platforms can process thousands of final images per day.

Can AI model photography work across different t-shirt styles, colors, and prints?

Yes. The technology handles color variations, print patterns, and basic style differences well. Highly complex garments—such as those with heavily textured fabrics or intricate 3D embellishments—may require additional quality review to ensure accurate representation of all details.