Introduction

A marketing team at a fast-fashion brand hits "publish" on a new product collection—only to discover the next morning that AI-generated product descriptions cite incorrect care instructions, and several hero images show garment draping that doesn't match the actual fabric weight. Customer service is flooded with returns. Trust erodes overnight.

AI tools generate content faster than teams can verify it, and the output often looks convincingly accurate despite containing critical errors. According to a 2023 analysis of AI query accuracy by Gartner, roughly 45% of AI responses contain at least one significant issue—a failure rate that becomes a direct business liability when content reaches customers at scale.

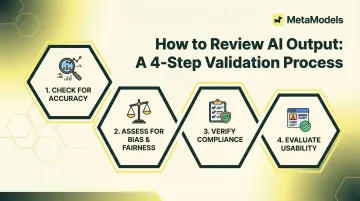

This guide walks fashion brands, e-commerce teams, and marketing professionals through a clear, repeatable 4-step process for reviewing AI output—both written and visual—before it goes live. You'll be able to protect brand quality without slowing production.

TLDR

- AI output can look accurate while hiding factual errors, outdated data, or brand inconsistencies — manual validation catches what automation misses

- The 4-step process: Accuracy Check, Context and Relevance Check, Brand Alignment Check, Final Human Judgment Review

- Each step targets a distinct failure type: fabricated facts, contextual misfits, off-brand output, and subtle issues only humans catch

- Applies to both text (product descriptions, captions) and visuals (AI-generated imagery, model photography)

- Skipping steps trades short-term speed for downstream costs: corrections, complaints, and reputational damage

What Is the AI Output Validation Process?

AI output validation is a structured review method applied to AI-generated content before publication, designed to catch inaccuracies, inconsistencies, and quality issues that automated generation cannot self-correct.

The process confirms that AI output is accurate, contextually appropriate, brand-consistent, and fit for its intended purpose—before it reaches a customer or audience. It's the quality gate between AI generation and public-facing use.

Proofreading catches surface errors like spelling and grammar. Validation goes deeper — evaluating factual correctness, contextual fit, and strategic alignment to determine whether content is usable, not just grammatically correct:

- Proofreading: Fixes spelling, grammar, and punctuation

- Validation: Confirms accuracy, brand alignment, and fitness for purpose

Why Fashion and E-Commerce Brands Need to Validate AI Output

AI-generated content poses specific risks in fashion and e-commerce. Tools can hallucinate product details, misrepresent garment colors or materials, and generate imagery with anatomical errors. They can also produce copy that directly contradicts a product's actual specifications.

The financial exposure is real. Business losses from AI errors reached $67.4 billion globally in 2024, with 82% of production bugs stemming directly from hallucinations. In legal and compliance contexts, AI models hallucinate an average of 18.7% of the time — a failure rate that creates serious liability in regulated product categories.

What typically goes wrong without validation:

- Brands publish AI imagery that doesn't accurately represent fit or texture

- Product descriptions cite incorrect fabric content or care instructions

- Campaign visuals contradict established brand identity

- Model imagery contains anatomical impossibilities or garment physics errors

Each mistake erodes buyer confidence. 52% of consumers reduce engagement when they suspect content is AI-generated. Separately, brands that maintain visual and messaging consistency see a revenue lift of 23% to 33%. For categories subject to advertising standards or product labeling regulations, validation isn't optional; it's a compliance requirement.

The 4-Step AI Output Validation Process

This framework is sequential. Each step filters a different category of error. Completing all four steps before publishing is what makes the process effective.
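
One way to picture the sequential gate is a short pipeline that stops at the first failing step. The sketch below is illustrative only: the `Asset` fields, the `STEPS` list, and the pass conditions are hypothetical stand-ins for checks a team would perform, and the final step only records a human reviewer's sign-off rather than automating it.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Asset:
    """Hypothetical record of one piece of AI output under review."""
    name: str
    facts_verified: bool = False          # Step 1 outcome, set by a reviewer
    fits_platform: bool = False           # Step 2 outcome
    on_brand: bool = False                # Step 3 outcome
    human_signoff: Optional[str] = None   # Step 4: reviewer's name once approved


# Each step is a (label, check) pair; the order mirrors the four steps.
STEPS: List[Tuple[str, Callable[[Asset], bool]]] = [
    ("Accuracy and Factual Check", lambda a: a.facts_verified),
    ("Context and Relevance Check", lambda a: a.fits_platform),
    ("Brand Alignment and Visual Quality Check", lambda a: a.on_brand),
    ("Final Human Judgment Review", lambda a: a.human_signoff is not None),
]


def first_failed_step(asset: Asset) -> Optional[str]:
    """Return the label of the first step the asset fails, or None if all pass."""
    for label, check in STEPS:
        if not check(asset):
            return label
    return None


def ready_to_publish(asset: Asset) -> bool:
    """An asset is publishable only when no step fails."""
    return first_failed_step(asset) is None
```

Stopping at the first failure keeps reviewers from spending brand or judgment time on an asset whose facts are already known to be wrong.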

Step 1: Accuracy and Factual Check

Verify that all factual claims in AI output match original source data or brand-approved product information. This includes:

- Product dimensions and sizing

- Material composition and fabric content

- Pricing and promotional details

- Care instructions

- Model specifications and garment details

Surface hidden errors by breaking the output apart. Check each factual claim independently rather than reading the content as a whole. Surface-level fluency masks underlying errors — don't let a well-written sentence skip the fact check.

Common accuracy failures:

- AI tools confidently generating plausible but incorrect product details

- Fabricated statistics or invented alphanumeric reference codes

- Garment descriptions that don't match the actual item

- Outdated pricing or discontinued product references

Cross-reference every claim against the original product brief or data sheet. AI models use 34% more confident language when generating incorrect information—fluency is not proof of accuracy.
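
Claim-level cross-referencing can be sketched as a comparison between the claims pulled out of the AI copy and the approved data sheet. This is a minimal sketch under stated assumptions: the attribute names, the sample values, and the `find_mismatches` helper are hypothetical, and the extraction of claims from the copy is assumed to have been done by a reviewer beforehand.

```python
from typing import Dict, List


def find_mismatches(claims: Dict[str, str], data_sheet: Dict[str, str]) -> List[str]:
    """Compare each claim from the AI copy against the approved data sheet.

    Returns one message per claim that is absent from, or differs from, the sheet.
    """
    problems = []
    for attribute, claimed in claims.items():
        approved = data_sheet.get(attribute)
        if approved is None:
            problems.append(f"{attribute}: no approved value on file (claimed '{claimed}')")
        elif claimed.strip().lower() != approved.strip().lower():
            problems.append(f"{attribute}: claimed '{claimed}', approved '{approved}'")
    return problems


# Hypothetical example values, not real product data.
data_sheet = {
    "material": "100% linen",
    "care": "Hand wash cold",
    "price": "49.00",
}
claims = {
    "material": "100% Linen",       # matches once case is normalised
    "care": "Machine wash warm",    # contradicts the data sheet
    "origin": "Made in Portugal",   # not on the data sheet at all
}

for problem in find_mismatches(claims, data_sheet):
    print(problem)
```

Treating a claim with no approved value as a problem, rather than letting it pass, is the design choice that catches invented details as well as wrong ones.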

Step 2: Context and Relevance Check

Assess whether the AI output is appropriate for its intended use case and platform. A product description written for a Google Shopping feed has different requirements than a caption for Instagram or a line in a digital lookbook.

Check that the output addresses the right audience. Tone, vocabulary, and detail level should match the buyer persona. AI tools default to a generic register that rarely serves the target customer well.

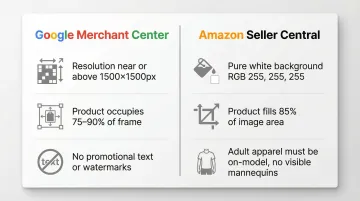

Platform compliance matters. Major e-commerce platforms enforce rigid image specifications:

- Google Merchant Center: Images near or above 1500×1500 pixels, product must occupy 75-90% of frame, no promotional text or watermarks

- Amazon Seller Central: Pure white background (RGB 255,255,255), product must fill 85% of image area, adult apparel must be on-model, no visible mannequins
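
The numeric parts of these specifications lend themselves to a simple pre-flight check. The sketch below assumes the measurements (dimensions, product fill, background color) have already been taken by a reviewer or a separate tool; the `ImageFacts` structure and function names are hypothetical, and reading "near or above 1500×1500" as a hard 1500-pixel minimum and "fill 85%" as "at least 85%" are simplifying assumptions. Rules such as on-model photography or the ban on watermarks still need a human look.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ImageFacts:
    """Measurements already taken from an image (hypothetical structure)."""
    width: int
    height: int
    product_fill: float                   # fraction of the frame the product occupies
    background_rgb: Tuple[int, int, int]


def check_google(img: ImageFacts) -> List[str]:
    """Check the Google Merchant Center figures quoted in the list above."""
    issues = []
    if min(img.width, img.height) < 1500:
        issues.append("shorter side is under 1500 px")
    if not 0.75 <= img.product_fill <= 0.90:
        issues.append("product should occupy 75-90% of the frame")
    return issues


def check_amazon(img: ImageFacts) -> List[str]:
    """Check the Amazon Seller Central figures quoted in the list above."""
    issues = []
    if img.background_rgb != (255, 255, 255):
        issues.append("background is not pure white (255, 255, 255)")
    if img.product_fill < 0.85:
        issues.append("product should fill 85% of the image area")
    return issues
```

An image can pass one platform and fail the other, which is why the check runs per channel rather than once per asset.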

Identify content that is technically accurate but contextually mismatched—for example, AI-generated imagery styled for luxury retail appearing in a fast-fashion context, or copy using formal language in a casual brand environment.

Step 3: Brand Alignment and Visual Quality Check

Review AI-generated content against the brand's visual and verbal guidelines: color palette, model representation standards, tone of voice, and style consistency.

For AI-generated imagery, check these quality issues specifically:

- Garment draping accuracy and fabric texture realism

- Model proportions and anatomical correctness

- Background consistency and resolution suitability for the target platform

- Color accuracy and embellishment detail preservation
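
For the color-accuracy item, a rough first pass can compare a color sampled from the AI image against the brand palette. The sketch below is an assumption-laden illustration: the palette names, the sample values, and the default tolerance of 20 are hypothetical, and plain RGB distance is only a coarse proxy for how different two colors look to a person, so it supports a reviewer's eye rather than replacing it.

```python
import math
from typing import Dict, Tuple

RGB = Tuple[int, int, int]


def rgb_distance(a: RGB, b: RGB) -> float:
    """Euclidean distance between two colors in RGB space."""
    return math.dist(a, b)


def closest_palette_color(sample: RGB, palette: Dict[str, RGB]) -> Tuple[str, float]:
    """Return the name of the nearest palette color and its distance from the sample."""
    name = min(palette, key=lambda n: rgb_distance(sample, palette[n]))
    return name, rgb_distance(sample, palette[name])


def within_tolerance(sample: RGB, expected: RGB, tolerance: float = 20.0) -> bool:
    """True if the sampled color is within the (hypothetical) tolerance of the expected one."""
    return rgb_distance(sample, expected) <= tolerance


# Hypothetical brand palette for illustration.
palette = {"brand_navy": (20, 30, 80), "brand_cream": (245, 240, 225)}
name, distance = closest_palette_color((25, 35, 85), palette)
print(name, round(distance, 2))
```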

Human detection accuracy for AI-generated images has dropped to just 29.04% for advanced models, meaning hyper-realistic textures often mask severe structural errors. Reviewers frequently overlook geometric errors (unrealistic proportions, perspective issues) and physical violations (implausible shadows, incorrect reflections) because the surface quality looks convincing.

This is why embedding review at the production stage—not just at the end—matters. MetaModels.ai, for example, routes every AI image and video through human fashion specialists before delivery, with color, shape, and proportions verified before output reaches the client.

Confirm that AI output reinforces rather than dilutes brand identity. Inconsistent visuals or off-brand copy across channels creates confusion and undermines the trust that consistent branding is designed to build.

Step 4: Final Human Judgment Review

This is the stage where the reviewer reads or views the output holistically, not item by item, and applies the kind of contextual judgment that rule-based checking cannot replicate.

What to look for in the final pass:

- Subtle tonal issues that feel strategically off

- Edge cases the checklist didn't anticipate

- Whether the content would hold up to scrutiny from a customer, regulator, or brand partner

- Gaps in specificity—claims that are technically true but vague enough to mislead

Technical checks catch errors. Human judgment catches everything else. That distinction is what makes this final step non-negotiable.

Common Mistakes When Reviewing AI Output

The most frequent error is reviewing AI output as a whole rather than in discrete parts. This allows individual inaccuracies to hide inside coherent, well-structured content. AI is skilled at making wrong information sound right.

Two patterns cause most of these failures:

- The fluency trap: Content that reads smoothly — or imagery that looks realistic — is often assumed to be accurate. Surface quality is not a proxy for factual or brand correctness.

- AI-to-AI validation: Running output through a second AI tool for review introduces the same blind spots and error patterns. Plausible formatting fools human reviewers too: a widely reported analysis of over 4,000 research papers found that AI-hallucinated citations slipped past multiple peer reviewers because the citation format was correct and the invented titles sounded plausible.

Human verification is essential, particularly for high-stakes or customer-facing content.

When a Validation Process Alone May Not Be Sufficient

Post-generation validation is insufficient in certain scenarios:

- High-volume output pipelines that generate faster than teams can review

- Highly regulated product categories (claims about textiles, allergens, certifications) where accuracy must be guaranteed upstream

- Use cases where even a small error rate is unacceptable

In these cases, the solution is upstream quality control: better prompting, constrained AI parameters, or platforms that build human review into the generation process itself. Increasing the rigor of downstream checking alone won't close the gap.

Compliance Requirements Are Already in Effect

Three sets of rules, one platform policy and two EU measures, now bear directly on AI-generated content:

- Google Merchant Center requires IPTC metadata tags like `TrainedAlgorithmicMedia` for AI-generated product images

- The EU AI Act mandates explicit transparency disclosures for synthetic content

- The EU's proposed Green Claims Directive would require companies to substantiate environmental claims using verifiable, science-based methods

Brands need to integrate metadata preservation directly into their digital asset management pipelines — not as an afterthought, but as a built-in step before content goes live.
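
A metadata-preservation step can be sketched as a before-and-after comparison around each processing stage (resize, crop, format conversion). The sketch below works on plain dictionaries and does not read real image files; the `DigitalSourceType` key and both function names are assumptions for illustration, and the term is matched case-insensitively at the end of the value so that a bare term or a longer identifier ending in it is accepted.

```python
from typing import Mapping

AI_SOURCE_TERM = "trainedalgorithmicmedia"  # compared case-insensitively


def is_tagged_ai_generated(metadata: Mapping[str, str], key: str = "DigitalSourceType") -> bool:
    """True if the metadata carries the AI-generated source-type term under `key`."""
    value = metadata.get(key, "")
    return value.lower().endswith(AI_SOURCE_TERM)


def tag_lost_in_processing(before: Mapping[str, str], after: Mapping[str, str]) -> bool:
    """True if an asset was tagged as AI-generated before a pipeline step but not after."""
    return is_tagged_ai_generated(before) and not is_tagged_ai_generated(after)


# Hypothetical metadata snapshots taken around a resize step.
before = {"DigitalSourceType": "TrainedAlgorithmicMedia", "Title": "Look 3"}
after_resize = {"Title": "Look 3"}

if tag_lost_in_processing(before, after_resize):
    print("Resize step stripped the AI-generated tag; block publication.")
```

Running the comparison after every transformation, rather than once at upload, shows which tool in the pipeline drops the tag.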

Conclusion

A structured review process isn't a vote of no-confidence in AI. It's how you protect the quality and accuracy your brand has built — and what your customers expect every time they interact with your content.

Teams that treat validation as a built-in step — not an afterthought — move faster without the reputational cost of unchecked errors. That's the real payoff. Validation isn't overhead; it's what makes AI-assisted workflows reliable enough to depend on.

Frequently Asked Questions

What is the four-step process for reviewing AI output?

The process has four steps: (1) Accuracy and Factual Check, which verifies factual claims against source data; (2) Context and Relevance Check, which confirms platform and audience fit; (3) Brand Alignment and Visual Quality Check, which ensures consistency with brand guidelines; and (4) Final Human Judgment Review, which applies holistic professional judgment before publication.

Does the same validation process apply to AI-generated images and AI-generated copy?

The core steps apply to both, but the focus shifts. For copy, accuracy and brand tone are the primary checkpoints. For AI-generated imagery—such as model photos or styled product visuals—garment accuracy, fabric rendering, and visual consistency with brand standards require dedicated review before any asset goes live.

Why is it important to review AI-generated content before publishing?

AI tools generate plausible-sounding content that can contain factual errors, outdated information, or brand inconsistencies. With 45% of AI responses containing at least one significant issue, reviewing before publication prevents customer-facing mistakes that erode trust, trigger returns, and require costly corrections downstream.

What are the most common errors found in AI-generated fashion and e-commerce content?

The most frequent issues include incorrect product specifications (fabric content, sizing, care instructions), garment draping or texture inaccuracies in imagery, off-brand tone in copy that doesn't match the target audience, and contextually mismatched content for the target platform or channel.

Can AI be used to review its own output?

While AI tools can assist with checks like grammar and formatting, using AI to validate AI introduces shared blind spots and error patterns. AI hallucination is a well-documented problem—outputs can look structurally correct while containing fabricated facts. Human review remains essential, especially for factual accuracy and brand judgment.

How long should the AI output validation process take?

There is no fixed duration; it depends on asset volume and complexity. A structured checklist for each step reduces time per asset while maintaining consistency. High-volume teams can integrate validation into existing workflows without bottlenecks by focusing on claim-level verification and clear brand standards.